Featured Blog | This community-written post highlights the best of what the game industry has to offer. Read more like it on the Game Developer Blogs or learn how to Submit Your Own Blog Post

Beyond the State Machine

Jean Simonet takes a deeper look at how new language features, such as Coroutines, Closures and Continuations can help game programmers write more robust and readable Gameplay and AI code. This is a follow up to his article Logic Over Time.

This post is a follow up to the article Logic over Time in which I described why new language features, such as coroutines, can help game programmers write more readable and robust state machines. In this article, I’ll talk in more details about the specifics of my implementation of coroutines and its advantages and uses beyond state machines themselves. Specifically, I'll talk about concurrency and synchronization.

I have posted the sources on GitHub, under an MIT license, feel free to use this framework in your own projects. The code is written in .NET 3.5 (the version supported by Unity).

https://github.com/jeansimonet/Coroutines

I will start by showing you an example, and highlight how these coroutines are nicely composable. After that I will dive deeper into the implementation of the framework, and answer some of the more common questions related to using coroutines in game code.

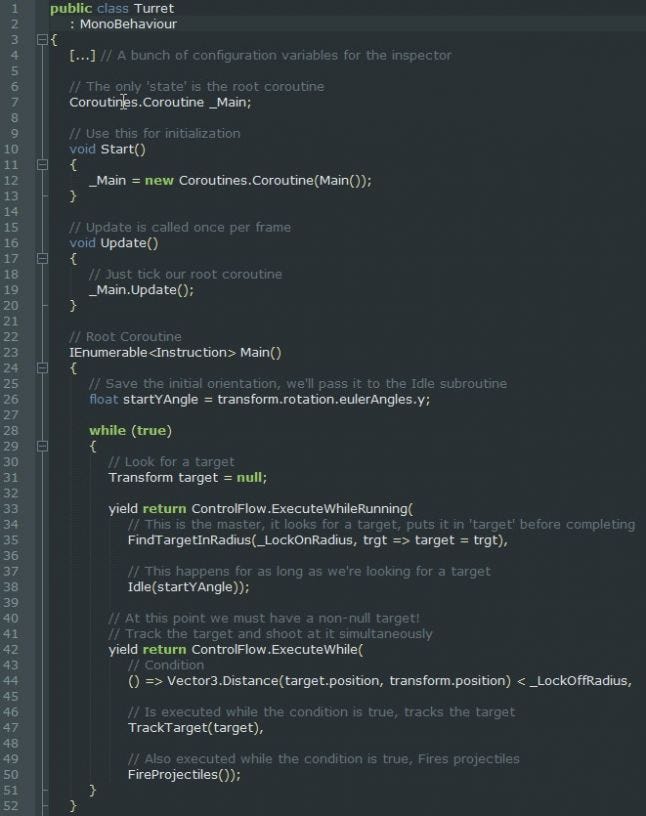

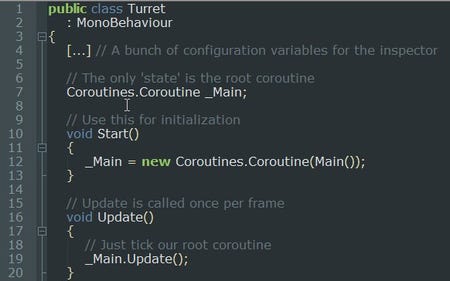

A Simple Turret

Picking up where I left off in the first article, I went ahead and wrote a simple Turret behavior for a mock game (sources of which are also available on the repository).

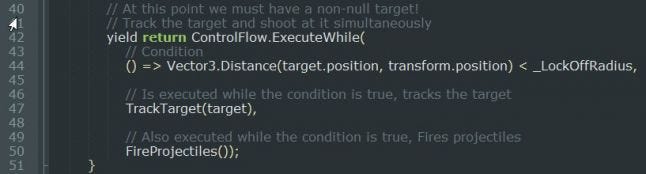

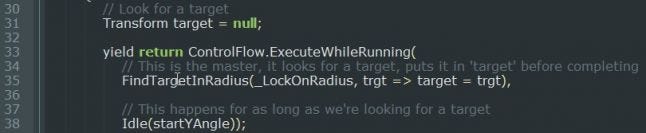

To summarize, the turret does the following: it looks for a target (the player) within a given radius. Once it finds a target, it does two things at once. It shoots projectiles, and it tracks the target. If it loses lock on the target (player moving too far away), it returns to its original orientation and starts over. Here is the relevant piece of code, let’s dissect it right away!

Let’s start by looking at the IEnumerable<Instruction> type of the Main() coroutine. As I mentioned in my previous article, this function generates an iterator block, that yields intermediate results of type Coroutines.Instruction. These intermediate instructions tell the framework how to proceed.

By default the framework enumerates the iterator block, which is what executes the actual coroutine code. It does this until a value is yield-ed by the coroutine code. Depending on what that value is, it will do one thing or another.

ControlFlow.ExecuteWhileRunning() and ControlFlow.ExecuteWhile() are of course special instructions that tell the framework what to do: mainly to execute other (sub)coroutines under certain conditions. Instructions like ExecuteWhile() or Call() are how we can compose coroutines, let’s look at them more closely.

Coroutine Instructions

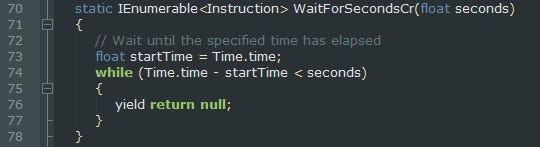

To understand how instructions works, let’s start by looking at a simpler example:

This is the utility coroutine that waits for a specific amount of time. It just sits in a loop checking the time since it was first called, and yields null. Once the time has elapsed, it simply terminates. null is interpreted by the framework to mean ‘sleep until next frame’. (Note: it is the exact same meaning as it is for Unity’s coroutines. How convenient!)

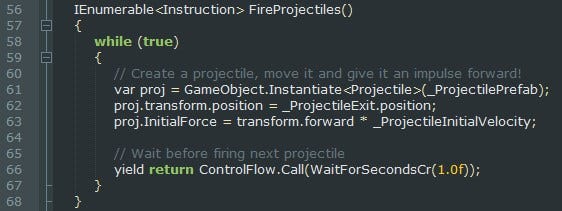

A coroutine can also yield a Call instruction, passing in another coroutine. This is in fact what the FireProjectiles() coroutine of our turret does to wait between firing projectiles.

ControlFlow.Call(...) is a utility method that returns a derived class of Instruction, and has a special meaning that the framework understands. In this case it means ‘start executing the coroutine I am passing in (stored in the instruction), and resume me once it has terminated’.

As you would expect, there are other control flow methods that return different Instructions, which in turn have different meanings. ControlFlow.ExecuteWhile(...) is an example of that.

The ExecuteWhile instruction passes a number of (sub)coroutines and a predicate, and the framework understands it to mean ‘Run all the coroutines in parallel for as long as the predicate is true’. But before diving into the details of the ExecuteWhile(...) code, we need to take a step back and explain how the runtime works.

It’s always Graphs

Behind the scenes, the framework is building a graph structure. The runtime executes the user code, until the user code yields an instruction, and then the runtime interprets that instruction accordingly. Depending on the yielded instruction, the runtime can create different kinds of sub nodes. The most common coroutine node is the one that executes an IEnumerator<Instruction> iterator.

.png/?width=646&auto=webp&quality=80&disable=upscale)

The graph is stored by the user however, by manually instantiating a root coroutine node. In our turret example, the root of the graph was declared when we added _Main to the Turret class.

There is no global coroutine manager or anything like that in the framework. If you want to use a coroutine, you instantiate it yourself, and then ‘tick’ it yourself as well.

After this, the graph structure is built on-demand, based on the instructions yield-ed by the user code. If the user indicates it wants to ‘Call’ a subroutine for instance, the runtime creates a new coroutine, sets it as the child of the current coroutine, and passes execution to it.

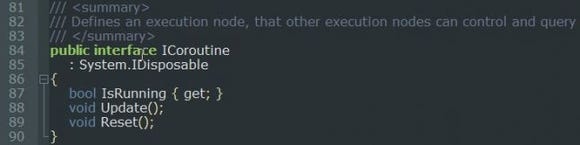

Which brings us to the interface that coroutine nodes need to implement: ICoroutine.

A basic node of the coroutine graph needs to be able to perform the following:

A basic node of the coroutine graph needs to be able to perform the following:

Be updated, of course, to do some actual work!

Indicate whether it is running or finished. That value is used, among other things, to determine when to return execution to a parent coroutine node.

Be reset, that is: restart whatever it is doing from the beginning.

Be disposed. This is crucial so that nodes can make sure they clean up after themselves in a predictable fashion. It also has the nice advantage that we can easily build a node pooling system once we know for a fact that disposed nodes are no longer used.

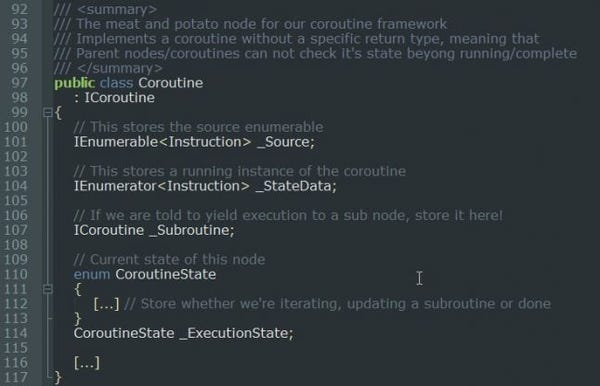

The Coroutine Node

The Coroutine node is the main workhorse of the framework. It is the node where user code is executed. The coroutine node is the one that understands the ‘Instructions’ I mentioned earlier. It can be represented like this:

.png/?width=380&auto=webp&quality=80&disable=upscale)

And in practice, it stores the following data:

The coroutine needs to know the original IEnumerable so it can restart enumeration from the beginning when reset. Of course it stores the IEnumerator to keep track of where it is in the coroutine (for all intents and purposes, the IEnumerator is the auto-generated state machine). After that, it has two extra members: a state variable and a subroutine.

The subroutine is null until a control-flow instruction is yield-ed and tells the coroutine node how to create the child node. In the case of a CallInstruction as seen earlier, the Coroutine.Update() method sets a flag indicating that instead of iterating its iterator (the user code), it should instead create and then execute a child node. Once that child node completes, it can reset the flag and continue iterating its iterator (the user code). In most cases, the subroutine it creates is itself an iterator-based Coroutine, but in other cases, such as with ExecuteWhile(...), it is a node of a different type.

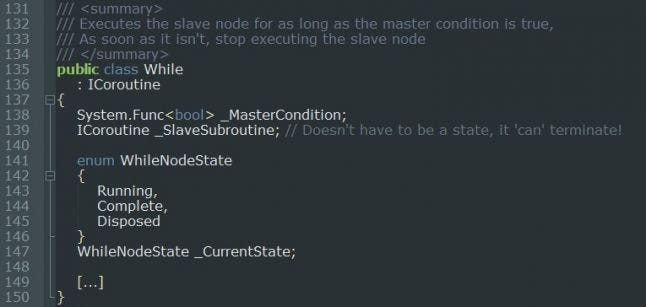

The While Node

.png/?width=343&auto=webp&quality=80&disable=upscale)

The While node stores two things:

A predicate (or in other words a function returning a boolean) that it will use to determine if it needs to continue executing its child.

A child node that it will execute normally, but interrupt as soon as the predicate becomes false.

There is also a state variable, but it isn’t strictly necessary. We’re using it to avoid having to check the master condition again and again when asked what the Running state of this node is.

Of course the Update() method for the While node is very straightforward: the While node checks the predicate every update, and if the returned value is true, executes the subroutine. Otherwise, it interrupts the subroutine (calling Dispose() on it so it gets a chance to clean up), and considers itself finished.

Note that the predicate isn’t evaluated at the time of the call, but instead at every update of the While node. Going back to the Turret example, you can see that the Condition passed to the While node is in fact a lambda expression, also called an Anonymous Closure.

But how does ExecuteWhile() take more than one node? And what happens to those nodes?

The Concurrent Node

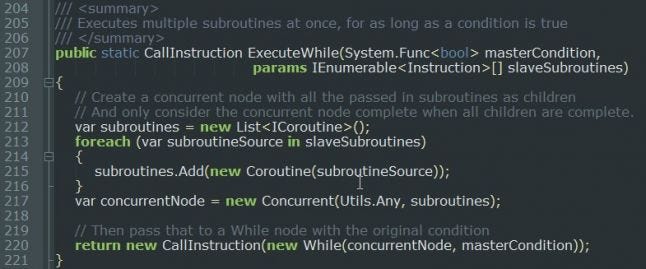

ExecuteWhile() is a utility method that takes a variable number of parameters (variadic function). Let’s look at it!

ExecuteWhile() does two things. First it creates a Concurrent node, passing it the subroutines, and then it creates the While node, with the predicate and the Concurrent node as a child. Finally it returns a CallInstruction indicating that the calling coroutine node should wait until the While node is finished to continue its own execution.

ExecuteWhile() does two things. First it creates a Concurrent node, passing it the subroutines, and then it creates the While node, with the predicate and the Concurrent node as a child. Finally it returns a CallInstruction indicating that the calling coroutine node should wait until the While node is finished to continue its own execution.

In essence our call to ExecuteWhile() in the turret example builds the following graph:

.png/?width=449&auto=webp&quality=80&disable=upscale)

If things are starting to look like Behavior Trees at this point, that’s normal, this is pretty much what we are building here. In fact, it is a fairly simple affair to implement more of the behavior graph nodes, such as priority nodes. But let’s look at a the concurrent node in more detail first, there is more than meets the eye there. Indeed, what does this Any() mean?

Well, as I hinted at the end of my previous article, as soon as you start talking about concurrence, you need to think about arbitration. And the question we need to answer is this: what is the ‘Running’ state of the concurrent node itself? Is it ‘Running’ as long as all its child nodes are ‘Running’? Or does it terminate as soon as one of its child nodes does?

The answer is that it depends, of course! It depends on what the user wants. So we need a way for the user to be able to specify how to arbitrate the ‘Running’ state. This is what Any() is in this case. It is telling the concurrent node that it should be running if any of the child nodes are running.

.png/?width=598&auto=webp&quality=80&disable=upscale)

IsRunning_Arbitration() takes an array of boolean and returns a boolean, and the Any() method that ExecuteWhile() is passing in simply performs a logical OR. We could of course implement any behavior we want, for instance indicating that the concurrent node should be running only as long as all its children are running.

The ExecuteWhileRunning(masterCoroutine, slaveCoroutine) call seen at the beginning of the Turret example is yet another specialized version of the While node that treats the running state of the master node as the condition whether or not to continue executing the slave node.

Disposing Coroutines

So now that we understand how the ExecuteWhile(...) coroutine works, what exactly happens to the slave node(s) when we decide to stop updating? What if a coroutine was holding onto some sort of resource, like a particle effect. Does it get a chance to turn it off, or does the coroutine stay in some sort of limbo state until garbage collection?

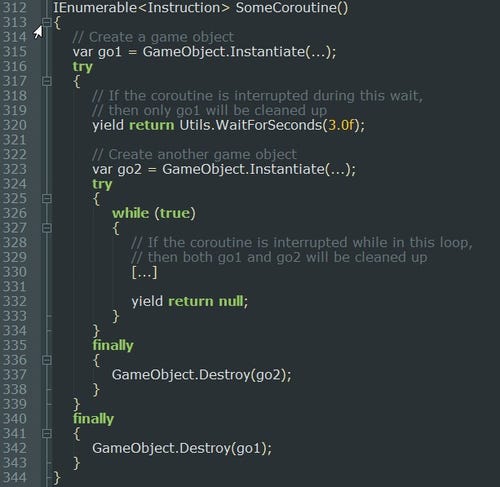

This is where the IDisposable interface comes into play, and more specifically, the fact that C# enumerators implement it. In fact they implement the IDisposable interface for this specific reason. If you provide an IEnumerator that reads from a file, you would want to make sure that the file gets closed when your user code stops enumerating, regardless of whether they reached the end of the file.

So when it came to C# iterators (the building block of our coroutines, the auto-generated state machines), the designers of the language had the great idea to come up with the following convention (from an MSDN article)

If you have a try ... finally block in your iterator, the language executes the finally block under the following conditions:

After the last statement of the try block is executed. (No surprise here.)

When an exception propagates out of the try block. (No surprise here either.)

When execution leaves the try block via yield break.

When the iterator is Disposed and the iterator body was trapped inside a try block at the time.

That last case can occur if somebody decides to abandon the enumerator before it is finished.

The language guarantees that if we dispose the iterator, then the finally block will be executed! And so, the coroutine framework makes sure to do just that whenever a node is disposed or reset, and this is how it can make sure that user code gets a chance to clean up when interrupted.

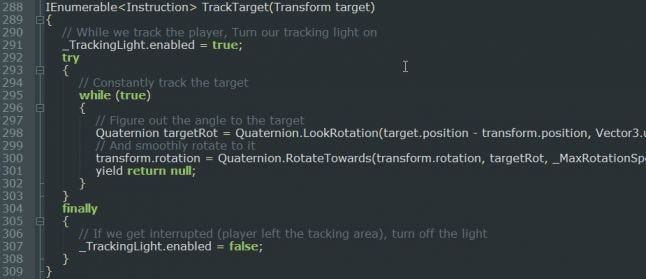

Looking at the Turret example, this is what the TrackTarget() coroutine looks like:

Even though the meat of the coroutine is an infinite while loop, we specify that once we’ve enabled the tracking light, we want to make sure we turn it off again, if the coroutine ever gets interrupted (disposed).

Even though the meat of the coroutine is an infinite while loop, we specify that once we’ve enabled the tracking light, we want to make sure we turn it off again, if the coroutine ever gets interrupted (disposed).

Note: Don’t let the finally keyword scare you into thinking we’re triggering exceptions here, we’re not. The finally block will get executed if an exception occurs, of course, but for the regular case (coroutines completing or being interrupted) we are not incurring the heavy cost of exception stack unwinding.

You can have as many try/finally blocks as you want, and they can also be nested, so you can make sure that only the things that need to be cleaned up are.

Returning values to caller

The last big thing to look at is how Coroutines return data to their caller. And unfortunately, that’s something that they just can’t do (at least not in .NET 3.5 where we don’t have support for async/await). It’s easy to understand how that’s not a trivial feature though, since Coroutines are meant to return intermediate values, they don’t have a notion of final return value.

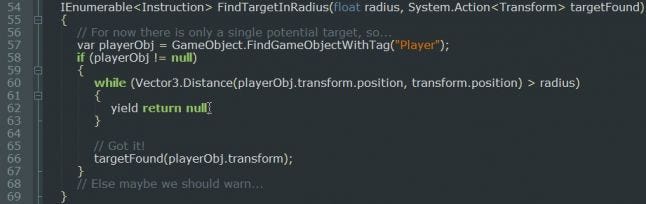

So in order to return values to parent coroutines, we rely on Closures again (or lambda expressions). Looking at the turret example one last time, and the FindTargetInRadius() coroutine specifically, we can see how that works.

FindTargetInRadius() takes a regular parameter (the radius), and an action (a function that returns void). When it finds a target, it invokes the action with the found target. From the Main() coroutine, we use it like this:

You can see the local variable target, and the trivial Lambda Expression being passed to FindTargetInRadius() which simply assigns the local variable. It’s a little roundabout way of doing things, but thanks to the shorthand that the compiler allows, it is not too bad.

More Nodes Types and Applications

Of course the real value of a framework such as this one is in whether it is easy to extend and modify to suit the needs of your game. In fact, these coroutines are a great starting point to build more complex graph-based AI systems. They give us an elegant way to compose and synchronize procedural behaviors.

This is what I’d like to investigate in more detail in the next article. Coroutines with concurrency and synchronization are really close in concept to Behaviour Graphs, which are extremely popular for AI these days. I'd also like to try out writing more traditional Hierarchical State Machines, as well as other AI structures such as the Voting and Subsumption architectures; all constructs that can be best represented by a graph of (mostly) asynchronous code.

Read more about:

Featured BlogsAbout the Author

You May Also Like