Are Hybrid AIs the Answer to Better Gaming AI?

Hybrid approaches, combining machine learning with design-based AIs, are delivering the most promising results for state-of-the-art AI in modern games. In this blog post we describe how we are approaching this in Unleash.

Introduction

There is much talk about machine learning in games, and how the technologies that are revolutionizing other industries one by one will completely change the future of gaming. However, games are still in many ways much more complex than driving a car, flying a drone, or correctly identifying a person in an image.

Nowadays the game industry is still dominated by traditional AI technologies such as state machines, behavior trees, and - increasingly - utility-based AI systems (article). These AIs are typically referred to as design-based AIs or expert systems. What is becoming increasingly apparent to many in the industry, and perhaps especially to the players, is that these systems often fail to create really advanced AI opponents that can match the experience of playing against human opponents in areas such as fun and creativity. The underlying reason is often that AI developers cannot take into account all the variations of tactics and strategies and implement them reasonably well in the design-based systems. For players, this often leads to repetitive and uninspired play by the AI, which is boring and easy to predict and counter once the player has memorized the patterns that the AI utilizes.

There are several reasons for this, many of which point to the lack of learning on behalf of the AI. There is thus a natural drift towards machine learning as the answer to better AI opponents, especially with the breakthroughs seen in AI these days.

There are several aspects of learning that apply to game AI in the same way as they apply to players. The AI has to learn how to play the game, it has to learn how to adapt and take advantage of any given situation, and it has to learn how to adapt to different playing styles by the player or other opponents.

The State-of-the-Art

Google DeepMind has recently shown how AIs can learn by themselves to play games, understand the rules, and find ways to complete or win games, although only in simple games such as early Atari games. When it comes to understanding a given situation, results in Chess and Go show that AIs can obtain a reasonable understanding of their situation in the game. When it comes to adapting to different playing styles, results are more sparse.

In other areas of machine learning, deep neural networks and other architectures are showing amazing feats such as recognizing images and driving cars, to name some of the more well-known and popular application areas. One aspect of these applications is that they can often be implemented with relatively simple machine learning architectures, such as deep neural networks, even though these can be quite big and quite deep. The deep learning networks for image recognition at Facebook are known to be over 100 layers deep. They thus begin to resemble biological brains in some ways, in the complexity and number of interconnections residing within one big network.

AI for the Gaming Industry

However, machine learning based systems for games often don’t have that luxury, as they have to exist under a number of constraints that limit the use of more general architectures. Some of these constraints are performance requirements and available CPU budgets, the ability to handle the complexity of the game state, and design directions to match the requirements for e.g. storytelling or gameplay in the game in general.

When it comes to performance requirements, game AI in many games cannot afford to run on massive hardware or server clusters, as e.g. Facebook’s networks for image recognition can. In some cases, multiple AIs must be able to run in parallel on e.g. mobile devices or similar relatively low-performing platforms. This limits the size and complexity of the machine learning architectures, as all computations have to happen within a frame budget of e.g. 1 or 2 milliseconds. Of course, different optimizations or load balancing technologies can be used, so that computations can be spread across several frames, but there are still limits to be taken into consideration.
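As a rough illustration of spreading AI work across frames, here is a minimal Python sketch of a time-sliced scheduler. It is not how Unleash implements load balancing; the names `score_candidates` and `FrameBudgetScheduler` are hypothetical, and the budget value is whatever the game can afford.

```python
import time
from collections import deque
from typing import Callable, Deque, Dict, Generator, Iterable

Task = Generator[None, None, None]


def score_candidates(candidates: Iterable[str],
                     score: Callable[[str], float],
                     results: Dict[str, float]) -> Task:
    """Hypothetical AI job: score one candidate per step, then pause."""
    for candidate in candidates:
        results[candidate] = score(candidate)
        yield  # pause point between units of work


class FrameBudgetScheduler:
    """Runs pending AI tasks until the per-frame time budget is used up."""

    def __init__(self, budget: float,
                 clock: Callable[[], float] = time.perf_counter):
        self.budget = budget  # same unit as the clock (seconds by default)
        self.clock = clock
        self.tasks: Deque[Task] = deque()

    def add(self, task: Task) -> None:
        self.tasks.append(task)

    def run_frame(self) -> int:
        """Advance tasks round-robin; return the number of steps run."""
        start = self.clock()
        steps = 0
        while self.tasks and self.clock() - start < self.budget:
            task = self.tasks.popleft()
            try:
                next(task)
                self.tasks.append(task)  # unfinished: requeue for later
            except StopIteration:
                pass  # finished: drop the task
            steps += 1
        return steps
```

The game loop would call `run_frame()` once per frame with a budget such as `0.002`, so a long evaluation simply takes more frames instead of stalling one. The budget is only checked between steps, so each step must itself be short.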

This is especially challenging as the game AIs have to handle the complexity of the game state, which in many cases is considerable. It has already been acknowledged that in games such as StarCraft II, the game state is several orders of magnitude more complex than in games such as Chess and Go. Hence, it cannot yet be expected that machine learning can, within reasonable time and performance budgets, automatically learn the complete game state and act within it. Instead, solutions often require human insight or intuition to create ways of preprocessing the game state to reduce complexity and dimensionality. An example of this is the feature maps in one of the most recent StarCraft II APIs, which select and display specific information that developers have determined is important for that particular game.
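To make the idea of designer-chosen preprocessing concrete, here is a small Python sketch that reduces a list of raw entities to a few coarse count grids, one per selected entity kind. It is only loosely inspired by the feature-map idea; the `Entity` record, the kind names, and `build_feature_maps` are assumptions for illustration and not part of any StarCraft II or Unleash API.

```python
from typing import Dict, List, Tuple

# Hypothetical raw game-state record: (x, y, kind)
Entity = Tuple[int, int, str]


def build_feature_maps(entities: List[Entity], width: int, height: int,
                       cell: int, kinds: List[str]) -> Dict[str, List[List[int]]]:
    """Count entities of each selected kind per coarse grid cell."""
    cols = (width + cell - 1) // cell   # ceiling division
    rows = (height + cell - 1) // cell
    maps = {kind: [[0] * cols for _ in range(rows)] for kind in kinds}
    for x, y, kind in entities:
        if kind in maps:  # kinds the designer did not select are ignored
            maps[kind][y // cell][x // cell] += 1
    return maps


entities = [(0, 0, "cannon"), (1, 1, "cannon"), (5, 5, "frost"), (7, 2, "decoration")]
maps = build_feature_maps(entities, width=8, height=8, cell=4, kinds=["cannon", "frost"])
```

Here an 8x8 map shrinks to two 2x2 grids, and the "decoration" entity is dropped entirely. Both the grid resolution and the list of kinds are human design decisions, which is exactly the kind of insight the learning system does not have to discover on its own.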

The third aspect is especially relevant to games. Game AI is often not about optimal AI solutions to problems. AI opponents, for example, are often not required to win the game, or to play the game as well as the technology allows, as is often the case with machine learning applied to Chess and Go. Instead, the role of the game AI is to improve the experience for the player, i.e. make the game fun, entertaining and immersive. The game AI is for example required to play a role and behave in ways that are in line with the characters it is controlling. Game AIs are therefore tightly linked to narrative and game design, and game designers need tools to control and shape their behavior to fit these aims. Pure machine learning-based AIs do not necessarily provide for this, and hence other approaches are required.

The Practical Challenges of Machine Learning

In our forthcoming game Unleash, we experienced these challenges firsthand in our quest to develop challenging, machine learning-based AIs for the virtual opponents. Basically, these AIs should behave and play in the same way as human players, and thus be fun, challenging and creative.

Unleash is, alongside many other games, a game with a complexity beyond that of Chess and Go. Although the gameplay in itself is rather easy to learn, there are several layers of metagame that you need to master to truly excel at the game. Being a game based on the Line Tower Wars mods from WarCraft 3, you need to build mazes, spawn monsters, and balance economy, offense and defense throughout the game. You need to bluff, predict, and act on opportunities in much the same way as in other complex games such as StarCraft. The player also needs to manage the psychological metagame that makes games such as Poker more than just a game of statistics.

In the beginning of our AI research, we started with several advanced technologies such as neuroevolution and deep learning that we more or less threw directly at the game in the shape of opponent AIs, to see how they performed in their raw form. They performed abysmally. We quickly realized that there are many hard problems in Unleash that are actually rather difficult to solve for existing machine learning based technologies.

One of them is how to build an efficient maze. As with many tower defense games you need to build a maze that the monsters pass through while you damage them with weapons of different dispositions. The maze should ideally be as long as possible and deal as much damage as possible to the monsters, so that no monster reaches the end. However, the monsters are vulnerable to different types of weapons, and some weapons need to be placed before others in the maze to be really effective. Unleash is also special in that we have invented all sorts of monsters that bypass certain maze designs in certain ways, meaning that there is no perfect maze (that we know of). Any maze has to be adapted to the monsters sent by the other players. Hence, we were not only faced with having the AIs learn how to build a maze in the first place. We also needed the AIs to learn how to build a smart maze in the face of all the different scenarios, early and late game, they could be faced with.

Another problem was for the AIs to learn which monsters to spawn. This is more or less the reverse problem of building a maze. In Unleash, as in many other games, it’s often not enough to just build a generic army and send it towards your enemy. Instead, you need to spy on the enemy’s defenses, and configure your army to take advantage of your enemy’s exact weaknesses. In addition, the army - in Unleash, the wave of monsters - must be made up of the right combination of monsters that can boost each other or interact in ways that enable them to bypass the maze as efficiently as possible. In many cases there is also a timing or order-of-battle aspect, as some monsters should ideally enter the maze first or last, depending on their role. The number of available combinations explodes in this case.

Finally, as the player in Unleash both builds a maze and spawns monsters, the AIs need to learn how to balance offense and defense. One very important point of Unleash is that the resources you have to spawn monsters and build a maze are increased every time you spawn an additional monster. Offense is therefore critical to your economy, and critical to winning the game. A large part of mastering the game, or even posing a decent threat to players, is the ability to optimize the amount of the AI’s resources allocated to spawning monsters, without jeopardizing the strength of the maze. Too much spending on monsters means a strong economy, but runs the risk of the maze being penetrated by the opponent’s monsters. Too much spending on the maze means a strong defense, but that you fall behind on economy. Both scenarios usually mean losing the game. There is thus a significant optimization problem that is specific to the context, the actual situation, and introduces the many advanced aspects of decision-making known from games such as Chess and StarCraft, such as sacrifice and delayed rewards.

There are many more aspects to Unleash, such as upgrading technology, rushes, information warfare, etc., that pose even more challenges to the AIs, but I will not cover them here.

We realized that there were many underlying challenges that kept emerging when we trained the AI. In many cases in the beginning, the AI would reach a certain plateau, where it started to understand parts of the game, such as which weapons to build in the maze to defend against certain monsters, or which monsters to spawn to best penetrate certain mazes. But learning in general was slow, and often resulted in very monotonous strategies. Many of these problems could be traced back to fundamental problems of setting up a machine learning environment, such as how you define the game state, how you define the action space, and how you set up the reward function.
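To show what "defining the game state, the action space, and the reward function" can look like in practice, here is a deliberately tiny Python sketch. None of it is taken from Unleash: the fields, the action kinds, and the reward weights are all hypothetical, and a real setup would be far richer.

```python
from dataclasses import dataclass
from enum import Enum


class ActionKind(Enum):
    """Hypothetical action space: what the AI may do on a decision step."""
    BUILD_WEAPON = "build_weapon"
    SPAWN_MONSTER = "spawn_monster"
    WAIT = "wait"


@dataclass(frozen=True)
class Action:
    kind: ActionKind
    target: str = ""  # weapon or monster type; empty for WAIT


@dataclass(frozen=True)
class GameState:
    """Hypothetical, heavily reduced view of the game state."""
    gold: int
    income: int
    lives: int
    opponent_lives: int


def reward(before: GameState, after: GameState) -> float:
    """Toy shaped reward: win/loss dominates, small terms guide learning."""
    if after.opponent_lives <= 0:
        return 1.0
    if after.lives <= 0:
        return -1.0
    damage_dealt = before.opponent_lives - after.opponent_lives
    damage_taken = before.lives - after.lives
    income_gain = after.income - before.income
    # The weights below are arbitrary illustration values, not tuned ones.
    return 0.05 * damage_dealt - 0.05 * damage_taken + 0.01 * income_gain
```

Each of the three definitions shapes what the AI can learn. A reward that only pays out on winning makes early learning very slow, while a shaped reward like this one speeds it up but can push the AI towards monotonous strategies that simply maximize the shaping terms.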

The Need for Parallel Approaches

While the training of the machine learning-based AIs progressed at a slow rate with few successes, we needed better AI and more reliable opponents for many other testing and development tasks in parallel. We implemented these through our Utility AI, where we could design special-purpose AIs for testing and quality assurance, such as AIs that spawned specific monsters or built specific mazes for in-game testing or balancing of weapons and monsters, or AIs that could adapt to specific defenses and provide a decent playing experience.

However, as the game development progressed, we also became better players of Unleash ourselves, and we used the knowledge we acquired to start building very hard utility-based AIs. In that process, we realised that many of the problems that plagued the machine learning-based AIs could easily be solved with utility-based systems using our expert knowledge, while some of the problems that were hard to solve using utility-based systems could theoretically be solved using machine learning-based approaches.

Building efficient mazes, based on best-practices from our internal playtests, was for example easy to build using utility-based systems. We could quite easily describe and design the algorithm that would build the maze and place weapons in places we knew from experience were good to defend against certain monsters using designed configurations.

Spawning monsters based on information about the opponent’s base, however, was a complex task in utility-based systems, as the number of combinations and conditions that need to be taken into account is staggering. Consequently, finding the right combinations of monsters for a given task ended up as an ongoing task when designing the utility-based AIs. We realized that deep learning could be ideal for this type of optimization under multiple constraints.

Enter the Hybrid AIs

In the process, we realized that we could merge the two approaches to create hybrid AIs - i.e. combinations of machine learning-based and utility-based AIs. The idea is that in specific areas where the combinations, possibilities or game state are huge, or where e.g. learning is required, machine learning techniques can be used to advantage. In other areas, where more algorithmic implementations are possible, or where we as human designers know good or perhaps the best solutions in advance, utility-based approaches can be used. The hybrid approach also carries the advantage that we can better control the behavior of the AI where relevant, to ensure that it adheres to our design goals. This was the third requirement for game AIs, as mentioned above. We can for example use the utility AI to tweak the balance between offense and defense to create different levels of aggression, or we can make different configurations of mazes available to different AIs to give them a playing style. We might also train multiple specialized networks on different reward functions to simulate preferences for spawning air versus ground monsters, and thus give some AIs a personality or specific playing style. There are many options here to implement design decisions and still maintain the strengths of both AI paradigms, especially machine learning.
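The division of labor described here can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the Unleash implementation: the `aggression` knob, the `incoming_threat` input, the utility formulas, and the `learned_score` callable (standing in for a trained network) are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Wave = Tuple[str, ...]  # hypothetical: a wave is a tuple of monster names


def choose_wave(candidate_waves: List[Wave],
                learned_score: Callable[[Wave], float]) -> Wave:
    """Learned part: pick the wave the (stand-in) model rates highest."""
    return max(candidate_waves, key=learned_score)


def choose_action(state: Dict[str, float], aggression: float,
                  candidate_waves: List[Wave],
                  learned_score: Callable[[Wave], float]):
    """Utility part: designer-authored scores decide offense vs defense."""
    threat = state["incoming_threat"]  # 0..1, how endangered the maze is
    defense_utility = threat * (1.0 - aggression)
    offense_utility = (1.0 - threat) * aggression
    if offense_utility >= defense_utility:
        return ("spawn", choose_wave(candidate_waves, learned_score))
    # Maze building stays rule-based, following a designed configuration.
    return ("build", "designed_maze_step")


waves = [("ground", "ground"), ("air", "air", "ground"), ("air",)]
prefers_air = lambda wave: float(wave.count("air"))  # stand-in for a network
aggressive = choose_action({"incoming_threat": 0.5}, 0.8, waves, prefers_air)
cautious = choose_action({"incoming_threat": 0.5}, 0.2, waves, prefers_air)
```

With the same game state, the aggressive configuration spawns the wave the scorer likes best, while the cautious one builds. Swapping in a scorer trained on a different reward function would change the wave preference without touching the designer-controlled part.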

The hybrid approach also answers another question that arose during the process of designing the AIs for Unleash: whether we should apply one large, deep learning-based neural network to handle all inputs and outputs, or whether we should design the machine learning-based AI in a more hierarchical form.

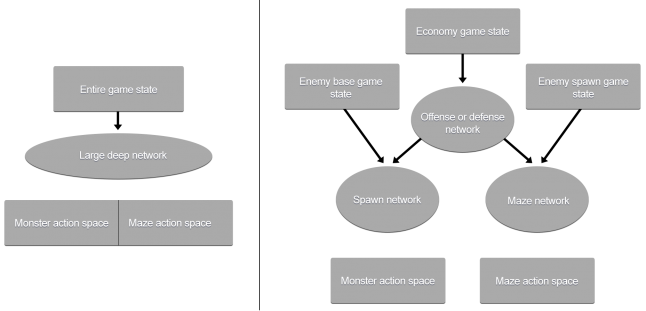

Figure 1: Two Different AI Architectures of Unleash

The figure to the left shows one large, deep network, shaping its own architecture. The figure to the right shows the hierarchical architecture, with each network having specific tasks.

The challenge here was that, on the one hand, we would like to create a general approach to AI, where we as designers did not impose our view on the architecture of the AI. However, with the number of inputs from the game state and the number of outputs in the action space, the network quickly became very large. In addition, we could not do compartmentalized training, i.e. only training the network in offense or defense. Finally, we feared that performance-wise it would result in a lot more computations. Hence, we also investigated the option of creating a hierarchical architecture, where specific decisions could be carried out by smaller networks with specialized tasks. The idea here was that one network would have the task of deciding between allocating resources for offense (spawning monsters) and defense (building the maze). Once this decision was made, the network that was chosen could then access the relevant part of the game state and make detailed decisions about which monsters to spawn or what weapons to build in the maze.
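The hierarchical idea can be sketched as a two-level dispatch in Python. The networks are replaced by plain callables, and the layout of `game_state`, the branch names, and the action names are assumptions made for this example only.

```python
from typing import Callable, Dict, List, Sequence, Tuple

# Stand-in for a trained network: feature vector in, one score per output.
Network = Callable[[Sequence[float]], List[float]]

BRANCHES = ("offense", "defense")


def argmax(values: Sequence[float]) -> int:
    return max(range(len(values)), key=values.__getitem__)


def decide(game_state: Dict[str, Sequence[float]],
           allocator: Network,
           specialists: Dict[str, Network],
           action_names: Dict[str, List[str]]) -> Tuple[str, str]:
    """Top network picks a branch; that branch's network picks the action."""
    # The allocator only sees a small summary of the game state.
    branch = BRANCHES[argmax(allocator(game_state["summary"]))]
    # The chosen specialist only sees its own slice of the game state.
    scores = specialists[branch](game_state[branch])
    return branch, action_names[branch][argmax(scores)]


allocator = lambda s: [s[0], 1.0 - s[0]]
specialists = {"offense": lambda f: list(f), "defense": lambda f: [-v for v in f]}
action_names = {"offense": ["runner", "flyer"], "defense": ["cannon", "frost"]}
state = {"summary": [0.7], "offense": [0.1, 0.9], "defense": [0.3, 0.2]}
choice = decide(state, allocator, specialists, action_names)
```

Only two small models run per decision, and each can be trained on its own task, which addresses the compartmentalized-training and performance concerns raised above. The price is that the split into branches is itself a designer-imposed view of the game.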

Conclusion and Next Steps

With the hybrid approach, the utility-based AI that encapsulates the specialized deep learning networks resembles the hierarchical architecture. This architecture also resembles how the biological brain is organised, with specific centers taking care of specific tasks.

What we see now in Unleash is that the opponent AIs are very hard to beat, that they adapt to the situation in the game, and that we can still control and design them to our liking. We are still working on them, and they will become even more advanced and adaptive. It is our expectation that in the years to come, the hybrid approach will be seen in many games that start using machine learning for opponent AIs. Eventually, however, we do expect complete machine learning-based solutions to take over. The ultimate aim is an architecture that morphs itself to the tasks it needs to accomplish, and ideally finds the optimal shape for this. But it will likely take some time before we see this architecture emerge.
