
Optimizing AI for The Magic Circle

The Magic Circle places no artificial limits on the number of creatures you can drag around with you in the world. This is a simplified description of some of the major optimizations that went into making that possible.

WARNING: This post contains spoilers on the gameplay mechanics of the game.

The Magic Circle is a game about a broken game in which you eventually gain the ability to edit properties within almost any dynamic entity you come across. This gives players the ability to turn any creature in the game into an ally that can follow them around the map.

During development, we had originally planned to limit the number of AIs that the player can drag around the map for performance reasons, but every in-game fictional justification we could think of just felt overly contrived. Why would creatures care if you had too many allies when it has already been established that their behavior is nothing more than the properties you set? Our design aesthetic for the game was to say yes to the player's ideas as much as possible.

We thought about perhaps having some finite resource such as "ally slots" or "Hack juice" that maintained a hacked status on a creature's allegiance, but it was important to us, as developers, to make the player not feel like they had to micromanage their army based on some invisible abstract resource when the fictional simulation is telling them that they have stolen the powers of the game's creators. Ultimately, we want the player to feel more like a designer and not like some kind of weirdo programmer mumbling about the Hack juice. As a result, a lot of optimizations went into The Magic Circle to enable an army that includes all of the creatures in that world.

I'll mainly concentrate on the two major parts of AI that took up the most CPU time: Visual Perception and Hazard Avoidance. There were plenty of other optimizations done beyond just these two aspects, such as minimizing dynamic allocations/garbage collection and various graphics optimizations, but those solutions go beyond the desired scope of this post.

VISUAL PERCEPTION

Hearing simulation and touch are relatively cheap compared to the ability to see things and react to them. On a regular basis, every active creature in the game needs to do the following for EVERY potential active target (creatures and player):

Determine if within visual range

Determine if it has line of sight

Determine if friend or foe

... in that order, with early exits at every possible chance. Even if a creature currently has an enemy, scanning continues to find out if there is a better enemy to target. There are 81 creatures in our main gameplay map (Overworld), and the player can add to this number by spawning more creatures with the Parasite special. If every creature ran those checks every frame against every other creature plus the player, then we would have 81 x (80 + 1) = 6,561 scans happening every frame, and the framerate would slow to a crawl on most machines. Incidentally, we do place a limit on the number of parasites the player can spawn, and that is conveyed through the resource known as "life" in the game, which is an analogy to memory allocation.
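To make the ordering concrete, here is a minimal sketch of a scan with early exits, cheapest test first, along with the worst-case arithmetic above. It is not the game's code: the `Creature` fields, `IsVisibleFoe`, and the `hasLineOfSight` / `isFoe` callbacks are hypothetical stand-ins for whatever the real brain uses.

```csharp
using System;

// Hypothetical minimal creature: only what the scan needs.
public sealed class Creature
{
    public float X, Z;        // position on the ground plane
    public float SightRange;  // maximum visual range
}

public static class PerceptionSketch
{
    // Worst case: every creature scans every other creature plus the player.
    public static int WorstCaseScansPerFrame(int creatureCount)
    {
        int targetsPerCreature = (creatureCount - 1) + 1; // other creatures + player
        return creatureCount * targetsPerCreature;
    }

    // Checks run in the order listed in the post, exiting as early as possible.
    public static bool IsVisibleFoe(
        Creature self,
        Creature target,
        Func<Creature, Creature, bool> hasLineOfSight, // assumed raycast wrapper
        Func<Creature, Creature, bool> isFoe)          // assumed friend/foe rules
    {
        // 1. Visual range: squared distance avoids a square root.
        float dx = target.X - self.X;
        float dz = target.Z - self.Z;
        if (dx * dx + dz * dz > self.SightRange * self.SightRange) return false;

        // 2. Line of sight: only reached for targets that are in range.
        if (!hasLineOfSight(self, target)) return false;

        // 3. Friend or foe: only reached for targets that can be seen.
        return isFoe(self, target);
    }
}
```

With 81 creatures, `WorstCaseScansPerFrame(81)` gives the 6,561 figure quoted above. A target that fails the range test never triggers the line of sight callback at all.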

Concept #1: AI Level of Detail (AILOD)

To reduce the number of creatures that need scanning and need to be scanned, we have an AILOD system that is constantly determining whether a creature is gameplay-relevant based on distance, screen rendering detection, and recent/current gameplay status. These conditions for determining gameplay relevance have been tuned and iterated on many times throughout development. Some manifestations of this are as follows:

If a creature is an ally of the player or is in combat or is onscreen, then it will remain relevant to the scanning process until those conditions have ended for a certain amount of time. This lets us cut the number of scans by keeping creatures responsive only in situations near the player.

Creatures that are not currently relevant won't bother with the extra calculations and frame-by-frame raycasts that lead to smoother, more curved steering when following their path.

Creatures will not try as hard to avoid bumping into solid obstacles (using raycast whiskers) when they are offscreen.

If a creature is completely dormant because the player has been away for a long time, then everything gets shut off, and its body no longer contributes to the CPU load of AI or physics.

In essence, creatures tend to be a little drunk when you aren't looking and will sometimes fall asleep if you stay away from them long enough.
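As a rough illustration of the "remain relevant until those conditions have ended for a certain amount of time" rule, the sketch below tracks the last moment any condition held and compares it against a linger duration. The class name, the `lingerSeconds` parameter, and the choice of inputs are assumptions for this example. The game's real conditions also involve distance and were tuned over many iterations.

```csharp
using System;

// Hypothetical per-creature relevance tracker for an AI level-of-detail system.
public sealed class AiLodState
{
    private readonly float lingerSeconds;                     // assumed tuning value
    private float lastRelevantTime = float.NegativeInfinity;  // never relevant yet

    public AiLodState(float lingerSeconds)
    {
        this.lingerSeconds = lingerSeconds;
    }

    // Returns true while any condition holds, and for lingerSeconds afterwards.
    public bool IsRelevant(bool isPlayerAlly, bool inCombat, bool onScreen, float now)
    {
        if (isPlayerAlly || inCombat || onScreen)
        {
            lastRelevantTime = now;
        }
        return now - lastRelevantTime <= lingerSeconds;
    }
}
```

A creature that was onscreen at t = 1 with a 5 second linger is still treated as relevant at t = 4 and drops out by t = 7. Cheaper steering and, eventually, full dormancy could key off the same flag.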

Concept #2: Intermittent Thinking

Creatures do not need to scan for targets every frame. Players tend to be forgiving of minor reaction delays, as these match real-world expectations for "sentient" beings. For this reason, the scanning functionality is wrapped inside the Think() function of the creature's brain, and that function does not get called every frame. Instead, it is induced round-robin style by both the global NPC manager and the player's update function, which induces thinks for one ally and one enemy per frame. In Update #10, a limiter was placed on responses to Think induction to prevent most creatures from running their Think() function until 0.3 seconds have elapsed since the last Think(). This greatly decreased the CPU load per frame without a noticeable sacrifice in fidelity for those who accept that creatures may have a 0.3 second reaction time.
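The round-robin induction plus the 0.3 second limiter could look roughly like the sketch below. Only the 0.3 second figure comes from the post. `Brain`, `TryThink`, and `NpcManager` are hypothetical names, and the sketch covers just the manager side, not the extra ally/enemy induction done by the player's update.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical brain that refuses to think more often than every 0.3 seconds.
public sealed class Brain
{
    public const float MinThinkInterval = 0.3f; // limiter value quoted in the post
    private float lastThinkTime = float.NegativeInfinity;

    public int ThinkCount { get; private set; }

    // Called when something "induces" a think; returns whether it actually ran.
    public bool TryThink(float now)
    {
        if (now - lastThinkTime < MinThinkInterval) return false;
        lastThinkTime = now;
        ThinkCount++; // the expensive target scan would happen here
        return true;
    }
}

// Hypothetical manager that induces one brain per frame, round-robin.
public sealed class NpcManager
{
    private readonly List<Brain> brains;
    private int next;

    public NpcManager(List<Brain> brains)
    {
        this.brains = brains;
    }

    public void Update(float now)
    {
        if (brains.Count == 0) return;
        brains[next].TryThink(now);
        next = (next + 1) % brains.Count;
    }
}
```

Because the limiter lives in the brain rather than in the manager, several systems can induce thinks without coordinating with each other, and a creature that gets induced twice in quick succession simply ignores the second request.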

Incidentally, this concept of amortizing the cost of multi-part operations over multiple frames is used throughout various parts of the game, such as A* traversal and dynamic generation of the three dimensional navigation grid used by flying creatures.
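One generic way to spread a multi-part operation over frames is to express it as an iterator and advance it by a fixed number of steps per frame. The sketch below illustrates that general idea only. `AmortizedJob`, `BuildCells`, and the step budget are made up for this example and are not how the game's A* or navigation grid code is written.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical wrapper that advances a long-running job a few steps per frame.
public sealed class AmortizedJob
{
    private readonly IEnumerator<int> steps;

    public bool Done { get; private set; }

    public AmortizedJob(IEnumerable<int> work)
    {
        steps = work.GetEnumerator();
    }

    // Runs at most maxSteps units of work; returns how many actually ran.
    public int RunFrame(int maxSteps)
    {
        int ran = 0;
        while (ran < maxSteps)
        {
            if (!steps.MoveNext())
            {
                Done = true;
                break;
            }
            ran++;
        }
        return ran;
    }
}

public static class GridWork
{
    // Stand-in for an expensive build: each yield marks one resumable unit.
    public static IEnumerable<int> BuildCells(int cellCount, List<int> output)
    {
        for (int i = 0; i < cellCount; i++)
        {
            output.Add(i); // pretend this is the costly per-cell computation
            yield return i;
        }
    }
}
```

With 10 cells and a budget of 4 steps per frame, the job finishes on the third frame, and no single frame pays for more than 4 cells.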

Concept #3: Cache friend/foe results

The evaluation of whether to aggro or follow another creature is based on a very complex set of rules that can change at any time based on player action. For example, creatures will respect bodyguard duties and attack other creatures that threaten a fellow bodyguard as long as it is not allied to the attacker's creatureID. They will also attack neutral creatures if that neutral creature is currently engaged in combat with a victim that evaluates as an ally.

Contrary to my instincts, deep profiling revealed that friend/foe determination was more expensive than line of sight determination. The friend/foe evaluation process involves iterating through the player-controlled list of allies or enemies for each creature in order to figure out if this is a combat, follow, or ignore situation... as in ignore a fellow bodyguard who happened to hit it with radial AOE flameburst damage.

I began by creating a hashtable of past results that would get cleared if the player altered a creature's behavior. The hope was to change the cost of lookup from O(n) for each possible ally/enemy in the list to O(1) for the hashed value of the creature's typeID being queried for that creature. It turned out that the overhead of updating the hashtable and checking whether a cached result exists cost more than the O(n) lookup when iterating through a simple list. What I did instead was cache only the most recent values for true or false:

    if (queryID == mostRecentQueriedIDThatWasTrue)
    {
        return true;
    }
    else if (queryID == mostRecentQueriedIDThatWasFalse)
    {
        return false;
    }

This was enough to greatly reduce the cost of friend/foe determination. Most queries hit the same value multiple times in a row, because a single creature's scanning process tends to pass over multiple nearby creatures that happen to share the same ID.
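For readers who want to see the whole pattern in one place, here is a self-contained sketch of a two-entry "most recent true / most recent false" cache wrapped around a slow evaluation. The class name, the `slowEvaluate` delegate, and the `Invalidate` method are assumptions for illustration. The post only says the earlier hashtable was cleared when the player altered behavior, so treating the small cache the same way is a guess.

```csharp
using System;

// Hypothetical two-entry cache in front of an expensive friend/foe evaluation.
public sealed class FriendFoeCache
{
    private readonly Func<int, bool> slowEvaluate; // stand-in for the O(n) list walk
    private int? mostRecentIdThatWasTrue;
    private int? mostRecentIdThatWasFalse;

    public int SlowCalls { get; private set; }     // instrumentation for the example

    public FriendFoeCache(Func<int, bool> slowEvaluate)
    {
        this.slowEvaluate = slowEvaluate;
    }

    public bool Query(int queryId)
    {
        // Two integer comparisons: far cheaper than hashing or walking a list.
        if (mostRecentIdThatWasTrue == queryId) return true;
        if (mostRecentIdThatWasFalse == queryId) return false;

        SlowCalls++;
        bool result = slowEvaluate(queryId);
        if (result) mostRecentIdThatWasTrue = queryId;
        else mostRecentIdThatWasFalse = queryId;
        return result;
    }

    // Assumed hook: call when the player edits a creature's allies or enemies.
    public void Invalidate()
    {
        mostRecentIdThatWasTrue = null;
        mostRecentIdThatWasFalse = null;
    }
}
```

Scanning a crowd whose IDs arrive as 2, 2, 2, 3, 3, 2 triggers the slow path only twice, once per distinct ID, because one remembered "true" ID and one remembered "false" ID cover the whole run.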

HAZARD AVOIDANCE

Creatures avoid zap walls unless they have lightning rod. They also avoid lava unless they are fireproof. This avoidance behavior can be overridden by the player's waypoint tool, but outside of that, the creatures are constantly feeling around with invisible whiskers to see if there is lava or electricity nearby to steer away from. Those whiskers are expensive because they involve multiple raycasts every frame for every moving creature in multiple directions horizontally and then downward in the case of lava detection.

Concept #1: Roving Whiskers instead of Starburst raycasts

Raycasts are expensive. Wherever we could get away with it, we use whiskers that move very quickly to feel out for certain obstacles, such as the player or other creatures, instead of a multi-directional starburst pattern in a single frame.

    // Cosine-eased value between 0 and 1, driven by the whisker cycle timer.
    float fWhiskerCycle = 0.5f * (1.0f + Mathf.Cos(Mathf.PI * m_whiskerCycleTimer / kWhiskerCycleDuration));
    // Map the cycle to a sweep angle between 0 and 90 degrees (in radians).
    float fWhiskerAngleRad = 0.5f * Mathf.PI * fWhiskerCycle;
    ...
    //Right whisker...
    whisker = Vector3.RotateTowards(intendedDirection, m_owningBrain.transform.right, fWhiskerAngleRad, 0);
    ...
    //Left whisker...
    whisker = Vector3.RotateTowards(intendedDirection, -m_owningBrain.transform.right, fWhiskerAngleRad, 0);

In situations where it makes sense, objects felt by whiskers are managed separately to ensure proper avoidance as movement continues.

Concept #2: Hint Volumes

Hazards such as electrocuting zap walls and lava are not present everywhere in the level, and so it seems wasteful to pay the cost for those whiskers whenever there is no hazard nearby. We eventually introduced the concept of hint volumes that wrap around the area near hazards and will turn those whiskers on only when the creature enters that volume.

As a workflow rule of thumb, we consider it important to require as little manual placement by humans as possible because more often than not, content is the bottleneck over code. Plus humans are prone to error... especially when it comes to repeating the same thing in multiple places. So having another human manually place hint volumes around hazards and set its data properly was not an option we wanted to explore.

Instead of manual placement, we autogenerate hint volumes upon startup by analyzing every damage volume in the world and then creating an extended bounding box around those damage volumes to serve as hint volumes. The cost of this startup evaluation was negligible compared to the noticeable performance gain in areas away from the lava river in Overworld.
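The startup pass described above amounts to expanding each damage volume's bounding box by some margin and then testing creature positions against the result. The sketch below uses `System.Numerics.Vector3` so that it runs outside Unity. The `Aabb` struct, the uniform `margin`, and the helper names are hypothetical. In Unity, something like `Bounds.Expand` and trigger colliders would likely do the same job.

```csharp
using System;
using System.Collections.Generic;
using System.Numerics;

// Hypothetical axis-aligned bounding box.
public struct Aabb
{
    public Vector3 Min;
    public Vector3 Max;

    public Aabb(Vector3 min, Vector3 max)
    {
        Min = min;
        Max = max;
    }

    public bool Contains(Vector3 p)
    {
        return p.X >= Min.X && p.X <= Max.X
            && p.Y >= Min.Y && p.Y <= Max.Y
            && p.Z >= Min.Z && p.Z <= Max.Z;
    }
}

public static class HintVolumes
{
    // Run once at startup: one expanded box per damage volume.
    public static List<Aabb> Generate(IEnumerable<Aabb> damageVolumes, float margin)
    {
        var grow = new Vector3(margin); // same margin on every axis (assumption)
        var hints = new List<Aabb>();
        foreach (Aabb damage in damageVolumes)
        {
            hints.Add(new Aabb(damage.Min - grow, damage.Max + grow));
        }
        return hints;
    }

    // Hazard whiskers only need to run while the creature is inside a hint volume.
    public static bool HazardWhiskersNeeded(List<Aabb> hints, Vector3 position)
    {
        foreach (Aabb hint in hints)
        {
            if (hint.Contains(position)) return true;
        }
        return false;
    }
}
```

The margin is the main tuning knob: it has to be large enough that a creature moving at full speed turns its whiskers on before it can reach the hazard, and small enough that most of the map stays outside every hint volume.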

OPTIMIZATIONS NOT DONE

Some potential optimizations were considered, but ultimately not done...

Cliff Edge Detection

In addition to avoiding hazards, creatures avoid cliff edges, which also involves multiple raycasts. Overworld is filled with cliff edges. Autogenerated axis-aligned hint volumes for edges would cover most of the world's playable space, which would make performance with those hint volumes generally on par with that feature always being on. Manual placement would give better results, but that would require extensive human labor that might not be worth the return on investment given that our current performance is generally good enough for most semi-modern machines (such as a 4-year-old MacBook Pro running Linux, in addition to PlayStation 4 and Xbox One).

Multi-threading

The Magic Circle was developed with the Unity Engine. Currently, Unity does not allow calls to engine functions from a separate thread. Coroutines are not true separate threads: they run on the main thread and merely act like a logical separate thread for organizational purposes rather than performance purposes. We can separate some data into a generic format for marshalling to another thread for deferred calculations, but that would result in negligible savings compared to marshalling raycasts. The pathfinding and visibility management middleware we use (AStar and SECTR) already make use of this technique for multithreading. For gameplay, raycasts make up a large share of the CPU cost when running The Magic Circle, and those can only be called from the main thread. There is now a feature request for Unity to support asynchronous raycasts, which would allow us and many others to make use of multiple cores by marshalling any raycast that does not need an instant result onto another core:

https://feedback.unity3d.com/suggestions/asynchronous-raycasts

Any votes on this feature would be greatly appreciated.

STRESS TESTING

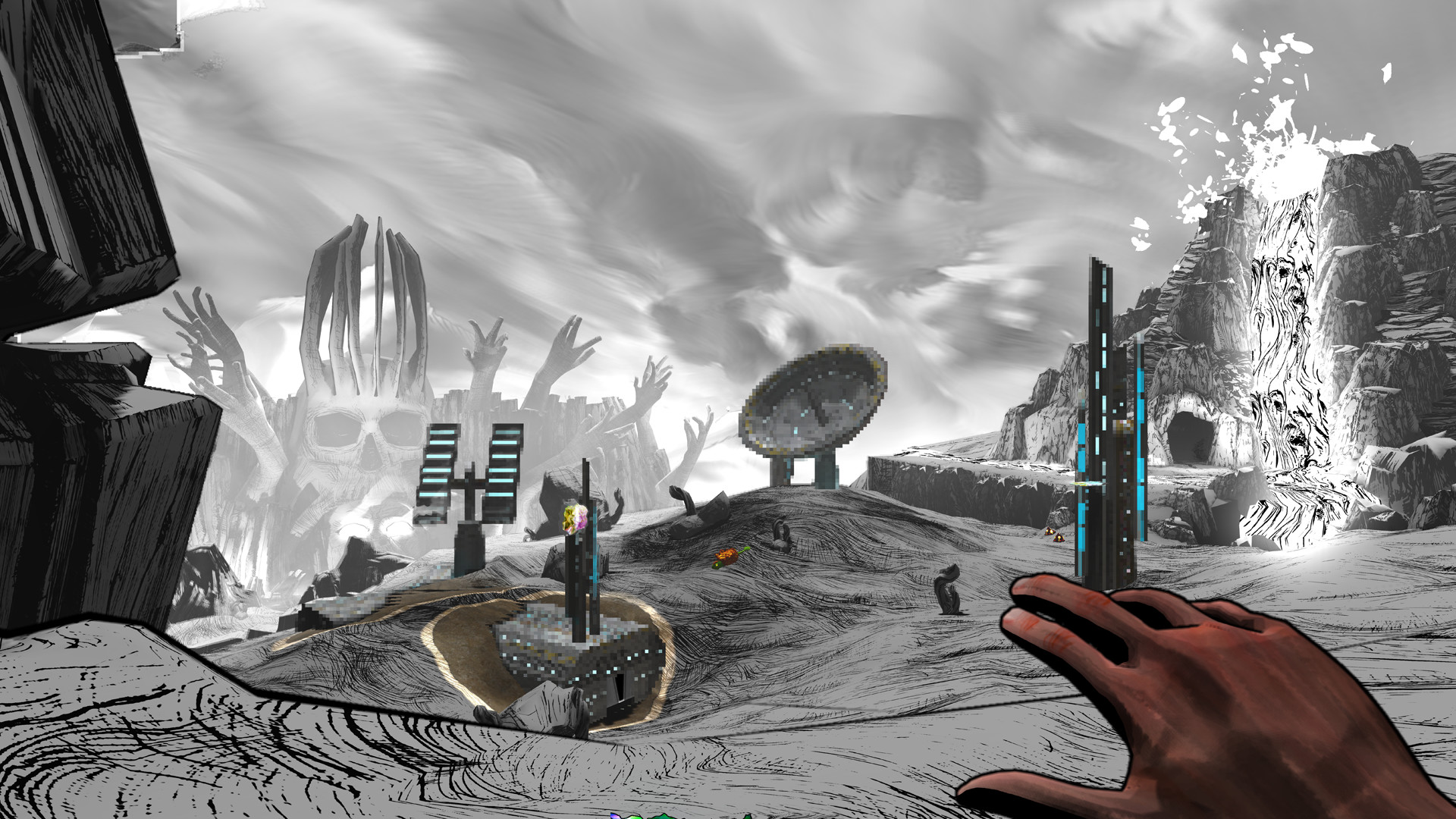

We needed to establish a baseline to compare results with as changes were made. I had various stable scenarios in save files to reload from. This particular scenario was my favorite mix of high numbers, movement variety, and feature testing:

CHERRY-PICKING STRATEGIES

AI veterans will likely consider these tricks standard practice that they've done in various games, but perhaps this breakdown may be interesting to those who might find some of these techniques worth cherry-picking from... or at least validating their own strategies with. My purpose in writing this here is partially to share our techniques with the community at large but also to open a dialog with others who might be willing to share their tips and tricks for making the world feel as full as possible. Feel free to comment below.
