
Experienced audio designer Rob Bridgett (Prototype) lays out a practical roadmap for an entire project's audio design, explaining how to allow for changing development situations across all disciplines while still providing a clear vision of how everything fits together.

This feature takes a brief look at how to tie audio production dates into the overall delivery schedule of a large-scale video game -- including the key dependencies you should be fully aware of.

During production, each component of game audio is often either dependent on some other area of work being completed, or some other area of the game depends on audio finishing a particular piece of work. Understanding how all these pieces are interdependent is the key not only to locking in and delivering on time, but also to staying agile and having a production schedule that is simple to read and aids communication across groups.

Scheduling for video game production is an increasingly complicated practice. It boils down to an approach that embraces locking in tightly and committing firmly, while being ready and prepared for changes at any time.

At the same time, you must also fully understand that any changes will have implications elsewhere in the production.

I certainly don't wish to get into different development styles here, such as Scrum, which have their own implications for task types, but I do want the "audio schedule" to be simple and clear to understand.

To do this, I define two simple kinds of task: long-term and short-term.

Long-term tasks can be thought of as either time-boxed "rapid iteration" and experimentation, or much longer sound implementation and development duties. These do not have fully defined end goals, but they allow time for constant iteration and direction changes, usually following in the wake of design and art feature development and implementation.

These tasks may never be really finally completed until the end of post-production, at least from a "final sound" point of view.

Short-term tasks, on the other hand, are tasks with definite start and end dates and much more specific, defined outcomes, usually with heavier dependencies. This would include audio feature code work, dialogue recording sessions, sound effects recording sessions, and so on.
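The two kinds of task can be captured in a very small data model. The sketch below is illustrative only: the class names, fields and the date-validation rule are assumptions, not part of any particular scheduling tool. It assumes a long-term task is represented by its time box, while a short-term task carries fixed dates plus the names of the tasks it depends on.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class LongTermTask:
    """Time-boxed, iterative work without a fully defined end goal (hypothetical model)."""
    name: str
    window_start: date
    window_end: date  # end of the time box; the sound may still be revisited later


@dataclass
class ShortTermTask:
    """Work with definite start/end dates and a defined outcome (hypothetical model)."""
    name: str
    start: date
    end: date
    depends_on: list[str] = field(default_factory=list)  # names of prerequisite tasks

    def __post_init__(self) -> None:
        # A short-term task must not end before it starts.
        if self.end < self.start:
            raise ValueError(f"{self.name}: end date {self.end} precedes start {self.start}")


# Example: an ambience pass (long-term) and a dialogue session (short-term).
ambience = LongTermTask("ambience passes", date(2025, 3, 1), date(2025, 9, 30))
vo_session = ShortTermTask(
    "dialogue recording session",
    date(2025, 5, 5),
    date(2025, 5, 16),
    depends_on=["story complete", "casting"],
)
```

Keeping the dependency list only on short-term tasks reflects the distinction above: long-term work follows other disciplines loosely, while short-term work is where hard dependencies live.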

A detailed and bespoke audio production schedule will only be arrived at by going through a rigorous pre-production process in which all the key components and needs of the project, such as music, dialogue quality and all other requirements are broken down broadly into these two kinds of tasks.

No matter how "agile" or "iterative" your development process is in the pre-production phases, production schedules, and the discipline of sticking to them (or at least being able to demonstrate what happens to the estimated ship-date when a date is moved out or a feature is redesigned), are absolutely necessary in game development for a number of reasons.

Firstly, they allow you to "book" often expensive outsourced services and talent for a specific period of time, and to plan dollar costs. This is equally important for staying agile with the audio budget on a game, as spending may shift depending on when each item is billed.

Secondly, establishing solid dates also makes apparent a very important chain of dependencies for other areas of production, for example cut-scene production in which animation or motion capture may be unable to begin work until the voice recordings have been completed, edited and handed over as .wav files to the cinematics department.

Finally, the schedule becomes an essential communication tool between departments. It allows its users to highlight changes affecting both in-house resources and external contract workers who may be scheduled outside of the game team, should anything significant shift, such as the ship date.

One of the most effective ways of filling out a schedule is to approach it from the ship date. Working backwards from the ship date is often the easiest way to work through the dependencies on a schedule. Each major milestone is usually established by the development director, or project manager, and once this basic ladder of milestones has been established, those responsible for planning audio can begin to fill in the detail of when the audio deliverables and various kinds of work need to happen.

This, of course, does not happen in isolation, but through working closely with the scheduling being done in all other departments, and looking at the game schedule as a whole.
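Working backwards from the ship date amounts to a backward pass over the dependency chain: each task must finish before the latest start of whatever depends on it, and tasks with nothing downstream must finish by the ship date. The sketch below assumes durations in calendar days (no weekends, holidays or buffers) and an acyclic set of dependencies; the task names are hypothetical, loosely following the dialogue-to-cinematics chain described earlier.

```python
from datetime import date, timedelta


def latest_starts(durations: dict[str, int],
                  successors: dict[str, list[str]],
                  ship: date) -> dict[str, date]:
    """Backward pass: the latest date each task can start without moving the ship date.

    durations  -- task name -> length in calendar days (assumption: no work calendars)
    successors -- task name -> tasks that cannot start until it is finished
    The dependency graph is assumed to be acyclic.
    """
    memo: dict[str, date] = {}

    def latest_start(task: str) -> date:
        if task not in memo:
            # Finish before the earliest-starting successor; with no successors, by ship.
            finish = min((latest_start(s) for s in successors.get(task, [])), default=ship)
            memo[task] = finish - timedelta(days=durations[task])
        return memo[task]

    return {task: latest_start(task) for task in durations}


# Hypothetical chain: record dialogue -> edit and hand over .wav files -> cut-scene animation.
plan = latest_starts(
    durations={"vo_record": 10, "vo_edit": 5, "cutscene_anim": 30},
    successors={"vo_record": ["vo_edit"], "vo_edit": ["cutscene_anim"]},
    ship=date(2025, 12, 1),
)
for task, start in sorted(plan.items(), key=lambda kv: kv[1]):
    print(task, start.isoformat())
# vo_record 2025-10-17
# vo_edit 2025-10-27
# cutscene_anim 2025-11-01
```

Taking the earliest-starting successor means that a task feeding several downstream teams is pulled forward to satisfy the most urgent of them.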

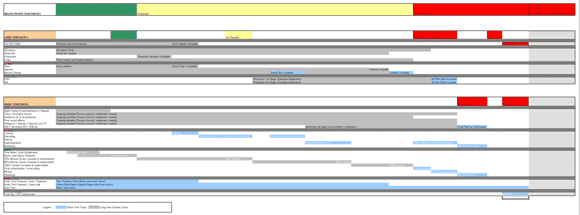

Figure 1 shows what could be described as a common production schedule for a triple-A console title, in which each time-dependent discipline (tech, art, design, cinematics, etc.) is broken down onto a separate timeline.

You will see that I have broken down each task, in both the general production schedule and the audio schedule, into the two types of task described earlier, short-term and long-term. This allows a much higher-level visualization of the schedule and tasks for developers with different scheduling approaches.

Figure 1: An example production schedule (top) and accompanying audio production schedule (bottom) depicting a hybrid-task view (short & long-term tasks) of audio asset production and implementation tasks.

Audio tech tasks (audio tool and pipeline features, not content) are almost always short-term tasks with definite start and end dates. They usually involve audio-centric pipeline or tool feature work that must be completed before the system can be used by the developer who commissioned it. In this sense, the audio tech schedule represents a chain of often interrelated tasks that require a very definite order of completion, based on milestone deliverables and target dates for demos.

Many of these tasks, such as integrating a dialogue solution, will also be timed according to other areas of the production, such as when the dialogue implementation features will be needed. There are two core milestones outside of the audio schedule that further affect the development of tech features: the overall tech complete date and the game's feature complete date. Because audio code falls under the overall tech schedule, it needs to comply with these two dates to protect overall stability.
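A simple check can flag audio tech tasks that would land after the milestone they need to respect. This is a sketch under assumptions: each task is tagged by hand with the milestone that governs it ("feature_complete" or "tech_complete" here), and the dates and task names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class TechTask:
    name: str
    end: date
    milestone: str  # hypothetical tag: the milestone this work must land before


def late_tasks(tasks: list[TechTask], milestone_dates: dict[str, date]) -> list[str]:
    """Return the names of tasks whose end date falls after their governing milestone."""
    return [t.name for t in tasks if t.end > milestone_dates[t.milestone]]


milestones = {"feature_complete": date(2025, 6, 30), "tech_complete": date(2025, 8, 29)}
tasks = [
    TechTask("dialogue system integration", date(2025, 7, 15), "feature_complete"),
    TechTask("streaming optimization", date(2025, 8, 1), "tech_complete"),
]
print(late_tasks(tasks, milestones))  # ['dialogue system integration']
```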

For audio content, here is some further explanation of the key dependencies that may arise when plotting audio dates for each of the four main areas of audio: Dialogue, Sound Effects, Music and Mix.

Dialogue

Story Complete (Cannot start casting / planning and recording dialogue before this date)

Mission Design Complete (Cannot finalize script/writing of in-game mission dialogue before this stage has been completed)

AI dialogue designs and categories complete (Cannot begin categorizing and writing in-game AI driven dialogue before this has been completed)

Character Lists, Character concepts (Cannot begin casting until this stage has been completed)

Cut-Scene Schedules & work-flow (These schedules will require heavy collaboration with the audio schedule. Cut-scenes, if relying on separately recorded dialogue rather than performance capture, rely heavily on receiving the final dialogue before work can begin)

Localization (In-Game / Cut-Scene) (Usually occurring towards the end of the project, localization dependencies rely on as close to finished dialogue as possible for in-game and cut-scene content before work can begin)

While it is true that many of these tasks cannot be started until the item they depend on has been completed, some preparatory tasks can be started, such as preparing templates for character casting. This prep work will allow the task to run much more smoothly once it finally gets the green light.

Note: "Complete" means that the task has not only been finished but also signed off and approved by all key stakeholders. In the case of story, this may mean getting approvals from third parties, particularly for licensed IP, so recording of the script should not be undertaken before these kinds of approvals have been satisfactorily obtained.
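Because "complete" means signed off rather than merely finished, a dependency check should look at approvals, not just task status. The sketch below rests on an illustrative assumption: every milestone needs approval from the same set of stakeholders. The milestone, task and stakeholder names are hypothetical, drawn loosely from the dialogue dependencies listed above.

```python
def blocked_tasks(dependencies: dict[str, list[str]],
                  approvals: dict[str, set[str]],
                  stakeholders: set[str]) -> dict[str, list[str]]:
    """Map each blocked task to the milestones that are not yet fully signed off.

    A milestone counts as complete only when every required stakeholder has approved it.
    """
    blocked: dict[str, list[str]] = {}
    for task, milestones in dependencies.items():
        missing = [m for m in milestones if not stakeholders <= approvals.get(m, set())]
        if missing:
            blocked[task] = missing
    return blocked


dialogue_dependencies = {
    "casting": ["story_complete", "character_lists"],
    "finalize_mission_dialogue": ["mission_design_complete"],
    "write_ai_dialogue": ["ai_dialogue_categories_complete"],
}
# Licensed IP (assumed): the licensor must approve as well as the internal creative lead.
required = {"creative_director", "licensor"}
signed_off = {
    "story_complete": {"creative_director"},  # finished, but still awaiting the licensor
    "character_lists": {"creative_director", "licensor"},
    "mission_design_complete": {"creative_director", "licensor"},
}
print(blocked_tasks(dialogue_dependencies, signed_off, required))
# {'casting': ['story_complete'], 'write_ai_dialogue': ['ai_dialogue_categories_complete']}
```

Casting stays blocked even though the story is written, because the licensor has not yet approved it; this is exactly the situation in which recording should not begin.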

Sound Effects

World art production (ambience and surface / impact effects) - (implementation of ambient sound effects relies on several passes by the world art team. A basic ambience can serve well during production; however, the more final the world art becomes, the greater the need for further detailed ambient work, such as positional ambience, placeable prop-based sound emitters, etc.)

Prop production (inc. weapons etc.) - (ongoing throughout production and requiring constant iteration; weapons and state-changing props all require several sound passes as the work is iterated throughout production)

Recording, editing and implementing effects (usually based on lists of in-game content, such as props, ambient locations, weapon types, etc. The earlier these lists can be obtained from the production artists, the sooner a list of potential sound effects can be drawn up for recording. Editing and implementation is an iterative process that is ongoing throughout production)

Cut-Scene Sound Design (big dependencies here between the sound and cinematics teams. As previously stated, the cinematics teams will be unable to begin work until they have received dialogue; however, the ongoing iterations and passes on the sound effects and music that support the cut-scenes will occur during production. By Alpha, there need to be full audio passes on all the cut-scenes in the game, for which the audio team will require locked edits of the scenes. These locked edits often arrive on the Alpha date itself, so the audio team needs further time to work on these scenes past Alpha, which is where a 'sound alpha' date needs to be drawn up.)

Animation (vocal efforts and character movement foley all need to be added constantly during production as part of the iterative process, which can mean many passes and tweaks of the audio content)

Music

Art Direction established (working together at a fairly high level with the game design team and the art director to establish the 'feel' of the game will feed heavily into the music direction. If this is done early enough, the music direction can influence the art direction too)

Story & Mission dependencies (breaking down the in-game music cues in order to establish the amount of music required for the game is usually based on number of missions, or types of generic missions, as well as cut-scenes and main menu themes, this will enable the creation of a cue-sheet, which can be given to a composer as part of their commission)

Composition, recording and delivery (usually occurs during production with several deliverables along the way to Alpha, at which point all music is delivered. Beyond Alpha there may be music mixing and mastering, but all cues will be in place and be easy to replace with final assets.)

Mix

Planning / Communication (early in the planning stage, work alongside project managers to establish the scope and scale of post-production audio requirements; ensure the audio schedule is visible to all disciplines on a master schedule; and ensure all disciplines understand the impact of any dates being pushed out, by showing how this also pushes out audio post-production dates and risks the ship date)

SFX replacement (this is an optional quality phase that can be done after production beta, in which key sound effects are critiqued and replaced with more appropriate sounds of equal or lesser memory footprint in order to improve their quality)

The mix itself can have three phases, both technical and artistic: firstly (technical), ensuring that the overall output levels are consistent with established reference listening levels; secondly (artistic), tuning sound effects levels and mixer snapshots through the entire game flow; and thirdly (technical), checking all the various outputs and mix-down configurations available on the various consoles, e.g. stereo through TV speakers. Being able to perform a stable mix often carries a solid dependency on a stable Beta build of the game, but of course mixing can also be done at any time during the run-up to Beta. Stability is key, however, to being able to work fast with as few hitches and crashes as possible.

Once you begin to plot elements of the audio production onto a graph or timeline, you will begin to notice there are a great many questions. These generally relate to dependencies within other areas of the game's development.

Some of the more obvious are: "When will the story script and in-game dialogue script be complete, so we can begin casting and subsequently voice recording?" Alternatively, there will be questions from other areas and disciplines, like "When will the cut-scene voice-over be recorded and edited, so we can begin animation?"

This is where the involvement of a project manager or game director is highly advised, as they have either already plotted out these items, and already have a list of questions for you, or they can help to answer the dependency date questions on your behalf.

Perhaps the single most important aspect to all of these schedules is that they need to be agile enough to accommodate constant changes in delivery dates, feature lists, mission design and even game direction.

Due to the component and dependency-based nature of interactive audio and game design, most of these areas need to be moveable against different production scenarios.

For example, cutting one character out of the game may affect more than just the dialogue production; it may leave holes in the story that require new content from other characters to fill in, it may affect the music if themes have been assigned to that character and his or her motivations, but it may not affect the sound effects design and production, which could remain unchanged.
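One way to make this kind of ripple visible is to record, for each item, which other items depend on it, and then walk that graph outward from the change. The sketch below uses a breadth-first search; the graph, the character "vega" and the item names are hypothetical, chosen to mirror the example above in which cutting a character touches dialogue, story and music but not sound effects.

```python
from collections import deque


def affected_by(change: str, dependents: dict[str, list[str]]) -> set[str]:
    """Return every item reachable from `change` through 'is depended on by' links (BFS)."""
    affected: set[str] = set()
    queue = deque([change])
    while queue:
        item = queue.popleft()
        for dependent in dependents.get(item, []):
            if dependent not in affected:
                affected.add(dependent)
                queue.append(dependent)
    return affected


dependents = {
    "character:vega": ["dialogue:vega_lines", "story:act2_beats", "music:vega_theme"],
    "story:act2_beats": ["dialogue:replacement_lines_other_characters"],
    "music:vega_theme": ["music:act2_cues"],
    "sfx:weapon_set": ["sfx:weapon_foley_pass"],
}
impact = affected_by("character:vega", dependents)
print(sorted(impact))
# ['dialogue:replacement_lines_other_characters', 'dialogue:vega_lines',
#  'music:act2_cues', 'music:vega_theme', 'story:act2_beats']
```

No `sfx:` items appear in the result, because nothing in the sound effects branch depends on the cut character.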

Changes run deep through the audio production and budgets, and having a flexible enough (and visual) representation of your schedule to accommodate changes quickly is essential.

One of the more common date adjustments is a project extension, which usually affects or eats into post-production audio time (if any is planned). The more flexible and easy to visualize your schedule's format, the easier it is to run different scenarios and quickly adjust dates accordingly.

Similarly, ship dates may be brought forward, which would require a certain degree of reconsideration of what is absolutely essential to the product's quality goals and what can be realigned to reflect the new ship date.

Quality will inevitably drop if time is decreased, particularly at the post-production and polish end of the timeline. The game will almost certainly ship with more C-grade bugs, and the team's ability to hit its overall quality target will drop. This is the time to decide what is the most important use of time, and to schedule accordingly.
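Running these scenarios can be as simple as recomputing the schedule with a changed duration or ship date and comparing how much post-production time is left. The sketch below does a forward pass (earliest finish dates) over a hypothetical chain of production tasks and reports the calendar days remaining between the last production task and the ship date. It assumes calendar-day durations, an acyclic dependency graph and that post-production is simply whatever time remains; the task names, dates and durations are illustrative only.

```python
from datetime import date, timedelta


def earliest_finishes(durations: dict[str, int],
                      predecessors: dict[str, list[str]],
                      start: date) -> dict[str, date]:
    """Forward pass: each task starts as soon as all of its predecessors have finished."""
    memo: dict[str, date] = {}

    def finish(task: str) -> date:
        if task not in memo:
            ready = max((finish(p) for p in predecessors.get(task, [])), default=start)
            memo[task] = ready + timedelta(days=durations[task])
        return memo[task]

    return {task: finish(task) for task in durations}


def post_production_days(durations: dict[str, int],
                         predecessors: dict[str, list[str]],
                         start: date, ship: date) -> int:
    """Calendar days left between the end of production work and the ship date."""
    last = max(earliest_finishes(durations, predecessors, start).values())
    return (ship - last).days


chain = {"cutscene_anim": ["vo_record"], "sound_alpha": ["cutscene_anim"]}
baseline = {"vo_record": 20, "cutscene_anim": 60, "sound_alpha": 30}
start, ship = date(2025, 1, 6), date(2025, 6, 30)

print(post_production_days(baseline, chain, start, ship))  # 65
extended = {**baseline, "cutscene_anim": 75}  # scenario: 15-day animation slip
print(post_production_days(extended, chain, start, ship))  # 50
earlier_ship = ship - timedelta(days=21)  # scenario: ship date brought forward 3 weeks
print(post_production_days(baseline, chain, start, earlier_ship))  # 44
```

A negative result would mean the production chain no longer fits before the ship date at all, which is the point at which scope, rather than polish time, has to give.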

Perhaps the easiest thing to forget about a schedule is that once it is done, looks great and everyone feels happy with it, it must be clearly and effectively communicated to the team, as must any tweaks or updates. As an audio director, this falls to me working with the project managers; however, it could also fall solely to any number of staff, such as a producer or game director.

Printing out and hanging the schedule on a wall space with high visibility has often worked well for teams that I have been a part of. This process helps to solidify the upcoming work in the minds of the team, and any team member can easily see any dependencies or risks writ large. Having enough confidence in the schedule to commit it to a giant printout on a wall will also ensure that enough work has been put into the scheduling process to roll it out to an entire team.

This seems like an odd thing to say, but, in the end, the contents of the schedule give the audio team a roadmap of the entire production, and even reveal an audio direction, not just in terms of dates and dollars, but in terms of priorities and where time and money are being invested more heavily in accordance with the overall goals of the product.

Not only are these numbers and dates able to determine the quality levels and thresholds that need to be met within the specific product, but I genuinely believe the schedule and budget also form an expression of the audio direction itself. As a case in point, the amount of time given to post-production is one particular area where traditionally there is little or no time scheduled.

However, this is changing as the industry, the tools and perceptions of audio quality change. Even where an overall audio budget has been set prior to production, the schedule and budget can still be rearranged to better represent where the money will best benefit the product, for instance by moving money from the sfx budget into the music budget, or by scheduling more time, planning and iteration into particularly important areas of production.
