Hello everyone!

As you might already know, The Riftbreaker runs on our own game engine, which we call the Schmetterling. Although working with your own tech is not always easy, it gives us the freedom to implement any number of new features and techniques to make the game look and feel better. During the development of the Into the Dark expansion, we realized we needed one key rendering feature to significantly improve the biome’s atmosphere. The effect in question is volumetric lighting. Today, we will tell you what role volumetric lighting plays in graphics, how games tried to emulate its effect in the past, and how we implemented this new feature in The Schmetterling engine.

Atmospheric scattering is a phenomenon that affects everything we see around us. Even though we don’t often think about it, the air around us is a mixture of various particles that can interact with one another. They are also large enough to interact with photons - particles (and waves, but let’s not get too deep here) that carry electromagnetic radiation, including light. As a result of these interactions, some light gets scattered, some is absorbed and transformed into kinetic energy, and some passes through. Thanks to this, we can observe the blue sky above us, atmospheric fog when looking into the distance, and many other beautiful effects.

The same principles apply on a smaller scale. Imagine entering an old, dusty house on a sunny day. As you enter, the microscopic dust particles covering the floor are set in motion and suspended in the air for a while. You look up to the window and see a beam of light cutting through the mix of air and dust. The light illuminates the room, but you can see that it is dispersed unevenly, as the varying density of the air-dust mixture creates a mesmerizing spectacle of light and shadow. The same thing happens during concerts when spotlights cut through the thick smoke. Or when a single beam of light cuts through a hole in the clouds. Or when your car’s headlights create a ‘wall of light’ effect when driving through fog.

The point is - it is rare for light to get from its source to the final point of its journey without encountering any obstacles. Each interaction with other particles along the way changes the light’s color, direction, or intensity. If we ignored all this when rendering computer graphics, the result would look ‘flat’ and artificial. However, when scattering is implemented appropriately, the light and the objects in the scene work together to create a cohesive and natural-looking image.

Several techniques have been developed to replicate the scattering effect and achieve realistic results at an acceptable performance cost. Some developers used particle effects to emulate it (we do this too). Still, it was a very tedious process - each particle effect had to be created with a specific, static scene in mind and didn’t work in any other circumstances. Other developers used post-processing shaders that added lighting effects based on screen-space information. Those could adapt in real time, but only as long as the light source was on the screen. In The Riftbreaker, we limited ourselves to exponential distance fog - a simple algorithm that creates fog that gets denser the further it is from the camera. We could change its color to adapt it to the time of day. It worked okay in open areas, but we needed something better in Crystal Caverns.
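For readers who have not met exponential distance fog before, here is a minimal sketch of the general idea in Python. It is not code from the Schmetterling engine; the function names and the `density` parameter are made up for illustration, and the formula is the textbook `1 - exp(-density * distance)` blend.

```python
import math

def distance_fog_factor(distance, density):
    """How much fog to blend in at a given camera distance (0 = none, 1 = fully fogged)."""
    return 1.0 - math.exp(-density * distance)

def apply_fog(surface_rgb, fog_rgb, distance, density):
    """Blend the surface colour towards the fog colour based on distance from the camera."""
    f = distance_fog_factor(distance, density)
    return tuple((1.0 - f) * s + f * g for s, g in zip(surface_rgb, fog_rgb))
```

Changing `fog_rgb` over time is all it takes to tint the fog for the time of day. The weakness is visible in the signature: the result depends only on distance, so it knows nothing about where the lights are.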

The technique we decided to use is called Volumetric Lighting. It can emulate the previously mentioned effects in a couple of relatively simple steps. Instead of painstakingly calculating light scattering for individual pixels by casting rays, we simplify the scene by dividing it into larger volumes. We run the calculations for each of these volumes individually and later combine the results into the final rendering output for the visible scene. This dramatically reduces the workload while giving us an approximation that is good enough for in-game use.

The scene is sliced up into cubical volumes calculated from the camera frustum perspective. Their exact number depends on the game’s rendering resolution, which we divide by 8. If we take 1440p (2560x1440) as an example, we get 320 volumes by width and 180 volumes by height. When it comes to depth, the resolution is fixed - it’s always 64 layers, which, given The Riftbreaker’s isometric camera placement, gives us roughly 300 meters of depth to work with.
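The grid arithmetic can be sketched in a few lines of Python. The divisor of 8 and the 64 depth layers come from the description above; the function name and the choice to round up at the screen edges are assumptions made for this example.

```python
SCREEN_DIVISOR = 8   # rendering resolution is divided by 8 (from the article)
DEPTH_LAYERS = 64    # fixed depth resolution (from the article)

def volume_grid_size(render_width, render_height):
    """Return (columns, rows, layers) of the volume grid for a render resolution."""
    # Ceiling division, so a partially covered strip at the edge still gets a volume.
    # Rounding up is an assumption; it only matters for resolutions not divisible by 8.
    columns = -(-render_width // SCREEN_DIVISOR)
    rows = -(-render_height // SCREEN_DIVISOR)
    return columns, rows, DEPTH_LAYERS
```

At 4K (3840x2160), for example, `volume_grid_size(3840, 2160)` gives a 480 x 270 x 64 grid, which is roughly 8.3 million volumes instead of a ray march for every pixel.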

At this point, the volumes are empty, and the light would travel through them unobstructed, like through a vacuum. We must add a transfer medium to the equation for the desired result. As we mentioned earlier, the air serves that function in the real world, along with all the suspended particles. We generate a 3D texture to simulate this phenomenon. It uses the exponential height fog at its core, generating a dense mist at the deepest points of the scene that becomes thinner as the distance from the ground increases. The texture carries information about density, which varies slightly from point to point. A new texture with different values is generated every frame. We can control its density and variability using a set of parameters to get the desired artistic effect.
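A minimal sketch of such a density function, assuming the common exponential height fog formula with a small random perturbation on top. The parameter names (`base_density`, `falloff`, `variability`) are hypothetical stand-ins for the artistic controls mentioned above, and the uniform random noise is an assumption; a real implementation would more likely sample a noise texture on the GPU.

```python
import math
import random

def height_fog_density(height, base_density, falloff, ground_height=0.0):
    """Exponential height fog: densest at ground level, thinning out with altitude."""
    h = max(height - ground_height, 0.0)
    return base_density * math.exp(-falloff * h)

def noisy_density(height, base_density, falloff, variability, rng):
    """Height fog density perturbed by up to +/- `variability` (a fraction, e.g. 0.2)."""
    d = height_fog_density(height, base_density, falloff)
    return d * (1.0 + variability * rng.uniform(-1.0, 1.0))
```

Calling `noisy_density` once per volume with a fresh `random.Random` seed each frame gives a density grid whose values differ slightly from point to point and from frame to frame.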

We can now move on to the next stage of the process. Using the density volume grid from the previous step, we can calculate how light would behave while traveling through each volume. When we combine the air density data and the light parameters, we approximate how the light would scatter in that area. We perform these calculations for the entire grid, creating a 3D map of light scattering on the visible graphics scene.
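To make the idea of combining density with light parameters concrete, here is a deliberately simplified sketch for a single volume. Everything beyond "density times incoming light" is an assumption made for illustration: point lights with inverse-square falloff, an isotropic phase function, a single scalar intensity instead of RGB, and no shadowing. It is not the engine's actual lighting model.

```python
import math

# Isotropic phase function: light is scattered equally in all directions (assumption).
ISOTROPIC_PHASE = 1.0 / (4.0 * math.pi)

def in_scattered_light(cell_center, density, lights, albedo=1.0):
    """Light scattered towards the camera by one volume.

    cell_center: (x, y, z) position of the volume
    lights: list of ((x, y, z), intensity) tuples (hypothetical light description)
    """
    incoming = 0.0
    for position, intensity in lights:
        dist_sq = sum((p - c) ** 2 for p, c in zip(position, cell_center))
        # Inverse-square falloff, clamped so a light inside the cell does not blow up.
        incoming += intensity / max(dist_sq, 1e-4)
    return density * albedo * ISOTROPIC_PHASE * incoming
```

Running this for every volume in the grid produces the 3D scattering map: denser cells and cells closer to a light come out brighter, while empty cells contribute nothing.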

A sample scene we used for development. It features multiple sources of light, which sets a good benchmark for us.

64 layers of the light scattering model data generated from the sample scene. They are presented one by one, going from the bottom to top.

To present these results to the player, we need to perform one more step called light scattering transmittance accumulation. During this operation, we sum up how much light is transmitted through each of our 64 layers of volumes and what the final result should look like from the perspective of our game camera. Starting from the camera’s position, we take the light scattering values for the first volume and add them to the layer beneath it. Then, we add the sum of our first two layers to the third one, considering the air transmittance value. Rinse and repeat until we reach the bottom of our 64-layer grid.
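The accumulation for one column of volumes (one screen-space cell, walked from the camera into the scene) can be sketched as a running sum. The Beer-Lambert term `exp(-density * thickness)` used for transmittance and the uniform `slice_thickness` are assumptions for this example; the article does not state the exact formula.

```python
import math

def accumulate_column(scattering, density, slice_thickness):
    """Front-to-back accumulation along one column of the volume grid.

    scattering: per-layer scattered light, ordered from the camera outwards
    density:    per-layer medium density, same order
    Returns a list of (accumulated_light, transmittance) pairs, one per layer.
    """
    accumulated = 0.0
    transmittance = 1.0   # fraction of light that still reaches the camera
    result = []
    for s, d in zip(scattering, density):
        # Light scattered in this layer is dimmed by everything in front of it.
        accumulated += transmittance * s * slice_thickness
        # Beer-Lambert attenuation through this layer (assumed model).
        transmittance *= math.exp(-d * slice_thickness)
        result.append((accumulated, transmittance))
    return result
```

When shading a pixel, one would look up the layer at that pixel's depth and combine roughly as `scene_color * transmittance + accumulated_light`, so distant surfaces are both dimmed by the fog and brightened by the light scattered in front of them.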

The same data presented after the light scattering transmittance accumulation step. You can see the influence that the underlying layers have on their neighbors.

The simplification we applied earlier, treating the scene as a lower-resolution grid, now causes some issues. Since we have taken average values for larger areas, the resulting image would be full of solid-color squares with sharp edges visible where different volumes meet. This image artifact is called banding. The unpleasant phenomenon can be fixed by applying temporal techniques. First, we add a slight jitter to our calculations. For every frame, the lighting for each volume is calculated at a different point within its bounds. This guarantees that the result will be different in every frame. Then, we can use a couple of frames of data to get an average of volumetric lighting within a time period. This allows us to achieve a soft image without aliasing, banding, or other artifacts, apart from slight ghosting, which is unfortunate but far better than the alternatives.
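The two temporal steps can be illustrated with a short sketch. The specific choices here are assumptions, not details from the article: a base-2 Halton sequence for the per-frame jitter, an 8-frame period, and an exponential moving average standing in for "an average over a couple of frames".

```python
def halton(index, base):
    """Radical inverse of `index` in `base`: a well-spread value in [0, 1)."""
    result, fraction = 0.0, 1.0 / base
    while index > 0:
        result += (index % base) * fraction
        index //= base
        fraction /= base
    return result

def jitter_offset(frame_index, period=8):
    """Sample position inside a volume for this frame, as a fraction of its size."""
    return halton(frame_index % period + 1, 2)

def temporal_blend(history, current, blend=0.1):
    """Exponential moving average: mostly history, plus a little of the new frame."""
    return history + (current - history) * blend
```

Each frame samples the lighting at `jitter_offset(frame)` within the volume and folds the result into the stored history with `temporal_blend`. A lower `blend` gives a smoother image but more ghosting, which is the trade-off described above.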

A sample scene before and after the temporal techniques are applied. You can clearly see the lighting artifacts on the light shafts, which disappear completely after introducing jitter into our calculations.

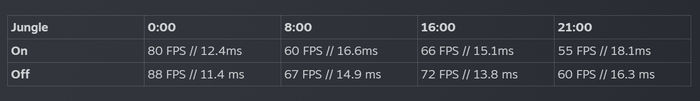

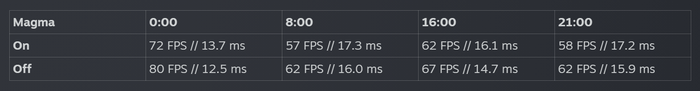

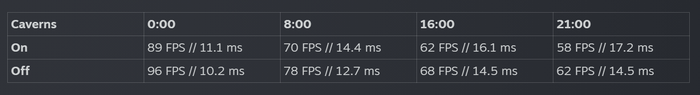

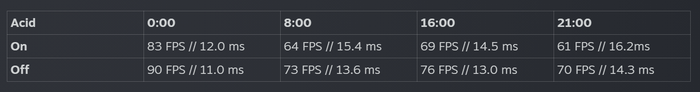

We conducted some performance tests to see what kind of impact Volumetric Lighting has on The Riftbreaker at various points of the day and night cycle. We chose sample scenes in various biomes and measured the performance at 0:00, 8:00, 16:00, and 21:00 in-game time. We ran these tests on a machine with a Ryzen 5 5600X CPU, 32 GB of DDR4 RAM, and an RTX 3080 GPU, running Windows 11. The game was running at 4K resolution, with all settings at maximum, raytracing enabled, and no upsampling or resolution scaling.

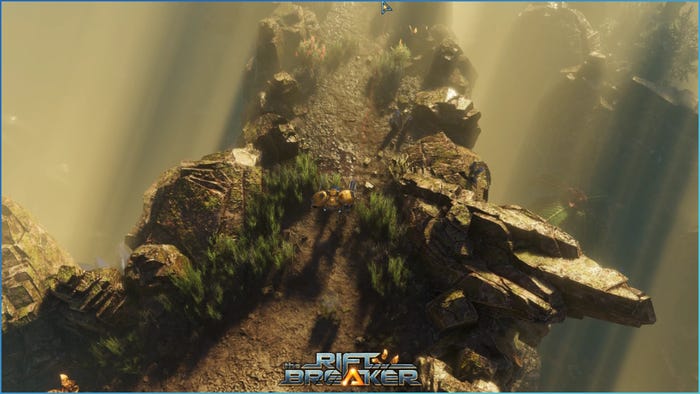

Sample scene from the Jungle biome.

Sample scene from the Magma biome.

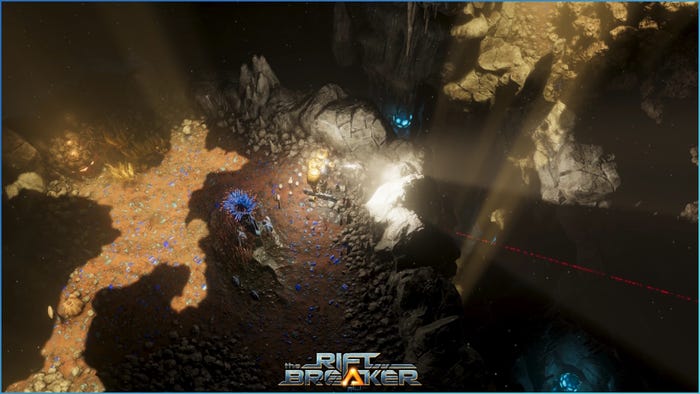

Sample scene from the Caverns biome.

Sample scene from the Acid biome.

Volumetric Lighting is not a new technique. It has been used in games for years, and you have most likely seen it in dozens of them. The introduction of the Crystal Caverns biome into The Riftbreaker seemed like a perfect opportunity to add this feature to The Schmetterling Engine. It makes the graphics scene more realistic and adds significance to what used to be empty space. It also gives our artists additional tools to create atmospheric ambiances, with delicate fog and lights playing a significant role in creating the game’s mood.

We hope you enjoyed learning about this technique as much as we enjoyed the R&D process behind it. What other aspects of game development would you like to learn about? Let us know in the comments and on our Discord at www.discord.gg/exorstudios. Volumetric Lighting is undoubtedly not the last feature we will expand our engine with. When we decide to add something new, you will certainly hear about this on our Discord first. You will also see the previews during our Twitch streams at www.twitch.tv/exorstudios every Tuesday and Thursday. See you there!

EXOR Studios
