
Non-Destructive Destruction

This article explores a new approach to applying destruction in a non-destructive way using Signed Distance Fields on Mesh assets.

Hi, I’m Stijn Van Coillie, Lecturer at Digital Arts and Entertainment and indie developer of God As A Cucumber. I’m currently working toward my master’s degree in Game Technology at Breda University of Applied Sciences. This article summarizes my research and how it can apply to the game industry, together with a link to the GitHub repository of the prototype developed in Unity.

CONTEXT

To create destruction in real-time applications, video game engines depend heavily on Voronoi fracturing. This method creates additional data, its level of detail depends on the number of subdivisions, and it is mostly executed as a pre-process. A non-destructive method would remove the pre-fracturing step and benefit the development process. The proposed approach is to render the destroyed parts with a voxel-based approach and to render the mesh itself with a traditional shader approach.

To achieve this, we create a Signed Distance Field (SDF) of the asset and save it as a 3D texture, where each voxel stores the signed distance to the nearest surface. The benefit is that Boolean operations on SDFs are easy and fast to perform. The mesh itself can be rendered in the traditional way with one modification: we sample from the 3D texture and discard the fragments where there is destruction. We are then left with holes in the asset, like the old-school techniques used in games such as Worms or Tanks. To finalize our method, we need to visualize the inside. For this, we use raymarching, a volumetric rendering technique, with the signed distance at each sample point read from our SDF.

SET-UP

We need to generate a Signed Distance Field from our mesh. Since we are using Unity, we can use the built-in SDF Bake Tool or write our own method. For our purpose, generating the SDF can be done offline, in the editor. However, it is also possible to generate SDFs at runtime or at build time, which can save time during production.

In this example, we visualized the SDF of our mesh inside the scene.
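
As a rough illustration of what baking produces, the sketch below samples a signed distance function at the centre of every voxel of a small grid. It is written in Python for readability rather than as Unity code, and it makes two loud assumptions: an analytic box stands in for the mesh (computing the distance to real triangles is more involved), and the names `box_sdf` and `bake_sdf` are hypothetical helpers, not part of Unity's SDF Bake Tool.

```python
import math

def box_sdf(p, half_extents):
    """Signed distance from point p to an axis-aligned box centred at the origin.
    Assumption: this analytic box is a stand-in for a real mesh."""
    q = [abs(p[i]) - half_extents[i] for i in range(3)]
    outside = math.sqrt(sum(max(c, 0.0) ** 2 for c in q))
    inside = min(max(q[0], q[1], q[2]), 0.0)
    return outside + inside

def bake_sdf(sdf, resolution, bounds_min, bounds_max):
    """Sample sdf at every voxel centre; the result is indexed grid[z][y][x]."""
    size = [(bounds_max[i] - bounds_min[i]) / resolution for i in range(3)]
    grid = []
    for z in range(resolution):
        plane = []
        for y in range(resolution):
            row = []
            for x in range(resolution):
                p = tuple(bounds_min[i] + (idx + 0.5) * size[i]
                          for i, idx in enumerate((x, y, z)))
                row.append(sdf(p))
            plane.append(row)
        grid.append(plane)
    return grid

# Bake a unit box into an 8x8x8 grid covering [-1, 1] on each axis.
grid = bake_sdf(lambda p: box_sdf(p, (0.5, 0.5, 0.5)), 8,
                (-1.0, -1.0, -1.0), (1.0, 1.0, 1.0))
```

Voxels inside the shape hold negative values and voxels outside hold positive ones, which is the property the later steps rely on.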

DESTRUCTION METHOD

We can now apply damage to our SDF by creating an additional SDF that stores our destruction information. For our example, we are using a mathematical signed distance function of a sphere to apply destruction. However, this could be any signed distance function, or even another baked SDF for that matter.
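
A minimal Python sketch of such a damage function follows. The sphere formula is the standard one (distance to the centre minus the radius). Combining several impacts by taking the minimum is an assumption on my part about how multiple hits might be accumulated, and `damage_sdf` and the `hits` list are hypothetical names used only for illustration.

```python
import math

def sphere_sdf(p, center, radius):
    """Signed distance to a sphere: negative inside, zero on the surface, positive outside."""
    return math.dist(p, center) - radius

def damage_sdf(p, hits):
    """Union of all impact spheres (assumption: hits are merged with min).
    hits is a list of (center, radius) pairs; with no hits nothing is damaged."""
    return min((sphere_sdf(p, c, r) for c, r in hits), default=math.inf)

# Two hypothetical impacts.
hits = [((0.0, 0.0, 0.0), 1.0), ((3.0, 0.0, 0.0), 0.5)]
```

A point is considered damaged when `damage_sdf` returns a value less than or equal to zero.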

Now that we have both our SDFs, we can easily apply Boolean operations to them. In this case, we use the subtraction operation, where we subtract our damage from our SDF mesh.

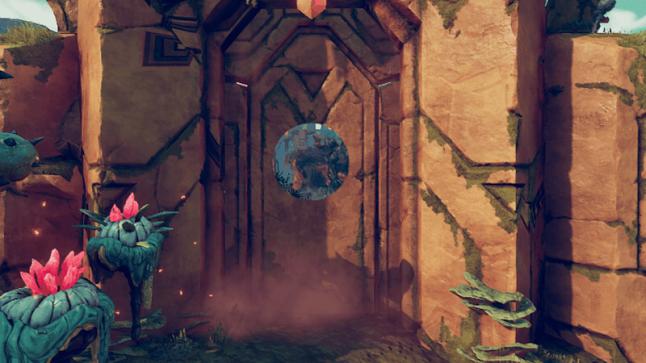

Here we see a visualization of the result of our subtraction operation.
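
The subtraction described above is commonly written as the maximum of the first distance and the negated second distance. The Python sketch below shows that formula, assuming a unit sphere as a stand-in for the mesh SDF; `subtract` and `carved_sdf` are hypothetical names.

```python
import math

def sphere_sdf(p, center, radius):
    """Signed distance to a sphere: negative inside, positive outside."""
    return math.dist(p, center) - radius

def subtract(d_mesh, d_damage):
    """Boolean subtraction of two signed distances: mesh minus damage."""
    return max(d_mesh, -d_damage)

def carved_sdf(p):
    """Assumption: a unit sphere stands in for the mesh, with one impact at its surface."""
    d_mesh = sphere_sdf(p, (0.0, 0.0, 0.0), 1.0)
    d_damage = sphere_sdf(p, (1.0, 0.0, 0.0), 0.5)
    return subtract(d_mesh, d_damage)

inside_hole = carved_sdf((0.9, 0.0, 0.0))   # was inside the mesh, now carved away
still_solid = carved_sdf((0.0, 0.0, 0.0))   # untouched interior of the mesh
```

The result of `max` is a conservative bound rather than an exact distance, which is generally still usable for raymarching because steps never overshoot the surface.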

RENDERING THE ASSET

We create a custom shader (in our case for the built-in render pipeline, but this would also work for URP or HDRP) and start by finding out whether our fragment is in the damaged part of our asset. To determine this, we sample from our destruction SDF; if the value is less than or equal to zero, we can assume the fragment is located in the destroyed area. If it is not, we shade the fragment with the traditional shader approach.

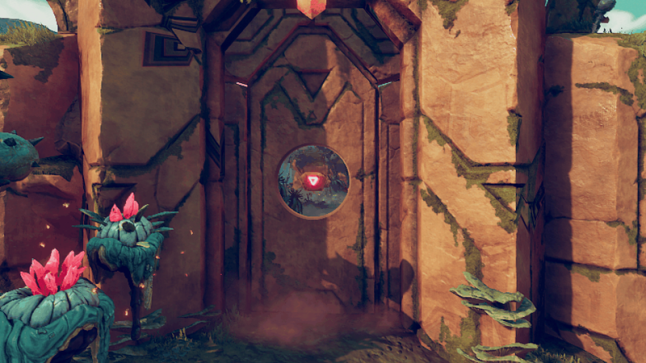

In this example, we discarded the damaged parts and only rendered out the asset.
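
To make the per-fragment test concrete, here is a CPU-side Python sketch of it. On the GPU the 3D texture sampler does the filtering; the hand-written trilinear lookup here only imitates that, and the tiny 2x2x2 grid, `sample_trilinear`, and `fragment_is_destroyed` are hypothetical names and data chosen for illustration.

```python
def lerp(a, b, t):
    return a + (b - a) * t

def sample_trilinear(grid, u, v, w):
    """Sample grid[z][y][x] at normalised coordinates in [0, 1],
    with texel centres at (i + 0.5) / n and clamping at the edges."""
    nz, ny, nx = len(grid), len(grid[0]), len(grid[0][0])

    def axis(t, n):
        f = min(max(t * n - 0.5, 0.0), n - 1.0)
        i0 = min(int(f), max(n - 2, 0))
        return i0, min(i0 + 1, n - 1), f - i0

    x0, x1, fx = axis(u, nx)
    y0, y1, fy = axis(v, ny)
    z0, z1, fz = axis(w, nz)
    c00 = lerp(grid[z0][y0][x0], grid[z0][y0][x1], fx)
    c10 = lerp(grid[z0][y1][x0], grid[z0][y1][x1], fx)
    c01 = lerp(grid[z1][y0][x0], grid[z1][y0][x1], fx)
    c11 = lerp(grid[z1][y1][x0], grid[z1][y1][x1], fx)
    return lerp(lerp(c00, c10, fy), lerp(c01, c11, fy), fz)

def fragment_is_destroyed(damage_grid, uvw):
    """A fragment lies in the destroyed area when the damage distance is <= 0."""
    return sample_trilinear(damage_grid, *uvw) <= 0.0

# Hypothetical damage field: negative (destroyed) on the low-x side.
damage_grid = [[[x - 0.5 for x in range(2)] for _ in range(2)] for _ in range(2)]
```

In a shader, the `True` branch is where the fragment would either be discarded or handed to the interior rendering step, and the `False` branch is the regular surface shading.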

If the fragment is located in the destroyed area of our asset, we need to visualize the inside. For this, we use raymarching, a volumetric rendering technique. We sample our SDF to get the signed distance and use it to calculate the hit position and normal. After that, we can render the fragment however we want. In this example, we used triplanar mapping.
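
The interior pass can be sketched on the CPU as follows. This Python example uses sphere tracing (one common form of raymarching), estimates the normal from central differences of the SDF, and derives triplanar blend weights from that normal. It is an assumption-laden illustration, not the prototype's shader: the analytic carved sphere replaces the 3D texture, and the step count, epsilon, and `sharpness` exponent are arbitrary illustrative values.

```python
import math

def carved_sdf(p):
    """Assumption: unit sphere 'mesh' minus a spherical impact at (1, 0, 0)."""
    d_mesh = math.dist(p, (0.0, 0.0, 0.0)) - 1.0
    d_damage = math.dist(p, (1.0, 0.0, 0.0)) - 0.5
    return max(d_mesh, -d_damage)

def raymarch(sdf, origin, direction, max_steps=64, max_dist=20.0, eps=1e-4):
    """Sphere tracing: advance by the sampled distance until the surface is reached.
    Returns (t, hit_position) or None when nothing is hit."""
    t = 0.0
    for _ in range(max_steps):
        p = tuple(origin[i] + direction[i] * t for i in range(3))
        d = sdf(p)
        if d < eps:
            return t, p
        t += d
        if t > max_dist:
            break
    return None

def estimate_normal(sdf, p, h=1e-3):
    """Normal from the SDF gradient, approximated with central differences."""
    g = []
    for i in range(3):
        a = tuple(p[j] + (h if j == i else 0.0) for j in range(3))
        b = tuple(p[j] - (h if j == i else 0.0) for j in range(3))
        g.append(sdf(a) - sdf(b))
    length = math.sqrt(sum(c * c for c in g)) or 1.0
    return tuple(c / length for c in g)

def triplanar_weights(normal, sharpness=4.0):
    """Blend weights for the three axis-aligned texture projections."""
    w = [abs(c) ** sharpness for c in normal]
    total = sum(w) or 1.0
    return tuple(c / total for c in w)

# Look straight into the crater along the negative x axis.
hit = raymarch(carved_sdf, (3.0, 0.0, 0.0), (-1.0, 0.0, 0.0))
normal = estimate_normal(carved_sdf, hit[1])
weights = triplanar_weights(normal)
```

Here the ray passes through the original surface of the sphere and stops at the back of the crater, which is the surface the interior shading would be applied to.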

All of this resulted in a non-destructive destruction method.

EXAMPLES

This method can be applied in many different situations. In this particular case, we used it for a cinematic shot in Unity. Because of the flexibility of the destruction method, we didn’t have to worry about refracturing the model every time we adjusted the beam’s direction.

In our simulation example, we can just as easily add additional objects that deform the asset.

This non-destructive destruction method could be used for numerous other applications, such as in-game sculpting tools, VR experiences, and art installations, among others.

OUTRO

It is worth mentioning that using this destruction method translates into more accessibility without forcing a new workflow. This could essentially remove the pre-fracturing process of creating a destructible object and at the same time give it a higher level of detail, which would benefit the development process tremendously. This could also mean that small-budget games, which normally cannot afford to create different versions of destructible assets, get the same opportunity to use destruction without increasing the budget.

Feel free to contact me in case you have any questions, remarks, or suggestions. You can contact me through Twitter or add me on LinkedIn.
