Video Games, Blindness and Sight-Loss: A Discussion

Mainstream video games rely heavily on players being able to see, hear and interact with the content of their game worlds. But what happens when players are blind or have reduced sight? Often the player is unable to interact with the content at all, because much of the design and usability testing for games focuses on sighted individuals. This article gives a brief overview of some of the ways in which video games can better support these players and considers the potential experience of non-sighted players.

Firstly, it is important to be aware of the scale of sight loss within the population. In the UK, according to the Royal National Institute of Blind People (RNIB), there are two million people living with sight-loss, or 1 in 30 people. It is predicted that by 2020 this will have risen by 250,000, and that by 2050 it will have doubled to about four million[i]. Globally, an estimated 253 million people have some form of reduced vision (sight-loss), according to the World Health Organisation[ii]. Whilst not every person with blindness or sight-loss would be interested in playing video games, there is an audience for mainstream games to cater to, and many are deterred by a lack of options or clarity when it comes to blind accessibility in games. This article uses the definition of game accessibility given in the IGDA 2004 white paper: “Game accessibility can be defined as the ability to play a game even when functioning under limiting conditions”[iii]. Using this definition, the article aims to outline the barriers to entry which disable those with blindness and sight-loss, and to assess some possible avenues through which mainstream games can become more accessible under these conditions.

There are games which are developed specifically with the intention of meeting the needs of blind players. The games available to those with blindness and sight-loss are often split into two categories: those not specifically designed for accessibility (mainstream video games, text-based games) and those made with the specific intention of being accessible (audio games and, often, modified video games)[iv]. To that end, there are online communities dedicated to highlighting the types of games which are made for those with blindness. One older example is audiogames.net, which focuses on delivering news, reviews, forums and games that specifically use audio as their primary stimulus. The welcome page states “Using sound, games can have dimensions of atmosphere, and possibilities for gameplay that don’t exist with visuals alone, as well as providing games far more accessible to people with all levels of sight.”[v] Many properties of audio games provide a good basis from which to work when considering how to make mainstream video games more accessible. One example is the use of voice within the initial menu to select options and game modes. A video game aiming for blind accessibility should present its accessibility options upfront using both text and voice. Making the first option a spoken prompt such as “Would you like to use text-to-speech?” might be one solution.

Although both PlayStation 4[vi] and Xbox One[vii] have text-to-speech for menus at OS level and for built-in applications, it is currently only available in-game on a game-by-game basis (through Microsoft’s text-to-speech API, for example). It is promising, however, that both console manufacturers also include an option to zoom into elements of the screen, so that those with reduced sight can enlarge areas that are difficult to make out. This still has issues: if in-game text is too small in active scenes (outside of menus or the game being paused), it is not possible to interact with the scene while zoomed in, which is problematic in interactive media.

Thus, to make video games as blind-accessible as possible, a bigger push is needed for more tailored auditory information as well as haptic feedback.

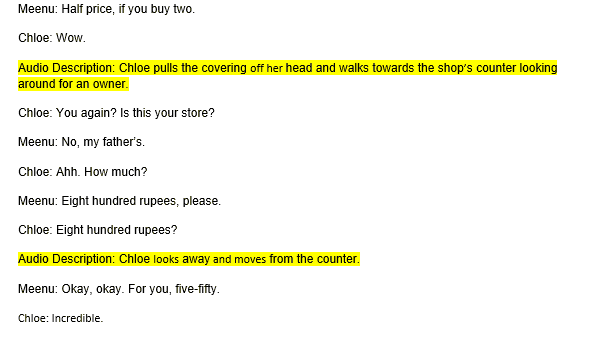

Audio description is one method which can be used to replace visual stimuli. While it can be difficult to implement because of the continually changing, dynamic situations present in video game content, cutscenes would be an effective place to start. This is particularly suitable where the game already has some form of text-to-speech or accessible sound design[viii]. This form of accessibility has proven successful in more passive entertainment and media (TV, film, theatre, museums and so on), with production companies, cinemas and other related establishments pushing for audio description in content and publishing guidelines for it. These guidelines often cover what to describe, the language which should be used to describe it, the pace, and who the common users of the service are[ix].

Figure 1: Brief audio description drafted as an example from a dialogue in a scene in Uncharted: The Lost Legacy.

With improved use of audio description, there are benefits to the immersion, engagement and understanding of scenes for players who cannot rely on the visual information in the scene. Directions are often written alongside a game’s script or dialogue, and including an audio version of this description would substantially improve the experience of blind video game players. While this might take more time, it also makes the game’s content more accessible to these users.

As stated earlier, sound and music are extremely important for low-vision and blind people when playing video games, and one of the core reasons is the difference they make to navigating around the virtual world. Anderson states “The hardest pieces of information to convey tend to be navigation issues that are non-existent or trivial in a graphical interface, such as the location of exits”. Contemporary video game design often favours open spaces and open worlds; for players with little or no visual information to use, this creates another barrier to entry for those with blindness and sight-loss. There are methods which can be used in the case of an open world. If the game uses a waypoint system, for example, a sound which denotes the direction and distance of the location would be possible: perhaps a series of beeps which get faster the closer the player is to the location, combined with binaural audio to suggest the direction in which to turn.
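The waypoint idea above can be sketched in a few lines. This is only an illustration under stated assumptions, not a description of any shipped game: the function name `beacon_cue`, its parameters and the linear distance-to-tempo mapping are all hypothetical, and a simple left/right stereo pan stands in for true binaural audio, which an audio engine would normally handle.

```python
import math


def beacon_cue(player_xy, facing_deg, waypoint_xy,
               min_interval=0.1, max_interval=1.5, max_range=100.0):
    """Return (beep_interval_seconds, pan) for a waypoint audio beacon.

    facing_deg uses a compass convention: 0 faces +y, angles grow clockwise.
    pan is in [-1.0, 1.0]: -1 is fully left, 0 is centred, +1 is fully right.
    All names and default values here are illustrative assumptions.
    """
    dx = waypoint_xy[0] - player_xy[0]
    dy = waypoint_xy[1] - player_xy[1]
    distance = math.hypot(dx, dy)

    # Closer waypoint -> shorter gap between beeps (linear, clamped at max_range).
    t = min(distance, max_range) / max_range
    interval = min_interval + t * (max_interval - min_interval)

    # Compass bearing from the player to the waypoint.
    bearing = math.degrees(math.atan2(dx, dy))
    # Signed angle between facing and bearing, wrapped to [-180, 180).
    relative = (bearing - facing_deg + 180.0) % 360.0 - 180.0
    # sin() centres the sound when the waypoint is dead ahead (or directly
    # behind) and pushes it fully to one side at 90 degrees.
    pan = math.sin(math.radians(relative))
    return interval, pan


if __name__ == "__main__":
    interval, pan = beacon_cue((0, 0), 0.0, (10, 0))
    print(f"beep every {interval:.2f}s, pan {pan:+.2f}")
```

One limitation of this sketch is that a waypoint directly behind the player sounds the same as one directly ahead; a real implementation would need an extra cue (a filtered or lower-pitched beep, for instance) to tell the two apart.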

The importance of distinct and consistent sound properties from audio sources in games cannot be overstated when it comes to blind accessibility. A blind gamer, Ben (also known as ‘Sightless Kombat’), states in an interview with the BBC “So there Kathleen has blocked low and the only reason I know she blocked low is because of that ‘thug’ sound that you heard there”[x]. The game referred to is Killer Instinct[xi], and it illustrates the point that specific sounds associated with an audio source (in this case a low ‘block’) create an environment in which blind players are better able to interact with the content. Another example, in which sound and tactile feedback provide an experience playable by a gamer with nystagmus (which causes involuntary eye movement, reducing and limiting vision), comes from the Nintendo title 1-2-Switch[xii]. Speaking to CBC News[xiii], the gamer (Steve Saylor) discussed how 1-2-Switch allowed him to interact effectively through large text, spoken instructions for players and haptic feedback. In 1-2-Switch, distinct sounds and the vibration of the controller can be used to judge the timing of movements and to receive instruction, eliminating the need for an over-reliance on visual cues. Both titles illustrate important principles for all types of mainstream video game, yet neither relies on moving in three-dimensional space: Killer Instinct is played on a virtual 2D plane, and 1-2-Switch is played with real-world movements and does not especially rely on the visuals of the game.

The largest complication for blind players is, as mentioned earlier, their navigation through the space, and much of that has to do with the difference blindness makes to how a given space is interacted with. The two mental models for controlling movement in space can be referred to as allocentric and egocentric representations. As the planning of motor movements occurs in spatial coordinates, the brain will often use several different frames of reference, and this forms the primary basis of the difference. In allocentric representations, the person plans movements relative from one object to another (supporting perception), while in egocentric representations movements are coded relative to a part of the body such as a hand or leg (supporting motor behaviour rather than perception)[xiv]. Egocentric movement and interaction may work well enough in the real world for those with blindness, but an egocentric method doesn’t translate as well to virtual environments, which provide significantly less obvious proprioception. Within the ‘real’ world those with blindness often use a white cane to scan the environment, to warn of obstacles and sometimes for physical stability. Lahav and Mioduser state[xv] “Recent technological advances, particularly in haptic interface technology, enable individuals who are blind to expand their knowledge by using an artificial reality built on haptic and audio feedback… transfer exploration strategies commonly used by them in real spaces into their investigation in VE.” The assertion is that in virtual environments which use haptic and audio feedback, those with blindness (as would be logical) attempted to transfer their O & M (orientation and mobility) strategies for investigating the ‘unknown’.

Thus, one method in video game content for working out where objects are in the environment, or the direction of activity, might be to produce a virtual ‘white cane’. On controllers with an analogue stick or similar, the movement of the stick would act as a small version of the blind user’s white cane, vibrating or making a sound when it hits an enemy or object, and possibly beeping when the player is facing the correct direction for navigation.
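As a rough sketch of the virtual cane idea, the snippet below sweeps a short ray from the player in the direction the analogue stick is pushed and reports the first obstacle it touches, along with a rumble strength. Everything here is an assumption made for illustration: the tile grid, the `cane_probe` name, the dead zone and the rumble formula are hypothetical, and a real game would query its own physics or collision system instead.

```python
import math


def cane_probe(grid, player_rc, stick_xy, cane_length=3.0, step=0.25):
    """Sweep a virtual cane from the player in the direction the stick points.

    grid: list of strings; '#' marks an obstacle, anything else is free space.
    player_rc: (row, col) position in tile units; may be fractional.
    stick_xy: stick deflection, each axis in [-1, 1]; +y means "up" the grid.
    Returns None if nothing is touched, otherwise a dict with the hit cell,
    the distance to it and a rumble strength between 0.1 and 1.0.
    """
    sx, sy = stick_xy
    magnitude = math.hypot(sx, sy)
    if magnitude < 0.2:  # dead zone: the cane is held at rest
        return None
    ux, uy = sx / magnitude, sy / magnitude
    # Pushing the stick further extends the cane further.
    reach = cane_length * min(magnitude, 1.0)

    for i in range(1, int(reach / step) + 1):
        d = i * step
        row = math.floor(player_rc[0] - uy * d)
        col = math.floor(player_rc[1] + ux * d)
        if not (0 <= row < len(grid) and 0 <= col < len(grid[row])):
            return None  # the cane left the map without touching anything
        if grid[row][col] == "#":
            # Nearer contacts rumble harder; never drop below a faint buzz.
            rumble = max(0.1, 1.0 - d / cane_length)
            return {"cell": (row, col), "distance": d, "rumble": rumble}
    return None


if __name__ == "__main__":
    room = ["#####",
            "#...#",
            "#...#",
            "#####"]
    print(cane_probe(room, (1.5, 1.5), (1.0, 0.0)))
```

The returned rumble value would be passed to whatever controller vibration call the platform provides, and the same hit information could trigger a sound that depends on what was touched.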

Yuan, Folmer and Harris Jr. propose a few possible strategies for turning visual cues into audio cues for blind players[xvi]. One method is to use extra-ludic information (knowledge from outside the game’s world) to navigate in the world, such as using wind or footstep sounds to guide the player or warn them of obstacles and enemies. Three main suggestions can be drawn from the paper:

- Auditory Icons: sound effects which indicate different objects or actions.

- Earcons: one or more tones used as a language to indicate different objects and actions.

- Sonar: a sonar-like mechanism which conveys spatial information about the location of objects.

These methods can complement one another and, when used effectively, allow much of the visual information to be conveyed in a natural and intuitive way for blind players. Blind users can generate their own spatial cognitive maps using auditory information and by interacting with elements in the immersive video game environment.
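To make the distinction between the three cue types concrete, here is a small sketch of how a game might register them. It is not taken from the paper: the event names, sample file names, tone values and the distance-to-pitch mapping are all hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditoryIcon:
    sample: str  # a recorded sound that resembles the thing it stands for


@dataclass(frozen=True)
class Earcon:
    tones_hz: tuple  # an abstract tone pattern whose meaning the player learns


# Hypothetical cue table: event names and file names are placeholders.
CUES = {
    "door_open": AuditoryIcon(sample="door_creak.wav"),
    "footstep_gravel": AuditoryIcon(sample="gravel_step.wav"),
    "quest_updated": Earcon(tones_hz=(440.0, 660.0)),
    "low_health": Earcon(tones_hz=(330.0, 330.0, 220.0)),
}


def describe_cue(event):
    """Return a text description of the cue registered for an event."""
    cue = CUES.get(event)
    if isinstance(cue, AuditoryIcon):
        return f"play sample {cue.sample}"
    if isinstance(cue, Earcon):
        return "play tones " + ", ".join(f"{hz:g} Hz" for hz in cue.tones_hz)
    return "no cue registered"


def sonar_ping_hz(distance, max_range=20.0, low_hz=220.0, high_hz=880.0):
    """Map distance to pitch so that nearer objects ping higher.

    Returns None when the object is out of range (no ping is played).
    """
    if distance < 0 or distance > max_range:
        return None
    closeness = 1.0 - distance / max_range
    return low_hz + closeness * (high_hz - low_hz)


if __name__ == "__main__":
    print(describe_cue("door_open"))
    print(describe_cue("quest_updated"))
    print(sonar_ping_hz(10.0))
```

Keeping the cues in one table like this also makes it easier to check that every sound is distinct and used consistently, which matters for the reasons discussed earlier.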

However, implementation in mainstream video games is often held back for a variety of reasons. The biggest issue is a lack of accessibility knowledge or, more precisely, of how best to design and implement features in a way which caters to both sighted and non-sighted individuals. Another issue lies in the process of sound and music design for digital games, where music and sound design are sometimes outsourced and added once the production phase is already underway, or only become involved in later sprints (or the equivalent in the development team’s production methodology). This can be detrimental to the addition of unique sounds and audio stimuli for blind accessibility, as it means the game has not been built with sound and music as part of the design process from the start. Good accessibility options stem from an informed and concerted effort to design with all players in mind from the outset. If the development team consider these options from the start (whether they be deaf or HoH accessibility options, colour-blindness options and so on), then implementations can be prototyped and tested both within the studio and with real users, through user researchers or accessibility experts and user testing. As stated in numerous articles about accessibility options, whether a person has a medical condition or not, accessibility options can often benefit the usability and experience of all players.

Work on blind-accessible solutions has fortunately increased in recent years, especially with technological advancements. Microsoft has carried out research such as the production of the ‘canetroller’[xvii]. This solution is like the one suggested earlier in the article, where a virtual white cane is used as a method for the player to move through the environment. In this case, Microsoft used the HTC Vive (displaying no visuals) with 3D sound and a specialised haptic feedback controller (like a white cane), and used ‘vibrotactile’ feedback to emulate the real-world orientation and mobility methods of blind users. Microsoft has also introduced co-pilot functionality[xviii], which allows the user to connect two controllers which then act as a single controller. This means that if another person is present who can play alongside a blind user, they can take control of the game in moments that might otherwise be difficult to overcome. Something similar has been possible since 2014 with PlayStation’s Share Play, but that requires two PlayStation 4 consoles and a network connection[xix].

Another organisation which has been paving the way on accessibility guidelines and working within the community is Special Effect[xx], whose work focuses on producing solutions that allow people who otherwise could not to interact with game content. Their website has a lot of information about accessibility options, including a ‘wish list’ of accessible features for game designers to implement. The five features stated for sight-related accessibility are[xxi]:

1. Openly describe accessibility features

2. Offer broad difficulty level adjustment

3. Improve menu access for visually impaired players

4. Offer high contrast and high visibility graphics

5. Use speech and audio to aid comprehension

Some of these have been addressed in this article, and there is information about the others on the Special Effect website, including high contrast and high visibility graphics, which also take the colour-blind community into account.

To summarise, there is a range of different solutions currently being researched, designed or implemented to make mainstream games more accessible in the future. Yet the mandate for blind accessibility across mainstream content is severely lacking, with many video games offering none of the options for blind accessibility. Options such as sound, haptic feedback and proprioception-based equipment, together with considered design, build a foundation from which the industry can work towards making mainstream video game content more accessible to those with blindness and sight-loss. Going forward, the main thrust of research and design in this area must be to work with the users who need these accessibility options, co-creating with them to gain further insight into how blind accessibility can be implemented more efficiently.

Additional Reading and Sources

[i] RNIB. (n.d.). How many people in the UK have sight loss. Retrieved from: https://help.rnib.org.uk/help/newly-diagnosed-registration/registering-sight-loss/statistics

[ii] World Health Organisation. (2017). Blindness and Visual Impairment. Retrieved from: http://www.who.int/news-room/fact-sheets/detail/blindness-and-visual-impairment

[iii] IGDA. (2004). Accessibility in Games: Motivations and Approaches. Retrieved from: https://gasig.files.wordpress.com/2011/10/igda_accessibility_whitepaper.pdf

[iv] Game-accessibility.com. (2015). No Pictures Please! Visually impaired gamers: where to go and what to play. Retrieved from: http://game-accessibility.com/documentation/visually-impaired-gamers-where-to-go-what-to-play/

[v] Audiogames.net. (n.d.). Welcome at Audiogames.net. Retrieved from: audiogames.net

[vi] Sony Interactive Entertainment. PlayStation User’s Guide: Accessibility. Retrieved from: http://manuals.playstation.net/document/gb/ps4/settings/accessibility.html

[vii] Microsoft. Xbox Accessibility. Retrieved from: https://www.xbox.com/en-US/xbox-one/accessibility

[viii] Game Accessibility Guidelines. Provide an Audio Description Track. Retrieved from: http://gameaccessibilityguidelines.com/provide-an-audio-description-track/

[ix] Ofcom. Guidelines on the provision of television access services. Retrieved from: https://www.ofcom.org.uk/__data/assets/pdf_file/0023/19391/guidelines.pdf

[x] Hawkins, K. (2017). Blind gamer is trying to make gaming accessible to all [video]. Retrieved from: https://www.bbc.co.uk/news/av/technology-40281313/blind-gamer-is-trying-to-make-gaming-accessible-to-all

[xi] Double Helix Games, Iron Galaxy Games, Rare, Microsoft Studios. (2013). Killer Instinct [Video Game]. Redmond: Microsoft Studios.

[xii] Nintendo EPD. (2017). 1-2-Switch [Video Game]. Kyoto: Nintendo.

[xiii] Cloutier, D. (2017). How a blind man plays mainstream video games and the future of accessibility in games. CBC News.

[xiv] Willingham, D. B. (1998). A Neuropsychological Theory of Motor Skill Learning. Psychological Review 105 (3), pp. 558 – 584.

[xv] Lahav, O., Mioduser, D. (2008). Haptic-feedback support for cognitive mapping of unknown spaces by people who are blind. International Journal of Human-Computer Studies 66, pp. 23 – 35.

[xvi] Yuan, B., Folmer, E., Harris, C. F. (2011). Game Accessibility: a survey. Univ Access Inf Soc 10, pp. 81 – 100.

[xvii] Greene, T. (2018). Microsoft’s new ‘canetroller’ brings VR to the visually impaired. Retrieved from: https://thenextweb.com/microsoft/2018/02/19/microsofts-new-canetroller-brings-vr-to-the-visually-impaired/

[xviii] Microsoft. (n.d.). Copilot on Xbox One. Retrieved from: https://support.xbox.com/en-US/xbox-one/accessories/copilot

[xix] Sony Interactive Entertainment. (n.d.). Share Play. Retrieved from: https://www.playstation.com/en-gb/explore/ps4/features/share-play/

[xx] Special Effect. (n.d.). Get Involved. Retrieved from: https://www.specialeffect.org.uk/get-involved

[xxi] Special Effect. (n.d.). Accessible Gaming Wish List. Retrieved from: https://www.specialeffect.org.uk/accessible-gaming-wish-list
