Featured Blog | This community-written post highlights the best of what the game industry has to offer.

How Will the Oculus Rift Change Game Design?

This is something I’ve been thinking about a lot, as I’m an indie developer who recently got hold of the first dev kit. What exactly will change in terms of game design and mechanics, and what can we do differently now that the Oculus has arrived? How will immersion and presence affect game design?

I’ll start by outlining what the Oculus gives us that we did not have before, along with some new constraints it introduces:

Increased immersion or “presence” – the feeling that you are inside the environment.

Head tracking in terms of rotation (by actually moving your head) and position (craning your head forward, back, left and right), although positional tracking only arrives with DK2.

Depth perception.

Real world head/limb movement speeds.

Orientation issues.

Then I’ll end with a few thoughts on some Oculus games and demos.

Immersion

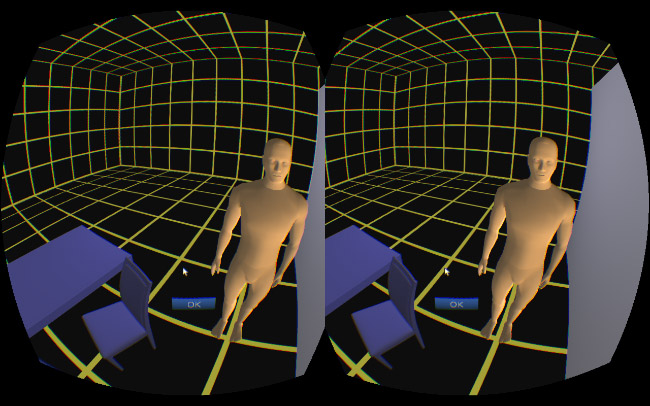

This has been the number one touted advantage of the Rift – you are now IN the game. Big things look big, high things seem high, large drops or valleys look deep, things that are close to you seem closer, and so on. You can’t compare it to a 3D screen, the depth and sense that you are inside an environment is real. In the Oculus, my first thought (inside the Settings Viewer) was “wow, the far corner of this large room is actually quite far away, and this guy is right in my face. That door to my right isn’t *really* there”. That’s quite a thing considering the graphics:

I believe the main benefit of immersion is that it increases the developer’s chances of delivering the intended feeling or emotional response to in-game events. For example, fear, awe, danger and scale are all greatly enhanced. So how does this change things? Is the Oculus merely an enhancing effect? Scary games will be scarier, racing games will seem faster, shooters more …shooty? And what do I mean by “merely” increasing immersion? I realise immersion can be pretty big for some games, but for the most part I wonder how much better or more meaningful a game will be if it’s got double the immersion factor, or 10x. So now you’ll feel like you’re really, really, really there! So what? This is something very hard to quantify.

If you play Call of Duty as-is but wearing an Oculus, has anything much changed just because you feel more like you are there? When you see a fellow soldier die, will you feel genuinely bad instead of chalking it up as another meaningless death? I kind of doubt it. You might feel like those mortars are really exploding right next to you, though. Taking this to a (silly) extreme, if you recreated the room you are sitting in now with 100% perfect VR and immersion, it would be impressive from a tech standpoint, but as an experience or a game it would be meaningless.

Will the increased immersion wear off, or is it just novelty value that we will eventually adjust to? Going back to regular games, I remember System Shock 2 scaring the crap out of me when I played it as a kid, and the best horror games these days still manage to do the same, so the effect didn’t wear off for 2D gaming at least. As for Dreadhalls (made for the Oculus), setting aside how nauseous it made me feel, the sense of terror was far greater than in any other horror game I’ve played. If I didn’t get used to being scared in old games or movies, then hopefully the immersion effect and enhanced emotional responses on the Oculus won’t wear off either.

Finally, while I don’t want to talk at length about it, the potential for significant emotional reactions could swing in a number of ways. I could imagine anything from horror games causing heart attacks, to people not wanting to play shooters because it feels like they’re actually killing people, to the opposite, where we get even more desensitized to violence. I’ve had dreams that feel so real that I wake up feeling bad that I’ve just cheated on my partner, despite it never having happened. Will the same thing happen when VR gets really real?

Head Tracking

Head tracking feeds into both immersion and game mechanics. Being able to move your head around helps immersion, but it also gives us some new tools that were previously not available. In the past we’ve used controllers or mouse and keyboard to control both where the player walks and where they look, which leaves little option for finer control of the avatar’s head. The best option so far is something like Arma/DayZ, where you hold Alt to control your head, but this still doesn’t let you move your head forwards, backwards, left and right while rotating, and you can’t aim and look at the same time. For most games this fine head control will be unnecessary, but to use DayZ as an example again, even it could benefit: you could pull your head away from a teeth-gnashing zombie, or actually shift your head over, under or around objects to search for nearby zombies.

With the ability to both move and rotate your head in all directions and dimensions, new game mechanics can open up which could involve actions such as:

Close and thorough inspection of objects at different distances and angles.

Complex and fine head movement control, i.e. looking around inside a cockpit, over an edge, peering around corners, peeking over ledges, ducking, etc. You are no longer restricted to looking straight up or down, either.

Head position as a gameplay mechanic: we now have an extra control in addition to gamepad or mouse/keyboard. This could allow more complex games, or let a game capitalise on a single mechanic as in Dumpy the Elephant, where you control the elephant’s head and attached trunk with your own head movements. In this instance immersion is increased as the trunk feels like an extension of your own head.

Social actions, i.e. nodding to multiplayer friends in a conversation, or nodding in the affirmative instead of hitting the A button to agree with an NPC. These mechanics also increase immersion.
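To make the nodding idea a little more concrete, here is a minimal sketch of how a game might recognise a nod from head-tracker data. It assumes a hypothetical stream of `(time in seconds, pitch in degrees)` samples where a larger pitch means looking further down; `NodConfig`, `detect_nod` and every threshold value are made up for illustration and are not part of the Oculus SDK.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple

@dataclass(frozen=True)
class NodConfig:
    # Hypothetical tuning values; real ones would need play-testing.
    min_dip_deg: float = 10.0    # how far the head must tilt down
    max_duration_s: float = 0.8  # the down-and-back motion must fit in this window
    return_ratio: float = 0.5    # fraction of the dip that must be recovered

def detect_nod(samples: Iterable[Tuple[float, float]],
               cfg: NodConfig = NodConfig()) -> bool:
    """Return True if the (time_s, pitch_deg) samples contain a nod.

    Assumes positive pitch means looking down. A nod is a downward dip of at
    least cfg.min_dip_deg from some starting sample, followed by a recovery of
    at least cfg.return_ratio of that dip, all within cfg.max_duration_s.
    """
    samples = list(samples)
    for i, (t0, p0) in enumerate(samples):
        deepest = p0  # furthest-down pitch seen since t0
        for t, p in samples[i + 1:]:
            if t - t0 > cfg.max_duration_s:
                break  # too slow to count as a nod from this start point
            deepest = max(deepest, p)
            dip = deepest - p0
            if dip >= cfg.min_dip_deg and deepest - p >= cfg.return_ratio * dip:
                return True
    return False

if __name__ == "__main__":
    # Head dips about 12 degrees and comes back up within 0.4 s.
    quick_nod = [(0.0, 0.0), (0.1, 5.0), (0.2, 12.0), (0.3, 8.0), (0.4, 3.0)]
    print(detect_nod(quick_nod))  # True
```

A head shake could be detected the same way using yaw instead of pitch. The thresholds matter: a nod that is too easy to trigger would fire every time the player glances at the floor.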

To carry on talking about Dumpy, this is a good example of a game that could have been designed without the Rift and would still have worked; with the Rift, however, it reaches a new level because your head movement is linked to the elephant’s: you are moving your head as if you are an elephant. It gives you an extra hook into the game world that wouldn’t be possible by just swinging your hand/wrist around with a mouse.

Depth Perception

As an elephant, being able to see down your trunk in full 3D adds another level again – with it almost coming out between your eyes. Depth perception is linked to immersion in terms of boosting the effect of being somewhere inside an environment, but could also help players nail the apex of a corner, make contact with a melee swing, and get scared shitless by a monster that’s right in their face.

Emotionally, I could see depth being a huge factor as well. Imagine an NPC you’ve grown attached to slipping out of your fingers to their doom, Cliffhanger-style, or being right next to someone who’s hit by a car. Or imagine a Bond moment where a buzzsaw or syringe is approaching your face… Again, for horror games, turning around and seeing a monster face-to-face is terrifying, as my partner demonstrated when she ripped my new Rift off her face and slammed it into the table.

Reactions to other players or NPCs could be amplified if they move right in close to you. An aggressor could scream right into your face, or a love interest could slowly move in. Someone could lean in and whisper into your ear, a zombie could bite your face off, etc.

On a slightly more shallow note – all those fancy special effects and particles are sure gonna look pretty as you move through them!

Real World Movement

Real world head movement may even slightly restrict game design choices (although restriction of choices in art is rarely a bad thing). With the Oculus, you can only turn your head or move the view as fast as the player’s own neck muscles allow, or, in rollercoaster-style games, you can only push different movement speeds in so many directions before making the player sick.

If you’re playing in first person, you’ll have to be a creature with a single head, a neck and two eyes (sure, it’s not common to be a 10-headed, neckless cyclops or something), so while being an elephant or a Grey alien is doable, being a giraffe might not work quite so well. Also consider something like a bird, which travels horizontally with its body out behind it while the player sits upright in a chair. Gravity works against design choices like this. However, in the few space games I’ve played so far, being upside down relative to real gravity hasn’t been so bad.

We also have to consider restriction of movement. You can’t have an NPC put the player’s avatar in a headlock for example, or restrain their head movement in any way, because the player can simply move their head. If you have the player moving through a very tight space, he can crane his head forward and just move through the geometry. I hear that in some demos, developers have blurred and/or darkened the screen when this happens. Perhaps this is a solution for restricting movement too?
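The blur/darken trick could be as simple as measuring how far the player’s head has pushed into solid geometry and fading the view in proportion. Below is a minimal sketch under some loud assumptions: level geometry is approximated by axis-aligned boxes, the head is treated as a single point, and `Box`, `fade_alpha` and the 0.1 m fade distance are all hypothetical. A real engine would ask its own collision system for the penetration depth instead.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]

@dataclass(frozen=True)
class Box:
    """Axis-aligned box standing in for a piece of solid level geometry."""
    min_corner: Vec3
    max_corner: Vec3

def penetration_depth(head: Vec3, box: Box) -> float:
    """Distance from a point inside the box to its nearest face; 0.0 if outside."""
    depth = float("inf")
    for h, lo, hi in zip(head, box.min_corner, box.max_corner):
        if h <= lo or h >= hi:
            return 0.0  # outside (or exactly on the surface) along this axis
        depth = min(depth, h - lo, hi - h)
    return depth

def fade_alpha(head: Vec3, boxes: List[Box], fade_distance: float = 0.1) -> float:
    """Opacity for a black full-screen overlay: 0.0 = clear view, 1.0 = fully dark.

    Ramps linearly from 0 at the surface to 1 once the head is fade_distance
    (hypothetically metres) inside the deepest box it is poking into.
    """
    deepest = max((penetration_depth(head, b) for b in boxes), default=0.0)
    return min(deepest / fade_distance, 1.0)

if __name__ == "__main__":
    wall = Box((0.0, 0.0, 0.0), (1.0, 2.0, 1.0))
    print(fade_alpha((-0.2, 1.0, 0.5), [wall]))  # head outside the wall: 0.0
    print(fade_alpha((0.05, 1.0, 0.5), [wall]))  # 5 cm into the wall: 0.5
```

Because the fade ramps rather than snapping, brushing against a wall only dims the view slightly, while pushing your head right through it goes fully dark, which gently discourages the player without taking head control away from them.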

Unexpected movement is another thing to think hard about. This can range from a camera move in a third-person game (perhaps the camera moves to avoid a wall, or moves for a cut scene) to unexpected movement driven by physics. If you play Wingsuit or War Thunder, despite their realistic physics models these games can produce somewhat unexpected up and down movements that really churn your stomach. The simpler and more direct the movement, the better, at least with the first dev kit.
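One possible way to tame physics-driven jolts is to stop the camera copying the simulated body’s height exactly and instead low-pass filter it, so sudden bumps become gradual drifts. This is a minimal sketch of exponential smoothing, not how Wingsuit or War Thunder actually work; `smooth_heights` and the 0.2 s time constant in the example are hypothetical. It would presumably apply only to the body-driven part of the camera: the player’s own head-tracked motion should stay unfiltered, since lag there is itself a source of nausea.

```python
import math
from typing import List

def smooth_heights(body_heights: List[float], dt: float,
                   time_constant: float) -> List[float]:
    """Exponentially smooth per-frame body heights into camera heights.

    Each frame the camera closes a fraction 1 - exp(-dt / time_constant) of the
    gap to the body's height, so the amount of smoothing depends on elapsed
    time rather than frame count. A larger time_constant gives gentler but
    laggier motion.
    """
    if not body_heights:
        return []
    blend = 1.0 - math.exp(-dt / time_constant)
    cam_y = body_heights[0]
    smoothed = [cam_y]
    for target in body_heights[1:]:
        cam_y += (target - cam_y) * blend
        smoothed.append(cam_y)
    return smoothed

if __name__ == "__main__":
    # The physics body drops 0.5 m in a single 60 fps frame.
    bump = [1.0, 0.5, 0.5, 0.5, 0.5]
    print([round(y, 3) for y in smooth_heights(bump, dt=1 / 60, time_constant=0.2)])
```

The trade-off is responsiveness: the camera now trails the body slightly, which suits incidental bobbing better than movements the player has to react to precisely.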

Peripheral Movement

In addition to real world movement for the Oculus, peripherals like the Razer Hydra can suffer from similar problems, where the player attempts to move in a way that doesn’t map 1:1 to the game environment. For example, imagine swinging a huge, heavy axe in-game with your nice light Hydras: there will be a mismatch between how fast you can swing in reality and how fast the axe should move in the game world.

Orientation

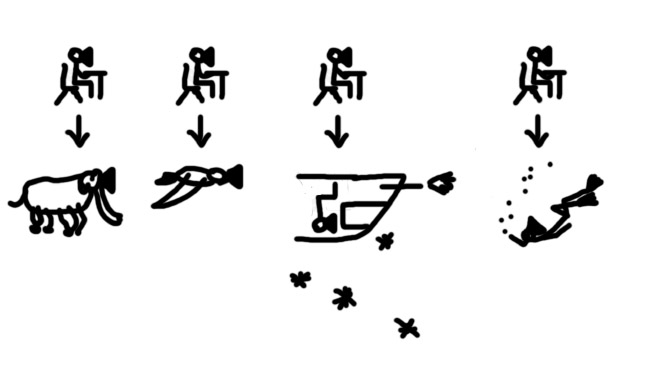

When playing normal games on a detached 2D screen, it doesn’t much matter which way you��’re facing, which way gravity goes, or how much you spin or flip. However when you are immersed into an environment, these things become an issue for your stomach, and sometimes for immersion. Consider my awesome art below:

Comparing your sitting position to the type of avatar/orientation. From left to right – elephant, bird, upside down space ship, scuba diver

Whether or not some of these conflicting body/avatar positions are a problem will probably come down to feel. I personally didn’t have a problem with the elephant or space ships, but I did feel odd as a bird. Games like Lunar Flight or other cockpit games on the other hand really feel like they click.

Existing Game Demos

I’m going to end this post with a breakdown of a small selection of games and how they utilize the Rift, as well as some potential problems with some concepts.

Lunar Flight

I think this is the best example so far of a game that uses all of the Oculus’s features to best effect, and when DK2 comes out with head position tracking, it will be even more so. Sitting in the Lunar Lander, I’m seated just as I am in real life, looking out of the Lander’s cockpit. I feel like I’m really in the Lander: the scale is perfect, I can judge depth well enough to land on target locations, and the interface is designed well. I also like the fact that I have to look around to use the interface rather than having everything straight ahead, which makes more use of the screen real estate and the Rift. When positional tracking comes out, I’ll be able to lean forward and judge my landings with even more precision, or look around a strut or monitor that’s in the way of my view of the Earth. Can’t wait!

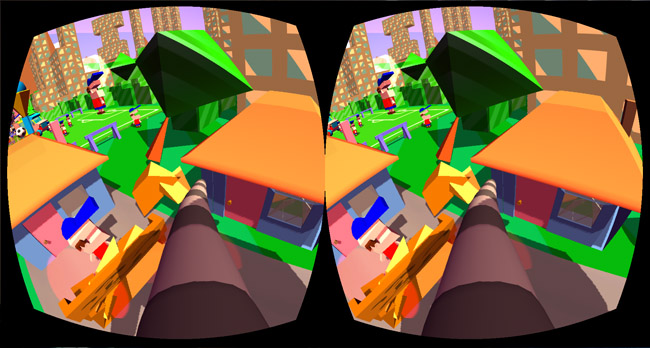

Dumpy the Elephant

The number one thing that separates this game from others is that you move your head to control the elephant’s head and trunk. That, coupled with the immersion, gives you a pretty solid feeling of being an elephant despite the cartoony graphics. I’m a huge fan of the art style, and it’s great to see people using non-realistic graphics so soon. Amazingly, considering the amount of head movement involved, I never get nauseous.

Ambient Flight

I like the concept for this game, it looks amazing, and a flying game for the Rift is just a no-brainer. However, I feel a big disconnect between sitting upright myself and being horizontal as the bird. Funnily enough, though, I didn’t get this with Dumpy. I feel that this game might also benefit hugely from something like the STEM controller, where you’d spread your arms out to fly and maneuver, maybe even flap them like wings. The main benefit of this game is the immersion of the Rift, where you feel like you are in the air. This game may also cross the line between experience and game a little, since (at least currently) there are no challenges or goals of any kind. Once you’ve played it, you may never replay it, but with so many experiences to be had on the Rift, I think this might be quite common. I suppose you may return to it just to chill out and fly around.

Dreadhalls

With the first dev kit, I dislike all FPSs, because they induce the worst nausea for me. However, I’ve seen a few Let’s Plays, and my friends had a go and were all fine. Hopefully DK2 solves this for everyone and for most game types. Having said that, Dreadhalls makes great use of real world movement, as you can’t look left and right any faster than your head will allow, and positional tracking will be amazing for peeking around corners. Monsters can feel like they are right behind you, and you can’t pull a superhuman turn or run backwards to see. This game, and perhaps the horror genre as a whole, seems like the easiest place to link immersion to a better game, as being immersed in a scary environment elevates the terror to such a degree. A very real problem with the sheer terror factor of this game is that I don’t actually want to play it. I’ve seen and heard the same thing in other reviews and YouTube playthroughs: there might be a limit to how much you can handle while playing a good, immersive Oculus game! Just imagine we reach an Exorcism of Emily Rose level of terror in VR, yikes! At the same time, who can pass up trying something that someone tells you is too scary?

Don’t play it here(!)

Conclusion

The future of game design with the Rift is still largely unknown. We have only a small number of demos available, most of them bite-sized experiences, so it’s hard to judge yet what a fully developed Oculus game will be like on the consumer version. Here’s hoping that what we get isn’t mostly “what we have now, plus a Rift on your face”. Games like Lunar Flight, Dumpy the Elephant and Dreadhalls are great steps forward, and I think the technology will really make developers dream big and try things that haven’t been done before.

For Rift experiences, I’m also looking forward to everything from 360-degree videos to sunny beach simulators to dioramas like Blocked In.

Thanks for reading, very keen to hear people’s opinions on how the Oculus will change game design, how immersion will affect how games are made, how I said a stupid thing, or any other comments you have!
