Featured Blog | This community-written post highlights the best of what the game industry has to offer. Read more like it on the Game Developer Blogs or learn how to Submit Your Own Blog Post

The Game Outcomes Project, Part 3: Game Development Factors

Part 3 in a 5-part series analyzing the results of the Game Outcomes Project survey, which polled hundreds of game developers to determine how teamwork, culture, leadership, production, and project management contribute to game project success or failure.

This article is the third in a 5-part series.

Part 1: The Best and the Rest is also available here: (Gamasutra) (BlogSpot) (in Chinese)

Part 2: Building Effective Teams is available here: (Gamasutra) (BlogSpot) (in Chinese)

Part 3: Game Development Factors is also available here: (Gamasutra) (BlogSpot) (in Chinese)

Part 4: Crunch Makes Games Worse is now available here: (Gamasutra) (BlogSpot) (in Chinese)

Part 5: What Great Teams Do is available here: (Gamasutra) (in Chinese)

For extended notes on our survey methodology, see our Methodology blog page.

Our raw survey data (minus confidential info) is now available here if you'd like to verify our results or perform your own analysis.

The Game Outcomes Project team includes Paul Tozour, David Wegbreit, Lucien Parsons, Zhenghua “Z” Yang, NDark Teng, Eric Byron, Julianna Pillemer, Ben Weber, and Karen Buro.


The Game Outcomes Project was a large-scale study of teamwork, culture, production, and leadership in game development conducted in October 2014. It was based on a 120-question survey of several hundred game developers, and it correlated all of those factors against a set of four quantifiable project outcomes (project delays, return on investment (ROI), aggregate reviews / MetaCritic ratings, and the team’s own sense of satisfaction with how the project achieved its internal goals). Our team then combined these four outcome questions into an aggregate “score” value representing the overall outcome of the game project, as described on our Methodology page.

Previous articles in this series (Part 1, Part 2) introduced the Game Outcomes Project and showed very surprising results. While many factors that one would expect to contribute to differences in project outcomes – such as team size, project duration, production methodology, and most forms of financial incentives – had no meaningful correlations, we also saw remarkable and very clear results for three different team effectiveness models that we investigated.

Our analysis uncovered major differences in team effectiveness, which translate directly into large and unmistakable differences in project outcomes.

Every game is a reflection of the team that made it, and the best way to raise your game is to raise your team.

In this article, we look at additional factors in our survey which were not covered by the three team effectiveness models we analyzed in Part 2, including several in areas specific to game development. We included these questions in our survey on the suspicion that they were likely to contribute in some way to differences in project outcomes. We were not disappointed.

Design Risk Management

First, we looked at the management of design risk. It’s well-known in the industry that design thrashing is a major cause of cost and schedule overruns. We’ve personally witnessed many projects where a game’s design was unclear from the outset, where preproduction was absent or was inadequate to define the gameplay, or where a game in development underwent multiple disruptive re-designs that negated the team’s progress, causing enormous amounts of work to be discarded and progress to be lost.

It seemed clear to us that the best teams carefully manage the risks around game design, both by working to mitigate the repercussions of design changes in development, and by reducing the need for disruptive design changes in the first place (by having a better design to begin with).

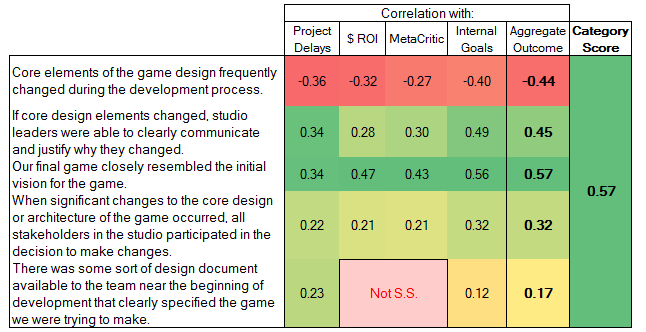

We came up with a set of five related questions, shown in Figure 1. These turned out to have some of the strongest correlations of any questions we asked in our survey. With the exception of the two peach-colored correlations for the last question (the design document question’s correlations with the ROI and critical reception outcomes), all of these correlations are statistically significant (p-value under 0.05).

Figure 1. Questions around design risk management and their correlations with project outcomes. The “category score” on the right is the highest absolute value of the aggregate outcome correlations, as an indication of the overall value of this category. “Not S.S.” indicates correlations that are not statistically significant (p-value over 0.05).

Clearly, changes to the design during development, and the way those design changes were handled, made enormous differences in the outcomes of many of the development efforts we surveyed.

However, when design changes did occur, the participation of all stakeholders in the decision, along with clear communication and justification of the changes and the reasons for them, clearly mitigated the damage.

[We remind readers who may have missed Part 2 that negative correlations (in red/orange) should not be viewed as a bad thing; on the contrary, questions asked in a “negative frame,” i.e., asking about a bad thing that may have occurred on the project, should have a negative correlation with project outcomes, indicating that a lower answer (stronger disagreement with the statement) correlated with better project outcomes (better ROI, fewer delays, higher critical reviews, and so on). What really matters is the absolute value of a correlation: the farther a correlation is from 0, the more strongly it relates to differences in project outcomes, and you can then look at the sign to see whether it contributes positively or negatively.]
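To make the “category score” from the Figure 1 caption concrete: for each question in a category, correlate respondents’ answers with their aggregate outcome score, then take the largest absolute value. The sketch below uses plain Pearson correlation on invented data for five hypothetical respondents; the question names and numbers are illustrative only, and the significance testing the project also performed is omitted.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

def category_score(answers_by_question, outcome):
    """Largest absolute correlation between any question and the outcome.

    The sign is dropped because negatively framed questions are expected
    to correlate negatively; only the distance from 0 measures strength.
    """
    return max(abs(pearson(a, outcome)) for a in answers_by_question.values())

# Five hypothetical respondents: answers on an agreement scale, plus each
# respondent's aggregate outcome score. All values are invented.
outcome = [10, 20, 30, 40, 50]
questions = {
    "clear_vision": [2, 1, 3, 5, 4],          # positively framed
    "disruptive_redesigns": [5, 3, 4, 2, 1],  # negatively framed
}
for name, answers in questions.items():
    print(name, round(pearson(answers, outcome), 2))
print("category score:", round(category_score(questions, outcome), 2))
```

Here the positively framed question correlates at 0.8 and the negatively framed one at -0.9, so the category score is 0.9: the negative correlation is the stronger one, which is exactly why the sign is set aside when comparing strength.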

Somewhat surprisingly, our question about a design document clearly specifying the game to be developed had a very low correlation – below 0.2. It also had no statistically significant correlation (p-value > 0.05) with ROI or critical reception / MetaCritic scores. This suggests that design documents are far less useful than is generally believed. The only area where they show a truly meaningful correlation is project timeliness: design documents may make a positive contribution to the schedule, but anyone who believes that they will, by themselves, contribute much to a product’s critical or commercial success is probably mistaken.

We should be clear that our 2014 survey did not ask any questions related to the project’s level of design innovation. Certainly, it’s much easier to limit design risk if you stick to less ambitious one-off games and direct sequels. We don’t want to sound as if we are recommending that course of action.

For the record, we do believe that design innovation is enormously important, and quite often, a game’s design needs to evolve significantly in production in order to achieve that level of innovation. Our own subjective experience is that a desire for innovation needs to be balanced against cautious management of the enormous risks that design changes can introduce. We plan to ask more questions in the area of design innovation in the next version of the survey.

Team Focus

Managing the risks to the design itself is one thing, but to what extent does the team’s level of focus – being on the same page about the game in development, and sharing a single, common, vision – impact outcomes?

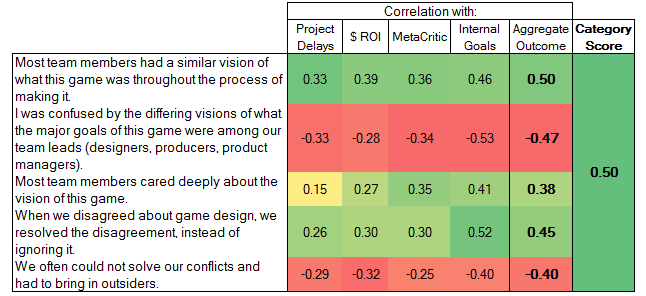

Figure 2. Questions around team focus and their correlations.

The strong correlations here are not too surprising; these tie in closely with the design risk management topic above, as well as our questions about “Compelling Direction,” the second element of Hackman’s team effectiveness model from Part 2. As a result, the correlations here are very similar. It’s clear that successful teams have a strong shared vision, care deeply about the product vision, and are careful to resolve disagreements about the game’s design quickly and professionally.

It’s interesting to note that the question “most team members cared deeply about the vision of this game” showed a wide disparity of correlations. It shows a strong positive correlation with critical reviews and internal goal achievement, but only a very weak correlation with project timeliness. This seems to indicate that while passion for the project makes for a more satisfied team and a game that gets better review scores, it has little to do with hitting schedules.

Crunch (Extended Overtime)

Our industry is legendary (or perhaps “infamous” is a better word) for its frequent use of extended overtime, i.e. “crunch.” But how does crunch actually correlate with project outcomes?

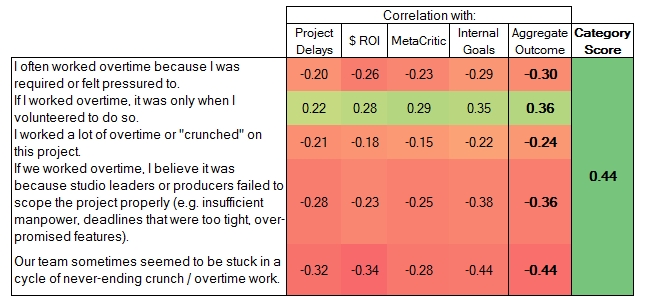

Figure 3. Questions around crunch, and related correlations.

As you can see, all five of our questions around crunch were significantly correlated with outcomes – some of them very strongly so. The one and only question that showed a positive correlation was the question asking if overtime was purely voluntary, indicating the absence of mandatory crunch.

Even in the area where you might expect crunch would improve things – project delays – crunch still showed a significant negative correlation, indicating that it did not actually save projects from delays.

This suggests that not only does crunch not produce better outcomes, but it may actually make games worse where it is used.

Crunch is an important topic, and one that is far too often passionately debated without reference to any facts or data whatsoever. In order to do the topic justice – and hopefully lay the entire “debate” to rest once and for all – we will dedicate the entirety of our next article to further exploring these results, and we’ll don our scuba gear and perform a “deep-dive” into the data to ferret out exactly what our data can tell us about crunch and its effects.

At the very least, we hope to provide enough data that future discussions of crunch will rest far less on opinion and far more on actual evidence.

Team Stability

A great deal of validated management research shows clearly that teams with stable membership are far more effective than teams whose membership changes frequently, or those whose members must be shared with other teams. Studies of surgical teams and airline crews show that they are far more likely to make mistakes in their first few weeks of working together, but grow continuously more effective year after year as they work together. We were curious how team stability affects outcomes in game development.

Figure 4. Questions around team stability and their correlations to project outcomes.

Surprisingly, our question on team members being exclusively dedicated to the project showed no statistically significant correlations with project outcomes. As far as we can tell, this just doesn’t matter one way or the other.

However, our more general questions around project turnover and reorganization showed strong and unequivocal correlations with inferior project outcomes.

At the same time, it’s difficult to say for sure to what extent each of these is a cause or an effect of problems on a project. In the case of turnover, industry stories illustrate both: many staff departures and layoffs have resulted from troubled projects, but key staff departures have also left studios scrambling to recover. There are also stories of downward spirals, in which project problems caused key staff departures, which caused more morale and productivity problems, which in turn led to the departure of even more staff.

We hope to analyze this factor more deeply in future versions of the survey (and we’d like to break down voluntary vs involuntary staff departures in particular). But for now, we’ll have to split the difference in our interpretation. As far as we can tell from here, turnover and reorganizations are both generally harmful, and wise leaders should do everything in their power to minimize them.

Communication & Feedback

We included several questions about the extent to which communication and feedback play a role in team effectiveness:

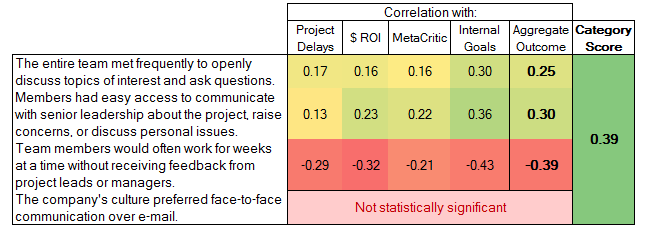

Figure 5. Questions around communication and their correlations.

Clearly, regular feedback from project leads and managers (the third question in this category) is key; this question ties in very closely with factor #11 in the Gallup team effectiveness model from Part 2, with virtually identical correlations with project outcomes. Easy access to senior leadership (the second question) is also clearly quite important.

Regular communication across the entire team (the first question) is somewhat less important but still shows significant positive correlations across the board. Meanwhile, our final question revealed no significant differences between cultures that preferred e-mail and those that preferred face-to-face communication.

Organizational Perceptions of Failure

A 2012 Gamasutra interview with Finnish game developer Supercell explained that company’s attitude toward failure:

"We think that the biggest advantage we have in this company is culture. […] We have this culture of celebrating failure. When a game does well, of course we have a party. But when we really screw up, for example when we need to kill a product – and that happens often by the way, this year we've launched two products globally, and killed three – when we really screw up, we celebrate with champagne. We organize events that are sort of postmortems, and we can discuss it very openly with the team, asking what went wrong, what went right. What did we learn, most importantly, and what are we going to do differently next time?"

It seems safe to say that most game studios don’t share this attitude. But is Supercell a unique outlier, or would this attitude work in game development in general if applied more broadly?

Our developer survey asked six questions about how the team perceived failure on a cultural level:

Figure 6. Questions around organizational perceptions of failure and their correlations.

These correlations are quite significant, and nearly all of them are quite strong. More successful game projects are much more likely to encourage creative risk-taking and open discussion of failure, and to ensure that team members feel comfortable and supported when taking creative risks.

These results tie in very closely with the concept of “psychological safety” explained in Part 2, under the “Supportive Context” section of Hackman’s team effectiveness model.

Respect

Extensive management research indicates that respect is a terrifically important driver of employee engagement, and therefore of productivity. A recent HBR study of nearly 20,000 employees around the world found that no other leader behavior had a greater effect on outcomes. Employees who receive more respect exhibit massive improvements in engagement, retention, satisfaction, focus, and many other factors.

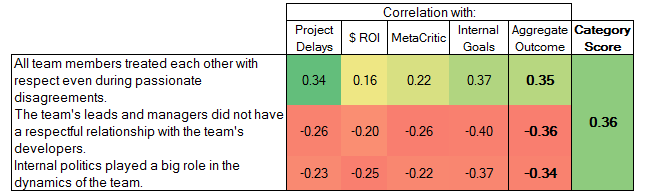

We were curious whether this also applied to the game industry, and whether a respectful working environment contributed to differences between failed and successful game project outcomes as well. We were not disappointed.

Figure 7. Questions around respect, and related correlations.

All three of our questions in this category showed significant correlations with outcomes, especially the question about respectful relationships between team leads/managers and developers.

Clearly, all team members -- and leads/managers in particular -- should think twice before treating team members with disrespect: they are not only hurting their team, but hurting their own game project and their own bottom line.

Project Planning

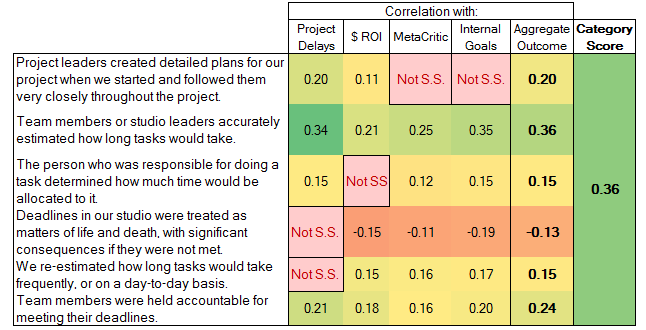

We asked a number of questions around the ways different aspects of project planning affected outcomes:

Figure 8. Questions around project planning and their correlations.

Clearly, deadlines and accountability are important: the positive correlations for the last question show that accountability is a net positive.

However, teams that took deadlines too seriously and treated them as matters of life and death (question #4) saw a negative correlation with project outcomes, and no statistically significant correlation with project timeliness. This suggests that treating deadlines as matters of life and death not only fails to help the schedule but is actually counterproductive in the long run.

This seems to be telling us that taking deadlines too seriously can be harmful, and high-pressure management tactics are likely to backfire. We speculate that successful teams balance their goals for each milestone against the realities of production, the need for team cohesion, and the pragmatism to sometimes sacrifice or adjust individual milestone goals for the good of the overall project.

Surprisingly, daily task re-estimation (question #5) also shows no significant correlation with timeliness, although it does have a weak positive correlation with all the other outcome factors.

Furthermore, detailed planning (question #1) is positively correlated with both ROI and timeliness but shows no statistically significant correlation with critical reception or internal goal achievement. It is clearly useful for project timeliness, but we speculate that it can also lock the team into a fixed, brittle development plan. Such a plan can tempt teams to put the lesser good of schedule integrity ahead of the greater good of product quality, and can sometimes stifle opportunities to improve the game in development.

The most unambiguous findings here are that accurate estimation (question #2) and a reasonable level of accountability (question #6) both contribute positively to all outcome factors.

Technology Risk Management

We were also curious about risks around technology. How did major technology changes and the management of those changes affect outcomes, and did the team participate in any sort of code reviews or pair programming? The game industry has countless stories to tell of engine changes or major technology overhauls that either caused project delays or even contributed to outright project failure or cancellation.

Figure 9. Questions around technology risk management, and their correlations with project outcomes.

Here, too, we see some very strong correlations. Question #1 shows that major technology revamps in development can introduce a great deal of project risk, while question #3 seems to indicate that the communication of those technology changes is even more important.

However, whether these decisions are driven by internal or external changes does not appear to be relevant. Code reviews and pair programming are positively correlated with outcomes, and the correlation is statistically significant, but it is relatively weak (under 0.2) and shows no statistically significant correlation with the project’s critical reception or achievement of its internal goals. Although there is significant evidence that code reviews reduce defects and improve a team’s programming skills, we were surprised that these correlations are not higher. We suspect this may be related to the way the reviews are carried out, as well as to the team’s experience level: deeper analysis reveals that this factor is much more significant on more experienced teams. We plan to investigate this more thoroughly in the next version of the study.
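How can a correlation under 0.2 still be statistically significant? A standard result for Pearson’s r is that, under the null hypothesis of zero correlation, t = r·√(n − 2) / √(1 − r²) follows a t-distribution with n − 2 degrees of freedom. Solving for r gives the smallest |r| that reaches significance for a given sample size. The sketch below approximates the two-tailed 5% critical t value by the normal value 1.96 (close for large n) and uses hypothetical sample sizes; it illustrates the arithmetic and is not the project’s actual significance procedure.

```python
from math import sqrt

def min_significant_r(n, t_crit=1.96):
    """Smallest |r| that reaches two-tailed significance for sample size n.

    Solves t = r * sqrt(n - 2) / sqrt(1 - r**2) for r, which gives
    r = t / sqrt(n - 2 + t**2). t_crit = 1.96 is the large-sample (normal)
    approximation of the 5% two-tailed critical value.
    """
    return t_crit / sqrt(n - 2 + t_crit ** 2)

# Hypothetical sample sizes, for illustration only.
for n in (50, 100, 300):
    print(n, round(min_significant_r(n), 3))
```

With a few hundred respondents (n = 300 gives a threshold of roughly 0.11), a correlation just under 0.2 can be clearly significant while still explaining less than 4% of the variance in outcomes (r² < 0.04).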

Production Methodologies

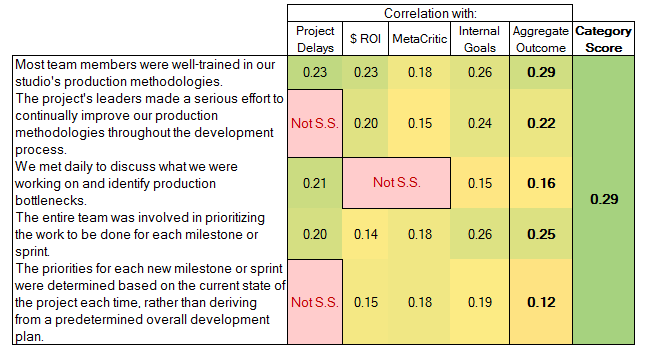

In Part 1, we revealed the rather shocking discovery that the specific production methodology a team uses – waterfall, agile, scrum, or ad-hoc – seems to make no statistically significant difference in project outcomes. However, we also asked a number of additional questions on the topic of production methodologies:

Figure 10. Questions around production methodologies and their correlations.

Here, we can see clearly that training in production methodologies, efforts to improve them, and involving the entire team in prioritizing the work for each milestone are all significantly correlated (>0.2) with positive project outcomes. However, our questions about daily production meetings and re-prioritization for each milestone showed relatively low correlations.

We see no statistically significant correlation between the last question (regarding re-prioritization in each milestone) and project delays, but a small positive correlation with the other three outcomes. This may indicate that while re-prioritization at each milestone improves product quality, it sometimes does so at the expense of the schedule.

We also further attempted to verify our controversial finding that production methodology used makes no difference by re-evaluating production methodologies only for respondents that replied that their teams were well-trained in their studio’s production methodology (i.e. they answered “Agree Strongly” or “Agree Completely” to the first question in this category). Here, too, we found no statistically significant differences between waterfall, agile, and agile using Scrum.

This analysis appears to reinforce our earlier finding that the particular production methodology being used matters very little; what matters is having one, sticking to it, and properly training your team to use it.
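The robustness check described above (keeping only respondents who answered “Agree Strongly” or “Agree Completely” to the training question, then comparing outcomes by methodology) amounts to a filter followed by a group-by. In this sketch the record fields (`methodology`, `training`, `score`) and the sample data are hypothetical. It only computes group means; deciding whether the differences are statistically significant would need a further test (for example, a one-way ANOVA), and we are not assuming which test the project used.

```python
from statistics import mean

# Answers to the training question treated as "well-trained".
WELL_TRAINED = {"Agree Strongly", "Agree Completely"}

def mean_score_by_methodology(responses):
    """Mean aggregate outcome score per methodology, well-trained teams only."""
    groups = {}
    for r in responses:
        if r["training"] in WELL_TRAINED:
            groups.setdefault(r["methodology"], []).append(r["score"])
    return {method: mean(scores) for method, scores in groups.items()}

# Hypothetical respondents; field names and scores are invented.
responses = [
    {"methodology": "waterfall", "training": "Agree Strongly", "score": 60},
    {"methodology": "waterfall", "training": "Disagree", "score": 30},
    {"methodology": "agile", "training": "Agree Completely", "score": 64},
    {"methodology": "agile_scrum", "training": "Agree Strongly", "score": 58},
    {"methodology": "agile_scrum", "training": "Agree Completely", "score": 62},
]
print(mean_score_by_methodology(responses))
# {'waterfall': 60, 'agile': 64, 'agile_scrum': 60}
```

Note that the poorly trained waterfall respondent is excluded, so it cannot drag down the waterfall group; that is the point of restricting the comparison to well-trained teams.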

Collaboration & Helpfulness

We’ve personally experienced many different types of team cultures where helpfulness was treated as a virtue or a vice. Some encourage a “sink or swim” attitude, and deliberately force new hires to learn the ropes on their own; others go out of their way to encourage and reward collaboration and helpfulness among team members. We were curious about the effect of these cultural differences on project outcomes.

Figure 11. Questions around collaboration and helpfulness and their correlations.

Although the correlations to the individual outcomes here are relatively weak, the correlations with the aggregate outcome are unambiguously positive.

Note that there is no statistically significant correlation between the second question and the outcome factor for project timeliness. We speculate that some teams may spend too much time and energy obsessing over their issues and challenges, which can become a time sink or a source of negativity if carried out to unhealthy extremes.

Outsourcing and Cross-Functional Teams

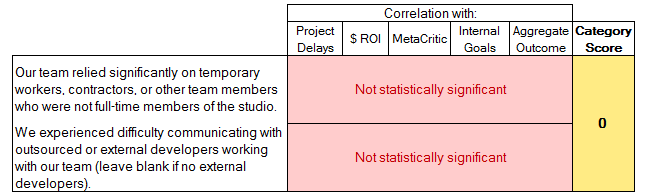

We asked two additional categories of questions. One category related to the use of contractors, temporary workers, and outsourcing:

Figure 12. Questions around outsourcing and their correlations.

We saw no statistically significant correlations regarding outsourcing, and as far as our data set can tell, this has no identifiable impact on project outcomes. It seems much more likely that any effects of outsourcing depend on the quality of the contractors or outsourced labor, the way outsourcing is integrated into the team, its cost, and the quality of coordination with the outsourced labor, all of which were outside the scope of the 2014 survey.

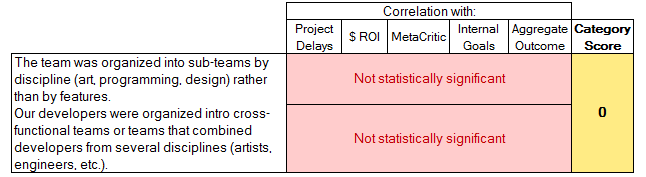

The other category related to whether sub-teams were divided up by discipline (art, programming, design) or were organized into cross-functional sub-teams, each combining several disciplines.

Figure 13. Questions around cross-functional teams and their correlations.

We also observed no correlations for cross-functional or per-discipline teams, leading us to conclude that there is probably no “right” answer here. If there is any utility in adopting one team structure or the other, the factors involved were outside the scope of the questions asked in our study.

Conclusions: Best Practices

The previous article illustrated that three very different team effectiveness models all correlate strongly with game project outcomes. We found that team effectiveness is tied to having a compelling direction and a shared vision, an enabling structure and supportive context, a connection with the mission of the organization, regular feedback, a deep level of trust and commitment within the team, belief in the mission of the organization, and the essential element of “psychological safety” that allows team members to feel comfortable taking interpersonal risks.

In addition to those factors, all but the last two of the factors outlined in this article showed significant correlations as well. Those that showed no correlations are just as noteworthy as those that did.

For convenience, we deliberately ordered the sections of this article from strongest to weakest correlation. To summarize:

Design risk management showed the strongest correlation, at over 0.57.

Team focus came in a close second, at 0.50.

Avoidance of crunch was in third place, at 0.44.

After that, team stability, communication, organizational perceptions of failure, respect, project planning, and technology risk management were also very important, all with correlations between 0.36 and 0.39.

Production methodologies and collaboration/helpfulness came in last but were still significant, at 0.29 and 0.20, respectively.

Outsourcing and the use of cross-functional teams showed no statistical significance. These do not seem to impact project outcomes in any general sense as far as our survey was able to detect.

Finally, in order to help teams make the best use of these results, we’ve created an interactive self-reflection tool to help teams conduct systematic post-mortems and identify their best opportunities for growth.

Self-Reflection Tool

The Self-Reflection Tool is an interactive Excel spreadsheet that includes the 38 most relevant questions from our survey, along with five linear regression models: one for each of the four individual outcome factors and one for the aggregate outcome score. To use it, simply open it and answer the 38 questions highlighted in yellow on the primary worksheet. It will then forecast your team’s likely ROI, critical success, chance of project delays, and chance of achieving the project’s internal goals. It will also suggest your team’s most promising avenues for improving its odds of a positive outcome.

For an even better analysis, print out the questions, ask your fellow team members to take the survey anonymously, and then average the results.
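The workflow above can be sketched in a few lines: average each question across anonymous team responses, feed the averages into a linear model (an intercept plus a weighted sum of answers), and rank questions by how much the forecast would rise if that answer moved to the favorable end of the scale. Everything here is an assumption: the weights, intercept, question names, and the 1-to-7 answer scale are invented, and the ranking heuristic is only one plausible way to surface improvement opportunities, not the spreadsheet’s actual formula.

```python
from statistics import mean

def team_average(responses):
    """Average each question's answer across anonymous team responses.

    `responses` is a list of per-person answer lists in the same question order.
    """
    return [mean(answers) for answers in zip(*responses)]

def forecast(answers, intercept, weights):
    """Linear-regression prediction: intercept plus weighted sum of answers."""
    return intercept + sum(w * a for w, a in zip(weights, answers))

def improvement_opportunities(answers, weights, names, lo=1, hi=7):
    """Rank questions by the forecast gain from moving each answer to its
    favorable extreme (hi for positive weights, lo for negative ones)."""
    gains = []
    for name, w, a in zip(names, weights, answers):
        best = hi if w > 0 else lo
        gains.append((name, w * (best - a)))
    return sorted(gains, key=lambda g: g[1], reverse=True)

# Hypothetical three-question model and three anonymous responses.
names = ["clear_vision", "voluntary_overtime", "disruptive_redesigns"]
weights = [0.5, 0.2, -0.8]
intercept = 1.0
responses = [[3, 6, 2], [5, 7, 1], [4, 5, 3]]

avg = team_average(responses)  # [4, 6, 2]
print(round(forecast(avg, intercept, weights), 2))
for name, gain in improvement_opportunities(avg, weights, names):
    print(name, round(gain, 2))
```

Because the disruptive-redesigns question carries a negative weight, its favorable end is the bottom of the scale; the heuristic handles negatively framed questions by flipping the target rather than the sign of the weight.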

You can download the self-reflection tool here.

Conclusion

By comparing hundreds of game projects side-by-side, the Game Outcomes Project has given us a unique perspective on game development. In the process, it has uncovered quite a few surprises. The study has shown clearly which factors contribute to success or failure in game projects and pointed the way toward many future avenues of research, which are listed on our Methodology page.

We believe that this kind of systematic, objective, data-driven approach to identifying the links between common practices on game development teams and discrete project outcomes points the way toward a new approach to defining industry standards and best practices, and hopefully helps lay some persistent fallacies and popular misapprehensions to rest. In the future, we hope to extend the study with a larger number of participants and continue to refine and evolve it annually.

More than anything else, we hope that this project will help teams and their leadership see more clearly that the differences in teamwork and culture across teams are simply massive, and we have demonstrated that these differences have an overwhelming impact on the games we make and our success or failure as organizations.

Although there is always an element of risk involved, the lion’s share of your own destiny remains within your own control.

If you want to improve your team's odds of success, the factors we examined in this study are probably a very good starting point.

Future Work

In Part 4, we will tackle the tricky and pervasive topic of crunch. We will analyze the data from a number of angles and show that it makes a clear and unambiguous case with regard to extended overtime. Anyone considering subjecting their team to “crunch” in the hope of raising product quality or making up for lost time would be well-advised to read it carefully.

By popular demand, we will also be releasing an ordered summary of our findings as Part 5 one week after that.

The Game Outcomes Project team would like to thank the hundreds of current and former game developers who made this study possible through their participation in the survey. We would also like to thank IGDA Production SIG members Clinton Keith and Chuck Hoover for their assistance with survey design; Kate Edwards, Tristin Hightower, and the IGDA for assistance with promotion; and Christian Nutt and the Gamasutra editorial team for their assistance in promoting the survey.

For further announcements regarding our project, follow us on Twitter at @GameOutcomes
