The Game Outcomes Project, Part 1: The Best and the Rest

The first in a 5-part series analyzing the results of the Game Outcomes Project survey, which polled hundreds of game developers to determine how teamwork, culture, leadership, and project management contribute to game project success or failure.

This article is the first in a 5-part series.

Part 1: The Best and the Rest is available here: (Gamasutra) (BlogSpot) (in Chinese)

Part 2: Building Effective Teams is available here: (Gamasutra) (BlogSpot) (in Chinese)

Part 3: Game Development Factors is available here: (Gamasutra) (BlogSpot) (in Chinese)

Part 4: Crunch Makes Games Worse is available here: (Gamasutra) (BlogSpot) (in Chinese)

Part 5: What Great Teams Do is available here: (Gamasutra) (in Chinese)

For extended notes on our survey methodology, see our Methodology blog page.

Our raw survey data (minus confidential info) is now available here if you'd like to verify our results or perform your own analysis.

The Game Outcomes Project team includes Paul Tozour, David Wegbreit, Lucien Parsons, Zhenghua “Z” Yang, NDark Teng, Eric Byron, Julianna Pillemer, Ben Weber, and Karen Buro.

[Editor's Note: The results of the Game Outcomes Project will be addressed at length during GDC 2016 as part of Paul Tozour's talk on "The Game Outcomes Project: How Teamwork, Leadership, and Culture Drive Results."]

What makes the best teams so effective?

Veteran developers who have worked on many different teams often remark that they see vast cultural differences between them. Some teams seem to run like clockwork, and are able to craft world-class games while apparently staying happy and well-rested. Other teams struggle mightily and work themselves to the bone in nightmarish overtime and crunch of 80-90 hour weeks for years at a time, or in the worst case, burn themselves out in a chaotic mess. Some teams are friendly, collaborative, focused, and supportive; others are unfocused and antagonistic. A few even seem to be hostile working environments or political minefields with enough sniping and backstabbing to put a game of Team Fortress 2 to shame.

What causes the differences between those teams? What factors separate the best from the rest?

As an industry, are we even trying to figure that out?

Are we even asking the right questions?

These are the kinds of questions that led to the development of the Game Outcomes Project. In October and November of 2014, our team conducted a large-scale survey of hundreds of game developers. The survey included roughly 120 questions on teamwork, culture, production, and project management. We suspected that we could learn more from a side-by-side comparison of many game projects than from any single project by itself, and we were convinced that finding out what great teams do that lesser teams don’t do – and vice versa – could help everyone raise their game.

Our survey was inspired by several of the classic works on team effectiveness. We began with the 5-factor team effectiveness model described in the book Leading Teams: Setting the Stage for Great Performances. We also incorporated the 5-factor team effectiveness model from the famous management book The Five Dysfunctions of a Team: A Leadership Fable and the 12-factor model from 12: The Elements of Great Managing, which is derived from aggregate Gallup data from 10 million employee and manager interviews. We felt certain that at least one of these three models would surely turn out to be relevant to game development in some way.

We also added several categories with questions specific to the game industry that we felt were likely to show interesting differences.

On the second page of the survey, we added a number of more generic background questions. These asked about team size, project duration, job role, game genre, target platform, financial incentives offered to the team, and the team’s production methodology.

We then faced the broader problem of how to quantitatively measure a game project’s outcome.

Ask any five game developers what constitutes “success,” and you’ll likely get five different answers. Some developers care only about the bottom line; others care far more about their game’s critical reception. Small indie developers may regard “success” as simply shipping their first game as designed regardless of revenues or critical reception, while developers working under government contract, free from any market pressures, might define “success” simply as getting it done on time (and we did receive a few such responses in our survey).

Lacking any objective way to define “success,” we decided to quantify the outcome through the lenses of four different kinds of outcomes. We asked the following four outcome questions, each with a 6-point or 7-point scale:

"To the best of your knowledge, what was the game's financial return on investment (ROI)? In other words, what kind of profit or loss did the company developing the game take as a result of publication?"

"For the game's primary target platform, was the project ever delayed from its original release date, or was it cancelled?"

"What level of critical success did the game achieve?"

"Finally, did the game meet its internal goals? In other words, to what extent did the team feel it achieved something at least as good as it was trying to create?"

We hoped that we could correlate the answers to these four outcome questions against all the other questions in the survey to see which input factors had the most actual influence over these four outcomes. We were somewhat concerned that all of the “noise” in project outcomes (fickle consumer tastes, the moods of game reviewers, the often unpredictable challenges inherent in creating high-quality games, and various acts of God) would make it difficult to find meaningful correlations. But with enough responses, perhaps the correlations would shine through the inevitable noise.

We then created an aggregate “outcome” value that combined the results of all four of the outcome questions as a broader representation of a game project’s level of success. This turned out to work nicely, as it correlated very strongly with the results of each of the individual outcome questions. Our Methodology blog page has a detailed description of how we calculated this aggregate score.

We worked carefully to refine the survey through many iterations, and we solicited responses through forum posts, Gamasutra posts, Twitter, and IGDA mailers. We received 771 responses, of which 302 were completed, and 273 were related to completed projects that were not cancelled or abandoned in development.

The Results

So what did we find?

In short, a gold mine. The results were staggering.

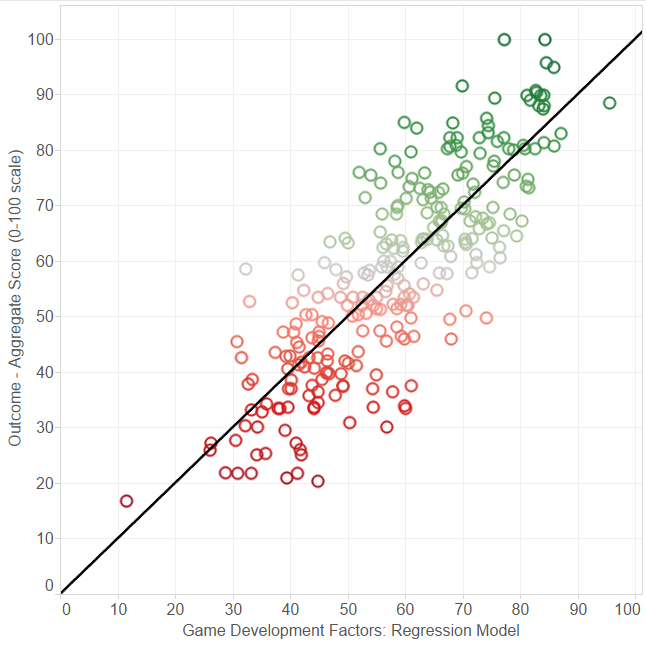

More than 85% of our 120 questions showed a statistically significant correlation with our aggregate outcome score, with a p-value under 0.05 (the p-value gives the probability of observing a correlation at least as strong as the one in our sample if the variables were truly independent; a small p-value can therefore be interpreted as evidence against the assumption that the variables are independent). This correlation was moderate or strong in most cases (absolute value > 0.2), and most of the p-values were in fact well below 0.001. We were even able to develop a linear regression model that showed an astonishing 0.82 correlation with the combined outcome score (shown in Figure 1).

Figure 1. Our linear regression model (horizontal axis) plotted against the composite game outcome score (vertical axis). The black diagonal line is a best-fit trend line. 273 data points are shown.
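The p-value definition above can be made concrete with a small permutation test. This is a minimal sketch on synthetic data, not necessarily the procedure used in the study. The function names `pearson` and `permutation_p_value`, the toy question and the toy scores are all assumptions made for illustration.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


def permutation_p_value(xs, ys, trials=2000, seed=0):
    """Estimate how often shuffled (independent) data correlates at least
    as strongly as the observed data."""
    rng = random.Random(seed)
    observed = abs(pearson(xs, ys))
    shuffled = list(ys)
    hits = 0
    for _ in range(trials):
        rng.shuffle(shuffled)  # break any real link between xs and ys
        if abs(pearson(xs, shuffled)) >= observed:
            hits += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (hits + 1) / (trials + 1)


# Synthetic stand-in for one survey question (1-7 scale) and an outcome score.
rng = random.Random(42)
answers = [rng.randint(1, 7) for _ in range(100)]
scores = [40 + 4 * a + rng.gauss(0, 15) for a in answers]

print("correlation:", round(pearson(answers, scores), 3))
print("p-value estimate:", permutation_p_value(answers, scores))
```

Shuffling one column simulates the "truly independent" case directly: the estimated p-value is the share of shuffles that produce a correlation at least as strong as the real one.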

To varying extents, all three of the team effectiveness models (Hackman's “Leading Teams” model, Lencioni's “Five Dysfunctions” model, and the Gallup “12” model) proved to correlate strongly with game project outcomes.

We can’t say for certain how many relevant questions we didn’t ask. There may well be many more questions waiting to be asked that would have shined an even stronger light on the differences between the best teams and the rest.

But the correlations and statistical significance we discovered are strong enough that it’s very clear that we have, at the very least, discovered an excellent partial answer to the question of what makes the best game development teams so successful.

The Game Outcomes Project Series

Due to space constraints, we’ll be releasing our analysis as a series of several articles, with the remaining 4 articles released at 1-week intervals beginning in January 2015. We’ll leave off detailed discussion of our three team effectiveness models until the second article in our series to allow these topics the thorough analysis they deserve.

This article will focus solely on introducing the survey and combing through the background questions asked on the second survey page. And although we found relatively few correlations in this part of the survey, the areas where we didn’t find a correlation are just as interesting as the areas where we did.

Project Genre and Platform Target(s)

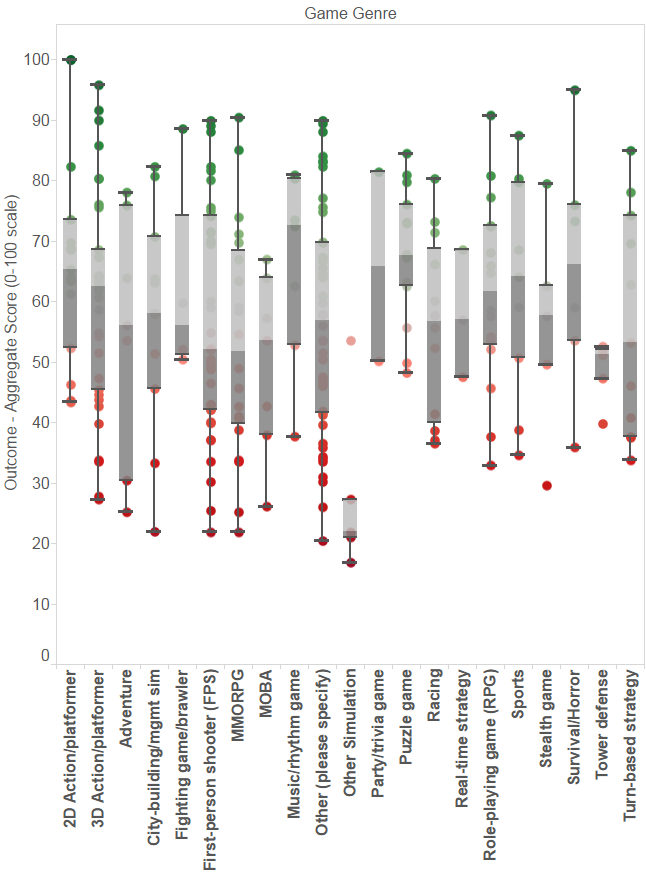

First, we asked respondents to tell us what genre of game their team had worked on. Here, the results are all across the board.

Figure 2. Game genre (horizontal axis) vs. composite game outcome score (vertical axis). Higher data points (green dots) represent more successful projects, as determined by our composite game outcome score.

We see remarkably little correlation between game genre and outcome. In the few cases where a game genre appears to skew in one direction or another, the sample size is far too small to draw any conclusions, with all but a handful of genres having fewer than 30 responses.

(Note that Figure 2 uses a box-and-whisker plot, as described here).

We also asked a similar question regarding the product’s target platform(s), including responses for desktop (PC or Mac), console (Xbox/PlayStation), mobile, handheld, and/or web/Facebook. We found no statistically significant results for any of these platforms, nor for the total number of platforms a game targeted.

Project Duration and Team Size

We asked about the total months and years in development; based on this, we were able to calculate each project’s total development time in months:

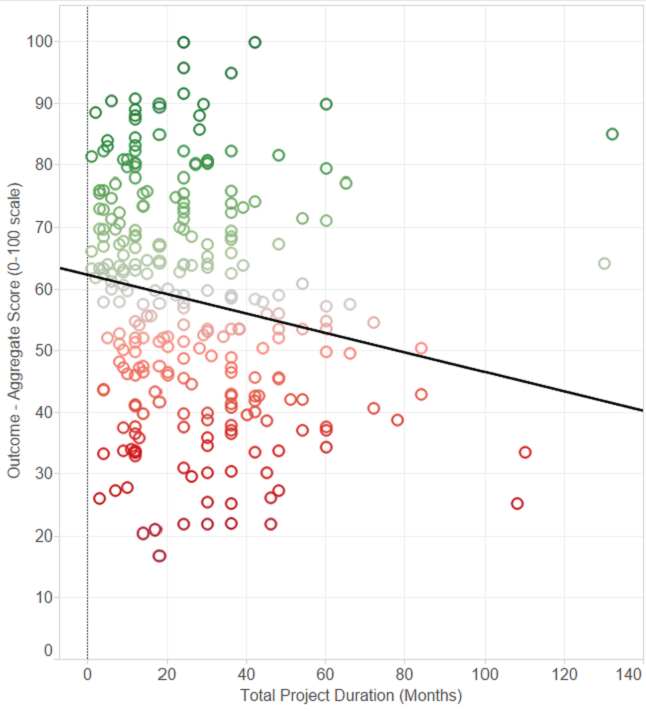

Figure 3. Total months in development (horizontal axis) vs game outcome score (vertical). The black diagonal line is a trend line.

As you can see, there’s a small negative correlation (-0.229, using the Spearman correlation coefficient), and the p-value is 0.003. This negative correlation is not too surprising, as troubled projects are more likely to be delayed than projects that are going smoothly.
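For readers unfamiliar with the Spearman coefficient mentioned above: it is the ordinary Pearson correlation computed on ranks rather than raw values, so it measures any monotonic relationship, not just a linear one. The sketch below is illustrative only. The helper names `average_ranks` and `spearman` and the sample numbers are made up and are not taken from the survey data.

```python
def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2.0 + 1.0  # mean of the 1-based positions
        for k in range(start, end + 1):
            ranks[order[k]] = shared
        start = end + 1
    return ranks


def spearman(xs, ys):
    """Spearman's rho: the Pearson correlation of the two rank vectors."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    sxx = sum((a - mean_x) ** 2 for a in rx)
    syy = sum((b - mean_y) ** 2 for b in ry)
    return sxy / (sxx * syy) ** 0.5


# Made-up numbers: development time in months and a 0-100 outcome score.
months = [6, 12, 12, 18, 24, 36, 48, 60]
scores = [72, 65, 80, 58, 61, 55, 40, 47]
print(round(spearman(months, scores), 3))
```

Because only the ordering matters, a handful of very long projects cannot dominate the coefficient the way they could with a Pearson correlation on raw months.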

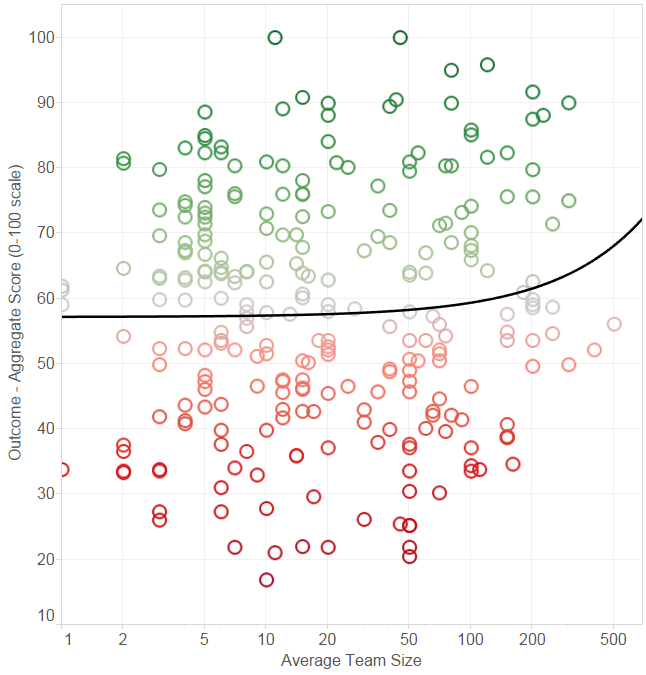

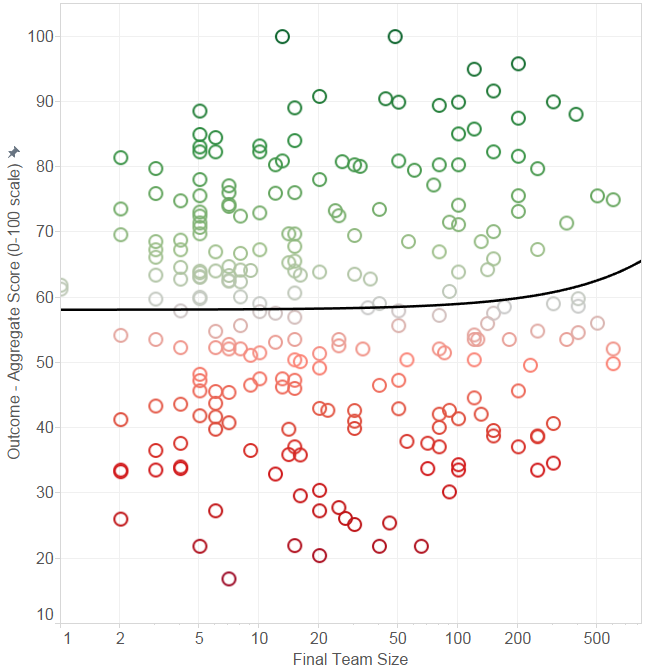

We also asked about the size of the team, both in terms of the average team size and the final team size. Average team size was between 1 and 500 with an average of 48.6; final team size was between 1 and 600 with an average of 67.9. Both showed a slight positive correlation with project outcomes, as shown below, but in both cases the p-value is well over 0.1, indicating there’s not enough statistical significance to make this correlation useful or noteworthy.

Note that in both figures below, the horizontal axis is shown on a logarithmic scale, which makes the linear trend line appear curved.

Figure 4. Average team size correlated against game project outcome (vertical axis).

Figure 5. Final team size correlated against game project outcome (vertical axis).

We also analyzed the ratio of average to final team size, but we found no meaningful correlations here.

Game Engines

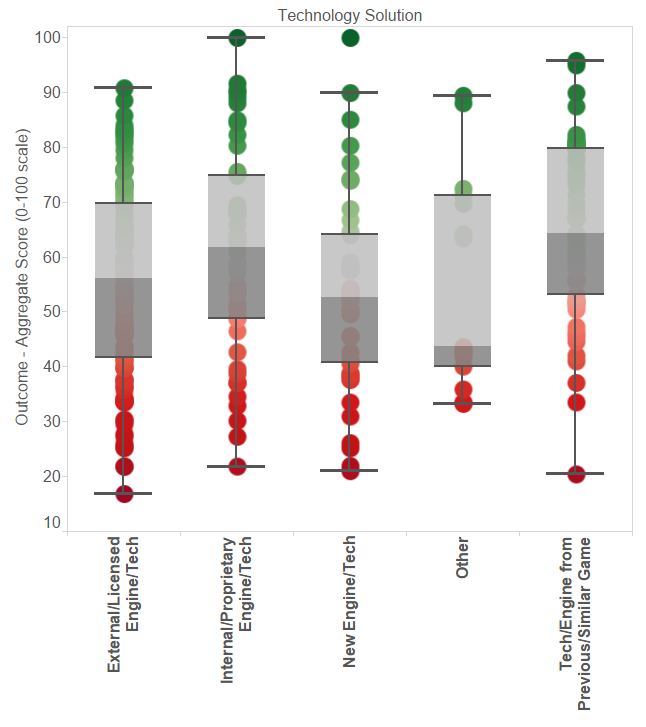

We asked about the technology solution used: whether it was a new engine built from scratch; core technology from a previous version of a similar game or another game in the same series; an in-house / proprietary engine (such as EA Frostbite); or an externally-developed engine (such as Unity, Unreal, or CryEngine).

The results are as follows:

Figure 6. Game engine / core technology used (horizontal axis) vs game project outcome (vertical axis), using a box-and-whisker plot.

| Engine / core technology | Average composite score | Standard deviation | Number of responses |
|---|---|---|---|
| New engine/tech | 53.3 | 18.3 | 41 |
| Engine from previous version of same or similar game | 64.8 | 15.8 | 58 |
| Internal/proprietary engine / tech (such as EA Frostbite) | 60.7 | 19.4 | 46 |
| Licensed game engine (Unreal, Unity, etc.) | 55.6 | 17.5 | 113 |
| Other | 55.5 | 19.5 | 15 |

The results here are less striking the more you look at them. The highest score was for projects that used an engine from a previous version of the same game or a similar one – but that’s exactly what one would expect to be the case, given that teams in this category clearly already had a head start in production, much of the technical risk had already been stamped out, and there was probably already a veteran team in place that knew how to make that type of game!

We analyzed these results using a Kruskal-Wallis one-way analysis of variance, and we found that this question was only statistically significant on account of that very option (engine from a previous version of the same game or similar), with a p-value of 0.006. Removing the data points related to this answer category caused the p-value for the remaining categories to shoot up above 0.3.
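As a rough illustration of the test named above, the Kruskal-Wallis H statistic pools all scores, ranks them, and checks whether the rank sums of the groups differ more than chance would allow. The sketch below assumes the usual large-sample chi-square approximation for the p-value. The function names `kruskal_wallis_h` and `chi2_sf` and the three sample groups are hypothetical; the raw survey data is not reproduced here.

```python
import math


def kruskal_wallis_h(groups):
    """Kruskal-Wallis H statistic with the standard tie correction."""
    pooled = sorted(v for g in groups for v in g)
    n = len(pooled)
    # Average 1-based rank for each distinct value, plus the tie term.
    rank_of, tie_term, i = {}, 0.0, 0
    while i < n:
        j = i
        while j + 1 < n and pooled[j + 1] == pooled[i]:
            j += 1
        rank_of[pooled[i]] = (i + j) / 2.0 + 1.0
        t = j - i + 1
        tie_term += t ** 3 - t
        i = j + 1
    rank_sums = [sum(rank_of[v] for v in g) for g in groups]
    h = 12.0 / (n * (n + 1)) * sum(r * r / len(g) for r, g in zip(rank_sums, groups))
    h -= 3.0 * (n + 1)
    return h / (1.0 - tie_term / (n ** 3 - n))


def chi2_sf(x, df):
    """Upper tail of the chi-square distribution for integer df >= 1."""
    z = x / 2.0
    if df % 2 == 0:
        a, q = 1.0, math.exp(-z)
    else:
        a, q = 0.5, math.erfc(math.sqrt(z))
    # Recurrence Q(a + 1, z) = Q(a, z) + z^a * e^(-z) / Gamma(a + 1).
    while a < df / 2.0:
        q += z ** a * math.exp(-z) / math.gamma(a + 1.0)
        a += 1.0
    return q


# Made-up outcome scores for three hypothetical engine categories.
groups = [[48, 52, 55, 61, 50], [66, 70, 59, 73, 68], [51, 57, 49, 62, 54]]
h = kruskal_wallis_h(groups)
print("H =", round(h, 3), "p =", round(chi2_sf(h, len(groups) - 1), 4))
```

Dropping one group and rerunning the same function on the rest mirrors the check described above, where removing a single answer category made the remaining differences non-significant.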

Our interpretation of the data is that the best option for the game engine depends entirely on the game being made and what options are available for it, and that any one of these options can be the “best” choice given the right set of circumstances. In other words, the most reasonable conclusion is that there is no universally “correct” answer separate from the actual game being made, the team making it, and the circumstances surrounding the game's development. That’s not to say the choice of engine isn’t terrifically important, but the data shows plenty of successes and failures in all categories with only minimal differences in outcomes between them, indicating that each of these four options is entirely viable in some situations.

We also did not ask which specific technology solution a respondent’s dev team was using. Future versions of the study may include questions on the specific game engine being used (Unity, Unreal, CryEngine, etc.).

Team Experience

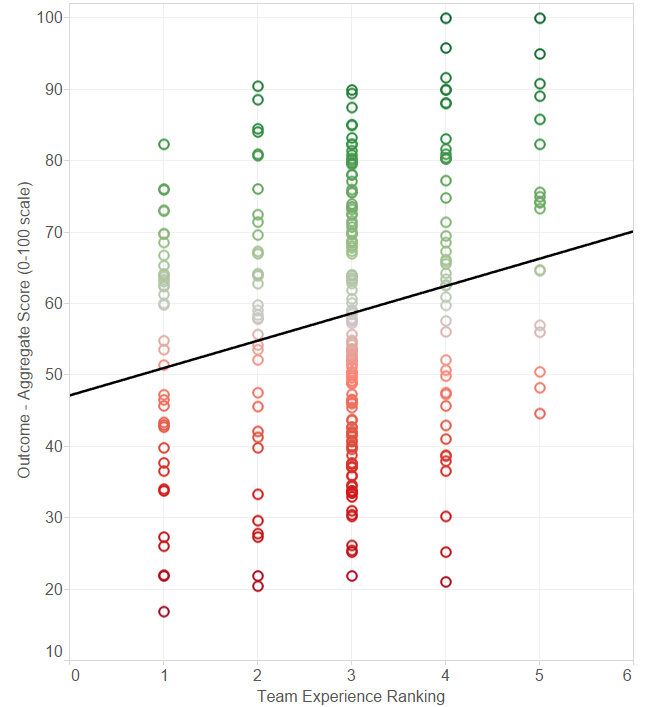

We also asked a question on this page regarding the team’s average experience level, along a scale from 1 to 5 (with a ‘1’ indicating less than 2 years of average development experience, and a ‘5’ indicating a team of grizzled game industry veterans with an average of 8 or more years of experience).

Figure 7. Team experience level ranking (horizontal axis, by category listed above) mapped against game outcome score (vertical axis)

Here, we see a correlation of 0.19 (and p-value under 0.001). Note in particular the complete absence of dots in the upper-left corner (which would indicate wildly successful teams with no experience) and the lower-right corner (which would indicate very experienced teams that failed catastrophically).

So our study clearly confirms the common knowledge in the industry that experienced teams are significantly more likely to succeed. This is not at all surprising, but it's reassuring that the data makes the point so clearly. And as much as we may all enjoy stories of random individuals with minimal game development experience becoming wildly successful with games developed in just a few days (as with Flappy Bird), our study shows clearly that such cases are extreme outliers.

Surprise #1: Incentives

This page of our survey also revealed two major surprises.

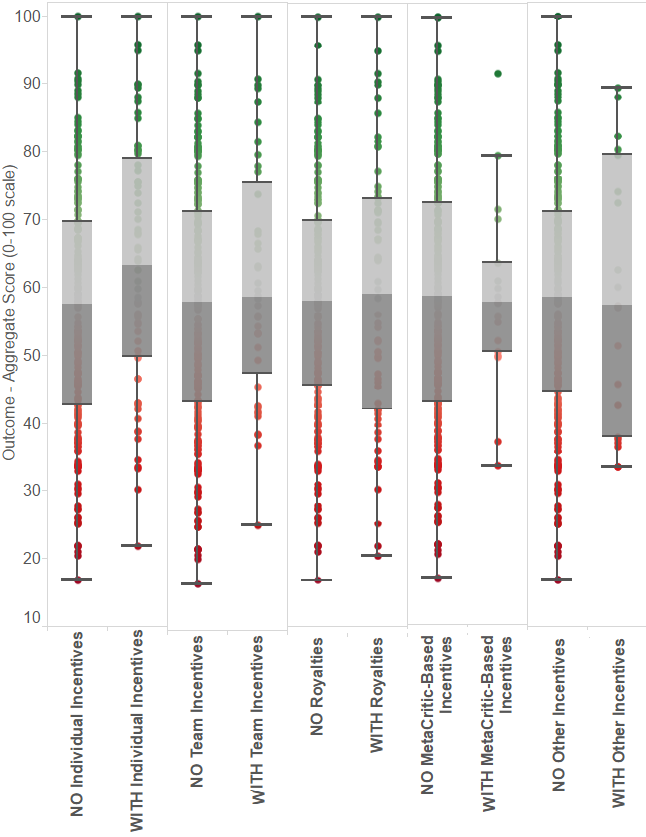

The first surprise was financial incentives. The survey included a question: “Was the team offered any financial incentives tied to the performance of the game, the team, or your performance as individuals? Select all that apply.” We offered multiple check boxes to say “yes” or “no” to any combination of financial incentives that were offered to the team.

The correlations are as follows:

Figure 8. Incentives (horizontal axis) plotted against game outcome score (vertical axis) for the five different types of financial incentives, using a box-and-whisker plot. From left to right: incentives based on individual performance, team performance, royalties, incentives based on game reviews/MetaCritic scores, and miscellaneous other incentives. For each category, we split all 273 data points into those excluding the incentive (left side of each box) and those including the incentive (right side of each box).

Of these five forms of incentives, only individual incentives showed statistical significance. Game projects offering individually-tailored compensation (64 out of the 273 responses) had an average score of 63.2 (standard deviation 18.6), while those that did not offer individual compensation had a mean game outcome score of 56.5 (standard deviation 17.7). A Wilcoxon rank-sum test for individual incentives gave a p-value of 0.017 for this comparison.

All the other forms of incentives – those based on team performance, based on royalties, based on reviews and/or MetaCritic ratings, and any miscellaneous “other” incentives – show p-values that indicate that there was no meaningful correlation with project outcomes (p-values 0.33, 0.77, 0.98, and 0.90, respectively, again using a Wilcoxon rank-sum test).
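The size of the individual-incentive effect can be sanity-checked from the summary figures quoted above alone. The sketch below swaps in a Welch t statistic and Cohen's d, which are parametric stand-ins and not the Wilcoxon rank-sum test we actually ran, so the numbers are only a rough cross-check. The function names are assumptions, and the "without incentive" group size of 209 is derived as 273 minus 64.

```python
import math


def welch_t(n1, mean1, sd1, n2, mean2, sd2):
    """Welch's t statistic from group sizes, means, and standard deviations."""
    standard_error = math.sqrt(sd1 ** 2 / n1 + sd2 ** 2 / n2)
    return (mean1 - mean2) / standard_error


def cohens_d(n1, mean1, sd1, n2, mean2, sd2):
    """Standardized mean difference using the pooled standard deviation."""
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2)
    return (mean1 - mean2) / math.sqrt(pooled_var)


# Figures quoted in the text; 209 is derived as 273 - 64.
with_incentive = (64, 63.2, 18.6)
without_incentive = (273 - 64, 56.5, 17.7)

t = welch_t(*with_incentive, *without_incentive)
d = cohens_d(*with_incentive, *without_incentive)
# Normal approximation to the two-sided p-value (reasonable for samples this large).
p_approx = math.erfc(abs(t) / math.sqrt(2))

print(f"Welch t = {t:.2f}, Cohen's d = {d:.2f}, approx. p = {p_approx:.3f}")
```

Under these assumptions the gap works out to a little over a third of a standard deviation, with an approximate p-value of the same order as the 0.017 from the rank-sum test. That fits a real but modest effect.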

This is a very surprising finding. Incentives are usually offered under the assumption that they are a huge motivator for a team. However, our results indicate that only individual incentives seem to have the desired effect, and even then, to a much smaller degree than expected.

One possible explanation is that perhaps the psychological phenomenon popularized by Dan Pink may be playing itself out in the game industry – that financial rewards are (according to a great deal of recent research) usually a completely ineffective motivational tool, and actually backfire in many cases.

We also speculate that in the case of royalties and MetaCritic reviews in particular, the sense of helplessness that game developers can feel when dealing with factors beyond their control – such as design decisions they disagree with, or other team members falling down on the job – potentially counteracts any motivating effect that incentives may have had. With individual incentives, on the other hand, individuals may feel that their individual efforts are more likely to be noticed and rewarded appropriately. However, without more data, this all remains pure speculation on our part.

Whatever the reason, our results suggest that individually tailored incentives, such as Pay For Performance (PFP) plans, achieve meaningful results where royalties, team incentives, and other forms of financial incentives do not.

Surprise #2: Production Methodologies

Our second big surprise was in the area of production methodologies, a topic of frequent discussion in the game industry.

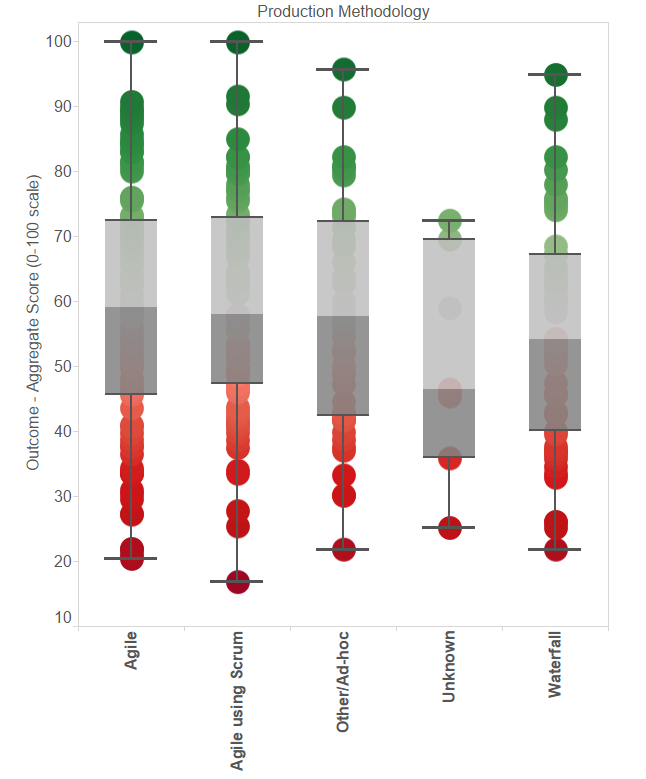

We asked what production methodology the team used – 0 (don’t know), 1 (waterfall), 2 (agile), 3 (agile using “Scrum”), and 4 (other/ad-hoc). We also provided a detailed description with each answer so that respondents could pick the closest match according to the description even if they didn’t know the exact name of the production methodology. The results were shocking.

Figure 9. Production methodology vs game outcome score.

Here's a more detailed breakdown showing the mean and standard deviation for each category, along with the number of responses in each:

| Production methodology | Average composite score | Standard deviation | Number of responses |
|---|---|---|---|
| Unknown | 50.6 | 17.4 | 7 |
| Waterfall | 55.4 | 17.9 | 53 |
| Agile | 59.1 | 19.4 | 94 |
| Agile using Scrum | 59.7 | 16.9 | 75 |
| Other / Ad-hoc | 57.6 | 17.6 | 44 |

What’s remarkable is just how tiny these differences are. They almost don’t even exist.

Furthermore, a Kruskal-Wallis H test indicates a very high p-value of 0.46 for this category, meaning that we truly can’t infer any relationship between production methodology and game outcome. Further testing of the production methodology against each of the four game project outcome factors individually gives identical results.
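To get a feel for how small these differences are, the published means, standard deviations, and response counts are enough to compute a one-way ANOVA F ratio and the share of variance explained (eta-squared). This is a parametric approximation from rounded summary figures, not the Kruskal-Wallis test we ran on the raw data, and the function name `anova_from_summary` is an assumption. The engine table from earlier in this article is included for comparison.

```python
def anova_from_summary(groups):
    """One-way ANOVA F and eta-squared from (n, mean, sd) tuples."""
    total_n = sum(n for n, _, _ in groups)
    grand_mean = sum(n * m for n, m, _ in groups) / total_n
    ss_between = sum(n * (m - grand_mean) ** 2 for n, m, _ in groups)
    ss_within = sum((n - 1) * sd ** 2 for n, _, sd in groups)
    df_between = len(groups) - 1
    df_within = total_n - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    eta_squared = ss_between / (ss_between + ss_within)
    return f_stat, eta_squared


# (responses, mean score, standard deviation) as reported in the two tables.
methodology = [(7, 50.6, 17.4), (53, 55.4, 17.9), (94, 59.1, 19.4),
               (75, 59.7, 16.9), (44, 57.6, 17.6)]
engine = [(41, 53.3, 18.3), (58, 64.8, 15.8), (46, 60.7, 19.4),
          (113, 55.6, 17.5), (15, 55.5, 19.5)]

for name, table in (("methodology", methodology), ("engine", engine)):
    f_stat, eta_squared = anova_from_summary(table)
    print(f"{name}: F = {f_stat:.2f}, eta^2 = {eta_squared:.3f}")
```

An F ratio below 1 for production methodology means the group averages differ by less than the scatter within each group would produce by chance alone, with roughly 1% of the variance in outcome scores attributable to methodology. The engine question comes out several times higher on both measures.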

Given that production methodologies seem to be a game development holy grail for some, one would expect to see major differences, and that Scrum in particular would be far out in the lead. But these differences are tiny, with a huge amount of variation in each category, and the correlations between the production methodology and the score have a p-value too high for us to reject the assumption that the data is independent. Scrum, agile, and “other” in particular are essentially indistinguishable from one another. “Unknown” is far higher than one would expect, while “Other/ad-hoc” is also remarkably high, indicating that there are effective production methodologies available that aren’t on our list (interestingly, we asked those in the “other” category for more detail, and the Cerny method was listed as the production methodology for the top-scoring game project in that category).

Also, unlike our question regarding game engines, we can't simply write this off as some methodologies being more appropriate for certain kinds of teams. Production methodologies are generally intended to be universally useful, and our results show no meaningful correlations between the methodology and the game genre, team size, experience level, or any other factors.

This raises the question: where’s the payoff?

We’ve seen several significant correlations in this article, and we will describe many more throughout our study. Articles 2 and 3 in particular will illustrate many remarkable correlations between many different cultural factors and game outcomes, with more than 85% of our questions showing a statistically significant correlation.

So it’s very clear that where there were significant drivers of project outcomes, they stood out very clearly. Our results were not shy. And if the specific production methodology a team uses is really vitally important, we would expect that it absolutely should have shown up in the outcome correlations as well.

But it’s simply not there.

It seems that in spite of all the attention paid to the subject, the particular type of production methodology a team uses is not terribly important, and it is not a significant driver of outcomes. Even the much-maligned “Waterfall” approach can apparently be made to work well.

Our third article will detail a number of additional questions we asked around production that give some hints as to what aspects of production actually impact project outcomes regardless of the specific methodology the team uses -- although these correlations are still significantly weaker on average than any of our other categories concerning culture.

Conclusions

We are beginning to crack open the differences that separate the best teams from the rest.

We have seen that four factors – total project duration, team experience level, financial incentives based on individual performance, and re-use of an existing game engine from a similar game – have clear correlations with game project outcomes.

Our study found several surprises, including a complete lack of any correlation with outcomes for factors that one would assume should have a large impact, such as team size, game genre, target platforms, the production methodology the team used, or any additional financial incentives the team was offered beyond individual performance compensation.

In the second article in the series, we discuss the three team effectiveness models that inspired our study in detail and illustrate their correlations with the aggregate outcome score and each of the individual outcome questions. We see far stronger correlations than anything presented in this article.

Following that, the third article explores additional findings around many other factors specific to game development, including technology risk management, design risk management, crunch / overtime, team stability, project planning, communication, outsourcing, respect, collaboration / helpfulness, team focus, and organizational perceptions of failure. We also provide a self-reflection tool that teams can use for postmortems and self-analysis.

Finally, our fourth article brings our data to bear on the controversial issue of crunch and draws unambiguous conclusions, and our fifth article summarizes our results.

The Game Outcomes Project team would like to thank the hundreds of current and former game developers who made this study possible through their participation in the survey. We would also like to thank IGDA Production SIG members Clinton Keith and Chuck Hoover for their assistance with question design; Kate Edwards, Tristin Hightower, and the IGDA for assistance with promotion; and Christian Nutt and the Gamasutra editorial team for their assistance in promoting the survey.

For announcements regarding our project, follow us on Twitter at @GameOutcomes
