
The audio engineering for Finding Monsters Adventure

Have you ever wondered what a monster sounds like behind the code? Learn from our engineer, Vic Hasselmann, how the audio engineering of Finding Monsters for mobile and VR was developed!

*This article describes our experience engineering the audio of Finding Monsters. All opinions expressed here are mine and do not necessarily reflect those of the studio.

After the release of Jake & Tess' Finding Monsters Adventure on both smartphone and Gear VR, I would like to share what we learned while engineering the audio for the two versions and give some insight into the workflow. This article covers how our audio system works, what the role of a technical sound designer is, and the differences between the smartphone and VR worlds.

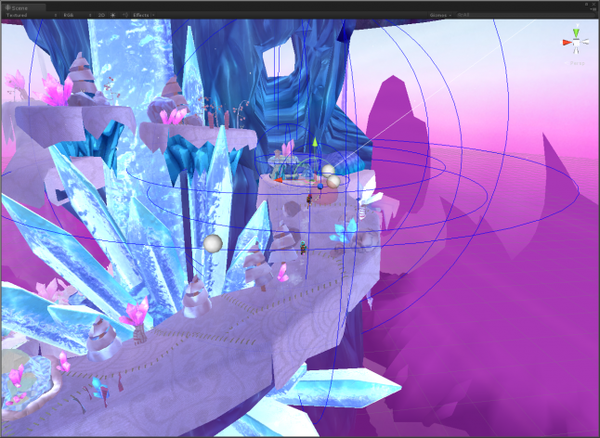

Finding Monsters Adventure - Mobile Trailer

I played the role of Technical Sound Designer on Jake & Tess' Finding Monsters Adventure, responsible for implementing all the audio features designed by our game design and sound design teams. Although I had music knowledge and expertise, it was my first time doing this job, which made it really interesting.

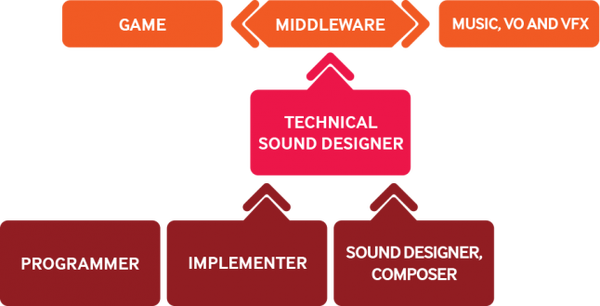

First off, let me present the concept of a Technical Sound Designer: a sound designer who also implements the sounds in the game using middleware. In practice, the technical sound designer designs and implements the playback systems and makes that work an integral part of the sound design process. Alternative names for this role include Music Implementer, Adaptive Composer, Music Integrator or even Music Designer. The role of the Technical Sound Designer is similar to this diagram:

Now that we know the importance of this role in our project, let’s talk about how the engineering works and how we designed an immersive experience for the player with the highest quality possible. I will cover the important topics related to this project, along with some of the problems we found and their solutions.

The engineering behind the audio

FMOD Studio is designed to be used in conjunction with the FMOD Studio Programmer’s API. The Programmer’s API allows an audio programmer to implement content made in FMOD Studio in a software project and control its playback through code. In Jake & Tess' Finding Monsters Adventure we used FMOD as middleware, integrated through its Unity plug-in.

FMOD uses the concepts of listeners, events and emitters. The player’s ears in a game are referred to as the listener, and this is our first important term. The listener is often, but not always, positioned with the game camera, much like a microphone, and it hears all the sound produced in the game world. In our game, the game camera represents the player’s ears.

The sounds that are produced in the game world are created by invisible objects called emitters. A 3D model in the game makes no sound on its own. Thus, an emitter with the appropriate sounds is required to be attached to the game object to produce sound. In summary, emitters make sounds and listeners hear them.

When I joined the project, the audio system was fragile and had to be improved. The first problem was finding a way to play multiple sounds dynamically. In older versions of the game, many emitter instances were referenced directly from game objects, which made adding new sounds really messy. The solution I found was to create a scriptable object containing all the sounds of the game, with a constant string reference for each sound asset name. When a new sound needs to be played, the audio system that handles the entire library receives a request from the source game object and returns a new emitter with the given sound attached and ready to play. It’s important to know that we have three types of audio playing in our game: music, effects and ambient sounds. Each can be either 2D or 3D.
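The request flow described above can be sketched roughly as follows. This is a minimal illustration in Python rather than the game's actual Unity code: `SoundAsset`, `Emitter`, `AudioSystem`, `request_emitter` and the two sound names are all hypothetical, and no real FMOD calls are made.

```python
from dataclasses import dataclass

# Hypothetical constant string references, one per sound asset name.
MONSTER_ROAR = "monster_roar"
FOREST_AMBIENCE = "forest_ambience"


@dataclass
class SoundAsset:
    name: str
    category: str  # "music", "effect" or "ambient"
    is_3d: bool


@dataclass
class Emitter:
    asset: SoundAsset
    owner: str          # identifier of the game object that asked for the sound
    playing: bool = False

    def play(self) -> None:
        # Stand-in for starting playback through the middleware.
        self.playing = True


class AudioSystem:
    """Owns the whole sound library and hands out emitters on request."""

    def __init__(self, library):
        self._library = {asset.name: asset for asset in library}

    def request_emitter(self, sound_name: str, owner: str) -> Emitter:
        if sound_name not in self._library:
            raise KeyError(f"unknown sound asset: {sound_name}")
        return Emitter(asset=self._library[sound_name], owner=owner)


audio = AudioSystem([
    SoundAsset(MONSTER_ROAR, "effect", is_3d=True),
    SoundAsset(FOREST_AMBIENCE, "ambient", is_3d=False),
])
```

A game object would then call `audio.request_emitter(MONSTER_ROAR, owner="monster_01")` and play the returned emitter, without holding its own list of emitter references.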

The 2D sound is simple. You just need to add the FMOD Emitter Component and invoke the play function. Within an update check you can easily destroy the event after it finishes. The sound will play with the default attenuation and can be manipulated within your different playback states.

The 3D sound works in a different way. It needs the emitter to be already attached to a game object inside the scene, and the given sound asset has to be 3D as well. Why is that? It’s because the 3D sound event gets attenuated based on the distance from the emitter to the listener.
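As a rough sketch of that update check, the following Python snippet keeps a list of active one-shot sounds and drops each one once it has finished. The names `OneShot2D` and `OneShotManager` are hypothetical, and playback is simulated with a simple countdown instead of querying the middleware for the real playback state.

```python
class OneShot2D:
    """A simulated 2D sound that finishes after `duration` seconds."""

    def __init__(self, name: str, duration: float):
        self.name = name
        self.remaining = duration

    def update(self, dt: float) -> None:
        self.remaining -= dt

    @property
    def finished(self) -> bool:
        return self.remaining <= 0.0


class OneShotManager:
    def __init__(self):
        self.active = []

    def play(self, name: str, duration: float) -> OneShot2D:
        shot = OneShot2D(name, duration)
        self.active.append(shot)
        return shot

    def update(self, dt: float) -> None:
        # Advance every active sound, then destroy the ones that are done.
        for shot in self.active:
            shot.update(dt)
        self.active = [shot for shot in self.active if not shot.finished]
```

Calling `update` once per frame with the frame's delta time keeps the list free of finished events.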

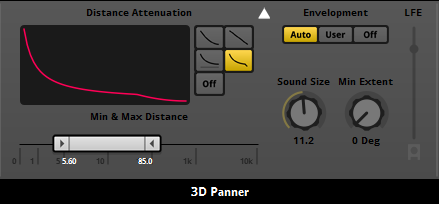

The minimum and max distance

The minimum distance refers to how far a sound will travel before it starts to attenuate. In the real world, all sounds drop off as the sound waves travel through the air and lose power. In a game world, the point of sound emission is essentially a tiny point in space, so having the sound always drop off instantly might not reproduce the desired effect. The sound is produced by the emitter and travels at maximum amplitude (that is, volume) until it reaches the defined minimum distance. From there its amplitude drops off until it reaches the defined maximum, or max, distance.

When the sound reaches the defined max distance it stops attenuating. That means whatever volume it is when it reaches the max distance will be maintained from that distance outwards. When using an inverse drop off, we will set the max distance far enough away from the sound source.
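To make the two distances concrete, here is a small sketch of a linear drop-off between the minimum and max distance. It is a simplified model written for illustration, not FMOD's internal formula, and the function name `linear_gain` is an assumption.

```python
def linear_gain(distance: float, min_distance: float, max_distance: float) -> float:
    """Gain in [0, 1] for a linear drop-off between min and max distance."""
    if max_distance <= min_distance:
        raise ValueError("max_distance must be greater than min_distance")
    if distance <= min_distance:
        return 1.0  # full amplitude inside the minimum distance
    if distance >= max_distance:
        return 0.0  # attenuation stops here; the level no longer changes
    return (max_distance - distance) / (max_distance - min_distance)
```

With a minimum distance of 1 and a max distance of 21, a listener at distance 11 is halfway along the drop-off and hears the sound at half gain.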

Distance Attenuation

Depending on the nature of the drop-off curve, the sound may have naturally faded to inaudible by the time it reaches the max distance. If, however, you set the max distance to a value where the sound is still audible, it will stay at that level no matter how far away you travel. FMOD Studio allows us to define the most suitable drop-off curve for our sound in a 3D space. The distance attenuation can also be switched off, which results in the sound having no drop-off curve. There are four attenuation curve options in the 3D Panner: Linear Squared, Linear, Inverse and Inverse Tapered.
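The effect of a max distance that is too short can be sketched with a simplified inverse model, where the gain is the minimum distance divided by the clamped distance. This is an assumption made for illustration and may not match FMOD Studio's exact curve; `inverse_gain` is a hypothetical name.

```python
def inverse_gain(distance: float, min_distance: float, max_distance: float) -> float:
    """Simplified inverse drop-off: gain = min_distance / clamped distance."""
    if min_distance <= 0 or max_distance < min_distance:
        raise ValueError("require 0 < min_distance <= max_distance")
    # Beyond the max distance the sound stops attenuating, so clamp there.
    clamped = min(max(distance, min_distance), max_distance)
    return min_distance / clamped
```

In this model, with a minimum distance of 1 and a max distance of 20, the gain at distance 20 is 0.05 and stays at 0.05 at distance 1000, which is the "still audible from anywhere" situation the paragraph warns about.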

Designing the immersive experience

Even in the early stages, our teams cared about the music style of the game. Because of that, the sound and music clearly reflect the visual art style of Jake & Tess' Finding Monsters Adventure. Many ambient sound pieces fit together in harmony to describe the experience you are in. Wind, birds, waterfalls and snowstorm effects are everywhere, surrounding the magical world.

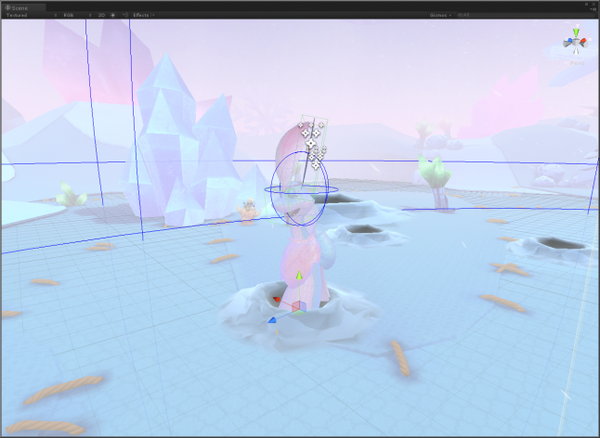

Designing an immersive 3D perception on a smartphone is tricky. We always position the audio listener to follow the camera during gameplay to create the impression of reflections, occlusions and realistic spatial positioning in the environment.

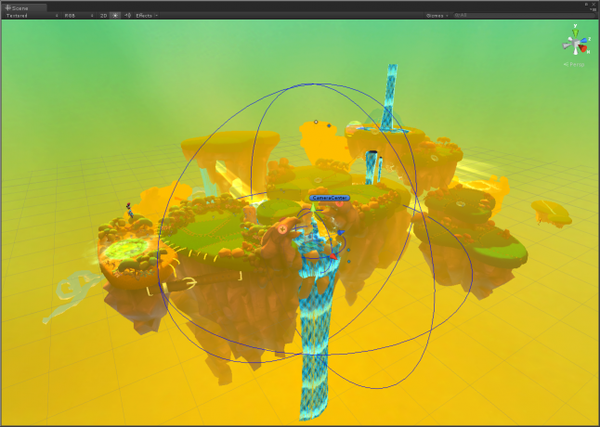

In VR, people are more attuned to how sounds feel inside the environment. We cared about creating immersion with a sense of placement in the world. Here the 3D perception works simply: almost all of the environmental and ambience sounds in Jake & Tess' Finding Monsters Adventure are 3D. Unlike on smartphone, we do not need to move the audio listener to keep it in a certain relation to the camera position, because the sounds are always repositioned as you rotate your head, which gives a sense of direction.

Cutting the rags

Game development is such an iterative process and sound design is no different. It was a huge benefit to have a programmer dedicated almost full time to support the audio even in early stages, to understand the nature of the overall experience and exactly what would be required to achieve the sound we were aiming for.
