[Wondering what the landscape for stereoscopic 3D games looks like? This in-depth article from Darkworks SA co-founder Guillaume Gouraud examines platforms, display technologies and middleware, to offer a look at the landscape for developers planning to implement 3D into their games.]

Whether you love it or hate it, stereoscopic 3D is here, and with it come new challenges that will certainly have developers and publishers questioning whether they have the technological muscle and understanding to make the leap into this new arena.

To help, we'll cover how stereoscopic 3D works, while also examining various technological solutions making true depth possible. And with the new surge of technological advancements over the past years, there is indeed plenty to explore.

In 2006, a new wave of consoles introduced High Definition content within the video game industry. While this shift proved to be a delicate subject for some, the majority of the console industry embraced HD as a hot new technology that promised to advance the gaming experience with stunning visuals and a never-before-seen level of immersion.

Several companies did well throughout this transition, while others were not so fortunate. All the same, HD is now entrenched within the industry.

Fast forward to the year 2011 -- now 3D is poised to secure its position as the future of interactive entertainment. As evidence of this evolution, electronic titans Sony, Microsoft, and Nintendo have all opened their systems to be 3D capable.

Sony first issued an update in 2010 to the PlayStation 3's firmware, permitting it to play new 3D-enabled Blu-rays and games. Microsoft too made the jump with the Xbox 360, putting the responsibility on developers to incorporate 3D into their titles.

While Microsoft is not pushing this feature just yet, the system equals the 3D quality already available on the PlayStation 3. Nintendo also made its move into the third dimension by developing the world's first handheld 3D gaming console, the 3DS.

To understand the various elements involved in creating 3D-enabled games, it's necessary to first examine how 3D images are processed by the end user. Furthermore, we will also take a look at the fundamental technologies used to display these images.

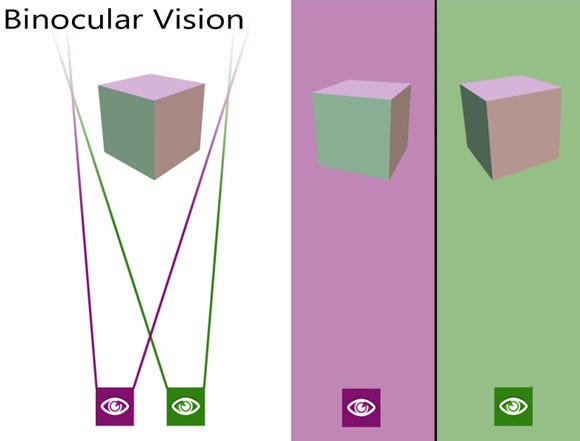

The process that enables the viewing of three-dimensional objects is known as Binocular Single Vision. This allows us to distinguish between two and three-dimensional images based on the fact that we have two distinct eyes that provide slightly different perspectives of the same object.

Because our eyes are positioned a few inches apart, each eye sees a slightly different image of the same object. This difference is called parallax. Our brain does not perceive the two images as double; instead, it fuses them through a process known as stereopsis to form a single percept. This allows us to distinguish not only the length, width and height of objects, but also their depth and the distance between them.

To exhibit true depth using display mechanisms, there is one universal law that all 3D technologies must have in common. That is, using the same guiding principles as our visual system, 3D displays have to rely on sending slightly different perspectives of the same image to each eye. While there are variations on how this is accomplished, ultimately the technologies can be divided into three categories. Those are passive, active shutter and auto-stereoscopic display. All are explained in detail below.

Filtered lenses are often referred to as "passive glasses" as they do not require the use of batteries nor do they need to be electronically linked to the display mechanism, which active systems require. Instead, they use simple optical filters to selectively sort the right and left images to the correct eye.

Anaglyph visualization was the earliest form of passive 3D and was developed more than a hundred years ago by Wilhelm Rollmann. The workings behind this technique are fairly straightforward. Using strongly different, almost chromatically opposite colors, the left and right images must be dyed and printed on top of one another via the same, two-dimensional medium such as a plain sheet of paper. The viewer can then decode the fused image by wearing a set of glasses that contain corresponding colored filters, sending a precise image to the correct eye without interference.
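As a rough illustration of the principle, the sketch below builds a red-cyan anaglyph from two tiny RGB images stored as nested lists. The helper name `make_anaglyph` and the red/cyan channel split are assumptions chosen for the example; this is not a description of Rollmann's original process or of any commercial filter design.

```python
# Hypothetical helper: combine left/right RGB images into a red-cyan anaglyph.
# Images are lists of rows; each pixel is an (r, g, b) tuple.

def make_anaglyph(left, right):
    """Take the red channel from the left image and green/blue from the right."""
    if len(left) != len(right) or any(len(a) != len(b) for a, b in zip(left, right)):
        raise ValueError("left and right images must have the same dimensions")
    return [
        [(l_px[0], r_px[1], r_px[2]) for l_px, r_px in zip(l_row, r_row)]
        for l_row, r_row in zip(left, right)
    ]


left = [[(200, 10, 10), (0, 0, 0)]]
right = [[(0, 0, 0), (10, 150, 220)]]
anaglyph = make_anaglyph(left, right)
```

Viewed through a red filter over the left eye and a cyan filter over the right, each eye would receive only the channels that came from its own source image.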

A company called TriOviz has recently commercialized glasses they call INFICOLOR that use a part of the technology that enabled these sorts of anaglyph 3D glasses, but with a modern innovative twist -- the use of much more complex filters that allow wearers to perceive natural colors with great viewing comfort. INFICOLOR glasses allow regular 2D HDTVs to render 3D images; they work with games that have been pre-treated with TriOviz's technology.

More recently, a newer version of passive technology has emerged using polarized lenses. Many of you are most likely familiar with this technique as it's commonly used by RealD and IMAX 3D to display movies in true 3D. However, as of late, TV manufacturers such as Vizio and LG have also decided to incorporate this system into their new lines of 3D TVs.

The technology works by interlacing the left and right images together using a unique screen made of two emitting filters on top of one another. Each image is displayed using a property of light called polarization. This allows the passive-polarized glasses to then selectively filter out light between two images using the corresponding polarized films. Therefore, each eyepiece must be polarized in a different direction, allowing separate images to be delivered to each eye. In this manner a 3D effect is achieved.
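A minimal sketch of the interlacing step, assuming the display alternates polarization on every other row and that even rows carry the left image (which eye gets which rows is an assumption here, as is the helper name `interleave_rows`):

```python
# Hypothetical helper: build a row-interleaved frame for a passive polarized display.
# Each image is a list of rows.

def interleave_rows(left, right):
    """Even rows come from the left image, odd rows from the right image."""
    if len(left) != len(right):
        raise ValueError("left and right images must have the same height")
    return [left[y] if y % 2 == 0 else right[y] for y in range(len(left))]


left = [["L0"], ["L1"], ["L2"], ["L3"]]
right = [["R0"], ["R1"], ["R2"], ["R3"]]
frame = interleave_rows(left, right)
```

Under this scheme each eye receives only half of the panel's rows, so vertical resolution per eye is halved.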

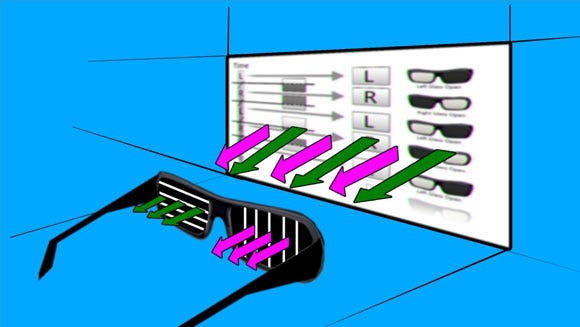

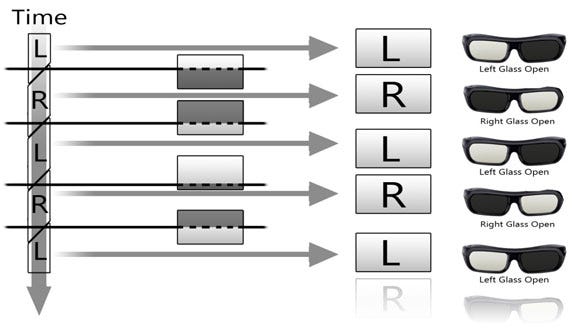

While polarization is largely taking on duties in cinema, active shutter is the primary technology used in home entertainment systems. The key reason is that the technology requires very little modification to work with HDTVs, making them easy to develop into 3D sets and an ideal choice for commercial TV manufacturers.

The display mechanism works by taking advantage of the high refresh rates (120Hz and above) available in current LED and plasma TVs. The TV alternates between the left and right high-definition pictures, showing each eye's image at 60Hz or more, in order to achieve temporal multiplexing.

Shutter glasses are then required to be synced with the TV in order to actively filter the corresponding frames so each eye only receives the intended image, thus simulating a 3D effect.
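To make the timing concrete, here is a small sketch of temporal multiplexing, assuming the display simply alternates eyes on every refresh and starts with the left eye (both are assumptions for illustration, as are the function names):

```python
# Hypothetical model of an active shutter display alternating eyes each refresh.

def shutter_schedule(refresh_hz, num_frames):
    """Return (time_ms, eye) pairs; the glasses open the matching eye at each time."""
    period_ms = 1000.0 / refresh_hz
    return [(i * period_ms, "L" if i % 2 == 0 else "R") for i in range(num_frames)]


def per_eye_rate(refresh_hz):
    """Each eye sees every other refresh, so it gets half the panel rate."""
    return refresh_hz / 2


schedule = shutter_schedule(120, 4)
```

On a 120Hz panel this gives each eye a new image roughly every 16.7 milliseconds, which is why 120Hz is the practical floor for this approach.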

The third and final technology is auto-stereoscopic display, most commonly implemented with a parallax barrier. This display system differs from the two previously discussed technologies in that it does not require users to wear glasses when viewing three-dimensional images.

Instead, this technology relies on a special optical filter that divides the displayed image and directs light to each eye, so that the viewer perceives separate left and right images, thus producing the illusion of depth. This works well for fixed-distance viewing, typically in portable devices such as Nintendo's 3DS.
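The idea can be sketched for a single scanline, assuming the panel alternates left and right image columns and the barrier hides the other eye's columns from each eye. The column assignment and the function names are assumptions for illustration, not a description of the 3DS hardware.

```python
# Hypothetical single-scanline model of a parallax barrier panel.

def interleave_columns(left_row, right_row):
    """Even columns carry the left image, odd columns carry the right image."""
    if len(left_row) != len(right_row):
        raise ValueError("rows must have the same width")
    return [left_row[x] if x % 2 == 0 else right_row[x] for x in range(len(left_row))]


def visible_columns(panel_row, eye):
    """Columns the barrier lets a given eye see from the ideal viewing position."""
    start = 0 if eye == "left" else 1
    return panel_row[start::2]


panel = interleave_columns(["L0", "L1", "L2", "L3"], ["R0", "R1", "R2", "R3"])
```

The model only holds when the viewer sits where the barrier geometry expects, which is consistent with the technique suiting handheld devices.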

To display 3D, you need to output two images, one for each eye, thus doubling the amount of data transferred from the console video chip to the TV. To begin, it is necessary to have a TV capable of handling 3D, and a console capable of outputting this amount of data. Thanks to the HDMI standards, and more precisely HDMI 1.4a, there is a specific format capable of handling 3D called "3D over HDMI," which allows up to 720p for each eye.

To output 3D over HDMI, the game engine has to create an HDMI 1.4a compatible frame, which has a specific resolution and format; the HDMI protocol and the 3DTV do the rest. Currently, only the PS3 and Xbox 360 have announced support for the HDMI 1.4a standard (for Blu-ray playback on PS3, for example).

Other consoles need to rely on previous versions of HDMI standards, using other 3D video formats such as side-by-side or top-bottom, where the resolution is halved to include two images in one frame, or otherwise depend on new stereoscopic display mechanisms, like the 3DS with its auto-stereoscopic screen.
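The difference between these frame layouts comes down to simple arithmetic, sketched below. The function names are hypothetical, and the 30-line gap between the two eye images in the frame-packed 720p example is the figure commonly cited for that mode; treat it as an assumption and check it against the HDMI specification before relying on it.

```python
# Hypothetical helpers comparing stereo frame layouts.

def frame_packed_size(eye_width, eye_height, gap_lines):
    """Full-resolution images stacked vertically with a blank gap between them."""
    return (eye_width, 2 * eye_height + gap_lines)


def per_eye_resolution(frame_width, frame_height, layout):
    """Resolution left for each eye when both images share one ordinary frame."""
    if layout == "side-by-side":
        return (frame_width // 2, frame_height)
    if layout == "top-bottom":
        return (frame_width, frame_height // 2)
    raise ValueError("unknown layout: " + layout)


packed = frame_packed_size(1280, 720, 30)                  # assumed 30-line gap
sbs = per_eye_resolution(1280, 720, "side-by-side")
tb = per_eye_resolution(1280, 720, "top-bottom")
```

Frame packing keeps the full 720p image per eye at the cost of a taller frame, while the two fallback layouts fit in a standard frame by giving up half the horizontal or vertical resolution.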

When constructing three-dimensional virtual worlds, there are two primary 3D rendering techniques employed in video games: 2D + depth rendering, and dual rendered 3D.

2D + depth rendering creates the 3D effect in games by sampling the geometry in the scene to obtain the depth-map, and then using it to generate a second point of view from the regular 2D color image. This technique renders the scene for the left eye, and then creates the image for the right eye using a per-pixel displacement based on the depth map -- making the results geometrically accurate.
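The per-pixel displacement can be sketched on a single scanline. The version below is a simplified illustration under stated assumptions: depth is normalized so that 0.0 is nearest and 1.0 is farthest, near pixels shift left by up to `max_shift` pixels, and holes are filled from the neighbouring background pixel. It is not the implementation used by any particular middleware.

```python
# Hypothetical scanline reprojection for 2D + depth rendering.

def reproject_row(colors, depths, max_shift):
    """Build a second-eye scanline by shifting each pixel according to its depth."""
    width = len(colors)
    out = [None] * width
    out_depth = [float("inf")] * width
    for x in range(width):
        shift = round(max_shift * (1.0 - depths[x]))  # near pixels move more
        tx = x - shift
        if 0 <= tx < width and depths[x] < out_depth[tx]:
            out[tx] = colors[x]        # nearest surface wins where pixels overlap
            out_depth[tx] = depths[x]
    # Fill revealed holes from the right neighbour (the background side here),
    for x in range(width - 2, -1, -1):
        if out[x] is None:
            out[x] = out[x + 1]
    # then fill anything still empty at the right edge from the left.
    for x in range(1, width):
        if out[x] is None:
            out[x] = out[x - 1]
    return out


colors = ["a", "b", "C", "D", "e", "f"]       # "C" and "D" belong to a near object
depths = [1.0, 1.0, 0.0, 0.0, 1.0, 1.0]
right_eye_row = reproject_row(colors, depths, max_shift=1)
```

In this example the near object moves one pixel to the left, covers background pixel "b", and leaves a hole that is filled with background pixel "e". Such filled regions are guesses rather than rendered data.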

And because the effect is calculated at the end of the post-process chain from a disparity map, it can be entirely and dynamically tweaked. The main tweaking parameters are the overall effect strength, the amount of negative and positive parallax (how far objects appear in front of or behind the screen), how the depth budget is to be allocated, and finally where the focal point should be set.
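One way these parameters might interact is sketched below. The formula, the parameter names, and the simple clamp used to enforce the depth budget are all assumptions for illustration; real tools may allocate the budget with more elaborate remapping.

```python
# Hypothetical mapping from scene depth to on-screen parallax, in pixels.

def screen_parallax(depth, focal_depth, strength, max_negative, max_positive):
    """Negative values appear in front of the screen, positive values behind it."""
    raw = strength * (1.0 - focal_depth / depth)   # zero at the focal point
    return max(-max_negative, min(max_positive, raw))


at_focus = screen_parallax(10.0, 10.0, 20.0, 15.0, 15.0)
behind = screen_parallax(20.0, 10.0, 20.0, 15.0, 15.0)
in_front = screen_parallax(5.0, 10.0, 20.0, 15.0, 15.0)
```

An object at the focal depth lands exactly on the screen plane, an object twice as far away sits behind it, and a very close object is clamped to the negative parallax limit.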

The key advantage of the 2D + depth technique is the low impact that its integration has on production. It requires that the game render each frame only once, which keeps the performance cost low. Furthermore, many 2D + depth effects give artists the means to tweak parameters of the 3D effect itself in order to get the desired results. The only drawback is that 2D + depth has an upper limit on how much parallax can be created in a scene, but for gamers, even a slightly less intense 3D effect has been a welcome experience.

Several recent Xbox 360 and PlayStation 3 games have used 2D + depth rendering to great effect. These games have used either technology from Darkworks (the TriOviz for Games SDK, which allows game makers to produce games that render 3D images on 3D TVs as well as on 2D HDTVs via INFICOLOR glasses) or technology from Crytek.

In comparison, dual rendering creates the strongest 3D effect, and is used for movies as well as in video games. For movies, to create stereoscopic 3D images using the effect of parallax, film makers need to capture two images shot from slightly different angles. This means 3D-compatible cameras are needed in order to record the two images simultaneously. This allows one image for each eye to be projected onto the same screen to create a 3D effect.

In the case of video games, to construct a three-dimensional virtual world, game makers need to position characters, buildings and other objects in a manner that simulates a miniature version of the real world. In doing this, they can position two cameras to capture slightly dissimilar images of the same scene, just as the right and left eyes would do.
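A minimal sketch of the two-camera setup, reduced to the horizontal axis. It assumes a simple pinhole projection with parallel cameras and a horizontal image shift that sets the convergence distance. The function and parameter names are hypothetical, and a real engine would do this with full projection matrices.

```python
# Hypothetical 1D model of dual-camera stereo projection.

def project_x(point_x, point_z, camera_x, focal_length, convergence):
    """Pinhole projection from a camera at camera_x, plus a horizontal image
    shift so points at the convergence distance match in both eyes."""
    perspective = focal_length * (point_x - camera_x) / point_z
    image_shift = focal_length * camera_x / convergence
    return perspective + image_shift


def stereo_projection(point_x, point_z, interaxial, focal_length, convergence):
    """Return the projected x position of a point for the left and right cameras."""
    half = interaxial / 2.0
    left = project_x(point_x, point_z, -half, focal_length, convergence)
    right = project_x(point_x, point_z, +half, focal_length, convergence)
    return left, right


on_screen = stereo_projection(0.0, 10.0, 2.0, 1.0, 10.0)
far_point = stereo_projection(0.0, 20.0, 2.0, 1.0, 10.0)
near_point = stereo_projection(0.0, 5.0, 2.0, 1.0, 10.0)
```

The difference `right - left` is the parallax: zero at the convergence distance, positive for points beyond it, and negative for points closer to the cameras.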

However, while this solution may offer the best 3D effect, it carries run-time performance penalties, and working around them adds production complexity. As a result, dual rendered 3D effects on consoles face significant obstacles.

When a game renders twice as many images as the original game to create a 3D scene, the application runs roughly half as fast. To hold their target framerate, many games that dual render have to reduce the resolution of their geometry or accept a slower framerate.
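The arithmetic behind that trade-off can be sketched as follows, under the simplifying assumption that frame time scales linearly with the number of views rendered. Real games share some work between the two eyes, so this is a worst-case estimate, and the function name and `cost_scale` parameter are hypothetical.

```python
# Hypothetical worst-case estimate of dual rendering cost.

def dual_render_fps(mono_fps, cost_scale=2.0):
    """Framerate after multiplying the per-frame render time by cost_scale."""
    mono_frame_ms = 1000.0 / mono_fps
    return 1000.0 / (mono_frame_ms * cost_scale)


stereo_fps = dual_render_fps(60.0)
```

Under this assumption a game that runs at 60 frames per second in 2D drops to about 30 in dual rendered 3D unless it cuts per-view cost elsewhere.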

Practically speaking, a dual rendered 3D mode can work for some titles, but it can be disruptive to a game as well.

Many game makers have already taken advantage of these solutions, adding 2D + depth capabilities to games such as Thor: God of Thunder, Enslaved: Odyssey to the West and Batman Arkham Asylum: GOTY edition.

Titles using the dual rendering method include Sony first-party titles such as Killzone 3 and Gran Turismo, as well as third-party games such as Mortal Kombat and Call of Duty: Modern Warfare 2. Currently there are more than 30 3D-enabled titles available for the PlayStation 3 alone, with many more slated for release in the next year.

Industry analyst Scott Steinberg anticipates this growth will continue into the coming years.

"From a game maker's standpoint, 3D is here, it's real and it's an affordable way to bring an entirely new dimension to interactive entertainment, with top franchises from Mario Kart to Mortal Kombat increasingly making the jump," he says. "Buoyed by the launch of ever-more-capable and reasonably-priced 3D television sets, tablet PCs, smartphones and leisure time devices, it's already become a watchword for industry leaders from Electronic Arts to Warner Bros Interactive Entertainment.

"Backed by cutting-edge debuts such as Killzone 3 and Crysis 2, the 3D TV market is expected to top $100 billion -- including an audience of 40 million gamers -- by 2014 alone. To put things in perspective, Hollywood is anticipated to increase 3D film production by roughly 60 percent and box office receipts by $1.7 billion over 2010."

It's evident that three-dimensional media has seen a great deal of interest and growth over the last few years. And with more studios taking note of the new 3D trend, it's important that everyone involved in the process understands the principles behind 3D and how they can be used to create games that actually extend a sense of reality, resulting in experiences that consumers want.

Thanks to our experience with TriOviz for Games technology, we here at Darkworks know how to do that, and we want to make sure others know how to do it as well. With this article, our aim is to educate game makers about how they can use technology to create high quality 3D experiences for end users, while also providing an inside look at the world of 3D. This feature merely scratches the surface of the topic, so in the next article we will go into further detail on the various platforms and the specifics of each technique.
