
Steel Hunters Dev Log: What Have I Done [Literally & Figuratively]

Mostly a development update on my indie game (Steel Hunters), some highlights, some budget low-low-low-low-lights (and publishing? maybe?), environment creation/iteration, gameplay this and that, MICROMORTS/Banana Equivalent Dose, and syyyystems design!

This article was originally posted on the Joy Machine blog; maybe check it out too (it also has a table of contents because this really is poorly organized wordspew)!

I wanted to cover a variety of Steel Hunters updates across the board, so this piece covers a lot of ground; prepare for a large-cone blast of wordspew.

Completely Wonderful Recent Happenings

To kick off this topical and tonal hurricane of a Steel Hunters update, let’s start with the two biggest morale boosts in months:

Steel Hunters and I Are in PC Gamer

I’ve worked with Xalavier on another piece for PC Gamer in the past, but seeing the game in a big area in the spread was just joyous.

I’ve worked on games while I was at Stardock Entertainment and when working briefly on Crowfall… but seeing something I’m directly responsible for like this is just, well, pretty profoundly meaningful to me.

An iOS-Created Slideshow of Steel Hunters’ Progression

During my sprints it can be hard to calm down and get to sleep, so I tend to play with iOS image and video apps.

This time: a simple, mostly-chronological, progression of Steel Hunters from its tech art/vfx sandbox playground two years ago up to a few weeks ago.

It’s goofy, sure, but it’s such a strong reminder that progress (tremendous progress that I couldn’t have ever fully predicted) is being made. Even when it feels like I’m crawling to the “finish line”. As follows:

I still wish I could have used “Carry On Wayward Son” for the background track.

Joy Machine, Steel Hunters, and the Whole Money Thing

So far, Steel Hunters has been an enormous personal investment (and clearly not financially), but it’s one I’m making because I believe it demonstrates a sort of quality and style of game design that can make for a really great game experience. But, also, because the game’s entire production is based on:

An efficient programming/design ideology aimed at getting the absolute most out of any given system/feature.

A content workflow that gets the most out of whatever content can be created while, also, minimizing the cost of the content that needs to be created (especially when it comes to mech/behemoths, which could have otherwise been incredibly costly to create).

I actually have the crew at my first post-college gig, Stardock Entertainment, to thank for instilling, well, the habit of making as good a game as is possible while minimizing costs.

The requirements for an initial launch are completely viable for a small team to achieve, and the game’s architecture is designed to be easily updated (especially without any need for major patches/binary updates) and to support frequent timed events, new content, and so on (one of the very few great things I realized when working in mobile games).

But it Needs the Funding to Get There

So far, I’ve been the only person working on Steel Hunters; and that’s been, to say the very, very least: rough. I’m doing this because I have enough confidence in the quality of the initial publisher demo (which hopefully can be successfully conveyed in the short initial public trailer). And, aside from that, I’ve never had more fun working on a project and been this certain about how great and varied an experience it can be (whether played single-player or, especially, with up to three other players).

I’ve also constantly targeted a level of quality for a “prototype” (probably more akin to a green light demo at this point) that is representative of the game while, at the same time, being based on production-ready, quality code and design so that it can immediately “hit the ground running” with more people involved. It’d be fairly impossible to make the full game by myself, much less hit the intended play experience without some excellent team members who specialize in areas I can only skate by on.

I specifically wanted to position Steel Hunters as a AA-quality game and aim for as low a budget as possible because I really want to demonstrate that even a fast-paced third-person shooter (with heavily customizable ways for players to play how they want) can be made without a AAA budget. And all of this would be possible for the list of reasons I mentioned earlier.

I actually had some early (too early) negotiations with some publishers over a year ago that, I think, could have actually become a deal… But I wasn’t psyched with their view of what the game needed (especially given the drastically inflated cost associated with those needs), so I eventually decided to just be patient and spend as much time on whatever I can develop in an attempt to show off a game that resonated with a publisher that really gets the goals we (my COO and I) have.

The Public Trailer and Publisher Demo

NOW, all that said, the first trailer is — for real this time — not too far off. Not imminent, but very much in sight. Realistically, I could make a few days’ worth of changes and script a handful of sequences and it’d be pretty solid, but I’ve always wanted the trailer to be solely comprised of unscripted, in-game footage — even if it’s only the highlight reel from the sum total of footage taken. When I initially wanted to release a trailer (a year ago), I realized doing that would be falling into the trap of so many other projects: a trailer that is good but nowhere near representative of the game’s actual current state.

And, really, the trailer is a larger risk because it can’t be as carefully storyboarded and scripted to really make the most of its fairly short duration (~1:15m). But, it’d be silly to talk a big game about “how games should be developed and marketed” if I didn’t even follow the same principles myself.

But the trailer has a lot going for it: we integrated a character, of sorts, to convey the intended dry and dark humor I want to infuse the game with while the gameplay itself just shows a lot of mechs and explosions and so forth (to be voiced by a VO actress, Tamara Ryan, whose rough recordings matched the tone and cadence of the character on even an initial dry run). I also was able to get help from one of my favorite bands, The Felix Culpa, to provide the background music for the whole thing. So… I’m optimistic.

The publisher demo is intended to follow on the heels of the trailer. I wanted the two to be simultaneously available, but the prep for the two deliverables is so different that it was just unrealistic.

Steel Hunters Happenings

I just cut everything I wrote (which was probably a page and a half or two) because, really, I’ve already talked enough about how intense and rough the development of this project has been. And I’ve learned a lot of great lessons I’d love to relay at some point, but that’s for another post. So, here are the neat things going on:

A Completely Different Take on the Environment

I wasn’t planning on changing the landscape all that much from its second iteration, but one day I wanted to aim for a smoother, more sand-swept landscape with less rigid mesas, some small dunes/waves, and more open spaces. The prior iterations were nowhere near open enough to ever construct a believable space for where a city may have once been.

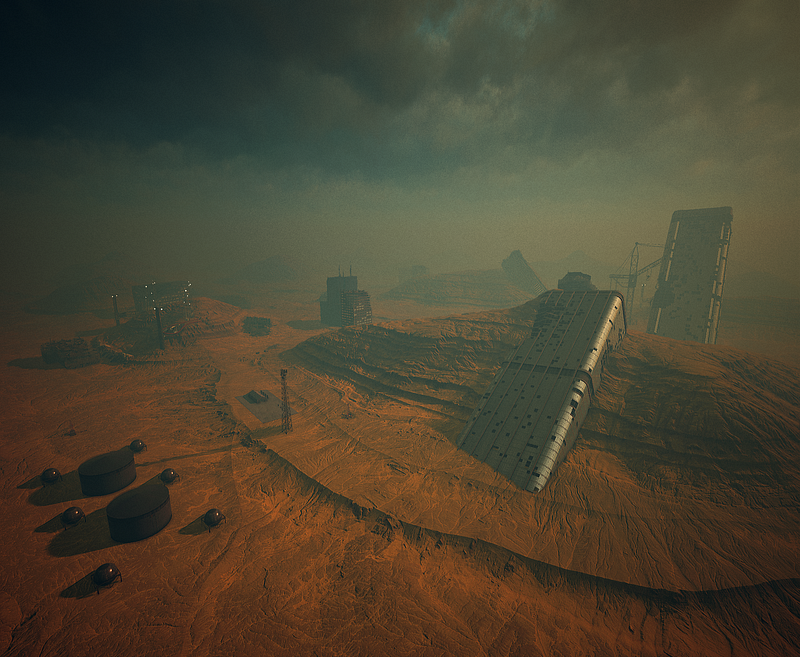

So as to not labor on the whole process too much in an overview article, here’s what iteration two looked like:

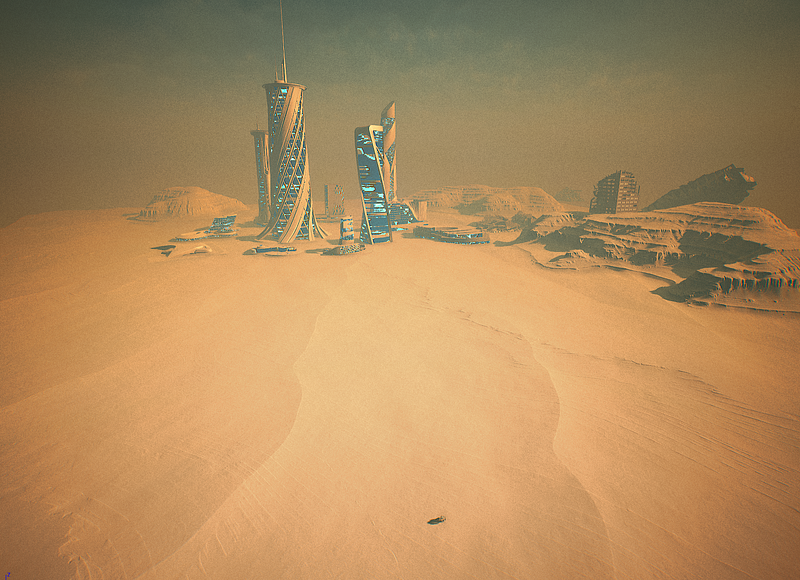

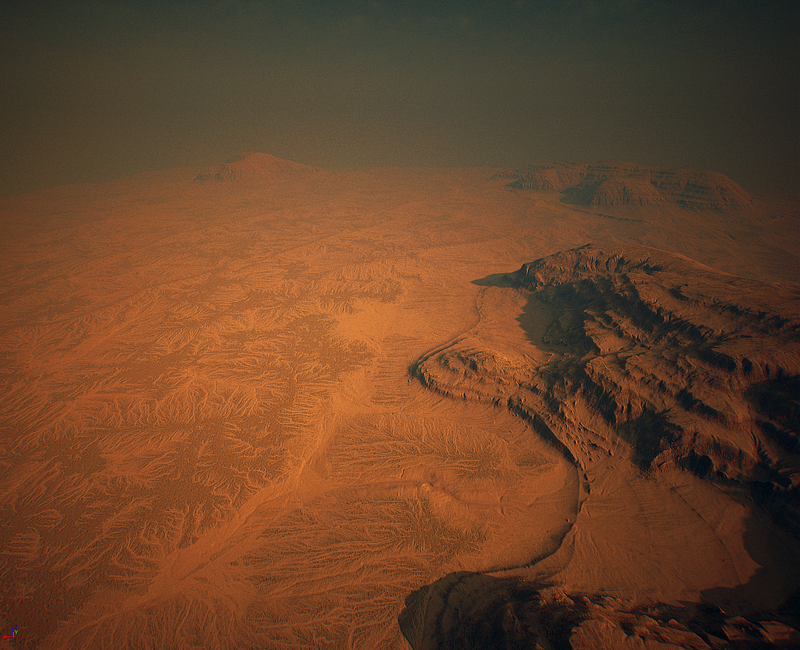

And here’s the lighting-only and end result of the current third/final iteration of the landscape (I didn’t do exact before/afters, so the lighting-only image has meshes from the second-iteration landscape, which I was using for a scale reference):

On the plus side: anyone with World Machine and GeoGlyph 2.0 can check out the two-pass (one of the biggest mistakes in the second iteration) node graph that generated it on the Joy Machine GitHub Repo.

With More Stuff

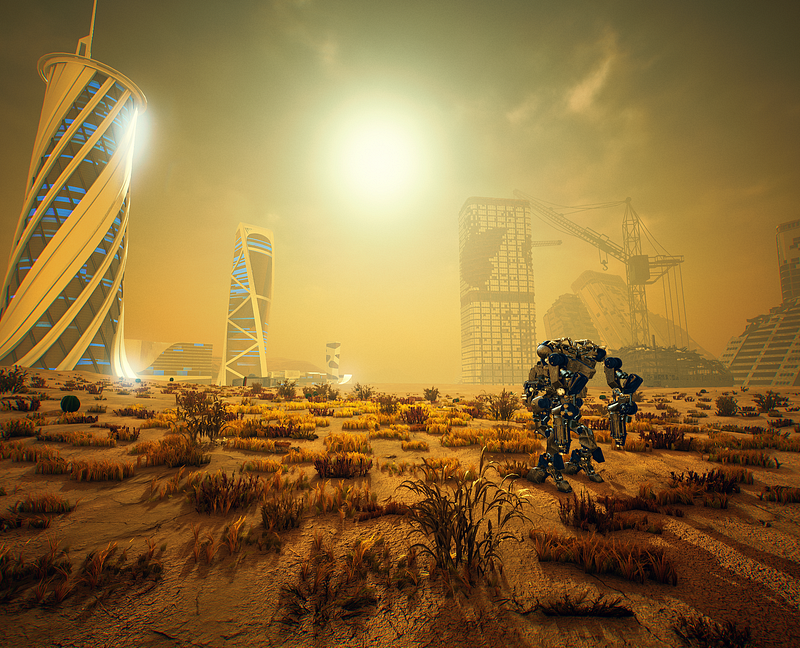

Here’s a more recent screen shot of the progress on the landscape. Of note is that it’s been a lot of high-level building/mesh layout work to establish the rough feel of each of the map’s disparate areas. You may notice that there’s still a fairly large space that hasn’t been filled out quite yet, but I just figured out what that was going to be a day or two ago:

The current aesthetic for the “AI” buildings (the very out-of-place clean, new, and shiny buildings) is very much a work-in-progress.

The Pieces Are Coming Together

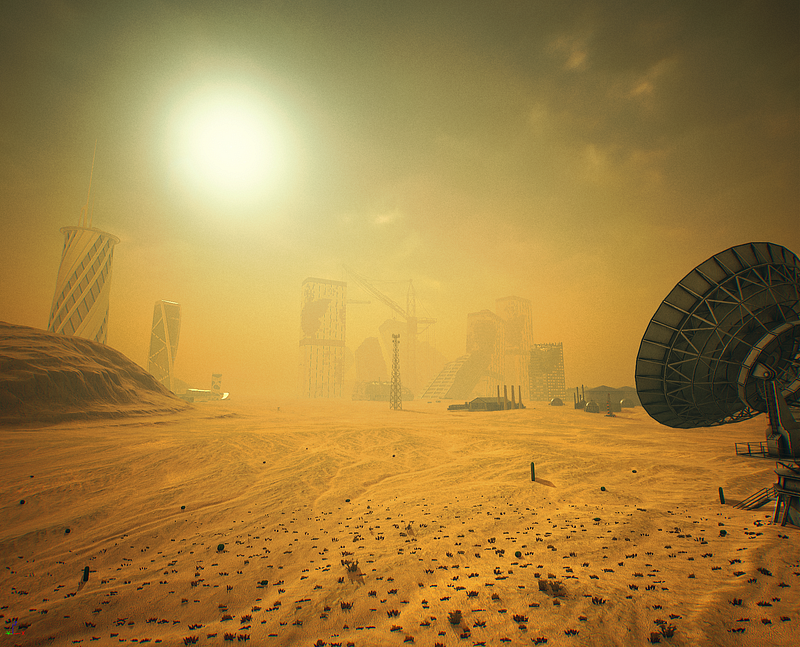

For a more grounded perspective, though, here’s the same camera composition some of you have come to know and love (or at least expect):

Gameplay

I have, much to the detriment of my own stress levels and completely-unfulfilled desired time doing design/vfx work, spent about 90% of my time over the last three months working exclusively on gameplay and backend code. So much C++. So much. But a run-down of the major systems currently in-place and just in need of tweaking to feel and function better:

A custom camera rig and effect stack (effect in this case being game/world effect such as a camera shake or FOV/positioning change).
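For anyone wondering what an “effect stack” means in practice, here is a minimal sketch in plain C++. It is not the Steel Hunters code and it uses no UE4 API; `CameraState`, `CameraEffect`, `FovKick`, and `CameraEffectStack` are hypothetical names made up for illustration. The idea it shows is just this: start from the rig’s base state every frame, let each active effect layer its change on top, and drop effects once they have finished.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <memory>
#include <utility>
#include <vector>

// Hypothetical camera state; a real rig would carry a full transform.
struct CameraState {
    float fovDegrees = 90.0f;
    float offsetZ = 0.0f;
};

// One entry in the stack: modifies the state for a limited duration.
class CameraEffect {
public:
    explicit CameraEffect(float duration) : duration_(duration) { assert(duration > 0.0f); }
    virtual ~CameraEffect() = default;

    // Advance the effect's clock and layer its contribution onto `state`.
    void tick(float dt, CameraState& state) {
        elapsed_ = std::min(elapsed_ + dt, duration_);
        apply(elapsed_ / duration_, state);
    }
    bool finished() const { return elapsed_ >= duration_; }

protected:
    // `t` runs from 0 (just started) to 1 (finished).
    virtual void apply(float t, CameraState& state) const = 0;

private:
    float duration_;
    float elapsed_ = 0.0f;
};

// Widens the FOV, fading linearly back to the base value.
class FovKick : public CameraEffect {
public:
    FovKick(float extraDegrees, float duration)
        : CameraEffect(duration), extraDegrees_(extraDegrees) {}

protected:
    void apply(float t, CameraState& state) const override {
        state.fovDegrees += extraDegrees_ * (1.0f - t);
    }

private:
    float extraDegrees_;
};

class CameraEffectStack {
public:
    void push(std::unique_ptr<CameraEffect> effect) { effects_.push_back(std::move(effect)); }

    // Apply every active effect to a copy of the base state, in push order,
    // then remove the effects that have run their course.
    CameraState evaluate(const CameraState& base, float dt) {
        CameraState result = base;
        for (auto& effect : effects_) effect->tick(dt, result);
        effects_.erase(std::remove_if(effects_.begin(), effects_.end(),
                           [](const std::unique_ptr<CameraEffect>& e) { return e->finished(); }),
                       effects_.end());
        return result;
    }
    std::size_t activeCount() const { return effects_.size(); }

private:
    std::vector<std::unique_ptr<CameraEffect>> effects_;
};
```

Because the base state is never mutated, effects cannot “leak” into the rig: once the stack is empty, `evaluate` hands back exactly what went in.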

The custom “physical movement component” that replaces the commonly-used “character movement component” which, if you’ve worked in UE4 or played prototypes/demos/some games, you’re very familiar with.

This was one of the most difficult development requirements of anything I’ve ever worked on (the second being something that is too large to fit in this list, so will be the next section). There are so many things in the UE4 “Game Framework” which are built around the use of the character (type of pawn) and character movement component that it was a bit of a nightmare to go in and replace them myself. Of all the fields in game development, complex collision detection and animation are my big blind spots. And the physical movement component combined physics, complex and continuous collision detection, and animation all into one mega-funtask.

The most important goal of this task, though, was to make any object using a physical movement component (basically only mechs/behemoths) as fully-integrated into the physical simulations and physics objects in the world as I could possibly make them. If a larger and heavier mech were to run into a lighter mech, the lighter mech would react as you’d expect: it’d be pushed back or its speed would be altered (as opposed to just completely stop upon impact). The component also interacts with physics objects but, more importantly, also applies force/impulse to Blast objects (covered in a bit).
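The heavy-mech-hits-light-mech behavior boils down to exchanging an impulse that depends on both masses. Here is a minimal one-dimensional sketch of that textbook rule in plain C++. It is an illustration under simplifying assumptions (a single axis, no rotation, no friction), not the actual physical movement component; `Body` and `resolveCollision` are hypothetical names.

```cpp
#include <cassert>

// Hypothetical stand-in for a mech: mass in kg, velocity in m/s along one axis.
struct Body {
    float mass;
    float velocity;
};

// Resolves a contact where `a` sits behind `b` on the axis. `restitution` is
// 0 for a dead stop against each other and 1 for a perfect bounce.
// Returns the impulse exchanged (0 if the bodies are already separating).
inline float resolveCollision(Body& a, Body& b, float restitution) {
    assert(a.mass > 0.0f && b.mass > 0.0f);
    assert(restitution >= 0.0f && restitution <= 1.0f);

    const float closingSpeed = a.velocity - b.velocity;
    if (closingSpeed <= 0.0f) return 0.0f; // moving apart: nothing to resolve

    // Standard impulse magnitude for two point masses.
    const float impulse =
        (1.0f + restitution) * closingSpeed / (1.0f / a.mass + 1.0f / b.mass);

    // Equal and opposite impulse; the lighter body sees the larger speed change.
    a.velocity -= impulse / a.mass;
    b.velocity += impulse / b.mass;
    return impulse;
}
```

With a 4-unit body moving at 10 into a stationary 1-unit body and zero restitution, both end up moving at 8: the heavy body barely slows while the light one gets shoved forward, instead of either one stopping dead.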

A refactored mech setup that functions better and separates out as many of its dependencies on other systems in the game as possible. This may not mean much in the actual end playable result, but for development it makes life much, much easier.

The refactor also makes AI mechs, remote human-controlled mechs, and the local player’s mech share all the common functionality that can possibly be shared. This sounds like it should be simple and obvious — and maybe it was — but the mech and mech part architecture was one of the first things I iterated on continuously from the get-go to be as solid as possible. And it was, it just wasn’t done considering how its logic should be split up for all its necessary use-cases.

Complete support across all of Steel Hunters’ project features for JSON-based config data (locally or from a server) that the game will automatically reload on-the-fly whenever it detects a change to an active file.

I can’t even begin to convey how much faster this made camera setup and weapon/projectile design. It’s fantastic.

More on this whole thing from a past article I wrote.
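For local files, the simplest way to get that kind of on-the-fly reload is to poll each file’s modification time and re-read it when the timestamp changes. The sketch below does that with `std::filesystem` (C++17). It is an assumption about one workable approach, not the game’s actual implementation: `ConfigWatcher` is a hypothetical name, the server case is left out, and the callback just receives the raw file text, which a real project would hand to whatever JSON parser it uses.

```cpp
#include <cassert>
#include <chrono>
#include <filesystem>
#include <fstream>
#include <functional>
#include <iterator>
#include <string>
#include <system_error>
#include <unordered_map>
#include <utility>

namespace fs = std::filesystem;

class ConfigWatcher {
public:
    using Callback = std::function<void(const std::string& contents)>;

    // Start tracking `file`; `onReload` fires on the next poll and then
    // again every time the file's modification time changes.
    void watch(const fs::path& file, Callback onReload) {
        assert(onReload);
        entries_[file.string()] = Entry{std::move(onReload), fs::file_time_type{}, false};
    }

    // Call once per frame or on a timer. Returns how many files were reloaded.
    int poll() {
        int reloaded = 0;
        for (auto& kv : entries_) {
            Entry& entry = kv.second;
            std::error_code ec;
            const fs::file_time_type stamp = fs::last_write_time(kv.first, ec);
            if (ec) continue; // missing or unreadable: keep the last good data
            if (entry.loaded && stamp == entry.lastWrite) continue; // unchanged

            std::ifstream in(kv.first, std::ios::binary);
            if (!in) continue;
            const std::string contents((std::istreambuf_iterator<char>(in)),
                                       std::istreambuf_iterator<char>());
            entry.lastWrite = stamp;
            entry.loaded = true;
            entry.onReload(contents);
            ++reloaded;
        }
        return reloaded;
    }

private:
    struct Entry {
        Callback onReload;
        fs::file_time_type lastWrite;
        bool loaded = false;
    };
    std::unordered_map<std::string, Entry> entries_;
};
```

Polling is crude next to OS-level file notifications, but it is portable and cheap for a handful of config files. One caveat: modification times can be coarse on some filesystems, so two saves in very quick succession may register as one.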

Support for NVIDIA’s Blast: As far as I’m aware, Blast has yet to be really used in many projects, but it is absolutely wonderful. I’ve gotten to the point where I can start designing meshes explicitly for Blast and the results are, I mean, astounding. A basic example is that if you were to shoot out the legs of a table, it would, of course, eventually topple. Blast adds a “stress factor” into the mix, however, so if you were to only shoot out one table leg and the table was made to look cheaply-built (or whatever), you can make it buckle under the stress of its instability with even a small bump (or a gust of wind which, because I’m a complete nerd, also physically affects certain objects in the world).

Unfortunately, since Blast isn’t widely-used yet, the content workflow is somewhat complicated due to a lack of examples/documentation. That said, like with other GameWorks tech that I’m using, NVIDIA’s developers on each respective tech have been astoundingly supportive. I’m currently working with the lead developer on Blast (at least on the Unreal Engine 4 integration side) and an NV tech artist to improve my understanding of the best workflow.

Also: I’m planning on some particularly large-scale world features (meshes, not landscape — that’s static) to be Blast-based. And… Well. I’m beyond excited for how rad that should be.

Then there’s, of course, a variety of engine-side rendering and shading adjustments, improvements to my core material “shaders”, iterations on post-processing effects and atmospherics, and more that I’ll go into another time.

… Except to say that I added support for Multi-Channel Signed Distance Fields for UI elements to make them as clear and smooth for any screen resolution as I possibly can. I can make a 32x32 reticle (which may take up, say, 150–200px of screen space at 1080p) look good whether playing at very low resolutions or at obscenely high resolution. I’ll release my plugin for this once it’s cleaned up a bit.
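The reason a tiny MSDF texture holds up at any size is the decode step: take the median of the three channels to recover a signed distance, scale it into screen pixels, and turn that into coverage. Here is that math as a CPU-side C++ sketch (in a real renderer it lives in a pixel shader). This follows the commonly published MSDF decode, not my plugin’s code; `Texel`, `msdfCoverage`, and `screenPxRange` are hypothetical names, and the 4-pixel field range in the usage note is an assumed value.

```cpp
#include <algorithm>
#include <cassert>

// One sampled MSDF texel; each channel is in [0, 1] and 0.5 means "on the edge".
struct Texel {
    float r, g, b;
};

// Median of three values: the core trick that recovers sharp corners.
inline float median3(float a, float b, float c) {
    return std::max(std::min(a, b), std::min(std::max(a, b), c));
}

// How many screen pixels the distance field's range covers at the drawn size.
// `fieldRangePx` is the range (in texture pixels) chosen when the MSDF was generated.
inline float screenPxRange(float drawnSizePx, float textureSizePx, float fieldRangePx) {
    assert(drawnSizePx > 0.0f && textureSizePx > 0.0f && fieldRangePx > 0.0f);
    return drawnSizePx / textureSizePx * fieldRangePx;
}

// Converts a sampled texel into coverage: 0 = fully outside, 1 = fully inside.
inline float msdfCoverage(const Texel& texel, float pxRange) {
    assert(pxRange > 0.0f);
    const float signedDistance = median3(texel.r, texel.g, texel.b) - 0.5f;
    const float screenPxDistance = pxRange * signedDistance;
    // A one-pixel-wide ramp centered on the edge gives the anti-aliasing.
    return std::clamp(screenPxDistance + 0.5f, 0.0f, 1.0f);
}
```

For the reticle example, a 32x32 texture drawn at 160px with an assumed 4-pixel field range gives `screenPxRange(160, 32, 4)` = 20, so the edge ramp stays one screen pixel wide no matter how far the texture is scaled up.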

The Core Game Simulation

I was hoping to postpone work on the core game simulation backend until after the demo/trailer because it’s a huge part of the project and as non-trivial a system as I’ve ever had to design, much less develop, in my… career, I think? But, because luck isn’t a thing that exists sometimes, that had to receive first-pass support now. Literally: now. I’m wrapping up the first-pass implementation today.

The whole goal of the game simulation was to be able to easily integrate independent systems/functionality into any type of object in the game so that the object can handle it as it needs to while the actual implementation of the systems (which can be trivial or can be quite complex) it handles never need to care one iota about how an object may handle them. I was always assuming this would be some kind of event system, but the more I looked through my own design notes and documentation, the traditional concept of an event (more specifically the Unreal Engine 4 concept of an event) didn’t quite fit with what I had in mind: a, let’s say “occurrence” for now, would be activated in the world that needed to be handled by any object which supports that occurrence. But each occurrence, not intended to have knowledge of any of the specific objects that would respond to it, would need to be handled by anything that fell within its “influence range” (which can be unbounded). Aside from the challenge that presented, there was the performance implication that a locationally-relevant occurrence could have on a world filled with any number of objects that may or may not support it.

The first thing I did once I realized all of this was try to find a working terminology for the entire simulation architecture that would clearly separate it from the word “event” — otherwise there’s just room for a whole bunch of confusion throughout development (in practice or purely in conversation). This led to:

A search for general development terminology lists.

Then software engineering terminology lists.

Then: various units of measurement. And this one yielded one AMAZING RESULT. Some key examples:

Micromort — “A micromort is a unit of risk measuring a one-in-a-million probability of death (from micro- and mortality).”

Banana Equivalent Dose — “The banana equivalent dose, defined as the additional dose a person will absorb from eating one banana, expresses the severity of exposure to radiation, such as resulting from nuclear weapons or medical procedures, in terms that would make sense to most people.”

As I was searching through all of this, I was talking to my BFF Josh Sutphin (who explicitly said to note that it’s currently undergoing work to make it “not absolute shit”) about what kind of setup I was thinking of. And I said:

I’m thinking of the whole thing as, like, a droplet of water falling into a pond and the ripple effect it causes.

About an hour later, I discovered that “Metaphor-Based Metaheuristics” were a thing. As I was looking through the list, I found the “Intelligent Water Drops Algorithm”. A very rough interpretation of the summary and its corresponding paper, while not anywhere near being exactly what I was thinking of, was close enough for me to absolutely dig it. So, my simulation is:

A single “simulation core” that only one very high-level game object has access to. But the simulation core has some publicly-available static methods for interacting with the simulation, of course.

The “simulation droplet” which is, essentially, the contents of what I previously referred to as “occurrences”.

A “simulation bucket” which holds a list of all pending droplets for the duration of their lifetime and hands references of them out to any object within the droplet’s influence area that support any given droplet type.

These buckets are created and placed throughout the world to cover as much ground as possible to limit the search area for objects whenever it’s holding active droplets.

Currently, there are just a handful of these buckets because I don’t want this first-pass to be a larger time commitment than it already is, but I have a rough generalized adaptive quad-tree implementation that I will likely integrate into the simulation core for bucket distribution that adjusts the quantity of buckets based on the density of players (and therefore actions/droplets) in given areas.
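To make the core/droplet/bucket split a little more concrete, here is a stripped-down sketch in plain C++. It is a toy under stated assumptions rather than the real thing: every name (`Droplet`, `DropletReceiver`, `CountingReceiver`, `Bucket`, `SimulationCore`) is hypothetical, the world is 2D, buckets are fixed non-overlapping circles, receivers never move after registration, and the core is an ordinary object instead of exposing static methods. The property it tries to show is the important one: a droplet only knows its type, origin, range, and lifetime, and never which objects will react to it.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <limits>
#include <string>
#include <utility>
#include <vector>

struct Vec2 { float x = 0.0f, y = 0.0f; };

inline float distanceBetween(const Vec2& a, const Vec2& b) {
    return std::hypot(a.x - b.x, a.y - b.y);
}

// A droplet describes what happened and how far it reaches, never who reacts.
struct Droplet {
    std::string type;
    Vec2 origin;
    float influenceRadius = std::numeric_limits<float>::infinity(); // unbounded
    float lifetime = 0.0f;                                          // seconds left
};

// Any world object that wants to react to droplets implements this.
class DropletReceiver {
public:
    virtual ~DropletReceiver() = default;
    virtual Vec2 position() const = 0;
    virtual bool supports(const std::string& type) const = 0;
    virtual void onDroplet(const Droplet& droplet) = 0;
};

// Example receiver that just counts the droplets of one type that reach it.
class CountingReceiver : public DropletReceiver {
public:
    CountingReceiver(Vec2 where, std::string type) : where_(where), type_(std::move(type)) {}
    Vec2 position() const override { return where_; }
    bool supports(const std::string& type) const override { return type == type_; }
    void onDroplet(const Droplet&) override { ++received; }
    int received = 0;
private:
    Vec2 where_;
    std::string type_;
};

// Owns the receivers in one circular region plus the droplets that touch it.
class Bucket {
public:
    Bucket(Vec2 center, float radius) : center_(center), radius_(radius) { assert(radius > 0.0f); }
    bool contains(const Vec2& p) const { return distanceBetween(center_, p) <= radius_; }
    bool touchedBy(const Droplet& d) const {
        return distanceBetween(center_, d.origin) <= radius_ + d.influenceRadius;
    }
    void add(DropletReceiver* receiver) { assert(receiver); receivers_.push_back(receiver); }
    void add(const Droplet& droplet) { pending_.push_back(droplet); }

    // Hand each live droplet to every supporting receiver in range, then age it.
    void tick(float dt) {
        for (Droplet& d : pending_) {
            for (DropletReceiver* r : receivers_) {
                if (r->supports(d.type) &&
                    distanceBetween(r->position(), d.origin) <= d.influenceRadius) {
                    r->onDroplet(d);
                }
            }
            d.lifetime -= dt;
        }
        pending_.erase(std::remove_if(pending_.begin(), pending_.end(),
                           [](const Droplet& d) { return d.lifetime <= 0.0f; }),
                       pending_.end());
    }
private:
    Vec2 center_;
    float radius_;
    std::vector<DropletReceiver*> receivers_;
    std::vector<Droplet> pending_;
};

// Routes receivers and droplets to buckets; nothing else talks to buckets.
class SimulationCore {
public:
    void addBucket(Vec2 center, float radius) { buckets_.emplace_back(center, radius); }
    void registerReceiver(DropletReceiver* receiver) {
        for (Bucket& b : buckets_) if (b.contains(receiver->position())) b.add(receiver);
    }
    void emit(const Droplet& droplet) {
        for (Bucket& b : buckets_) if (b.touchedBy(droplet)) b.add(droplet);
    }
    void tick(float dt) { for (Bucket& b : buckets_) b.tick(dt); }
private:
    std::vector<Bucket> buckets_;
};
```

The performance win comes from `emit`: a bounded droplet is copied only into the buckets it can actually reach, so each tick checks just the receivers registered there instead of every object in the world. An unbounded droplet simply lands in every bucket.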

This is possibly the most ridiculous thing I’ve ever come up with, but having implemented 95% of it by now, its practical use is perfect. So that’s neat.

Until Next Time

I probably won’t do any more Steel Hunters-specific articles until after the trailer/demo are ready, but this was long overdue.

I’m currently working on Part Three of my two-part series (part one, part two), which is solely advice, anecdotes, recommendations, and some more touchy-feely stuff like how to deal with our weird games industry if you’re someone with a kind of mental illness that I have some familiarity with. So hopefully it’s easy to see why it is taking so long to write.

I’m also working on a very rough overview of C++ programming in Unreal Engine 4 to serve as an interim resource for developers who are working on games and either find the lack of documentation on pretty critical subjects problematic or don’t fully understand why C++ in UE4 is the way it is. It will cover my best-practices recommendations, a bunch of tips/tricks/snippets/utilities that I’ve developed to make my life easier, and software/extensions (or just the configuration of commonly-used extensions and even Visual Studio itself for best use with UE4).

I can’t even begin to give a rough idea of when I’ll publish those other than: some day. The whole Steel Hunters thing in addition to contracting that I need to do to, like, live is starting to be a substantial high-wire act to maintain. But I’ll get there!
