Can you use metrics to predict when players will leave your game? Innova, a Russian MMO operator, decided to test it out on NCSoft's Aion, and in this article, the company's head of analytics and monetization explains the team's road to accurate predictions.

May 17, 2012

Author: Dmitry Nozhnin

The sad truth about all online services and games? The most significant churn occurs right in the first minutes and hours of gameplay. The issue has already been explored in numerous ways, with many profound hypotheses related to usability and simplicity of interface, availability of a free trial, learning curve, and tutorial quality. All of these factors are considered to be very important.

We set a goal to investigate why new players depart so early and to try to predict which players are about to churn out. For our case study, we used the MMORPG Aion, but surprisingly, the results appear to be applicable to a wide array of services and games. Although Aion, at the time this study was undertaken, was a purely subscription-based game with a seven-day free trial capped at level 20, the vast majority of churners left long before they had to pay to keep playing. Our research was about in-game triggers for churn.

Behavioral studies show that casual players have a limited attention span. They might leave the game today, and tomorrow won't even recall that it was ever installed and played. If a player leaves the game, we have to act immediately to get her back.

But how can we differentiate players who churned out of the game from casual players who just have plans for an evening and won't log in for a while? The ideal way would be predicting the churn probability when the player is still in the game -- even before she actually thinks about quitting the game.

Our goal was more realistic: to predict new players' churn the day they logged in for the last time. We define churn as inactivity for 7 days, and the goal was not to wait for a whole week to be sure the player has left the game and won't return, but instead to predict the churn right on their last day of play. We'd like to predict the future!
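The labeling rule behind that goal can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the SQL we actually ran: the function name, the `observed_through` parameter (the last date with complete logs), and the sample dates are all hypothetical. Each play day gets a label: leaver, returned, or still unknown because the seven-day window has not closed yet.

```python
from datetime import date

CHURN_WINDOW_DAYS = 7  # inactivity threshold used in the article


def label_play_days(login_days, observed_through, window_days=CHURN_WINDOW_DAYS):
    """Label each play day: True = leaver, False = came back, None = too early to tell.

    login_days: dates on which the player logged in (hypothetical input).
    observed_through: last date for which logs are complete (assumption).
    """
    days = sorted(set(login_days))
    labels = {}
    for i, day in enumerate(days):
        if i + 1 < len(days):
            # The player returned; it only counts as churn if the gap
            # exceeded the inactivity window.
            labels[day] = (days[i + 1] - day).days > window_days
        elif (observed_through - day).days >= window_days:
            labels[day] = True   # a full window passed with no login
        else:
            labels[day] = None   # window still open, label unknown
    return labels


logins = [date(2011, 6, 1), date(2011, 6, 2), date(2011, 6, 12)]
labels = label_play_days(logins, observed_through=date(2011, 6, 15))
```

Days labeled `None` are exactly the ones a prediction model is needed for: the player's last login is known, but the week has not yet passed.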

We had tons of data. Fortunately, Aion has the best logging system I've ever seen in a Korean game: it traces literally every step and every action of the player. Data was queried for the first 10 levels, or about 10 gameplay hours, capturing more than 50 percent of all early churners.

It took two Dual Xeon E5630 blades with 32GB RAM, 10TB cold and 3TB hot storage RAID10 SAS units. Both blades were running MS SQL 2008R2 -- one as a data warehouse and the other for MS Analysis Services. Only the standard Microsoft BI software stack was used.

Having vast experience as a game designer, with over 100 playtests under my belt, I was confident that my expertise would yield all the answers about churn. A player fails to learn how to teleport around the world -- he quits. The first mob encountered delivers the fatal blow -- she quits. Missed the "Missions" tab and wondered what to do next -- a possible quit, also. Aion is visually stunning and has superb technology, but it's not the friendliest game for new players.

So I put on my "average player" hat and played Aion's trial period for both races with several classes, meticulously noting gameplay issues, forming a preliminary hypothesis list explaining the roots of churn:

Race and Class. I assumed it would be the main factor, as the gameplay for the support-oriented priest radically differs from the powerful mage, influencing player enjoyment.

Has the player tried any other Innova games? (We have a single account)

How many characters of what races and classes have been tried?

Deaths, both per level and total, during the trial

Grouping with other players (high level and low level)

Mail received and guilds joined (signs of a "twink" account run by a seasoned player)

Quests completed, per level and total

Variety of skills used in combat

The list was impressive and detailed, describing numerous ways to divert the player from the game.

So let's start the party. The first hypothesis went to data mining models. The idea is very simple: we predict the Boolean flag "is leaver", which tells whether the player will leave today or keep enjoying the game at least for a while:

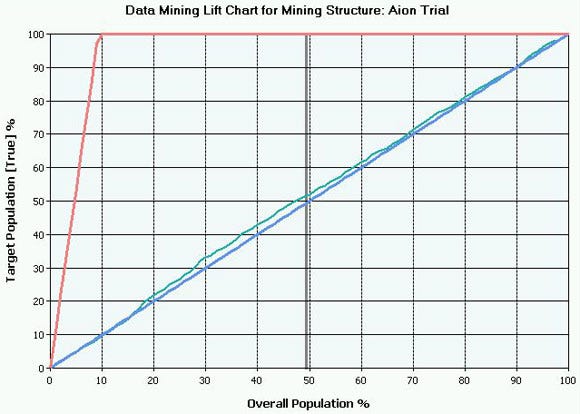

Lift Chart 101: The bottom straight line is a simple random guess. The upper skyrocketing line is The Transcendent One, who definitely knows the future. Between them is the pulsing thin line, representing our data-mining model. The closer our line is to The One, the better the prediction power is. This particular chart is for level 7 players, but the picture was the same for levels 2 to 9.
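For readers who want to reproduce such a chart outside the Microsoft BI stack, here is a minimal sketch of the curve behind it (a cumulative gains curve). The function name and the toy scores are assumptions made for illustration. Players are ranked by predicted churn score, and for each slice of the ranked list we compute the share of all real leavers captured so far.

```python
def cumulative_gains(scores, labels, steps=10):
    """Return [(fraction_of_players_checked, fraction_of_leavers_captured), ...].

    scores: predicted churn scores, higher = more likely to leave.
    labels: 1 for a real leaver, 0 otherwise.
    """
    ranked = [label for _, label in
              sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)]
    total_leavers = sum(ranked)
    if total_leavers == 0:
        raise ValueError("need at least one leaver to build the curve")
    points = []
    for step in range(1, steps + 1):
        cutoff = len(ranked) * step // steps
        captured = sum(ranked[:cutoff])
        points.append((step / steps, captured / total_leavers))
    return points


# Toy data: ten players, three real leavers.
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.0]
labels = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
points = cumulative_gains(scores, labels, steps=5)
```

A random guess would capture roughly 20 percent of leavers in the first 20 percent of players; the further a model's curve rises above that diagonal, the better it ranks.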

Fatality! Our first model barely beats a coin toss as a method of predicting the future. Now it's time to pump other hypotheses into the mining structure, process them, and cross our fingers:

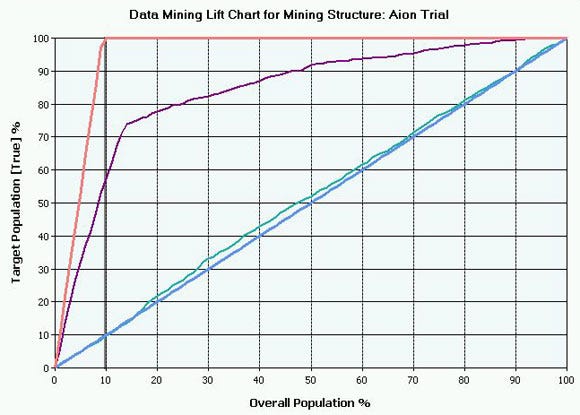

Well, it looks better, but precision is still just a bit over 50 percent, and the false positive rate is enormous, at 28 percent.

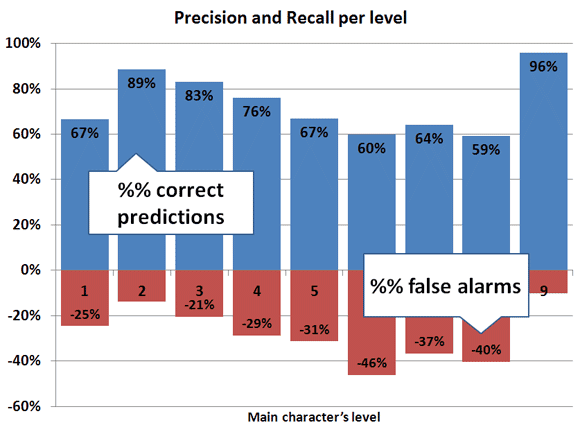

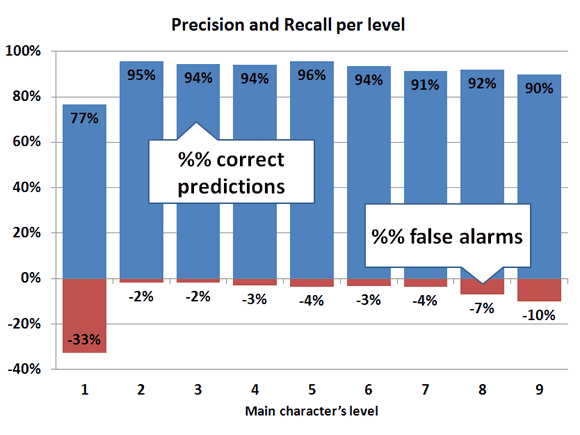

Precision and Recall 101: Precision is the share of players flagged as churners who really do leave; recall is the share of real leavers the model manages to flag. False positives are those players predicted as churners, when in reality they aren't.
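As a worked example, the sketch below computes these figures from a list of predictions and outcomes using the standard textbook definitions. The function names and the toy data are assumptions, and the false positive rate here is false positives divided by all real non-leavers, which may not match exactly how the figures quoted in this article were calculated.

```python
def confusion_counts(predicted, actual):
    """Count true/false positives and negatives for Boolean churn flags."""
    tp = fp = fn = tn = 0
    for p, a in zip(predicted, actual):
        if p and a:
            tp += 1      # flagged as churner, really left
        elif p and not a:
            fp += 1      # flagged as churner, but stayed
        elif a:
            fn += 1      # missed a real leaver
        else:
            tn += 1      # correctly left alone
    return tp, fp, fn, tn


def churn_metrics(predicted, actual):
    tp, fp, fn, tn = confusion_counts(predicted, actual)
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }


# Toy data: eight players, three flagged, three real leavers.
predicted = [True, True, True, False, False, False, False, False]
actual = [True, True, False, True, False, False, False, False]
metrics = churn_metrics(predicted, actual)
```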

Phase 1 Result: All my initial ideas failed. Total disaster!

The first and simplest data-mining algorithm is naive Bayes, which is extremely human-friendly and comprehensible. It showed that the hypothesis metrics do not correlate with real churners. The second method, Decision Trees, revealed that a few of my ideas were actually quite useful, but not enough to boost the prediction precision to the top.

Data Mining algorithms 101: Naive Bayes is great at preliminary dataset analysis and highlighting correlations between variables. Decision Tree reduces the dataset into distinct subsets, separating the churners from happy players. These methods are both human-readable, but quite different in their underlying math and practical value. Neural Network is essentially a black box capable of taking complex variable relations into account, and producing better predictions, at the cost of being completely opaque for the developer.
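To show why naive Bayes counts as human-readable, here is a tiny categorical version in plain Python. It is a sketch of the general technique with add-one smoothing, not the Microsoft implementation we used; the feature name `playtime` and the four training rows are made up.

```python
import math
from collections import Counter, defaultdict


def train_naive_bayes(rows, labels):
    """rows: list of {feature: category} dicts; labels: matching class labels."""
    class_counts = Counter(labels)
    value_counts = defaultdict(Counter)   # (label, feature) -> value counts
    feature_values = defaultdict(set)     # feature -> categories seen in training
    for row, label in zip(rows, labels):
        for feature, value in row.items():
            value_counts[(label, feature)][value] += 1
            feature_values[feature].add(value)
    return class_counts, value_counts, feature_values


def predict_proba(model, row):
    """Return {label: probability}, treating features as independent."""
    class_counts, value_counts, feature_values = model
    total = sum(class_counts.values())
    log_scores = {}
    for label, count in class_counts.items():
        score = math.log(count / total)   # class prior
        for feature, value in row.items():
            seen = value_counts[(label, feature)][value]
            # Add-one smoothing so unseen categories never give zero.
            score += math.log((seen + 1) / (count + len(feature_values[feature])))
        log_scores[label] = score
    top = max(log_scores.values())
    weights = {label: math.exp(s - top) for label, s in log_scores.items()}
    norm = sum(weights.values())
    return {label: w / norm for label, w in weights.items()}


rows = [{"playtime": "low"}, {"playtime": "low"},
        {"playtime": "high"}, {"playtime": "high"}]
is_leaver = [True, True, False, False]
model = train_naive_bayes(rows, is_leaver)
proba = predict_proba(model, {"playtime": "low"})
```

Every number in the model is a simple count, which is what makes it easy to inspect which variables correlate with leaving.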

I brainstormed with the Aion team, and we had a great time discussing our newbie players -- who they are, how they play, and their distinct traits. We remembered how our friends and relatives first stepped into the game and what their experience was like.

The result of this brainstorming session was a revised list of in-game factors affecting newbie gameplay (had she expanded the inventory size, bound the resurrection point, and used the speed movement scrolls?) and also the brilliant idea of measuring the general in-game activity of players.

We used the following metrics:

Mobs killed per level

Quests completed per level

Playtime in minutes per level
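A minimal sketch of how such per-level activity metrics can be rolled up from a raw event log follows. The event schema (`player`, `level`, `type`, `minutes`) is hypothetical and far simpler than Aion's real logs; our actual processing was done in SQL and SSIS.

```python
from collections import defaultdict


def activity_per_level(events):
    """Aggregate raw events into mobs, quests, and playtime per (player, level)."""
    totals = defaultdict(lambda: {"mobs_killed": 0,
                                  "quests_completed": 0,
                                  "playtime_minutes": 0.0})
    for event in events:
        row = totals[(event["player"], event["level"])]
        if event["type"] == "mob_kill":
            row["mobs_killed"] += 1
        elif event["type"] == "quest_complete":
            row["quests_completed"] += 1
        elif event["type"] == "session":
            row["playtime_minutes"] += event["minutes"]
    return dict(totals)


# Made-up log lines for one player.
events = [
    {"player": "p1", "level": 1, "type": "session", "minutes": 12.5},
    {"player": "p1", "level": 1, "type": "mob_kill"},
    {"player": "p1", "level": 1, "type": "mob_kill"},
    {"player": "p1", "level": 1, "type": "quest_complete"},
    {"player": "p1", "level": 2, "type": "session", "minutes": 20.0},
    {"player": "p1", "level": 2, "type": "mob_kill"},
]
per_level = activity_per_level(events)
```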

By that time, we had also completely revamped the ETL part (extraction, transformation, and loading of data) and our SQL engineer made a sophisticated SSIS-based game log processor, focused on scalability and the addition of new game events from logs. Given the gigabytes of logs available, it was essential that we be able to add a new hypothesis easily.

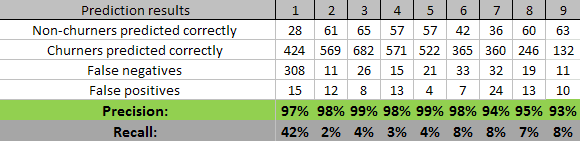

New data was loaded and processed, models examined and verified, and results analyzed. For the sake of simplicity, I won't post more lift charts, but instead only the refined results:

Level 9's anomalous high precision was game-related at the time of research, so disregard that data.

At this stage, our models improved their prediction power -- especially for levels 2 to 4 -- but levels 6 to 8 were still far too weak. Such imprecise results are barely usable.

Decision Tree proves that general activity metrics are the key prediction factors. In a sense, playtime per level, mobs killed per level, and quests completed per level metrics comprised the core prediction power of our models. Other metrics contributed less than 5 percent to overall precision. Also, the Decision Tree was rather short, with only two or three branches, which means it lacked the relevant metrics. It was also a mystery to me why all three algorithms have variable precision/recall rates from level to level.

Phase 2 Result: We've achieved considerable success with general activity metrics, as opposed to specific game content-related ones. While precision is still not acceptable, we've found the right method for analysis, using Bayes first and Tree afterwards.

Inspired by visible improvements in the data mining results, I set up three development vectors: more general activity metrics, more game-specific metrics, and learning the Microsoft BI tools in more depth.

Experimenting with general activity, we finally settled on the silver bullets:

Playtime at current level, previous level, and total during lifetime

Mobs killed per minute (current/previous/lifetime)

Quests completed per minute (same)

Average playtime per play day

Days of play

Absenteeism rate (number of days skipped during the seven day free trial)
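The rate-style metrics in this list are simple ratios. The sketch below shows one plausible way to compute them; the function names are hypothetical, and expressing absenteeism as skipped days divided by elapsed trial days is an assumption (the list above only says "number of days skipped").

```python
from datetime import date, timedelta

TRIAL_DAYS = 7  # length of the free trial in the article


def per_minute(count, minutes):
    """Events per minute of play, e.g. mobs killed or quests completed."""
    return count / minutes if minutes > 0 else 0.0


def average_playtime_per_play_day(total_minutes, days_of_play):
    return total_minutes / days_of_play if days_of_play > 0 else 0.0


def absenteeism_rate(play_days, trial_start, as_of):
    """Share of elapsed trial days on which the player did not log in."""
    elapsed = min((as_of - trial_start).days + 1, TRIAL_DAYS)
    if elapsed <= 0:
        return 0.0
    window = {trial_start + timedelta(days=i) for i in range(elapsed)}
    played = len(window & set(play_days))
    return (elapsed - played) / elapsed


# Made-up player: started June 1, played on June 1 and June 3.
rate = absenteeism_rate({date(2011, 6, 1), date(2011, 6, 3)},
                        trial_start=date(2011, 6, 1),
                        as_of=date(2011, 6, 4))
kills_per_minute = per_minute(30, 60.0)
```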

Those metrics accounted for a massive increase in recall rate (thus fewer false positives, which is great news!). Decision Tree finally started branching like there is no tomorrow. We also saw the unification of different data-mining algorithms for all levels, a good sign that the prediction process was stabilizing, and becoming less random. Naive Bayes was lagging behind the Tree and Neural by a whopping 10 percent in precision.

New individual metrics actually were quite a pain to manage. Manual segmentation for auto-attack use involved some math, and things like 75th percentile calculation in SQL queries. But we normalized the data, allowing us to compare the different game classes; the data mining models received category index data instead of just raw data. Normalized and indexed new individual metrics added a solid 3 to 4 percent to overall prediction power.

Combat 101: In online games, characters fight with skills and abilities. Auto-attack is the most basic, free action. Experienced players use all skills available and their auto-attack percentage will be lower -- although game and class mechanics heavily influence this metric. In Aion, the median for mage is at 5 percent while the fighter is at 70 percent, and even within a single class, the standard deviation is still high.
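Here is a rough sketch of the class-relative indexing idea, assuming quartile cut points per class (the third cut point is the 75th percentile). The sample percentages are invented to echo the mage and fighter medians mentioned above, and the function names are hypothetical; our real segmentation lived in SQL queries.

```python
import bisect
import statistics


def class_quartile_cuts(shares_by_class):
    """Quartile cut points of auto-attack share (percent) for each class."""
    return {game_class: statistics.quantiles(shares, n=4, method="inclusive")
            for game_class, shares in shares_by_class.items()}


def category_index(game_class, share, cuts_by_class):
    """0 = lowest quarter for that class, 3 = above the class's 75th percentile."""
    return bisect.bisect_right(cuts_by_class[game_class], share)


# Invented auto-attack shares, in percent of all combat actions.
shares_by_class = {
    "mage": [2, 4, 5, 6, 8],
    "fighter": [50, 60, 70, 80, 90],
}
cuts = class_quartile_cuts(shares_by_class)
mage_index = category_index("mage", 7, cuts)         # high for a mage
fighter_index = category_index("fighter", 55, cuts)  # low for a fighter
```

The raw numbers 7 and 55 are not comparable across classes, but the indexes are: the mage lands in the top category for her class, while the fighter lands in the bottom one.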

The next move was reading the book Data Mining with Microsoft SQL Server 2008 in search of tips and tricks for working with analysis services. The book itself was helpful for explaining the intricacies of Decision Tree fine-tuning, but it also led me to realize the importance of correct data discretization.

In the example above, we've manually achieved discretization of the auto-attack metric. The moment I started tinkering with the data, it became obvious that SQL Server's automated discretization could and should be fine-tuned. Manually tuning the number of buckets heavily affects the Tree's shape and precision (and that of other models too, for sure -- but for the Tree, changes are most visible).

I've spent a whole week of my life tuning each of the 30+ dimensions for each of the nine mining structures (one structure per game level; nine levels total). Experimenting with the buckets revealed some interesting patterns, and the difference between seven and eight buckets could easily be a whopping 2 percent precision increase. For example, the mobs killed bucket count was settled at 20, total playtime at 12, and playtime per level at 7.
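To make the notion of buckets concrete, the sketch below does equal-frequency discretization in plain Python. This is one common approach chosen for illustration and is not claimed to match what SQL Server does internally; the function names and sample values are assumptions.

```python
import bisect


def equal_frequency_edges(values, buckets):
    """Cut points that split the values into roughly equal-sized buckets."""
    ordered = sorted(values)
    edges = [ordered[(i * len(ordered)) // buckets] for i in range(1, buckets)]
    return sorted(set(edges))  # drop duplicate edges caused by repeated values


def bucket_index(value, edges):
    """Index of the bucket a value falls into (0-based)."""
    return bisect.bisect_right(edges, value)


# Made-up metric values, e.g. mobs killed at a given level.
values = list(range(1, 13))
edges = equal_frequency_edges(values, buckets=4)
assigned = [bucket_index(v, edges) for v in values]
```

Changing `buckets` moves every cut point, which is why going from seven to eight buckets can reshape a decision tree built on the discretized values.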

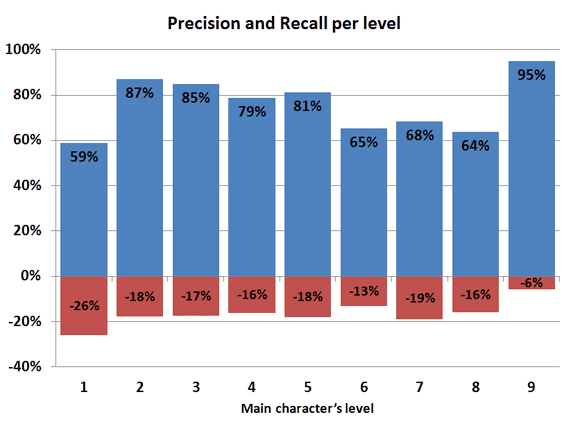

Fine-tuning yielded a great decrease in false positives, and boosted the Tree up to the numbers for Neural Net:

Phase 3 Result: Finally, we've got some decent figures, and we've also gathered a lot of interesting data about our players.

Frankly, I thought we'd hit the ceiling for accurate prediction. New metrics and hypotheses do not contribute to precision; the models are stable. 78 percent precision / 16 percent false positives is enough to start working on churn prediction.

Motivating these players with free subscriptions or valuable items probably won't be efficient (taking into account the VAT associated with such gifts in Russia), but emailing these players couldn't hurt, right?

An unexpected gift: As we were in our third month of the data-mining project, we realized the data might become outdated, as the game received several patches during that time.

Reloading the new, larger dataset for all three months, I noticed some changes in the lift charts. The data was behaving slightly differently, although the precision/recall stayed the same.

ETL procedures were rewritten from scratch again, and the whole three-month dataset was fed to the hungry data-mining monster.

At that time, processing time per level was less than a minute, so an increased dataset resulted in an acceptable 5 minute wait time. Unfortunately, all manual fine-tuning had to be redone, but look at the picture:

Increasing the dataset, we've hugely boosted the efficiency of the models!

For the first level, unfortunately, we can't really do anything. As Avinash Kaushik would say, "I came, I puked, I left". Those players left the game right after creating their character and we have few, if any, actions logged for them.

All those numbers above were historical data and a learning dataset for our precious mining models. But as I'm a very skeptical person, I want battle-tested results! So we take fresh users, just registered today, and put them into the prediction model, saving the results. After seven days, we compare the churners predicted a week earlier with their real-life behavior. Did they actually leave the game or not?
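That comparison can be sketched with plain sets. The player IDs and function names below are made up, and "actually left" is taken to mean "did not log in during the seven days after the prediction", in line with the churn definition used throughout.

```python
def actual_churners(cohort, seen_again):
    """Players from the scored cohort who never logged in during the next 7 days."""
    return set(cohort) - set(seen_again)


def backtest(predicted_churners, real_churners):
    """Compare saved predictions with what really happened a week later."""
    predicted, real = set(predicted_churners), set(real_churners)
    hits = predicted & real
    return {
        "precision": len(hits) / len(predicted) if predicted else 0.0,
        "recall": len(hits) / len(real) if real else 0.0,
        "false_alarms": sorted(predicted - real),   # flagged, but came back
        "missed": sorted(real - predicted),         # left without being flagged
    }


cohort = {"a", "b", "c", "d", "e"}   # players scored on prediction day
predicted = {"a", "b", "c"}          # saved model output
seen_again = {"c", "d"}              # logged in during the following week
report = backtest(predicted, actual_churners(cohort, seen_again))
```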

Our original goal -- to predict players about to churn out of the game -- was successfully achieved. With such high precision/recall we can be confident in our motivation and loyalty actions. And I remind you that these are just-in-time results; at 5:30 am, models get processed, new churners are detected, and they're ready to be incentivized the moment we come into the office in the morning.

Have we achieved our second goal, determining why players churn out? Nope. And that's the most amusing outcome for me -- knowing with very high accuracy when a player will leave, I still don't have a clue why she will leave. I started this article listing hypotheses about causes of players leaving the game early:

Race and Class

Has the player tried any other Innova games (We have a single account)

How many characters of what races and classes have been tried

Deaths, both per level and total during the trial

And many others

We have tested over 60 individual and game-specific metrics. None of them are critical enough to cause churn. None of them! We haven't found a silver bullet -- that magic barrier preventing players from enjoying the game.

The key metric in this research appears to be the number of levels gained during the first day of the trial. Fewer than seven levels -- which represents about three hours of play -- means a very high chance to churn out. The next metrics with high churn prediction powers are overall activity ones:

Mobs killed per level

Quests completed per level

Playtime in minutes per level

Playtime per day
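The first-day rule of thumb is easy to express in code. The sketch below assumes that "first day" means the 24 hours after character creation and that level-ups arrive as timestamps; both are assumptions for illustration, as are the names. It is a crude flag, not a substitute for the mining models.

```python
from datetime import datetime, timedelta

FIRST_DAY_LEVEL_THRESHOLD = 7  # "fewer than seven levels" from the article


def levels_gained_first_day(created_at, level_up_times):
    """Count level-ups within 24 hours of character creation (assumed window)."""
    cutoff = created_at + timedelta(days=1)
    return sum(1 for ts in level_up_times if created_at <= ts < cutoff)


def high_churn_risk(created_at, level_up_times):
    gained = levels_gained_first_day(created_at, level_up_times)
    return gained < FIRST_DAY_LEVEL_THRESHOLD


# Made-up player: three level-ups on day one, one more two days later.
created = datetime(2011, 6, 1, 18, 0)
level_ups = [created + timedelta(minutes=m) for m in (20, 50, 95)]
level_ups.append(created + timedelta(days=2))
at_risk = high_churn_risk(created, level_ups)
```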

It took us three months, two books, and a great deal of passion to build a data-mining project from scratch. Nobody on the team had ever touched the topic. On top of our robust but passive analytics system at Innova, we've made a proactive future predicting tool. We receive timely information on potential churners and we can give them highly personalized and relevant tips on improving their gameplay experience (all of those 60+ metrics provide us with loads of data).

The project was made for a specific MMORPG, Aion, but as you can see, a major contribution came from generic metrics approaches applicable to other games, and even general web services.

This was our very first data-mining project, finished in September 2011, and it has been rewritten completely since then, based on our current experiences with predicting the churn of experienced players, clustering and segmentation analysis, and a deeper understanding of our player base. So the data mining adventures are to be continued...
