Constraints and opportunities for UI design in VR

Understanding the constraints and opportunities for designing User Interfaces (UI) in Virtual Reality (VR) is essential. Balancing immersion, usability, and experience is just one of the many challenges when designing UI for VR.

Introduction

Understanding the constraints and opportunities for designing User Interfaces (UI) in Virtual Reality (VR) is essential. Developers who don’t understand the constraints they face in VR will waste time solving problems that don’t exist, or neglect the problems that do need to be solved. Equally, a developer should be aware of the opportunities VR affords to create innovative solutions that can set them apart from the crowd. Balancing immersion, usability, and experience is just one of the many challenges when designing UI for VR. Understanding this is one of the core challenges of my research.

Traditions are bad?

When designing User Interfaces for Virtual Reality, it’s very easy to think in terms of traditional conventions, such as those found in 2D and 3D applications. While there are many lessons to be learned from these traditional approaches, VR developers have found that many of these conventions are no longer applicable.

To understand the challenges a UI designer faces in VR, it is important to examine the opportunities and obstacles they are confronted with.

Constraints

Depth of View

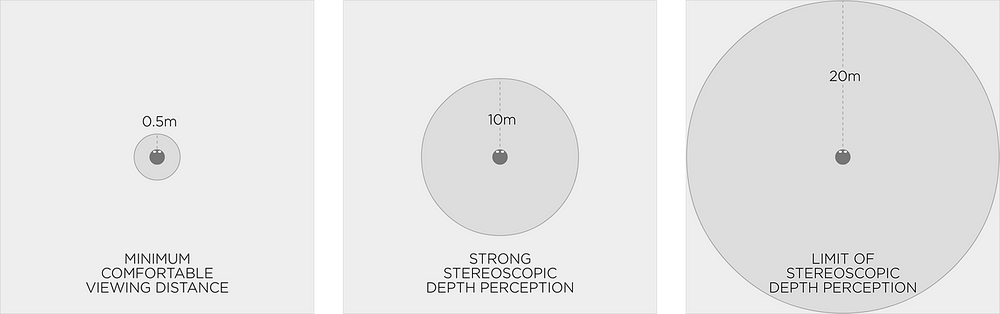

Some developers have stated that most of what the player needs to look at should be positioned between 0.5 and 10 metres away. However, Oculus states in its guide that the most comfortable range of depths is between 0.75 and 3.5 metres. The comfortable depth of view may vary with the target hardware, so it is important to establish it early in development.

Figure 1: Vincent McCurley
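One way to act on these figures is to clamp the distance at which a UI element is placed. The following is a minimal Python sketch, not tied to any particular engine. The function name `clamp_ui_distance` is hypothetical, and the default limits are simply the Oculus range quoted above; they should be replaced with values appropriate to the target hardware.

```python
# Comfortable depth range in metres, taken from the Oculus figures quoted above.
# These are defaults for illustration; other hardware may need other values.
COMFORT_NEAR_M = 0.75
COMFORT_FAR_M = 3.5


def clamp_ui_distance(requested_m: float,
                      near_m: float = COMFORT_NEAR_M,
                      far_m: float = COMFORT_FAR_M) -> float:
    """Return the requested distance pulled into the range [near_m, far_m]."""
    if near_m > far_m:
        raise ValueError("near_m must not exceed far_m")
    return max(near_m, min(far_m, requested_m))
```

A panel requested at 0.3 metres would be pushed out to 0.75 metres, while one requested at 2 metres would be left where it is.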

Field of View

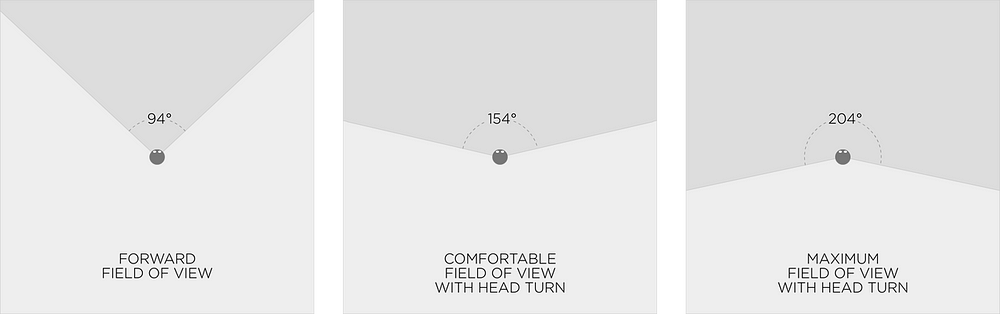

Vincent McCurley (VR filmmaker) found that the most comfortable forward field of view was 94 degrees, which increases to 154 degrees with head turning. The maximum field of view with head turning is 204 degrees. These figures are fine for stationary experiences; however, for room-scale experiences with 360-degree positional tracking, they will need to be adapted.

Figure 2: Vincent McCurley
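These angles can be turned into a simple check on where a UI element sits relative to the player’s forward direction. The sketch below assumes that each zone is symmetric around straight ahead, so a 94-degree zone covers 47 degrees to either side; that symmetry, the function name `classify_azimuth` and the zone labels are my assumptions for illustration.

```python
# Total horizontal angles in degrees, from the figures quoted above.
COMFORT_FOV_DEG = 94.0        # comfortable without turning the head
COMFORT_TURN_FOV_DEG = 154.0  # comfortable with head turning
MAX_TURN_FOV_DEG = 204.0      # maximum with head turning


def classify_azimuth(azimuth_deg: float) -> str:
    """Classify a horizontal angle measured from straight ahead (0 degrees)."""
    # Wrap the angle into [-180, 180) and ignore left/right (assumed symmetric).
    offset = abs((azimuth_deg + 180.0) % 360.0 - 180.0)
    if offset <= COMFORT_FOV_DEG / 2:
        return "comfortable"
    if offset <= COMFORT_TURN_FOV_DEG / 2:
        return "comfortable with head turn"
    if offset <= MAX_TURN_FOV_DEG / 2:
        return "maximum reach"
    return "behind the player"
```

Elements the player needs constantly would ideally fall in the first zone, with less important elements pushed further out.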

Player fatigue (HMD weight)

The additional weight a Head Mounted Display (HMD) places on the user’s neck should not be underestimated. As the hardware is improved and the weight reduced, this issue may become negligible. In the meantime, ensure that any UI implementations do not place unnecessary strain on the user.

Resolution

Resolution in VR is limited, and more so on some devices than others. Elements that appear outside the comfortable viewing ranges will be harder to see than those within, and forcing the player to focus on them will cause eyestrain. In addition, scene elements on the periphery are generally out of focus and therefore lack resolution, so they look poor. Text in Virtual Reality, unfortunately, doesn’t look great, so ensure you don’t rely on it too heavily for your user interface elements.
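Where text cannot be avoided, one way to reason about its legibility is in terms of angular size: how many degrees of the player’s view it covers, rather than how tall it is in world units. The sketch below uses the standard relation h = 2 · d · tan(θ / 2) between height h, distance d and angle θ. The function names are hypothetical, and no minimum readable angle is given here because it depends on the headset and would need to be found through testing.

```python
import math


def height_for_angle(distance_m: float, angle_deg: float) -> float:
    """World-space height (metres) that covers angle_deg at distance_m."""
    return 2.0 * distance_m * math.tan(math.radians(angle_deg) / 2.0)


def angle_for_height(distance_m: float, height_m: float) -> float:
    """Angle (degrees) covered by an element height_m tall at distance_m."""
    return math.degrees(2.0 * math.atan(height_m / (2.0 * distance_m)))
```

Holding the angle fixed while moving a label further away means its world-space height has to grow in proportion to the distance.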

Player attention

It’s difficult to ensure the player’s attention will be focused where you want it to be at any given time. The freedom VR gives the player to look around and interact with the world means you need to find clever ways to direct their attention to a specific point at a given time. There are many traditional techniques for drawing a player’s attention; ensuring the chosen approach is suitable requires testing and iteration.

Opportunities for innovation

Depth perception

The perception of depth is one of the biggest strengths of VR; no other digital medium allows the user to perceive depth in the same way. The perception of depth adds greatly to the user’s immersion and the novelty of VR experiences. There are many opportunities for using this perception of depth in UI, some of which may not yet have been considered.

Positional audio

Sound has always been a powerful tool in any medium, from watching movies to playing games. The introduction of 5.1 surround sound resulted in unprecedented immersion. Sound in VR offers many possibilities as a tool, not least as an additional layer of the User Interface. In many games, HUD elements are used to guide player attention and ensure players are aware of their objectives. Positional audio in VR can communicate depth, and should therefore be considered as a tool for guiding player attention to specific elements within a scene.

UI in Two categories

As I see it, UI can be grouped into two main categories:

UI that is part of the player’s Heads-Up Display

UI that is integrated into the environment

Heads-Up-Display… oh you mean HUD

Old HUD conventions can work with thoughtful re-design that is mindful of stereoscopic vision and comfortable viewing ranges. Projecting HUD elements out from the player or attaching them to the camera are both feasible approaches. Translucent UI elements that are well lit are more comfortable to view than the more traditional UIs found in non-VR games.

Pinning UI elements in the world is an approach where a UI element is designed as part of the “real” or VR world, such as a map, compass or satellite navigation device. If the player has a weapon, you could embed elements such as an ammo counter onto it. Close-up weapons and tools can, however, lead to eyestrain; one solution may be to make them part of the player’s avatar, dropping out of view when not in use.

Figure 3: Far Cry 2 (Ubisoft, 2008)

Traditional crosshairs will need to be drawn at varying depths, depending on where the player is focusing. If the crosshair merely floated at a fixed distance in front of the player, it would be out of focus most of the time. A system will need to be designed that renders the crosshair as a 3D object in the 3D world.
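One possible shape for such a system is sketched below in engine-agnostic Python. It assumes the engine supplies the distance at which the player’s gaze ray hits the scene (`hit_distance_m`, or `None` if it hits nothing); that parameter, the function name `place_crosshair` and the 3.5 metre fallback depth are all hypothetical choices for illustration. The crosshair is placed at the hit depth and scaled with distance so that it appears roughly the same size wherever it lands.

```python
import math
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]

# Hypothetical fallback depth (metres) used when the gaze ray hits nothing.
# 3.5 m is the far end of the comfortable range quoted earlier in the article.
FALLBACK_DEPTH_M = 3.5


def place_crosshair(eye: Vec3,
                    gaze_dir: Vec3,
                    hit_distance_m: Optional[float],
                    size_at_1m: float) -> Tuple[Vec3, float]:
    """Return (world position, world-space size) for a 3D crosshair."""
    length = math.sqrt(sum(c * c for c in gaze_dir))
    if length == 0.0:
        raise ValueError("gaze_dir must be non-zero")
    unit = tuple(c / length for c in gaze_dir)

    # Use the depth of whatever the player is looking at, if anything.
    if hit_distance_m is not None and hit_distance_m > 0.0:
        depth = hit_distance_m
    else:
        depth = FALLBACK_DEPTH_M

    position = tuple(e + u * depth for e, u in zip(eye, unit))
    # Scaling linearly with depth keeps the apparent (angular) size constant.
    return position, size_at_1m * depth
```

In practice the hit distance would come from the engine’s own raycast, and the result would be applied to the crosshair object every frame.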

Environmental Integration

Embedding UI elements into the environment allows the designer a spatial approach to UI design. If a player wishes to engage with a menu, it could take the form of a computer screen, for example. From this menu the player can be updated on game objectives and their progress. This option could also enable the player to alter game settings, such as level of difficulty. Visual UI elements could be embedded onto objects or non-player characters (NPCs) to confirm their interactivity.

Figure 4: Tomb Raider (Crystal Dynamics, 2013)

Positional audio is another option, which can be used to draw a player’s attention to a specific element within the scene when the player is in close proximity. It is also possible to combine visual UI elements with positional audio to attract the player’s attention or gaze and then confirm that a particular object or NPC is interactive.

The challenge is to strike a balance between making things look good and keeping them comfortably readable. The amount of content to display on screen should be weighed against the resolution of that content.
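As a rough illustration of the proximity idea, the sketch below decides whether a cue should play and where the sound sits relative to the direction the player is facing. It is plain Python rather than any engine’s audio API, and it assumes a coordinate convention of y up, yaw 0 facing +z, and positive yaw turning towards +x. The function names and the trigger radius are hypothetical.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def horizontal_distance_m(a: Vec3, b: Vec3) -> float:
    """Distance between two points, ignoring height (y)."""
    return math.hypot(b[0] - a[0], b[2] - a[2])


def should_play_cue(head_pos: Vec3, source_pos: Vec3,
                    trigger_radius_m: float) -> bool:
    """True when the player is within the trigger radius of the source."""
    return horizontal_distance_m(head_pos, source_pos) <= trigger_radius_m


def relative_azimuth_deg(head_pos: Vec3, head_yaw_deg: float,
                         source_pos: Vec3) -> float:
    """Angle of the source relative to where the head is facing.

    0 means dead ahead and positive means to the right, under the assumed
    convention; the result is wrapped into [-180, 180).
    """
    dx = source_pos[0] - head_pos[0]
    dz = source_pos[2] - head_pos[2]
    bearing = math.degrees(math.atan2(dx, dz))
    return (bearing - head_yaw_deg + 180.0) % 360.0 - 180.0
```

The azimuth could then drive whatever spatialisation the audio system provides, or decide whether a visual prompt is also needed because the object is out of view.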

Jerry’s Final Thoughts

Balancing immersion, usability, and experience is just one of the many challenges when designing UI for VR. Understanding this is one of the core challenges of my research. I hope that my findings can be used to help UI developers understand their constraints and to find new ways to solve the challenges of implementing UI in VR. Although it may seem daunting to design UI for VR games, remember that constraints can fuel creativity.

“Embrace your constraints and draw out of them the very solution that sets you apart from the crowd” (Porter J, Brewer J).

Thank you for taking the time to read my article. I hope you found it informative and useful. If you have any thoughts on the topic or feedback you wish to share, please don’t hesitate to email me: @ [email protected]
