
A developer of the IGF-nominated game Pugs Luv Beats explains how music technology and theory was married to cute and colorful gameplay to create a surprising blend of avant-garde musicianship and accessible fun.

August 1, 2012

Author: Yann Seznec


People often ask me how I got into making games. The question still surprises me, since I don't really see myself as a game maker; my background is as a musician and performer. But since founding Lucky Frame four years ago, after achieving a small degree of fame (or notoriety) with my Wii remote music-controlling software, I have found myself and my company identifying more and more with the indie game scene, which is absolutely great.

It's brilliant, because as a musician I see an enormous amount of groundbreaking work and exciting potential in the world of interactive audio and music in games. As a musician friend of mine once pointed out to me, in many ways the most innovative things happening right now in the music world are not happening in music at all, but in music software. I have no doubt that if Bach or Coltrane or Nancarrow were alive today they would be designing generative music systems or programming new Monome patches.

This isn't a new or revolutionary thing to say. I would argue that this shift towards technology as a creative musical tool began long ago, with composers like the Russolo brothers, who built their own insane instruments because they wanted to make cacophonous noises, and continued with Daphne Oram and her incredible Oramics machine, which let her draw her compositions on film.

Conlon Nancarrow, whom I mentioned above, is another fascinating example of a composer who turned to technology. When his compositions proved too complex for musicians to play, he began punching holes in piano rolls by hand and running them through player pianos. This let him create enormously complex music that would otherwise never have been heard (and also makes him perhaps the first person to program music).

This trend has perhaps been accelerated in recent times due to the incredible explosion of media technology in the past 20 years. Whilst super-high-end recording and production remains expensive, it is now possible for a huge population of people to record, mix, compose, and produce high quality sound at an extremely low price.

You want a synthesizer in 1980? Well, you pay $1,300 for an ARP. Now you are ridiculously spoilt for choice when it comes to free software synths (and parts are far cheaper if you want to build a hardware one!). The consequence is that almost any sound you can imagine can be created quickly and easily, probably with free software.


Another huge revolution from a composition point of view, as Matthew Herbert often points out, is that whilst in the past composers would be forced to imitate something (Beethoven writing a flute part to emulate a nightingale, Cootie Williams' talking trumpet), nowadays a composer can go out and record a bird for his piece, or use a recording of someone talking.

This presents a huge conundrum for composers and musicians: if everything can be done, it takes some of the fun out of it. I think that's why many composers and musicians, particularly of the electronic/digital variety, are becoming more and more interested in interface and process. How is the music made, and how does that affect the end result? That question has been the philosophical focus of most of my work over the past few years, leading to ridiculous appearances on the UK reality show Dragons' Den and, more recently, to experiments using live mushrooms to control electromechanical instruments.

It was of course only a matter of time before I became interested in using games for music, since video games are perhaps the most powerful interactive interfaces around right now. Why not try and use the power of game interaction to generate music?

These are exciting times for audio and music people in the game world. The trend of Guitar Hero-style games has perhaps faded, which I see as a very good thing. While they certainly have their place (and I'm sure they will come back), those types of games revolve around players trying to recreate existing music with as much accuracy as possible (often mediated through the interface of a strange plastic representation of an instrument).

This creates what is of course a brilliantly compelling game design, but is not very exciting from an audio or music design and development standpoint. As a side note, I see an interesting parallel between this type of gaming and the old-fashioned music conservatory style of teaching music, in terms of focusing on accuracy rather than fun, musicality, or creativity.

That was our starting point for making Pugs Luv Beats, our recent release for iOS, which was (ahem) nominated for an IGF Excellence in Audio award. We wanted to make a music game that was focused on music creation, and which was powered by the generation of audio, rather than forcing the user to see music as a challenge.

Our first stab at this was a space shooter drum machine that Jon Brodsky, the Lucky Frame coder/designer, prototyped a while back. Codenamed Space Hero, it was a system for setting up a series of enemies, which you then had to destroy with your spaceship. When an enemy arrived on screen it triggered a drum sound, and when it was destroyed it made a bass sound, so the whole thing was basically an editable, playable drum machine.
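To make the idea concrete, here is a hypothetical Python model of a Space Hero-style loop; it is an illustration, not the prototype's code. An editable pattern of enemy arrivals acts as the drum part, and the moments the player destroys enemies add bass hits. `Enemy`, `run_loop`, and the sound names are invented for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Enemy:
    step: int   # loop step at which the enemy arrives on screen
    drum: str   # drum sound fired on arrival (hypothetical names)


def run_loop(pattern, destroyed_at, steps=8):
    """Return the (step, sound) events for one pass through the loop.

    pattern: the editable "drum pattern", a list of Enemy objects.
    destroyed_at: maps an enemy's index in `pattern` to the step at which
    the player destroys it; each destruction adds a "bass" hit.
    Destroy steps are not validated against arrival steps in this sketch.
    """
    events = []
    for step in range(steps):
        for i, enemy in enumerate(pattern):
            if enemy.step == step:
                events.append((step, enemy.drum))
            if destroyed_at.get(i) == step:
                events.append((step, "bass"))
    return events


pattern = [Enemy(0, "kick"), Enemy(2, "snare"), Enemy(4, "kick"), Enemy(6, "snare")]
print(run_loop(pattern, {0: 1, 2: 5}))
# [(0, 'kick'), (1, 'bass'), (2, 'snare'), (4, 'kick'), (5, 'bass'), (6, 'snare')]
```

Editing `pattern` rewrites the drum part, while playing differently (a different `destroyed_at`) rewrites the bass part, which is what makes such a system both editable and playable.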

This prototype proved to us that game design -- or at least interacting with a game -- could in many ways be seen as analogous to music performance and composition. Both are essentially based around a series of choices, and both in many cases involve some sort of start, end, and cycle.

As a counterpoint teacher once explained to me, once you have written a note of music, you only ever have four choices. You can play that note again, you can play that note again differently, you can change the note, or you can not play any note. Similar sets of choices inhabit the world of game design -- and similar techniques for simplifying the process as well. One particularly good example is the technique used in the writing of a fugue, which involves taking one melody and overlapping it in varying ways. Check out this video to see a visualization of a Bach fugue.

You can clearly see the first melodic line play through once; then, as it starts to develop, the same melodic line is played underneath the variations. This happens several more times throughout the piece.
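The overlapping idea is easy to sketch in code. This toy Python model takes one melody (a made-up subject, as MIDI note numbers, one per beat) and has it enter in four voices, each delayed and transposed. Entries here arrive every four beats so they overlap, which is closer to a stretto than to a strict fugal exposition; real fugues also adjust the answer and add countersubjects, which this sketch ignores. `SUBJECT` and `imitative_entries` are hypothetical names.

```python
SUBJECT = [60, 62, 64, 65, 67, 65, 64, 62]  # hypothetical subject, one note per beat


def imitative_entries(subject, entries):
    """Lay the same subject into several voices.

    entries: one (start_beat, transposition_in_semitones) pair per voice.
    Returns one list per voice, with None where that voice is silent.
    """
    length = max(start for start, _ in entries) + len(subject)
    voices = []
    for start, shift in entries:
        voice = [None] * length
        for i, note in enumerate(subject):
            voice[start + i] = note + shift
        voices.append(voice)
    return voices


# Subject, then entries a fifth up, an octave down, and a fourth down,
# each starting four beats after the previous one so they overlap.
voices = imitative_entries(SUBJECT, [(0, 0), (4, 7), (8, -12), (12, -5)])
for voice in voices:
    print(" ".join("--" if n is None else str(n) for n in voice))
```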

I always like thinking of the four fugal melodies as being like four different characters exploring the same space, perhaps finding different power-ups that change their speed, double their power, and so on. There are many other comparisons that could be made -- for one thing, that Bach visualization would make a pretty cool level builder for a platform game (file under: Future Game Ideas...)

Pugs Luv Beats is the first in a series of music games that we are making that explores this philosophy. We are trying to make music games where both the music and the game aspects are on equal footing -- playing one will generate the other, and vice versa. In other words, our aim is to use compositional techniques to make games, and games to make music.

In order to make this happen, we needed to create both gameplay that lends itself to creative exploration and a music generation system that provides complex, generative, but reproducible audio. Each of these qualities is important for different reasons.

Complexity is perhaps the most obvious -- it is the idea that actions in the game which create music must also have enough depth and offer enough value that repetition will not get old. This is an odd science, because on one level, music is based on repetition and patterns, yet in games a repetitive sound can destroy an illusion -- or at least become annoying.

Additionally, the musical complexity must be somewhat limited, or else the audience will lose the connection between the action and the musical result. To see the tension, consider a tile that triggers a sound every time the player's character traverses it: that could get old very quickly.

However, if that tile emits a different pitch of the same sound each time, it will add a layer of musical complexity. The trade-off is a decrease in the connection between the action and the sound -- a balance needs to be struck!
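A minimal Python sketch of that trade-off, assuming a sample-based tile: the same sample is replayed at a pitch drawn from a small set of semitone offsets, converted to a playback rate with the equal-temperament ratio 2^(n/12). The `PitchedTile` class and its offsets are hypothetical, not taken from the game.

```python
import random


class PitchedTile:
    """A tile that replays one sample at a varying pitch (hypothetical)."""

    def __init__(self, sample, semitones=(0, 3, 5, 7), seed=None):
        self.sample = sample
        self.semitones = semitones       # allowed offsets from the base pitch
        self.rng = random.Random(seed)

    def trigger(self):
        """Return (sample, playback_rate) for one traversal of the tile.

        Shifting by n semitones means playing the sample back at
        2 ** (n / 12) times its original speed (which also shortens it).
        """
        n = self.rng.choice(self.semitones)
        return self.sample, 2 ** (n / 12)


tile = PitchedTile("beet_pop.wav", seed=1)
print([round(tile.trigger()[1], 3) for _ in range(4)])
```

The size of `semitones` is the dial: a single offset gives plain repetition with a perfect link to the action, while a larger set adds variety at the cost of that link.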

Generative, or procedural, audio is a bit of a buzz phrase, and there are a number of different definitions floating around the internet. Thinking of it in terms of generative music frames things a bit differently, bringing it closer to the world of 20th century classical composers like Xenakis and John Cage (and, arguably, jazz composers like Charles Mingus).

In that world, the concentration can be on setting up a system within which a performer can explore and generate their own music. In many ways, it is about creating constraints and borders, a framework that allows an infinitely variable set of musical possibilities.

For example, in John Cage's pipe organ piece As Slow As Possible, the actual tempo it is meant to be played at is left undefined -- meaning performance times have varied between 20 minutes and 639 years. Cage's Fontana Mix takes this even further, as even the instrumentation is defined as "any number of tracks of magnetic tape, or for any number of players, any kind and number of instruments". The score is a series of transparencies and paper, which when layered create patterns that direct the players. A potentially infinite number of patterns can be created.

This may all seem rather academic, but it is important to note the basic parallels between this type of music composition (often called non-deterministic, or parametric) and games. Games and sports, of course, are the epitome of setting up a system and letting the players make their own way. Each play of the game will be slightly different, and in some cases different playthroughs will result in barely recognizable endings. It's no accident that many of these 20th century composers were fascinated by game theory.

Finally, reproducibility is essential for rewarding the user. As described above, music generated in a game needs depth, and the user needs to feel agency over its creation, but for something to be a game there also needs to be some element of mystery. That mystery must be reproducible, however, or the user will not be able to understand the patterns and play with them, which is a major part of turning an object that makes noise into a musical instrument.

So how did we put all of these things into practice? Well, we made a game with dogs wearing hats. Pugs Luv Beats is a universal iOS music composition game that we released in December 2011. In the game, the player controls an alien breed of pugs. Once the masters of a wondrous and highly advanced civilization, these pugs are the victims of their own greed. They loved nothing more than to collect beats, which they cultivated with their special brand of "luv". But an ill-advised scheme to grow the biggest beat of all time spun wildly out of control, and their home planet was destroyed. The player must help the pugs to grow more beats so they can rediscover new planets, build houses, and recover their lost technology.

We are very proud of the game, particularly since we are a tiny operation of three people. Jon Brodsky handled all of the coding, using a combination of openFrameworks, Lua, and a custom-built game engine called Blud. I did all of the audio using libpd and Pure Data, which I'll get to shortly. Sean McIlroy is the team's artist and designer, and he did an amazing job developing the cute, original, and oddly poignant pug characters, as well as the whole game world and UI. This was an enormously important aspect: as you'll soon see, Pugs Luv Beats is in many ways a music sequencer disguised as a game, but we wanted to make sure that it looked nothing like music software.

The gameplay itself focuses on the pugs. You start out with a single pug living in a house. You need to make the pug harvest beets, which you do simply by tapping out a path for the pug to run. When the pug hits a beet, it creates a musical beat (ha!) and your beet count rises. More beets let you buy another house, with another pug. Buying more pug houses lets you uncover more of the planet, and thus more beets. Collecting enough beets will also let you buy a brand new planet and start over again.

As you expand your universe, you will discover new terrains -- these new terrains will make different sounds, letting you grow your musical palette. However, these terrains will slow your pugs down -- that's why you need to find and equip your pugs with hats and costumes. You want to go faster on snow? Find a Santa hat! How about water? Shark fin!
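Here is a toy Python model of the loop described in the last two paragraphs, offered as an illustration rather than the game's code. Lava/electric guitar, sand/santoor, the Santa hat for snow, and the shark fin for water come from the article; the grass terrain, the snow and water sounds, and every speed value are invented placeholders, as are the names `TERRAIN`, `HATS`, and `run_path`.

```python
# terrain -> (sound, speed multiplier when the pug lacks the matching hat)
TERRAIN = {
    "grass": ("beet pop", 1.0),        # placeholder default terrain
    "lava": ("electric guitar", 0.5),
    "sand": ("santoor", 0.5),
    "snow": ("snow sound", 0.5),       # placeholder sound
    "water": ("water sound", 0.25),    # placeholder sound
}

# hat -> terrain on which it restores full speed
HATS = {"santa hat": "snow", "shark fin": "water"}


def run_path(path, hat=None):
    """Walk a tapped path of (terrain, has_beet) tiles.

    Returns (events, total_time, beets), where each event is
    (time, sound) for a beet harvested on that tile.
    """
    events, time, beets = [], 0.0, 0
    for terrain, has_beet in path:
        sound, slow = TERRAIN[terrain]
        speed = 1.0 if HATS.get(hat) == terrain else slow
        time += 1.0 / speed            # one tile takes 1/speed time units
        if has_beet:
            beets += 1
            events.append((time, sound))
    return events, time, beets


path = [("grass", True), ("snow", True), ("snow", False)]
print(run_path(path))               # slowed on snow: total time 5.0
print(run_path(path, "santa hat"))  # full speed: total time 3.0
```

Because harvest times depend on terrain speed, equipping a hat changes the rhythm of the resulting beats as well as how fast the pug collects them.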

This approach to the game design and structure means the game is effectively endless -- even once you have discovered all of the hats and costumes, you can continue to explore the universe and find new combinations of terrains. Each planet is also a separate musical world, so you can always start from scratch, or go back to your previous planets to remix and recompose -- all using costumed pugs.

Behind our aesthetic styling and collection game mechanic there is a generative music engine that puts all of this theory and design into practice. Let's delve into it a bit.

We decided pretty early on that we wanted to use Pure Data to handle all of the audio. This was made possible thanks to the hard work of Peter Brinkmann and Peter Kirn, among others, who put together libpd (go buy the new book!) Pure Data is a free and open source graphical programming interface designed mostly for audio and music geeks who don't want to have to program everything by hand. I will go ahead and admit at this point that I fall into this category.

To expand on that -- I am not a coder, and in all likelihood I never will be. I have a really hard time with text on a screen, but a graphical representation of code makes it a lot easier for me to understand.

That was what led me to Max/MSP, a commercial graphical programming system that is very popular in the contemporary art and music world. I became rather adept at Max/MSP during my studies and used it to make the Wii Loop Machine, among other things.

Pure Data is effectively a free version of Max/MSP (there is a lot more to that story, but it is heroically uninteresting), so when we had the chance to integrate it into our projects we jumped at it.

Working with Pure Data provided several big advantages (beyond being free). First, it allowed us to be far more agile in our development. We are a tiny three-person team, with only one coder. Using Pure Data allowed me to handle all of the audio development, including previewing, prototyping, experimentation, and much more. I could play with the audio engine and make really complex tweaks without ever bothering Jon.

Second, we were able to take advantage of 20 years of audio research and development, rather than having to build everything ourselves. Want a sample-accurate wavetable playback system? We didn't have to build one; it comes with Pure Data. How about a reverb? A quick Google search turns up dozens of reverbs made in Pure Data by researchers and developers around the world, all easily reconfigured and adapted to our project.

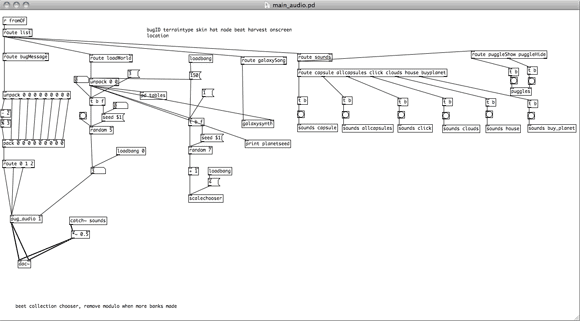

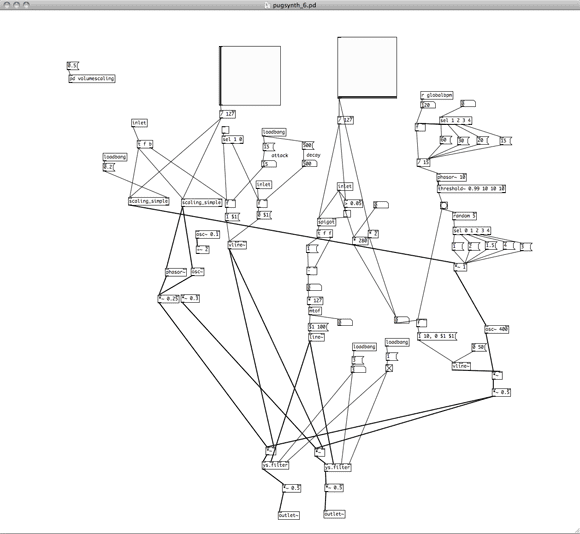


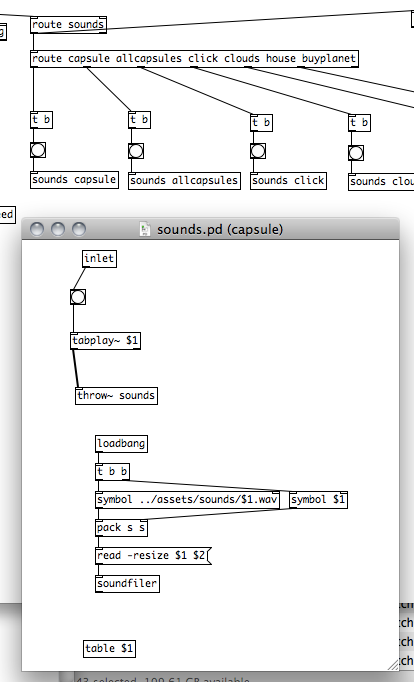

To explain a bit about how it works: the entire sound engine for Pugs Luv Beats is contained within this patch. It receives messages from the Lua/openFrameworks code that Jon put together and sends those messages around the subpatches to generate and modify sounds. The simplest thing it does is trigger sounds for things like menu actions, building, and so on. On the far right you can see the object "route sounds", which handles all of these standard sounds. Each "sounds" object contains a little subpatch that plays its sound when triggered.
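For readers who haven't used Pd, the dispatching can be imitated in a few lines of Python. This is a minimal sketch of how a Pd [route] object behaves: if the first atom of a message matches an argument, that atom is stripped and the rest goes out the matching outlet; anything unmatched leaves unchanged through the rightmost (reject) outlet. The `Route` class, `play_sound`, and message names such as `menu_click` are hypothetical; the article doesn't document the actual messages passed between the Lua code and the patch.

```python
from typing import Callable, Dict


class Route:
    """Mimics Pd's [route]: match the first atom, strip it, and pass the
    remainder to the matching outlet; unmatched messages go to `reject`."""

    def __init__(self, outlets: Dict[str, Callable[[list], None]],
                 reject: Callable[[list], None]):
        self.outlets = outlets
        self.reject = reject

    def __call__(self, message: list) -> None:
        head, rest = message[0], message[1:]
        if head in self.outlets:
            self.outlets[head](rest)
        else:
            self.reject(message)


played = []


def play_sound(args):
    # Stand-in for a "sounds" subpatch: just records which sound fired.
    played.append(args[0])


unhandled = []
engine = Route({"sounds": play_sound}, unhandled.append)

engine(["sounds", "menu_click"])
engine(["sounds", "build_house"])
engine(["tempo", 120])
print(played, unhandled)
```

Adding a new category means adding an entry to the dictionary (in Pd, a new argument to [route]) without touching the code that sends the messages, which is the kind of independence from the game code described in the next paragraph.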

While it may seem needlessly complicated to use this system just for playing a sound, this does offer a lot more flexibility, and more importantly allows me (the sound designer) to change anything sound-related without bothering Jon (who is in all likelihood doing something insanely complicated to do with multithreading or whatever).

A slightly more complex section of the engine is what we called the Pugglesynth. The challenge here was to create a sound library for Mr. Puggles, the friendly pug who guides you through the tutorial. We wanted him to make some noise every time he popped up, but we didn't want to use any more sound files, and it needed to be funny and charming without being repetitive. I therefore built a two-oscillator subtractive synth: every time he appears, a series of random numbers is generated to control the pitch and filter frequency. Every time he disappears, however, the same two notes are played. This gives him a recognizable character without making him annoying.
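A rough Python sketch of the Pugglesynth idea, showing the logic as code rather than as a patch. It assumes two slightly detuned naive sawtooth oscillators mixed into a one-pole low-pass filter; the note range, cutoff range, detune amount, and the two "goodbye" notes are all invented, and the real patch may be built quite differently. It returns raw samples as a list of floats rather than playing them.

```python
import math
import random

SR = 44100  # sample rate in Hz (assumed)


def mtof(note):
    """MIDI note number -> frequency in Hz (A4 = 69 = 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


def saw(freq, n):
    """Naive (non-band-limited) sawtooth in [-1, 1)."""
    return [2.0 * ((freq * i / SR) % 1.0) - 1.0 for i in range(n)]


def lowpass(signal, cutoff):
    """One-pole low-pass filter: the 'subtractive' part of the synth."""
    a = math.exp(-2.0 * math.pi * cutoff / SR)
    out, y = [], 0.0
    for x in signal:
        y = (1.0 - a) * x + a * y
        out.append(y)
    return out


def render(note, cutoff, dur=0.15, detune=1.007):
    """Two slightly detuned saw oscillators, mixed, then filtered."""
    n = int(dur * SR)
    f = mtof(note)
    mix = [0.5 * (p + q) for p, q in zip(saw(f, n), saw(f * detune, n))]
    return lowpass(mix, cutoff)


def puggle_appear(rng):
    """New random pitch and cutoff each time Mr. Puggles pops up."""
    note = rng.randint(60, 72)             # hypothetical pitch range
    cutoff = rng.uniform(500.0, 4000.0)    # hypothetical cutoff range (Hz)
    return render(note, cutoff)


EXIT_NOTES = (67, 60)  # hypothetical fixed two-note "goodbye"


def puggle_disappear(cutoff=2000.0):
    """Always the same two notes, so his exit is recognizable."""
    return [s for note in EXIT_NOTES for s in render(note, cutoff)]


hello = puggle_appear(random.Random())  # differs from call to call
bye = puggle_disappear()                # identical on every call
```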

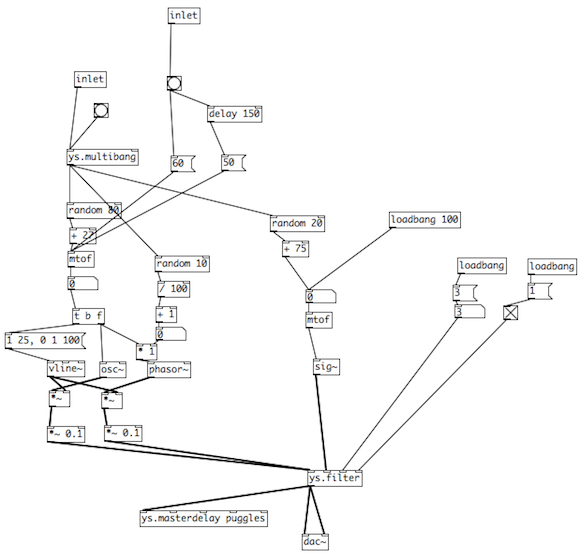

Speaking of synthesizers, the galaxy synth in Pugs Luv Beats was so popular that we actually decided to spin it off into its own application altogether -- Pug Synth! There's nothing like an x-y pad synthesizer to get everyone excited. Here's a snapshot of the patch for the Swamp synth, which features a dubstep-style filter whose rate is linked to the x axis, along with the pitch.


Rather than being smoothly linked, however, the rate is locked into multiples of master BPM, which is also controlling a drum machine. This means your ripping pugstep is always in time with your beats. It also meant we were able to build some wicked generative drum machines, and add variations really easily, while keeping everything within a strong musical framework.
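One way to express that locking, as a hypothetical Python sketch rather than the Swamp synth patch itself: the pad's x position picks one entry from a fixed list of beat divisions, so the wobble (filter LFO) rate jumps between whole multiples of the beat rate instead of sweeping smoothly, while pitch still follows x continuously. `DIVISIONS`, `wobble_rate_hz`, `pitch_hz`, and the pitch range are assumptions.

```python
DIVISIONS = (1, 2, 3, 4, 6, 8)  # hypothetical: wobbles per beat


def wobble_rate_hz(x, bpm, divisions=DIVISIONS):
    """Map pad x in [0, 1] to a filter-LFO rate locked to the master tempo."""
    x = min(max(x, 0.0), 1.0)
    index = min(int(x * len(divisions)), len(divisions) - 1)
    return (bpm / 60.0) * divisions[index]


def pitch_hz(x, low=55.0, octaves=2):
    """x also sweeps the oscillator pitch, continuously and exponentially."""
    return low * 2 ** (x * octaves)


for x in (0.0, 0.3, 0.5, 0.9):
    print(x, wobble_rate_hz(x, bpm=120), round(pitch_hz(x), 1))
```

Because every rate is a whole number of wobbles per beat, the wobble stays aligned with a drum machine running at the same `bpm`.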

This approach, of using certain master parameters to lock everything together, is something we first employed in Pugs Luv Beats. One of the big challenges of designing a creative music game is to make sure things are controlled enough to not sound horrible, but free enough that the player feels real agency over the musical output. This relates strongly to the "complexity" and "reproducibility" that I mentioned before, and there was no single fix.

What we ended up doing was making sure that whenever music was being generated, certain global parameters were set and unchangeable, including tempo and key. The former is quite straightforward, but the latter required a more complex approach -- hold on to your hats!

One of the elements of the audio engine I'm most proud of is how it handles tonality. There are a bunch of melodic sound libraries in the game, linked to different terrains that the pugs run across. Lava triggers electric guitar; sand triggers an Iranian santoor, etc. The note that is played relates to the position of the tile on the planet.

This essentially means each planet is a giant MPC, or sampling keyboard -- a pug landing on a tile will always generate a certain sound at a certain pitch.

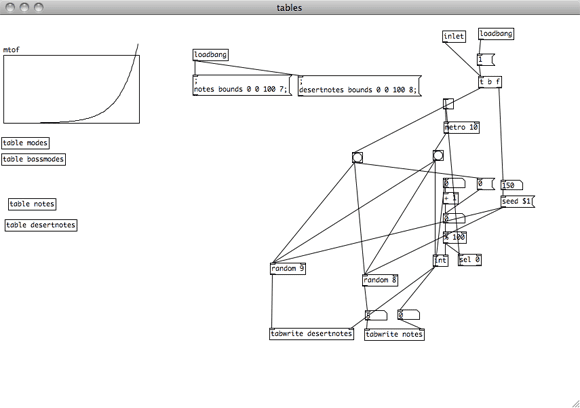

This sounds very simple, but accomplishing it was relatively tricky, because each planet is different. There are millions of potential planets in the game, and it would be impossible to have a database for each one. Instead, we are using a single identifying number for each planet to generate the tables that control the notes.

The planet's ID is used as a random number seed, generating a lookup table that assigns musical note values to every tile on the world. This is a practical application of the theories I outlined above -- it means that the planet will have a complexity in the sense that the arrangement of musical notes will be different on every planet, however it will be reproducible because every time you return to a specific planet, the arrangement of notes will be the same.
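A minimal Python sketch of the seeding idea, assuming tiles are numbered and that the table stores scale degrees (to be mapped to actual notes later) rather than absolute pitches; `note_table` and its parameters are hypothetical.

```python
import random


def note_table(planet_id, num_tiles, num_degrees=7, octaves=2):
    """Assign a scale degree to every tile, seeded by the planet ID.

    A private Random instance keeps the table independent of any other
    randomness in the game: same ID, same table, on every visit.
    """
    rng = random.Random(planet_id)
    return [rng.randrange(num_degrees * octaves) for _ in range(num_tiles)]


first_visit = note_table(planet_id=12345, num_tiles=64)
return_visit = note_table(planet_id=12345, num_tiles=64)
print(first_visit == return_visit)  # True
```

Reproducibility here depends on the random number generator never changing. Python only guarantees that `random()` keeps producing the same sequence for a given seed, not helpers like `randrange`, so a shipping game would probably want a generator whose algorithm it controls.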


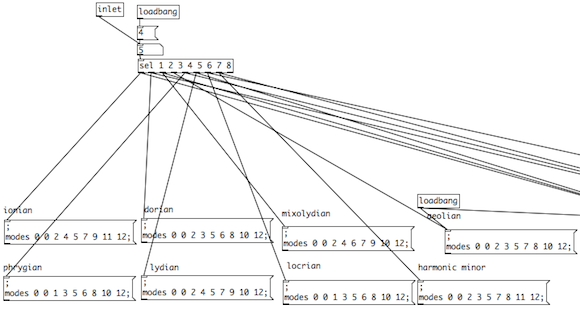

However, the music theory geek in me was not satisfied. I wanted to make sure that every planet would have a musical character. This way, even if you have two planets with exactly the same terrain, they will inherently sound different. We accomplished this by giving each planet a core tempo as well as a musical mode, both selected using the planet's ID as a random number seed.

The mode (or scale) would therefore be applied to all of the melodic notes played on that planet. You might get a traditional major scale, or a traditional harmonic minor scale, or a very strange (to western ears) Locrian. To make sure everything worked together, the galaxy synth is also using the same scale lookup table, so you can jam along in the same key as your planet.
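A Python sketch of that per-planet character, again under assumptions: the tempo list and the fixed tonic (middle C, MIDI 60) are invented, and only the three modes named above are included. `degree_to_midi` turns a tile's scale degree into a note, and `quantize_to_mode` shows one way a free-pitched synth could be snapped to the same scale; all function names are hypothetical.

```python
import random

# Semitone offsets from the tonic for the three modes named above.
MODES = {
    "major": [0, 2, 4, 5, 7, 9, 11],
    "harmonic minor": [0, 2, 3, 5, 7, 8, 11],
    "locrian": [0, 1, 3, 5, 6, 8, 10],
}
TEMPOS = (90, 100, 110, 120, 130)  # hypothetical core tempos (BPM)


def planet_character(planet_id):
    """Pick a mode and core tempo, reproducibly, from the planet ID."""
    rng = random.Random(planet_id)
    return rng.choice(sorted(MODES)), rng.choice(TEMPOS)


def degree_to_midi(degree, mode, tonic=60):
    """Map a tile's scale degree to a MIDI note in the planet's mode."""
    scale = MODES[mode]
    octave, step = divmod(degree, len(scale))
    return tonic + 12 * octave + scale[step]


def quantize_to_mode(midi_note, mode, tonic=60):
    """Snap a free pitch (e.g. from a synth pad) to the nearest note of the
    mode; ties go to the lower note."""
    scale = MODES[mode] + [12]             # include the next octave's tonic
    octave, pc = divmod(round(midi_note) - tonic, 12)
    nearest = min(scale, key=lambda s: abs(s - pc))
    return tonic + 12 * octave + nearest


mode, bpm = planet_character(12345)
melody = [degree_to_midi(d, mode) for d in (0, 2, 4, 7)]
print(mode, bpm, melody, quantize_to_mode(66.4, mode))
```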

These things give a little glimpse into how we were able to build a really powerful generative music engine for an iOS music game that incorporates some of our theories about interactive music that we've been developing over the past few years. We are now developing a few more music games that try to apply these theories in different ways, using different types of games.

It can sometimes be hard to see the connection between theory and practice, but I hope this article has given some insight into the thought processes that go on at Lucky Frame. We work on a wide variety of projects, and from the outside they may seem rather unrelated. Currently, for example, we are making iOS games like Pugs Luv Beats, and I am also on tour performing with Matthew Herbert's One Pig Live show, for which we built an interactive musical pigsty.

What links these projects together? Our philosophical interest is really on interaction and interface, particularly with music. This can mean interaction between a player and a game, or a performer and an audience, or any number of other dynamics. Ultimately we want to deepen understanding and engagement, leading to knowledge and fun. That's a pretty great reason to make games.
