
Audio programmer Andrew Clark (Full Auto series, Pseudo Interactive) attempts to define adaptive music for games, using examples ranging from early efforts via LucasArts' iMUSE engine through modern games such as the Rainbow Six series, in this Gamasutra feature.

This section didn’t previously exist. When I originally wrote this piece, I assumed that readers would already know what adaptive music was. I also assumed that they would have some familiarity with the kinds of game implementations that are out there. So, in the introduction, I jumped right into exploring the value of a formal definition, without first introducing the basic idea of adaptive music itself. And, in the body of the article, I focused exclusively on non-gaming examples – figuring the reader would be less familiar with (and therefore much more interested in) these.

As it turned out, the original approach didn’t work at all for non-expert readers. I got some preliminary feedback like: “Wow, interesting piece. But are there any, you know, video game examples of adaptive music?”

So! If you don’t already know what adaptive music is, or how it’s used in games, this brand-spanking-new section is for you. On the other hand, if you are an expert reader, you may want to skip ahead.

“Adaptive music” is a game industry term that seems to have entered general usage in the last ten years or so. (I don’t know who first coined the term.) It replaces the term “interactive music”, which was more immediately obvious, but had some accuracy issues.

If I started talking about “interactive music”, you’d probably immediately know what I was talking about… so actually, let’s start there. (In a minute, I’ll explain why we don’t call it that any more. For now, I’ll use “interactive”, since it’s a bit more user-friendly.)

Interactive music is the game industry’s answer to the Hollywood film score.

At the movies, music has become a powerful tool for clarifying and emphasizing emotional contexts. It complements and reinforces onscreen action and beats. It can add or resolve tension, or create anticipation. It can subtly (or not so subtly) associate intangible aural qualities with different characters, while evoking the spirit of times long past and places long vanished (or yet to come… or never to be).

It would be really cool if game music could complement onscreen action with the same kind of subtlety, depth, and expression. The complication is that, in games, the timing, pacing, contexts, and outcomes of the onscreen action are constantly in flux, depending on the actions of the player.

Is the player winning? How many orcs are left? Is it important that the player just ran out of painkillers? Did the player find the AK47 yet, or are the dragons going to eat the bowling ball before her plate-mail is repaired? How long is this battle going to last? Are the tides turning? Was that hit significant? Or… did the battle start at all, or did the player sneak past with the Cloaking Cloak of Cloaking +2?

Most importantly: how can a game composer score a scene intelligently and compellingly, when she doesn’t know what is going to happen, when?

When a film composer starts working, she sits down with a finished, fixed-length, edited scene. She can custom tailor a musical accompaniment to fit the film perfectly, with subtlety, elegance, and grace. (One hopes.) Linear music is composed to match the linear scene exactly.

Interactive music in games attempts to score non-linear, indeterminate scenes with non-linear music. It uses game code and data to track changing game contexts on the fly, and to cue appropriate score responses. It also has to track the current music context, in order to avoid ugly or musically inappropriate transitions or pacing. It’s an interdisciplinary challenge, since game logic synchronization is squarely in the programmer’s domain, while music logic is best left to the composers.
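To make the two tracking jobs concrete, here is a minimal Python sketch. It assumes a hypothetical engine that knows the current tempo and meter, maps game states (the state and cue names are invented for illustration) to music cues, and defers any requested cue change to the next bar line so the music never cuts off mid-phrase. Real engines such as iMUSE are far more elaborate; this only illustrates the division of labor between game logic and music logic.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical mapping from game context to a music cue name.
CUE_FOR_STATE = {
    "explore": "ambient_loop",
    "combat": "battle_loop",
    "victory": "victory_stinger",
}


@dataclass
class AdaptiveMusicPlayer:
    tempo_bpm: float = 120.0
    beats_per_bar: int = 4
    current_cue: str = "ambient_loop"
    pending_cue: Optional[str] = None
    switch_time: Optional[float] = None

    def bar_length(self) -> float:
        """Length of one bar in seconds."""
        return self.beats_per_bar * 60.0 / self.tempo_bpm

    def next_bar_line(self, now: float) -> float:
        """Time of the first bar line strictly after `now` (music started at t=0)."""
        bar = self.bar_length()
        return (int(now // bar) + 1) * bar

    def on_game_state(self, state: str, now: float) -> None:
        """Game side: request the cue for a new context; the music side decides when."""
        cue = CUE_FOR_STATE[state]
        if cue == self.current_cue:
            # Context returned to what is already playing: cancel any pending switch.
            self.pending_cue, self.switch_time = None, None
            return
        self.pending_cue = cue
        self.switch_time = self.next_bar_line(now)

    def update(self, now: float) -> str:
        """Called every frame; performs a pending switch once its bar line arrives."""
        if self.pending_cue is not None and now >= self.switch_time:
            self.current_cue = self.pending_cue
            self.pending_cue, self.switch_time = None, None
        return self.current_cue
```

At 120 bpm in 4/4 a bar lasts 2.0 seconds, so a combat request at t = 3.1 s is held until the bar line at 4.0 s; if the player slips back out of combat before then, the pending change is simply cancelled.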

In most cases, “interactive” music falsely implies direct user interaction with the music. (In some games, this is in fact the case – as in the musical game-play of “PaRappa the Rapper” or “Guitar Hero”.) However, in general, the user should really be concerned with interacting with the game. The music system supports the dramatic action by adapting intuitively and discreetly in order to remain contextually appropriate. Hence “adaptive” music.

The following survey of examples is by no means comprehensive – it barely scratches the surface. Nor should it be considered representative – the selection criteria were rather sketchy. (By and large these are adaptive music systems that have been presented at GDC lectures, with some arbitrary references to titles I happen to own and enjoy.) But some attempt was made to present a broad sample of important trends, technologies, and techniques.

The “X-Wing” (PC DOS) series, which debuted in 1993, featured MIDI versions of John Williams and John-Williams-esque orchestral music. LucasArts’ patented iMUSE music engine handled sophisticated run-time interactions between dramatic onscreen action and a database of music loops, cues, and transitions. (Evolving versions of iMUSE were also used on a number of later LucasArts projects.)

These Windows titles (released in 1997 and 1998) featured Microsoft Interactive Music Architecture (IMA) technology – a precursor to the (now deprecated) DirectMusic SDK. [3DSoundSurge01]

DirectMusic provided runtime musical alignment tools, and design-time tools for managing (and auditioning) adaptive music building blocks. Advanced functionality included runtime MIDI variation generation based on composer-designed templates (“Styles” and “Chordmaps”), and standardized methods for switching between context-sensitive content versions via “Groove Levels”. DirectMusic was fully supported in DirectX 7 through early versions of DirectX 9.
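DirectMusic's actual API is not reproduced here. As a rough, hypothetical illustration of the “Groove Level” idea, the sketch below assumes each pattern is tagged by the composer with a range of groove levels (1 to 100) it suits, and the game sets a single intensity number. The player picks among the qualifying patterns, with a seeded random generator providing variety. Pattern names and ranges are invented.

```python
import random
from typing import List, NamedTuple


class Pattern(NamedTuple):
    name: str
    groove_low: int   # lowest groove level this pattern suits (inclusive)
    groove_high: int  # highest groove level this pattern suits (inclusive)


# Hypothetical composer-authored patterns for one piece.
PATTERNS: List[Pattern] = [
    Pattern("sparse_pads", 1, 30),
    Pattern("pads_with_pulse", 20, 50),
    Pattern("full_groove_a", 45, 80),
    Pattern("full_groove_b", 45, 80),
    Pattern("all_out", 75, 100),
]


def patterns_for_level(level: int) -> List[Pattern]:
    """All patterns whose groove range includes the requested level."""
    return [p for p in PATTERNS if p.groove_low <= level <= p.groove_high]


def choose_pattern(level: int, rng: random.Random) -> Pattern:
    """Pick one qualifying pattern; overlapping ranges give the composer variety."""
    candidates = patterns_for_level(level)
    if not candidates:
        raise ValueError(f"no pattern covers groove level {level}")
    return rng.choice(candidates)
```

Overlapping ranges are the design choice worth noticing: they let the game nudge intensity up or down without forcing an audible jump between two fixed versions.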

The Mark of Kri

In a 2003 GDC presentation, Chuck Doud described custom adaptive music technology and techniques that were developed specifically for this PS2 title. Doud stressed that constant, diligent communication and coordination between programmers, designers, and composers were essential for the interdisciplinary project. The team found that an emphasis on percussive elements and irregular meters allowed for some startlingly fast transitions to remain musically consistent and cohesive. [Doud03] The examples he showed demonstrated some incredibly tight synchronization to on-screen state changes.

This multi-platform 2002 release was able to mine its adaptive building blocks from hours of recorded orchestral cues for the film of the same name. It is a very interesting case, in which big budget music by a big Hollywood composer (Howard Shore) receives a very ambitious interactive video game treatment. [Boyd06]

This 2003 Xbox title is a good example of a contrasting approach to adaptive game music, one that could more properly be described as adaptive music editing than adaptive music composition. In “Rainbow Six 3”, in-game music is used much more sparingly than in the other examples. The title avoids the wall-to-wall approach to in-game music, and thereby sidesteps the challenge of changing musical forms on the fly. Cues are reserved for a small number of special dramatic contexts, which are, in general, designed not to overlap. A good percentage of game-play is not scored, relying instead on immersive sim sound design elements.
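The sparing approach needs much less machinery. A minimal sketch, assuming a hypothetical set of designer-flagged dramatic contexts (the names and cue lengths below are invented): a cue starts only for a flagged context, and any trigger that arrives while another cue is still playing is simply dropped rather than blended.

```python
from typing import Dict, Optional

# Hypothetical dramatic contexts that get a music cue, with cue lengths in seconds.
SCORED_CONTEXTS: Dict[str, float] = {
    "mission_start": 30.0,
    "hostage_rescued": 12.0,
    "mission_failed": 20.0,
}


class SparseCuePlayer:
    def __init__(self) -> None:
        self.playing: Optional[str] = None
        self.ends_at = 0.0

    def trigger(self, context: str, now: float) -> bool:
        """Start a cue if the context is scored and no cue is playing; else do nothing."""
        if context not in SCORED_CONTEXTS:
            return False  # unscored gameplay: leave it to sound design
        if self.playing is not None and now < self.ends_at:
            return False  # cues are designed not to overlap, so drop this one
        self.playing = context
        self.ends_at = now + SCORED_CONTEXTS[context]
        return True
```

Most of the time `trigger` returns `False`, which is the point: silence is the default state, and music is an event.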

…I know, I know: defining adaptive music sounds boring, pedantic, academic, theoretical, and of limited practical use. Yawn. Trust me, I was right there with ya. But! As it turns out, this particular exercise was a huge personal breakthrough for me in terms of my own understanding of the topic. So I thought I’d share. (Your mileage may vary.)

This article is aimed mostly at experienced game composers and audio programmers with actual practical adaptive music experience. In general, I don’t expect them to have had much spare time in their production schedules to spend on frivolous musings about the essential nature of the craft. (I know I didn’t.) On the other hand, I think they’ll find this brief journey extremely interesting. (I know I did.)

At the same time, the paper should be easily accessible to a general audience. There are no technical pre-requisites. The only real requirement is a more-than-casual curiosity about the field.

(Warning: gratuitous personal anecdote. Feel free to skip to the Purpose section.)

As far as I know, I’m one of very few people with the following skill set:

formal training in classical composition (BMus from the University of Toronto)

many years of indie music production experience

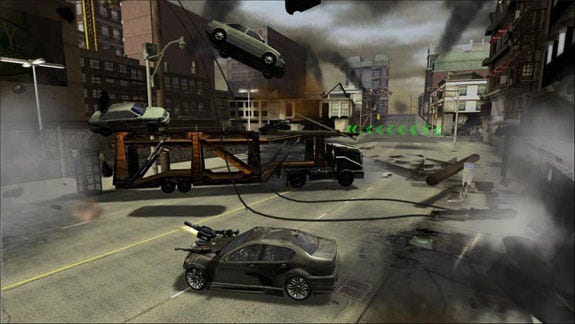

audio programmer credits on two “next gen” console titles (“Full Auto” Xbox 360, “Full Auto 2: Battlelines” PS3)


As such, I like to think that I have a fairly unique and advanced understanding of adaptive music. (Especially since it has been an obsession of mine for quite a while.)

This know-it-all attitude is kind of obnoxious in the game industry, considering I haven’t actually composed music for any (shipped) titles. (Although to be perfectly fair, most game composers haven’t coded adaptive music logic for any shipped titles.) For a while, I thought that academia might be a much better fit for me. (This is way back in aught-three and aught-four, ah reckon.)

I had visions of four-month-long summers of applied research and blissful publishing, all in the name of pure knowledge; holding forth on my favorite topic to a classroom of rapt pupils; tenure; inappropriate relationships with college co-eds; and prolonged legal battles fighting for my very career.

Sadly, getting back on track for an academic career after a few years “on the outside” proved to be a lot less easy than the college co-eds. Nonetheless, the attempt led to the following very interesting encounter.

When I started getting my applications for grad school together, I met with a music theory professor at my alma mater. Preliminary investigations seemed to indicate that Prof. Mark Sallmen would be the most likely candidate to supervise a research degree in my particular area of interest.

I have to admit, I kind of expected an enthusiastic explosion of interest from the music theory community. Finally, after years of analyzing A) obscure points of interest in centuries-old music or B) the 20th century’s enthusiastic deconstruction of all traditional notions of music, along comes An Exciting New Practical Technical Composition Challenge.

In my experience thus far – including interactions with the IASPM (the International Association for the Study of Popular Music) – game music is barely an annoying bleep on the radar of the serious academic music community. Whenever I bring the topic up in those circles I just end up feeling like a flake.

But I digress (as I am wont to do).

My meeting with Prof. Sallmen would prove to be a very interesting encounter, as it led to the following humbling experience.

I thought Prof. Sallmen would be delighted to finally have found a formally trained composer who was also a computer programmer. Now he could finally supervise some applied research in the fascinating emergent field of adaptive music theory. What he actually said was (ok, I’m paraphrasing here): “What the hell are you talking about?”

This was a very interesting moment for me because I suddenly realized that, in a very real sense, I didn’t know.

Sure I could give the usual examples that are typically used to describe adaptive music. I could compare and contrast the scoring of a linear film sequence versus a non-linear game encounter. But how was “adaptive” music different from 20th century composers’ “indeterminacy” and “aleatory” music experiments? (Sallmen’s questions.) Various jazz traditions? And so on. What was adaptive music? For all my annoying, know-it-all, deeper-interdisciplinary-perspective-than-thou attitude, I literally didn’t know what I was talking about.

Dr. Sallmen invited me to write a formal abstract introducing adaptive music as a field of study. I accepted and began my research, starting by trying to determine the exact scope of the term “adaptive music” itself.

The first thing that happened was that I started to feel a bit better, because apparently the game industry didn’t know what it was talking about either. Everywhere I looked, the term was described briefly in terms of examples. But nowhere was it actually defined.

The other thing that happened was that my little abstract feature-crept into a short paper, which eventually turned into the article that you find before you today.

What’s the point? Why bother? Why is a definition important?

Professional game composers and music programmers are faced daily with (LOTS of) immediate, practical concerns. Students and amateurs trying to break into the industry are frustrated by the difficulty of getting any applied experience scoring games. Calls for articles on game topics favor papers with practical, “how-to” solutions. (Cf. the Gamasutra.com Writers’ Guidelines.) Who has time for theoretical exploration?

A definition exercise challenges our assumptions about the craft, and offers fresh perspective. It scopes and frames the problem in a way that helps us start looking for underlying causes and real long-term solutions, getting us out of the mindset of temporary hack fixes and implementation-specific details. A deep understanding of the problem space is an invaluable asset in any discipline. In a way, a formal definition starts teaching us “how-to” think about a field.

“What is adaptive music?” is one of those questions like “What is music?” or “What is art?” Everyone takes for granted that they know what art is – until they show up to the first day of Art History 101 and their professor asks the class to define it.

As it turns out, it is almost impossible to define music without referencing preexisting notions of music. “Rhythmic patterns of notes” seems plausible at first… except that some cultures’ music doesn’t have any kind of concept of rhythm, and others’ don’t recognize pitch – let alone notes. “Organized patterns of sound in time” is better in the sense that it is less culture-specific. After all, organization is subjective. (For instance, the way that I like to organize my living space drives my wife mental. And vice versa.) On the other hand, “patterns” implies repetition, and some musical traditions are organized around strictly non-repetitive, organic growth. More importantly, the definition doesn’t differentiate music and, well, speech. Hmm.

To a certain extent, we simply know what music is because we grew up in a musical culture. And, in general, unless you’re into ethnomusicology or philosophy, that’s about all you need to know; a formal definition is not really that useful.

“Adaptive music” is different – a formal definition really is that useful. You did not grow up with an innate cultural understanding of this concept. If you want more than a superficial glimpse of its essential nature, you’re going to have to dig.

For me, the process of defining adaptive music was a door-opening “aha! moment”. It provided a solid foundation for further research and exploration, and led to a number of later “aha! moments”. It provided the basis for the identification of some easily-overlooked but important fundamental truths about the art of adaptive music, which will be the topic of future articles.

Most importantly, this knowledge has been of practical value in applied game production situations.

But as I said before, your mileage may vary.

This section proposes a formal definition of adaptive music in a couple of different formats.

Adaptive music is music in which a primary concern in its construction is a system for generating significantly different performance versions of a piece in response to a specified range of input parameters, where the exact timing and/or sequence and/or quantity and/or presence and/or values of input parameters are not predetermined, and where the desired output of the system is coherent and aesthetically satisfying within the musical tradition(s) selected by the composer.

For some reason I love the almost impenetrable density of the single-sentence, dictionary-style adaptive music definition. On the other hand, it has been pointed out to me that A) it’s almost impenetrably dense, B) it’s probably not even English, and C) for complex concepts, it can be a better idea to provide a bullet-point-style list of defining criteria. So, for those who prefer that kind of thing:

adaptive music incorporates a system for generating significantly different performance versions of the piece

the generation system is driven by pre-specified input parameters

the actual performance-specific events are expected to have a significant degree of indeterminacy

traditional musical coherency and organization are a priority

As it turns out, the definition itself was rather boring, pedantic, and academic. But let’s take a look at the pieces, and see what we learn.

In the words of the definition: “a primary concern … is a system for generating significantly different performance versions of a piece”.

In the real world, no two performances of any piece of music are ever exactly the same. Consider that even when listening to a CD player under fairly controlled conditions, subtle variations in room temperature, air pressure, background noise, the listener’s head position, heart rate, blood sugar levels, prior experience with the piece (is this the first listen? Or do you have the thing memorized? Was it once “our song”?), and mental state (to name a few factors) all introduce variation in the way a piece is heard. In live performance, variability is much more pronounced. When listening to even a virtuoso performer, variations in the exact execution of a piece of music are to be expected.

The key determining factor in adaptive music is intent. If my piano student massacres a Beethoven sonata (perhaps even, in the process, creating a number of very interesting structural and dynamic variations), it is still not a piece of adaptive music. It was intended by Beethoven to sound a certain way. Composed linear music can be said to have a single representative form. Any given performance is an expression or interpretation of this single, static ideal.

Adaptive music, by contrast, is significantly different from performance to performance by design. The form of a piece of adaptive music as it is expressed in a given performance is essentially flexible. Individual expressions of a piece of adaptive music can be quite different from each other, but each is equally representative of the composition.

The fact that variations are generated “in response to a specified range of input parameters” contains two important ideas. The first is that the system is, in fact, driven by external interaction. The second is that the exact nature of these interactions is carefully designed.

Adaptive music relates specific events (or ranges of events) to variations in the musical performance. An adaptive music system enumerates these events and describes how they are to be handled.
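One way to read “a specified range of input parameters” in code: the composer and programmer agree up front on a closed list of events, each with a designed musical response. The event names and responses below are hypothetical; the point is that anything outside the enumerated range is rejected rather than improvised.

```python
from enum import Enum
from typing import Dict


class GameEvent(Enum):
    ENEMY_SPOTTED = "enemy_spotted"
    PLAYER_LOW_HEALTH = "player_low_health"
    BOSS_DEFEATED = "boss_defeated"


# Hypothetical response table: each enumerated event maps to a musical instruction.
RESPONSES: Dict[GameEvent, str] = {
    GameEvent.ENEMY_SPOTTED: "add percussion layer at next bar",
    GameEvent.PLAYER_LOW_HEALTH: "filter strings, slow harmonic rhythm",
    GameEvent.BOSS_DEFEATED: "resolve to tonic, play victory stinger",
}


def handle(raw_event: str) -> str:
    """Look up the designed response; undesigned input raises instead of guessing."""
    event = GameEvent(raw_event)  # raises ValueError for anything not enumerated
    return RESPONSES[event]
```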

“The exact timing and/or sequence and/or quantity and/or presence and/or values of input parameters are not predetermined.” This describes the “random” nature of adaptive music. At the time of construction, the exact details of which events will arrive when and how are left to be decided at the time of performance. The final form of the piece that is expressed at performance time is generated in response to indeterminate events.

Potentially, even tiny input variations can have a drastic impact on the musical output of an adaptive system. But remember that the system is designed to handle specific events. This means that given the exact same input parameters, the exact same output is generated. In this sense, adaptive music is ultimately deterministic.
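Both halves of that claim fit in a few lines. The sketch below assumes a hypothetical rule and a fixed 120 bpm in 4/4: an event landing in the first half of a bar gets an immediate stinger, while one landing in the second half waits and starts a new section at the next bar. No randomness is involved.

```python
from typing import List, Tuple

BAR_SECONDS = 2.0   # assumed: 120 bpm in 4/4
BEAT_SECONDS = 0.5


def respond(events: List[Tuple[float, str]]) -> List[str]:
    """Deterministic response plan: the same events in always give the same music out."""
    plan: List[str] = []
    for t, name in events:
        beat_in_bar = int((t % BAR_SECONDS) // BEAT_SECONDS)  # 0..3
        if beat_in_bar < 2:
            plan.append(f"{name}: stinger now")
        else:
            next_bar = int(t // BAR_SECONDS) + 2  # bars numbered from 1
            plan.append(f"{name}: new section at bar {next_bar}")
    return plan
```

An event at 0.99 s gets `"hit: stinger now"`, while the same event at 1.01 s gets `"hit: new section at bar 2"`: a twenty-millisecond input difference produces a different musical outcome, yet replaying either input reproduces its output exactly.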

“The desired output of the system is coherent and aesthetically satisfying within the musical tradition(s) selected by the composer.” In other words, ideally, each generated instance of a piece of adaptive music would sound as good as if it were linearly composed. A primary goal is for every performance of the system to work aesthetically as a piece of music.

Randomness as an aesthetic goal is not a defining characteristic of adaptive music. Randomness for randomness’ sake is not part of this tradition.

Note that the formal definition of adaptive music does not specifically mention video games. As it turns out, this type of composition does in fact crop up outside of game music. You may be surprised at the history, richness, and breadth of music that falls into this category. Here is a small sampling of a number of non-gaming adaptive music approaches.

Mozart’s Musikalisches Würfelspiel (1792)

Adaptive music more than 200 years old? Yup. In Mozart’s combinatorial “Musical Dice Game”, parts are generated a measure at a time by rolling dice to pick randomly from a table listing multiple potential versions. The number of potential variations of this piece of music “is so large that any waltz you generate with the dice and actually play is almost certainly a waltz never heard before. If you fail to preserve it, it will be a waltz that will probably never be heard again”. [Gardner01]
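The mechanics are simple enough to sketch. The version below assumes the commonly described layout of the minuet part: sixteen measures, each chosen by the sum of two dice (2 through 12) from eleven pre-composed options. The table entries are placeholder labels, not the actual measure numbers from the published table.

```python
import random
from typing import List

MEASURES = 16
DICE_SUMS = range(2, 13)  # two six-sided dice give sums 2..12: eleven options

# Placeholder table: TABLE[measure][dice_sum] names a pre-composed bar.
# These labels are invented; the real table lists specific bar numbers.
TABLE = [{s: f"m{m + 1}_opt{s}" for s in DICE_SUMS} for m in range(MEASURES)]


def roll_waltz(rng: random.Random) -> List[str]:
    """Build one piece, a measure at a time, by rolling two dice per measure."""
    piece = []
    for m in range(MEASURES):
        total = rng.randint(1, 6) + rng.randint(1, 6)
        piece.append(TABLE[m][total])
    return piece


def upper_bound_versions() -> int:
    """Distinct sequences, assuming every table entry is a different bar."""
    return len(DICE_SUMS) ** MEASURES
```

`upper_bound_versions()` evaluates 11^16, about 4.6 × 10^16. That is only an upper bound, since it assumes no bar appears twice in a column. Passing a seeded `random.Random` makes any one roll reproducible, which is a handy way to “preserve” a waltz.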


This piece consists of a single page showing nineteen discrete musical sections. The performer is instructed to play the sections in any sequence, as the mood takes her. But the end of each section includes specific performance notes regarding how to play whichever section is picked next. [Morgan91]
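The interesting wrinkle is that the order is free but the interpretation is chained: each section’s closing instructions govern the next one. A small hypothetical model (section labels and markings are invented, and the first section is assumed to start at a default marking):

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Section:
    label: str
    next_tempo: str    # instruction printed at the end, applied to the NEXT section
    next_dynamic: str


def perform(order: List[Section],
            start: Tuple[str, str] = ("moderato", "mf")) -> List[str]:
    """Render the performer's chosen order, carrying each section's closing
    instructions forward to whichever section is played next."""
    tempo, dynamic = start
    rendition = []
    for section in order:
        rendition.append(f"{section.label} @ {tempo}, {dynamic}")
        tempo, dynamic = section.next_tempo, section.next_dynamic
    return rendition
```

With `A = Section("A", "presto", "pp")` and `B = Section("B", "lento", "ff")`, playing A then B gives B at presto and pp, while B then A gives A at lento and ff: the same material sounds different depending on what preceded it.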

In Available Forms II, two orchestras rehearse a total of thirty-eight “composed orchestral events”. During performance, two conductors cue these segments “in any combination or sequence”. The conductors may also modify their “ensemble, tempo, and loudness”. [Brown65] Brown combined the flexibility of small group improvisation with the awesome expressive power of full orchestral forces.

In this dance club installation, participants were invited to experiment with a large variety of non-traditional interfaces – hooked into musical responses designed to fit in “immediately and identifiably” with the DJ’s current club mix. (Actually, an “Experience Jockey” (“EJ”) was responsible for maintaining the larger-scale structure and direction of this unique audio experience.) In one “zone”, dance club lighting effects with a twist triggered musical phrases when overhead light beams were broken; another featured an array of light sensors on the floor that allowed shadows to influence melodic elements. Trigger pads, proximity sensors, pedals, and other interfaces were used to influence musical activity in other areas. [Ulyate02]
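The installation’s actual implementation isn’t described here in enough detail to reproduce. As a hypothetical illustration of what fitting in “immediately and identifiably” might involve, the sketch below takes a sensor trigger, transposes a stored phrase into the mix’s current key, and schedules it on the first beat of the mix at or after the trigger.

```python
import math
from typing import List, Tuple


def fit_to_mix(phrase: List[int], phrase_key: int, mix_key: int,
               mix_bpm: float, trigger_time: float) -> Tuple[float, List[int]]:
    """Return (start_time, transposed MIDI notes) for a sensor-triggered phrase.

    phrase holds MIDI note numbers; phrase_key and mix_key are pitch classes
    (0 = C). The phrase is shifted by the smallest interval that moves its key
    onto the mix's key, and its start is snapped to the first beat of the mix
    at or after the trigger.
    """
    shift = (mix_key - phrase_key) % 12
    if shift > 6:
        shift -= 12  # prefer transposing down rather than far up
    beat = 60.0 / mix_bpm
    start = math.ceil(trigger_time / beat) * beat
    return start, [note + shift for note in phrase]
```

For a C major arpeggio `[60, 64, 67]` triggered at 1.2 s over a 120 bpm mix in D, this returns a start of 1.5 s and notes `[62, 66, 69]`.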

The really nice thing about many of the non-gaming examples listed in this section is that you can actually study the scores. Most have been published in one or more editions, so you can likely go to your local library and check out the details of their “implementations” for free. And there is a lot of interesting material out there.

Brown, for instance, experimented with a wide variety of indeterminate and “variable form” scoring techniques for many years. A number of other 20th century composers (such as Feldman or Boulez) have also experimented with aleatory music elements. [Morgan91] As a bonus, there also tend to be readily available analyses and discussions of these non-game works in secondary sources. (For instance, try Googling “Mozart ‘Musical Dice Game’”.)

By contrast, the “score” for “X-Wing”’s adaptive music system (for instance) is accessible only via LucasArts’ proprietary iMUSE editor. The actual details of the implementation are hidden from the general public; only a handful of people have ever peeked “under the hood”. This opacity is typical of just about any video game adaptive music score.

A look at two approaches to musical indeterminacy that aren’t adaptive should help to make our definition even clearer.

Although the score was originally generated through “chance operations”, [Morgan91] the generated score itself is considered to be the definitive form of the piece. There is only one representative performance version of this piece. Only if John Cage’s system for generating this music were actually considered to be the piece would it fit into the category of adaptive music.

From the 1960s onwards, Witold Lutoslawski used orchestral score notation techniques that involved indeterminacy; however, his aleatoric techniques were used to describe particular aural effects, which were intended to sound (essentially) the same from performance to performance. [Morgan91] This type of effect should not be considered adaptive music.

What, if any, jazz music performance traditions should be considered adaptive music systems? Should classical Northern Indian rag improvisation practices be considered adaptive music?

Is truly interactive music a subset of adaptive music?

Is John Cage’s 4’33” an adaptive music composition?

The video game industry is the driving force behind adaptive music technology development right now. However, as adaptive music technology and techniques mature, the craft may well find other important applications. Some for-instances follow.

The same kind of structural and expressive variation that is currently possible using computers and synthesized performers is theoretically also possible using a traditional orchestra in performance. The MIDI output of a software adaptive music system under the control of a conductor/composer/improviser figure could fairly easily be displayed as traditional parts on networked laptops for each member of the ensemble. The result would be spontaneous, cohesive, orchestral improvisation. Realization of the composer’s inspiration – as it strikes.

Club-goers could influence the flow of music by interacting with the DJ and an online adaptive music system using web-enabled cellular devices. At its simplest level, the play-list queue could be displayed on a large screen, with participants merely voting on what track gets played next. With a more involved implementation, any number of performance parameters could be up for grabs: real-time control over the bass line’s resonant filter, dynamic cross-fading control, muting and un-muting of various parts, assigning parts to new instruments, control over builds and breaks, and general ebb and flow within a piece of music. The mood of the music could quite literally follow the mood of the room (or at least of the majority).
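The simplest level described above, voting on the next track, is easy to sketch. This assumes votes arrive as (device id, track) pairs from some hypothetical web front end; each device’s latest vote counts once, and ties go to whichever track sits earlier in the queue so the outcome is deterministic.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple


def next_track(queue: List[str], votes: Iterable[Tuple[str, str]]) -> str:
    """Pick the queued track with the most votes (one vote per device, latest wins)."""
    latest: Dict[str, str] = {}
    for device_id, track in votes:
        if track in queue:
            latest[device_id] = track  # a later vote from the same device replaces it
    tally = Counter(latest.values())
    # max() returns the first maximal item, so ties resolve in queue order.
    return max(queue, key=lambda track: tally[track])
```

With no votes at all, the head of the queue plays, so the DJ’s own ordering remains the fallback.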

This article proposed a formal definition of adaptive music, and examined it in some detail. The scope of the definition was clarified via a number of musical examples that fell inside and outside the adaptive music category.

Currently, adaptive music approaches and implementations are characterized by their diversity. At first glance, the examples in this article tend to have more that sets them apart than groups them together. And none on their own could really be considered representative.

But the single essential question still remains: “how can a game composer score a scene intelligently and compellingly, when she doesn’t know what is going to happen, when?” The technologies and techniques that provide general, implementation-unspecific solutions to this challenge are the ones that will consistently find traction and evolve the craft as a whole.

And “what exactly are we talking about?” seems like a good place to start.

[3DSoundSurge01] “3DSoundSurge Press Release: Monolith’s 3D Engine to Feature the Interactive Power of Microsoft’s DirectMusic™”, (3DSoundSurge, 2001), online: 3D SoundSurge (accessed: 10 December 2002).

[Boyd06] Andrew Boyd and Robb Mills, “Implementing an Adaptive, Live Orchestral Soundtrack”, lecture at the Game Developers Conference, San Francisco, 2006.

[Brown65] Earle Brown, “Introductory Remarks” to Available Forms 2 for Large Orchestra Four Hands (98 Players), (New York: Associated Music Publishers, Inc., 1965), 1.

[Doud03] Chuck Doud, “Composing, Producing and Implementing an Interactive Music Soundtrack for a Video Game”, lecture at the Game Developers Conference, San Jose, 2003.

[Gardner01] Martin Gardner, The Colossal Book of Mathematics: Classic Puzzles, Paradoxes, and Problems: Number Theory, Algebra, Geometry, Probability, Topology, Game Theory, Infinity, and Other Topics of Recreational Mathematics, (New York: W.W. Norton and Company, Inc., 2001), 632.

[Morgan91] Robert P. Morgan, Twentieth-Century Music: A History of Musical Style in Modern Europe and America, (New York: W. W. Norton & Company, Inc., 1991).

[Ulyate02] R. Ulyate and D. Bianciardi, “The Interactive Dance Club: Avoiding Chaos in a Multi-Participant Environment,” Computer Music Journal, Volume 26, Number 3 (Boston: Massachusetts Institute of Technology, 2002).
