Featured Blog | This community-written post highlights the best of what the game industry has to offer. Read more like it on the Game Developer Blogs.

Deconstructing Night Trap

I am in the process of porting the Sega CD classic, Night Trap, to the web browser by ripping footage from the disc and rebuilding the game from scratch in JavaScript. This is my development diary of the project.

A few days ago I built a quick prototype for getting Night Trap running in the browser. It’s rough, but hey, it works. Right now I am streaming the assets from Azure Media Services and playing them through the open source video.js player as .mp4 files. I have also converted all of the video to adaptive streaming and am now in the process of moving over to the Azure Media Player.

The benefit of doing it this way is that it allows the video player to adapt to the platform you are running on (iOS, Android, web, etc.), and it also allows the quality of the footage to adapt over time. The player checks CPU utilization and bandwidth over time and can change the video stream on the fly. I talk about it in greater detail here.
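To make the idea of adapting quality concrete, here is a minimal sketch of bandwidth-based stream selection. It is not how the Azure Media Player actually decides; the rendition list, the `pickRendition` name, and the `headroom` factor are all assumptions for illustration, and CPU utilization is ignored entirely.

```javascript
// Hypothetical renditions of one camera feed; real bitrates are not given in the post.
const RENDITIONS = [
  { label: '240p', kbps: 400 },
  { label: '360p', kbps: 800 },
  { label: '720p', kbps: 2500 },
];

// Pick the highest-bitrate rendition that fits inside a share of the measured bandwidth.
// Falls back to the lowest rendition when nothing fits.
function pickRendition(renditions, measuredKbps, headroom = 0.8) {
  const budget = measuredKbps * headroom;
  const sorted = [...renditions].sort((a, b) => a.kbps - b.kbps);
  let choice = sorted[0];
  for (const rendition of sorted) {
    if (rendition.kbps <= budget) {
      choice = rendition;
    }
  }
  return choice;
}

console.log(pickRendition(RENDITIONS, 1200).label);
```

A player would call something like this each time it gets a fresh bandwidth measurement and switch streams when the answer changes.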

As a kid, I loved Night Trap. Sure, it’s cheesy, but at the time I thought it was remarkable. I went from playing 2D side-scrolling platformers to what felt like a film that I was engrossed in. I went through it more times than I could count and had several perfect playthroughs, where you catch every auger. The trick was to keep a sheet with all of the time stamps and the room for each capture. I thought this would be a unique way to relive my childhood.

Initial prototype

Right now the prototype is just using ~15 minutes of footage for each camera, and as you switch between the cameras, the HTML5 video player switches the URL (video feed) it is pointing to. There isn’t really any gameplay at this point, and you are really just watching the characters as they bounce between the rooms. This footage, captured from the Mac version, came from Phil Cobley in the Night Trap Facebook group.
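The camera switch described above could be sketched roughly like this. The feed URLs and the `switchCamera` name are hypothetical, and carrying the playback position over to the new feed is my assumption about how the cameras should stay in sync; the post only says the player's URL changes.

```javascript
// Hypothetical map of rooms to feed URLs; the real URLs are not given in the post.
const CAMERA_FEEDS = {
  hall1: 'https://example.com/feeds/hall-1.mp4',
  livingRoom: 'https://example.com/feeds/living-room.mp4',
  bedroom: 'https://example.com/feeds/bedroom.mp4',
};

// Point an HTML5 <video> element (or anything shaped like one) at another room's feed,
// resuming at the same position so every camera shares one game clock (an assumption).
function switchCamera(video, room) {
  const url = CAMERA_FEEDS[room];
  if (!url) {
    throw new Error(`Unknown room: ${room}`);
  }
  const resumeAt = video.currentTime;
  video.src = url;
  // Seeking only works once the new source's metadata has loaded.
  video.addEventListener('loadedmetadata', () => {
    video.currentTime = resumeAt;
    video.play();
  }, { once: true });
  return resumeAt;
}
```

In the browser this would be called as `switchCamera(document.querySelector('video'), 'bedroom')` from each camera button's click handler.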

One issue that I ran into with this is that the browser cannot download 8 video feeds concurrently (at the same time). I was also working on a way to cache the video locally, so that I don’t need to serve the content from Azure each time a user loads the page. Unfortunately, I am not able to do this with adaptive bitrate streaming. Back to the drawing board.

How is this an issue for me today, in 2015, when the original developers wrote this game in 1992 using 68k assembly? Even worse, the console only had 6 Mbit of RAM, so how were they getting these large video clips cached, and then switching between them so quickly? Big Evil Corporation has a fantastic blog about writing assembly for the Genesis, if you are into that kind of thing.

Retrieving footage from the disc

The trick is this: They weren’t storing them as large chunks of video files. Most of them were very small, in fact. I discovered this when I took apart the .iso.

I own 2 copies of this game on Sega CD (don’t ask), as well as one copy on the 3DO, where the footage is far cleaner. Unfortunately, I don’t have a way of retrieving the data from the 3DO disc, and I thought the Sega CD version would look pretty poor because of the Sega CD’s limited color palette: only 512 colors, of which it could draw just 64 on screen at once. The console also had a display resolution of 320 x 224, but the video size was limited even further, to a meager 256 x 224.

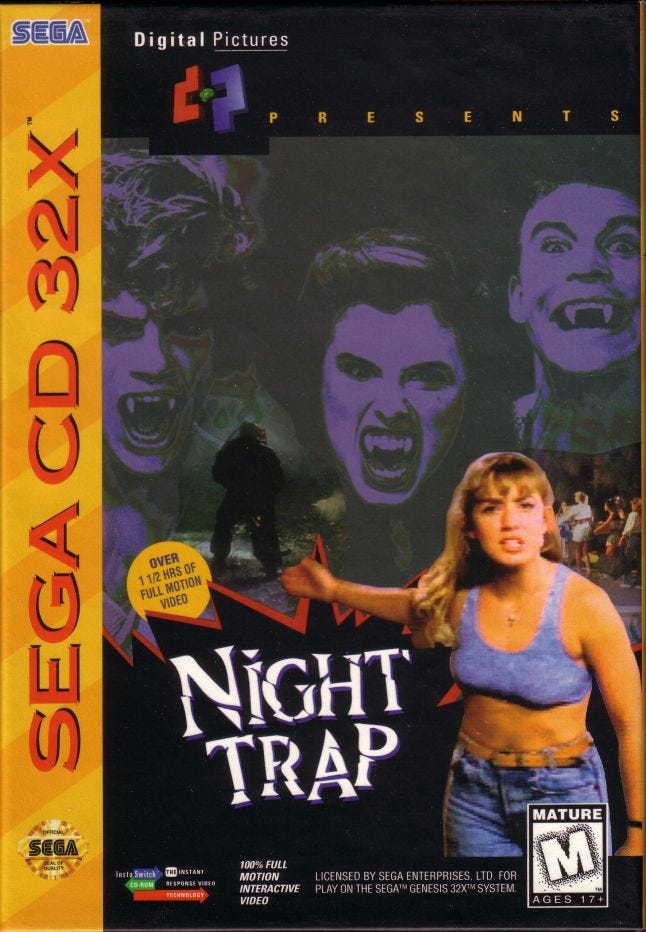

For those reasons, I felt it was more useful to use the 32X version, which had an improved color palette of 32,768 colors. I acquired a 32X version, mounted the .iso and ran a tool called SCAT, or the SGA Conversion and Analysis Tool. Sega (or maybe it was Digital Pictures?) had a proprietary video format called SGA, so a tool was necessary to dive into each clip and view the frames. Paul Jensen put this together last year, and wrote it in Visual Basic. I compiled the project in Visual Studio, and was good to go!

The tool allows me to export screen shots as .png, audio clips as .wav, and export entire clips as .avi.

When I opened the disc, all I saw was a series of files named after numbers. Not very helpful. Or so I thought…

After diving into 20 scenes or so, I realized that there was a method to their madness. I kept notes of each file as I was digging through them. Here is a link to my notes. I came to understand that the final two numbers tell me which room the clip takes place in.

Key

| Room | Tens digit |
| --- | --- |
| Opening | 0- |
| General | 1- |
| Hall-1 | 2- |
| Nothing | 3- |
| Living-Room | 4- |
| Driveway | 5- |
| Entryway | 6- |
| Hall | 7- |
| Bedroom | 8- |
| Bathroom | 9- |
| Game Over | 6-, 1- |

Ah-hah! I’m making progress! The tens digit represents the room. The ones place represents an event of some sort. For example, some end in B, which means that it is a branching path, and I could capture an auger in this clip.

This led me to the next part of my investigation. I now had a way of understanding which room each clip took place in, but I needed to understand how to piece them together. Fortunately for me, the first few digits represent the general order that they will appear in the game. I emphasize the word general. Just because the first few digits of the file are higher doesn’t mean it is guaranteed to be later in the game than the one before it, just that it belongs in that general area.
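Here is a rough sketch of how that naming scheme could be decoded in JavaScript. The post doesn't show an actual file name, so the exact layout is an assumption: leading digits for the rough order, then the room digit, then a final event character that may be a letter such as B. The `parseClipName` function and the sample name are hypothetical.

```javascript
// Room lookup taken from the key above (tens digit -> room).
const ROOMS = {
  0: 'Opening', 1: 'General', 2: 'Hall-1', 3: 'Nothing', 4: 'Living-Room',
  5: 'Driveway', 6: 'Entryway', 7: 'Hall', 8: 'Bedroom', 9: 'Bathroom',
};

// Split a clip name into rough order, room, and event code.
// Assumed layout: <order digits><room digit><event character>, optional extension.
function parseClipName(fileName) {
  const base = fileName.replace(/\.[^.]+$/, ''); // drop an extension if present
  const match = /^(\d+)(\d)([0-9A-Za-z])$/.exec(base);
  if (!match) {
    throw new Error(`Unrecognized clip name: ${fileName}`);
  }
  const [, order, roomDigit, event] = match;
  return {
    order: Number(order),            // general position in the game, not a strict sequence
    room: ROOMS[roomDigit],
    event: event.toUpperCase(),
    branching: event.toUpperCase() === 'B', // B marks a clip where an auger can be captured
  };
}

console.log(parseClipName('001428B'));
```

Sorting the parsed clips by `order` would only give a starting point, since the leading digits are a general ordering rather than a guarantee.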

Reading the frame data

You may have noticed that there are a bunch of numbers at the bottom of the footage, too. A value such as $004f8800 refers to the location in memory where this frame would appear. It continues to increase over time. To the right of that, we have something like T00042B1C. I immediately realized that this was a hex value, after seeing the numbers increase each frame as well. The T is simply short for “Time”.

If you throw 00042B1C into a hex-to-decimal converter, you’ll find that it returns 273180. That’s not very useful. But what if we break it up into sets of two?

00 04 2B 1C

Put those in the converter individually, and you’ll discover:

00:04:43:28

00 hours

04 minutes

43 seconds

28 milliseconds

Now I know the time stamp for each clip! So far we have the (rough) order the clips appear in, the rooms that each clip corresponds to, and now the time stamp where each of them belongs. Progress!
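The byte-by-byte conversion above is easy to automate. This is a small sketch, with `parseTimecode` and `formatTimecode` as names I made up; it follows my reading of the last byte as milliseconds, which is an interpretation rather than something the disc documents.

```javascript
// Decode a stamp such as "T00042B1C": four hex bytes read as hours, minutes, seconds,
// and a final sub-second field (read as milliseconds in the post).
function parseTimecode(stamp) {
  const match = /^T([0-9A-Fa-f]{8})$/.exec(stamp);
  if (!match) {
    throw new Error(`Unrecognized time stamp: ${stamp}`);
  }
  const [hours, minutes, seconds, milliseconds] = match[1]
    .match(/.{2}/g)                       // split into two-character hex pairs
    .map((pair) => parseInt(pair, 16));   // convert each pair to decimal
  return { hours, minutes, seconds, milliseconds };
}

// Render the decoded fields as zero-padded, colon-separated text.
function formatTimecode(time) {
  return [time.hours, time.minutes, time.seconds, time.milliseconds]
    .map((value) => String(value).padStart(2, '0'))
    .join(':');
}

console.log(formatTimecode(parseTimecode('T00042B1C'))); // 00:04:43:28
```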

Branching paths

If you are familiar with Night Trap, then you’ll understand that it’s similar to a Choose Your Own Adventure book, in that you need to capture these augers, or vampires, as they roam throughout a house. As they walk over a trap in a room, you have an opportunity to trigger it, such as a bookcase that rotates 360 degrees and knocks them into a wall. If your trap is successful, then one clip will play, showing the capture; otherwise the film will continue and the auger escapes.

These capture clips are very brief, generally between 5 and 10 seconds, and need to be spliced seamlessly into the scene. That’s where the hard part of this production is introduced. I need to write code that cleanly allows me to do that. Perhaps that is best left for the next post.
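As a starting point, the branch decision itself could look something like the sketch below. Everything in it is hypothetical: the scene record, the clip names, and the idea of a trap window measured in seconds into the clip are assumptions of mine, and it leaves the seamless splicing untouched.

```javascript
// Hypothetical record for one branching scene; none of these values come from the disc.
const exampleScene = {
  clip: '001428B',
  trapWindow: { start: 3.0, end: 5.5 }, // seconds into the clip when the trap can fire
  captureClip: 'bedroom-capture',
  escapeClip: 'bedroom-escape',
};

// Decide which clip follows a branching scene.
// trapPressedAt is the clip time of the player's trap press, or null if they never pressed.
function resolveBranch(scene, trapPressedAt) {
  const captured =
    trapPressedAt !== null &&
    trapPressedAt >= scene.trapWindow.start &&
    trapPressedAt <= scene.trapWindow.end;
  return captured ? scene.captureClip : scene.escapeClip;
}

console.log(resolveBranch(exampleScene, 4.2));
```

The result would then feed whatever code swaps the video source, so the capture footage plays only when the press lands inside the window.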

In the next episode…

That’s all I have for now, but I’ll continue to update this as I move along and begin to implement these short clips into the game. The code is open source and available on my GitHub. If you can think of a simple way of writing this in JavaScript, I’m all ears!
