Recording 65 music cues in 2 days with Conservatory musicians in a brand new studio for Gathering Sky...the preparation and the results.

[This is the second of several journal entries that will cover the audio development for the game Gathering Sky. A 'present-mortem' if you will. The first journal can be found here. Also, we changed the game title! It's now known as Gathering Sky!]

Soundtrack Recording Preparation and the Recording Sessions

In the last Gathering Sky Audio Journal I simply outlined some very general points about our initial decisions on the audio approach to the game without getting into many specifics, so I'll start to get into some of the dirty details here.

Before we discuss the organization of a recording session, I'm sure many might first ask, "Why use live musicians at all? That sounds like a big pain when you can just create a soundtrack with sophisticated sound libraries."

Yes, some sample libraries are quite sophisticated and can yield some very nice results, especially if one is trying to recreate a large orchestra feel, maybe in a 'hybrid' type of score (a mix of synths/electronics/rock instruments and orchestra). Sometimes it works out nicely, especially if the composer has a knack for manipulating the sample libraries with MIDI CC (control change) information to emulate real instruments (see Jeremy Soule and Skyrim). But the range of emotion that one can get from sample libraries can certainly be limited. After all, Skyrim did have a real choir recorded on top of the sample libraries.

Sample libraries also work well in full orchestral pieces because they are modeled on large sections (violin section, viola section, brass etc.), where it's a little easier to hide the 'samples' when you layer those sections together. But we were going for a smaller sound, with a small ensemble, and while solo instrument sample libraries do exist, massaging the MIDI to coax a meaningful performance out of them is labor intensive (at best) and would still never approach the emotional response that a live musician brings during recording. Here's an example of the same cue played both ways: the first half of this clip is the live musicians, and the second half is the MIDI mockup with sample libraries. I tried to volume match these so that there wouldn't be a drastic change in volume between them.

And a quick demonstration of this quiet cue from a point in the game that is very emotional and reflective...I only included the live version here, but try to imagine a sample library mockup that blends and emotes like these musicians do here...

Organization: The Foundation for a Successful Session

I don't think that I can emphasize this enough. Whether you are recording a 70-piece orchestra for $10,000/minute or a soloist at your home studio, organization is the foundation for the success of the session.

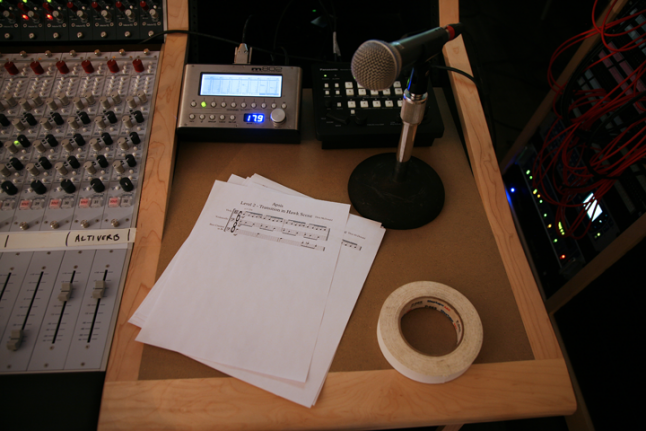

Photo: Heike Liss. The score, referenced by the engineers throughout the session. Tape, it seems, is also very important to engineers.

There are a lot of you reading this who have plenty of experience with live recording sessions, so this section is really aimed at those composers who are used to working completely inside the box and are maybe thinking about adding some live players to the mix for your next project.

The written musical score is the roadmap to the session. This is what the musicians, the engineer, the composer and the score supervisor are all looking at, and the Pro Tools (or whatever software you're using) sessions flow from that document. The score has to be clean and accurate!

I don't consider myself a music copyist or any sort of expert in music prep. I've tried to improve my skills in that area over the years, and I'm competent enough for small sessions. But I was writing revisions and new cues every day leading up to the recording dates, so I had to enlist some help on our 'no budget' project. Richard Savery has been working for the Game Audio Network Guild for the last year or so, and I'd been working with him to show him the ropes as I was leaving GANG. He's a graduate student at UCI in music and wanted to learn more about recording game scores. On top of that, he proved to be incredibly reliable, responsible and easy to work with on all of the GANG stuff. When I contacted Richard about this he had just finished his last school paper and had a week with nothing to do. I could not have been luckier. So Richard jumped in, taking my sloppy Logic sessions and importing them into Sibelius to create the charts.

Ultimately we had a great, easy-to-read score for the musicians and engineers, and this really helped us stay organized through the recording of 65 cues or so. Rather than printing out my score and making a binder for myself for the sessions, I opted to use an iPad app called ForScore, which proved to be another great tool. I just dumped my score PDFs into the app in iTunes, including every single part for each instrument, and I organized them in the app according to instruments. That way I could jump to someone's part very quickly while on the stage if there was a question about a part. In some cases a musician hadn't printed their part, so I was able to let them read off of the iPad. ForScore also includes a metronome, a pitch pipe, a mini piano keyboard and lots of annotation features that make it a really perfect session tool (thanks to those on Twitter who recommended that app!).

I did create one more document in Google Docs as a cue roadmap. Here's a link to that document to see what I did, but largely I wanted the engineers to be able to easily see an overview of each cue, which instruments had an overdub on which cue, where we were doubling up some parts, etc. The singer was doing some 2 and 3 part harmonies in some cues as well, so this spreadsheet allowed a quick overview of the overdubs for each instrument in each cue.

Recording for Flexibility

Part of organizing the sessions was also recording the parts in layers (or stems) that would allow for the greatest flexibility when implementing the music with FMOD. If we had recorded all of the parts of the ensemble together at once, then we would be stuck with only that version of the music. Separating the recorded parts gave us options to edit the music as needed for implementation.

As I mentioned in Part 1 of this journal, I had discovered that when the team had initially built the levels, they had been built so that a player's progress would sync with the royalty-free music that they were using in the game. As you can imagine, this didn't always work out during gameplay. If a player decided to hang out in one area for a while, or to take a minute to go backwards to investigate something instead of forging through the level, then there was a good chance that the music might end before the player finished the level. However, the length of the existing music pieces did give us a rough map for figuring out how much music each level needed, and we started with that as a guide.

Generally, we'd map out a level into small sections and estimate how long each of those sections might take the player to travel through. If there was a section where it seemed like we'd need the music to loop (where it was possible for the player to 'hang out'), then we'd note it that way with an estimate of the length of the loop. In other cases, the small sections of the game would require a linear piece of music to transition from one section of the map to another. So we came up with a few classes of music cues: 1) ambient 2) linear 3) loop 4) stinger.
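As a rough illustration of that kind of level map, here is a minimal Python sketch. The four cue classes come from the list above; everything else (the `Section` type, the section names and the timings) is invented for the example and is not taken from the actual game data.

```python
from dataclasses import dataclass
from enum import Enum


class CueKind(Enum):
    AMBIENT = "ambient"
    LINEAR = "linear"
    LOOP = "loop"
    STINGER = "stinger"


@dataclass(frozen=True)
class Section:
    name: str           # hypothetical section label
    kind: CueKind       # which class of cue the section needs
    est_seconds: float  # rough guess at travel time or loop length


def music_needed(sections):
    """Total estimated seconds of music to compose for one level."""
    return sum(s.est_seconds for s in sections)


def loop_sections(sections):
    """Names of sections where the player can linger, so the cue must loop."""
    return [s.name for s in sections if s.kind is CueKind.LOOP]


# Invented example level; the names and timings are placeholders.
level = [
    Section("opening", CueKind.AMBIENT, 40),
    Section("canyon", CueKind.LINEAR, 75),
    Section("lake", CueKind.LOOP, 120),
    Section("exit", CueKind.STINGER, 8),
]
```

Calling `music_needed(level)` gives a ballpark for how much music the level needs, and `loop_sections(level)` lists the places where a loop has to be planned.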

If we had decided that a loop might need to be about 2 minutes, I'd usually compose something that would be about 1:45 or so, and then create a loop for the last part of the cue. This way, if a player was hanging out in a part of the map, they wouldn't hear the entire cue loop again, but just the last 15-20 seconds. However, I arranged it so that if this short section had a melody part, it would be recorded separately, and in some cases there would be alternative melodies recorded as well. So the 'base section' of the short loop could play one layer (usually a bass clarinet and cello part) and would be able to loop with other layers like 'A melody' and then 'B melody' and maybe 'no melody' or 'ambient part' layered over the 'base part'. This way, if a player were stuck in the section for a long time (usually not likely, but just in case) they wouldn't hear an obvious melodic loop. We had the flexibility to stretch it out a little bit.

This next audio clip demonstrates this a bit. You'll hear the cue begin with the main groove/loop, and then the flute solo plays over that, then transitions to a sparse synth part that plays over the loop (the musicians actually played the groove for about 90 seconds, to avoid repeating the same performance of a 4 bar phrase), then the instruments fade to leave a synth part that keeps the loop intact, and eventually that fades to the end of the cue (which will fade into sound design in the game).
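To make the layering idea concrete, here is a small Python sketch of one way the top layer could rotate on each pass through the short loop. It is only an illustration: the stem names and the simple round-robin order are assumptions, and the real behavior would live in the FMOD project rather than in a script like this.

```python
from itertools import cycle

BASE_LAYER = "base"  # e.g. the bass clarinet and cello part
# Hypothetical stem names for the layers recorded separately.
TOP_LAYERS = ["melody_a", "melody_b", "no_melody", "ambient"]


def loop_passes(top_layers=TOP_LAYERS):
    """Yield (base, top) stem pairs, one per pass through the short loop.

    The base layer plays on every pass; the top layer rotates, so with
    more than one top layer the same one never plays twice in a row.
    """
    for top in cycle(top_layers):
        yield (BASE_LAYER, top)


def first_passes(count):
    """Collect the first `count` passes for inspection."""
    passes = loop_passes()
    return [next(passes) for _ in range(count)]
```

With four top layers, a player would have to sit through four passes of the short loop before hearing the same combination again.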

There's also a fail-safe we're considering that would simply let the music fade into ambient drones or only the sound design if no progress were made for 'X' amount of time (X being whatever we deemed too long to hang around in that area). Once the player started to progress again and came upon an invisible 'stinger' area in the map, they'd simply retrigger the music system from that 'stinger' transition point onwards. I'm curious if anyone has considered other options for these sorts of issues in their own projects...these were the first things that came to mind for me, but I'd enjoy hearing what others have done.
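Here is a minimal Python sketch of how that fail-safe could be modeled, assuming a per-frame update with a time delta. The class name, the two states and the 30 second threshold in the usage note are all hypothetical; this is a way of thinking through the logic, not the system we shipped.

```python
from enum import Enum


class MusicState(Enum):
    PLAYING = "playing"
    AMBIENT = "ambient"  # music faded out to drones / sound design


class IdleFailSafe:
    def __init__(self, max_idle_seconds):
        self.max_idle_seconds = max_idle_seconds  # the 'X' amount of time
        self.idle_seconds = 0.0
        self.state = MusicState.PLAYING

    def update(self, dt, made_progress):
        """Advance by dt seconds; made_progress is True if the player moved forward."""
        if made_progress:
            self.idle_seconds = 0.0
        else:
            self.idle_seconds += dt
        # Fade to ambient once the player has lingered too long.
        if (self.state is MusicState.PLAYING
                and self.idle_seconds >= self.max_idle_seconds):
            self.state = MusicState.AMBIENT
        return self.state

    def hit_stinger(self):
        """Player crossed an invisible stinger area: restart the music from there."""
        self.idle_seconds = 0.0
        self.state = MusicState.PLAYING
        return self.state
```

For example, with `IdleFailSafe(max_idle_seconds=30)`, thirty seconds of updates without progress switch the state to `AMBIENT`. Moving again only resets the timer; the music comes back when `hit_stinger()` is called.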

Composing with Implementation on the Brain

I know that there are some studios that take the philosophy that they want to 'let the composer compose' and not worry about the implementation. I certainly understand how they came to that decision, and typically it's large teams working on big projects that take that approach. Being a one-person audio team, I definitely had to think about the implementation, and honestly this affected my entire approach to the composition for the better.

As I was composing the first draft of the music my process became one where I was finishing a cue and then bouncing the stems and dropping them in my FMOD session to test them out. I'd go back and forth between Logic and FMOD several times before I'd get to a place where I thought we could try something in game.

We did get things set up so that once our parameters and sound events were in code I could simply build the sound banks and drop them into our build to check out the cues in game. I think that this is probably the first time I've been able to have this luxury on a project. Anyhow, that helped me figure these cues out a bit quicker than normal, which is what we needed. The constant loop of 'compose, bounce, implement, test' helped me iterate on the music much quicker than I could have by simply composing the music and later hearing it in game after someone else put it in the game. And it allowed me to focus in on problems and experiment quickly.

One of the first issues I'd noticed was the problem of 'over composing'. When we composers are staring at our DAWs, looking at those MIDI notes, thinking about MORE MIDI notes, we tend to get some crazy ideas in our heads. Not all of them good ideas.

Whatever crazy things start to bounce around in our heads, they often result in overcomplicated musical ideas, at least for me. During this process I found that my biggest task was to just simplify the ideas and pare down to get to the core emotion of each cue. There was one cue that had a note from the team that it should slightly 'foreshadow' a heavier scene to come, and the first thing I started to write ended up as a cross between Mozart's Requiem and Neurosis! It was pretty heavy. I got so carried away with my 'genius cue' that by the time I stopped to listen to it again (with a little perspective) I couldn't believe how far off the mark I had been with it. The creative process is a mysterious thing indeed.

Another issue I was thinking about during the composition phase was looping. I knew that we'd need some loops, so I would be fairly conscious of the types of musical figures that would loop well without needing reverb tails, and that sort of thing. In the score, I'd arranged some of the cues to be recorded with several repeats, so that we had several performances of the musicians playing the loops. That would give me a lot more flexibility than just having a recording of the parts being played one time, and it also gave me a natural feeling transition at the loop points to improve the edits. After all, I'd been bouncing out the stems with the sample library sessions and dropping them into FMOD for a while now, and I was getting a strong feel for how each cue should be performed in order to create a flow within each level.

We had several short 'transition' cues to record, and when we'd set those up in the score we'd also include the first bar or two of the next section that the music would transition to so that we also captured the natural transition of the musicians changing to the next part. Again, with the idea that it would make the edits much easier.

As you'd imagine, we did have to use a click track throughout the recording of most of these cues, especially the ones that were going to need some gluing together in implementation. This was offset by having a handful of cues with accelerandos, decelerandos or linear sections that might begin with a click, where we could turn off the click somewhere into the recording of the cue to get the natural feel of a breathing tempo.

The Sessions

Photo: Heike Liss. The core group, recording in the San Francisco Conservatory of Music's Concert Hall.

One other thing that I did need to be aware of during composition was the musicians. I often prefer to record with musicians I know, as it helps to keep them in mind as you compose, but in this case we were working with conservatory graduate students who I didn't know (actually I knew the violinist from a previous performance this year). Because of this, I tended to keep the parts fairly straightforward and not terribly difficult. I don't tend to write really dense music anyway, but it was certainly something I'd think about as I was composing/revising: "Does this entrance have to be on the upbeat of the 2nd beat, or could it be on the downbeat?" "Will I be overtaxing the bass clarinetist with all of these whole notes?" Issues like that were definitely on my mind, and it seemed smarter to play it safe when making these choices. I did not want us to find ourselves stuck in the session, with the clock running, watching a musician struggle with a part. That's like having one of those 'in school wearing only my underwear' nightmares that I had in 6th grade and I really wanted to avoid that. (I also got the parts out to the musicians about 3 weeks in advance to help prevent this scenario.)

So how did the sessions go? The experience was overwhelmingly positive. We got through about 65 cues in 2 days, which included a new cue I wrote the night before the session. We did go over time by 3 hours on the first day, but one thing that I may not have mentioned was that this was THE FIRST TIME that the SF Conservatory had used this new equipment in a live session! The individual headphone mix controllers were coming out of their boxes for the first time during our session. Nothing else had been recorded through their brand new Neve console! The acoustic panels still weren't up in the control room (we put up a bunch of temp panels that were from an older room...we weren't mixing!)

So we had a few issues to work out with the process and the gear, and I can only say that a true professional engineer is worth their weight in gold. Both of our engineers were on top of every issue that came up and found a solution quickly, helping us avoid any down time on the stage. That's a very valuable skill, and it's important to keep the momentum flowing. Both of our engineers were also musicians (viola and violin players), which gave us a couple of other intonation experts in the booth who were able to offer advice to the student musicians if they needed a bowing or articulation tip. This was extremely helpful, as I can't really give that sort of advice, being primarily a guitar player.

Our fabulous hosts at SFCM: Zach Miley (engineer, left), MaryClare Bryztwa (center, Chair of Technology & Applied Composition Program at SFCM, the great facilitator, & one of our flutists), and Jason O'Connell (engineer, right).

There were some real shining moments throughout the sessions. It's always such a magical thing to hear your compositions come to life when a group of talented musicians plays your music. I'll never, ever get tired of that. But when you hear someone really dig in and begin to interpret the music a bit, and coax a little bit more out of the notes, it's just a moving experience. I'm happy to say that we had several of these moments during the sessions. Did we run into some problems? Yes, there were a few cues that relied on 'feel' a bit more than I'd anticipated. Not being a conservatory trained musician, I probably rely on 'musical feel' a little more than someone who has that sort of training. So some music parts that I had assumed would be pretty simple, ended up taking a little longer to nail down than I'd imagined. Conversely, some sections of the score that I had assumed would be challenging, like some of the fast tempo 16th notey stuff, (especially the crazy chromatic stuff), weren't challenging at all for the players.

The Next Challenge

I'm currently in the middle of mixing the cues and implementing them into FMOD. In the next journal I'll include some screenshots of the FMOD sessions, talk about how I organized them and show some of the 'tricks' I'm trying to pull off. I have all of the mockup files living in an FMOD session already, so I'll replace the old files with the new performances and then get to tweaking and dialing it all in.
